Hello, I’m Dhiraj Chouhan



I live for flow: that sweet spot where creativity meets clarity.


@imdhirajchouhan



The research process documented in this case study - the user interviews, card sorting, contextual observation, affinity mapping, usability testing and A/B testing - was conducted as described. Dinero was a real, 2-year product built and shipped at Masters' Union. Some specific data values, feedback quotes and metrics shown have been modified or made representative to protect participant privacy and institutional data confidentiality. The insights, findings and design decisions accurately reflect what was discovered during the research.


Dinero

Internal Platform

for Masters' Union

UX Research Case Study

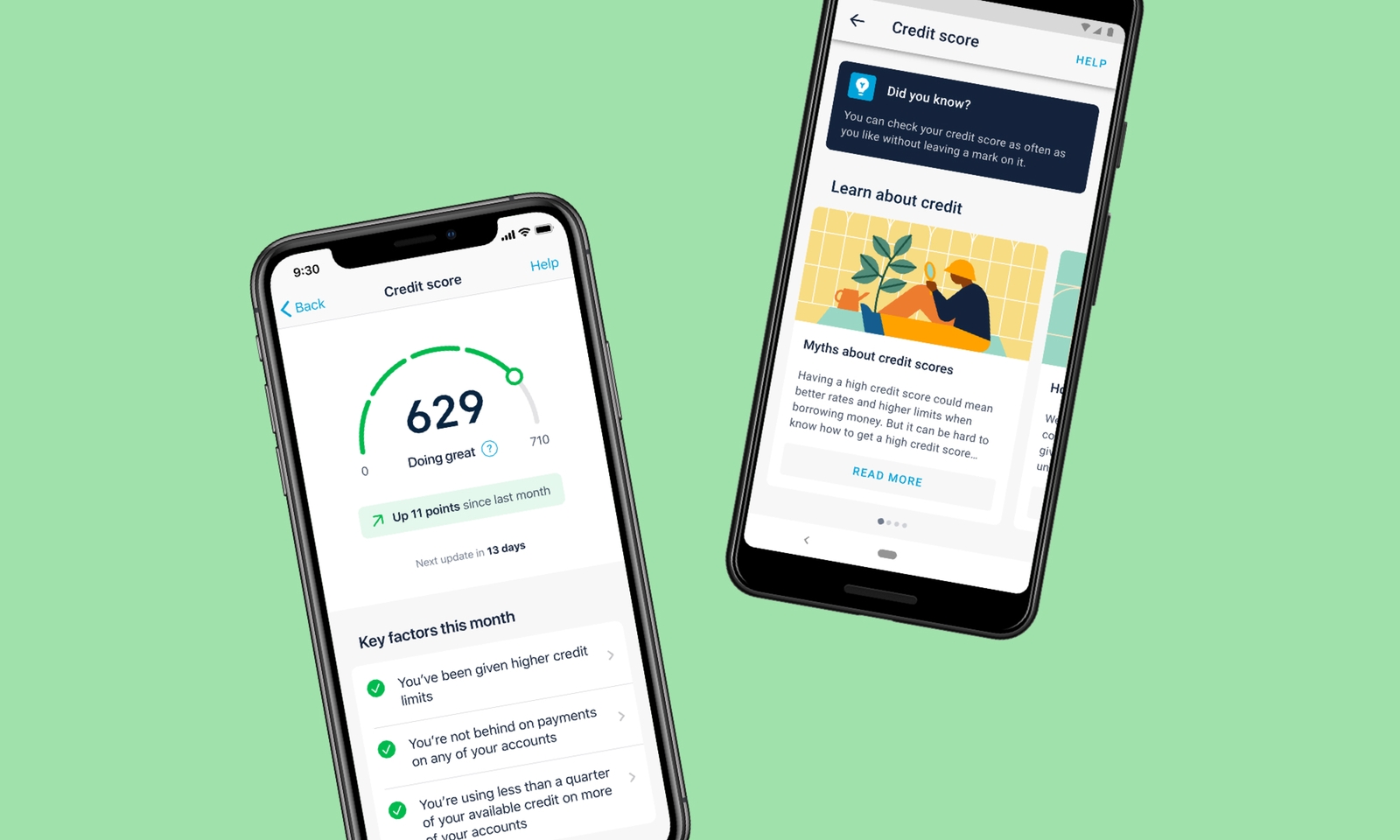

From an 8-step manual admissions journey to a unified platform - serving 1,000+ students, eliminating 3–5 hours of daily admin overhead and converting an external payment link into 93% in-app completion.

UX Research

Product Design

Double Diamond

EdTech

1,000+

Students Onboarded

87%

Usability Task Success

93%

Payment Completion (A/B)

<1 hr

Admin Daily Overhead

/ 1.1 Project Background

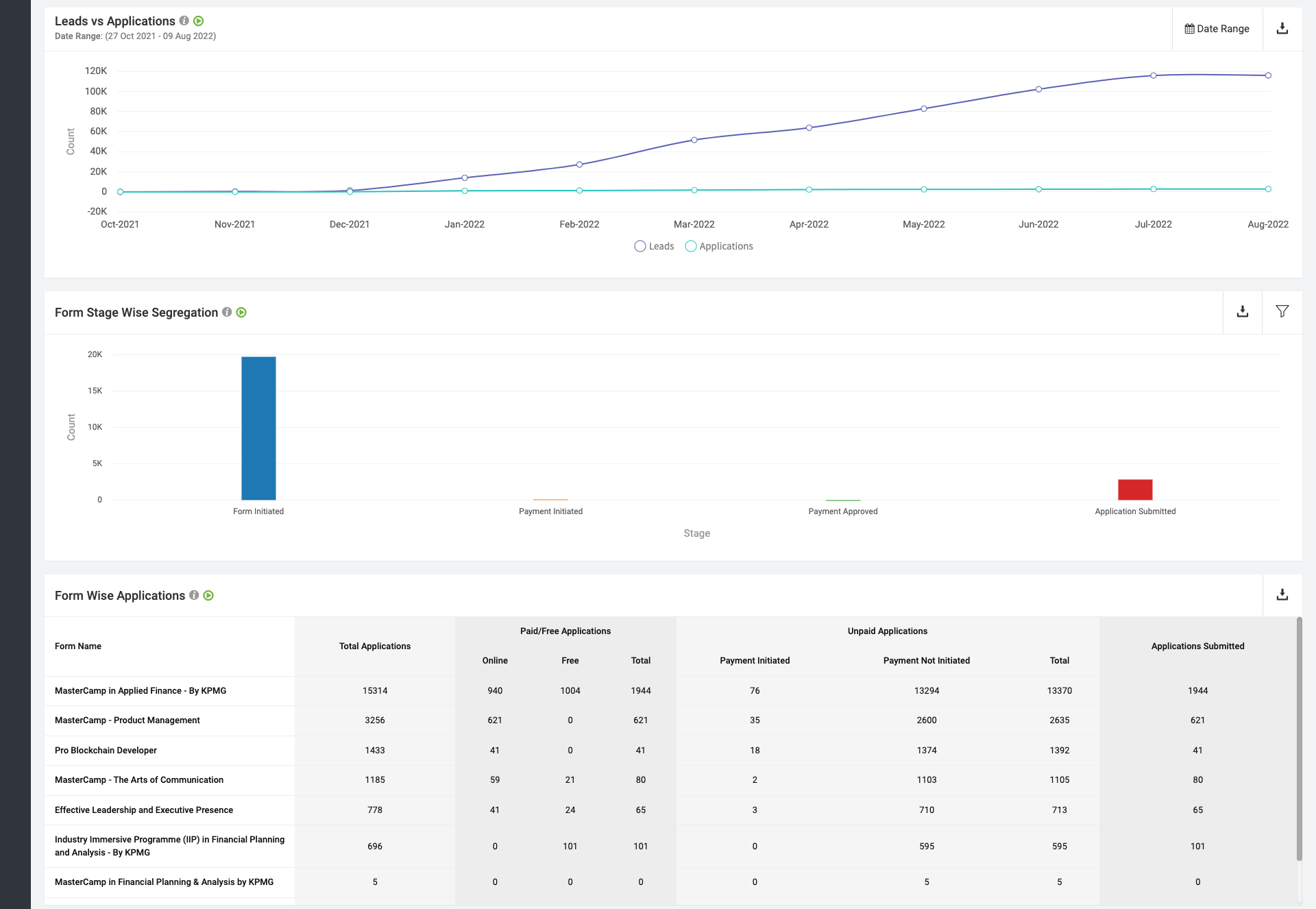

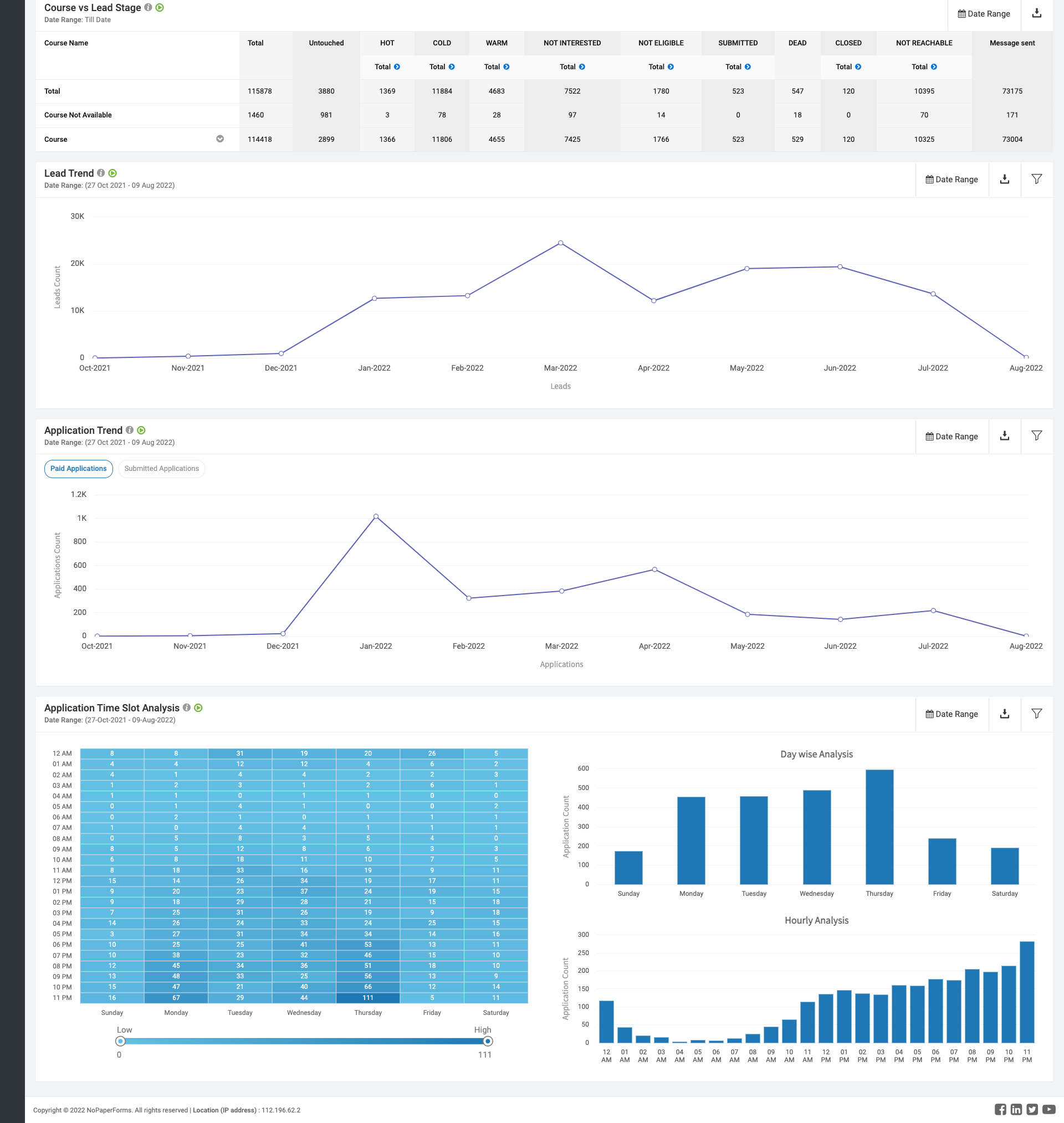

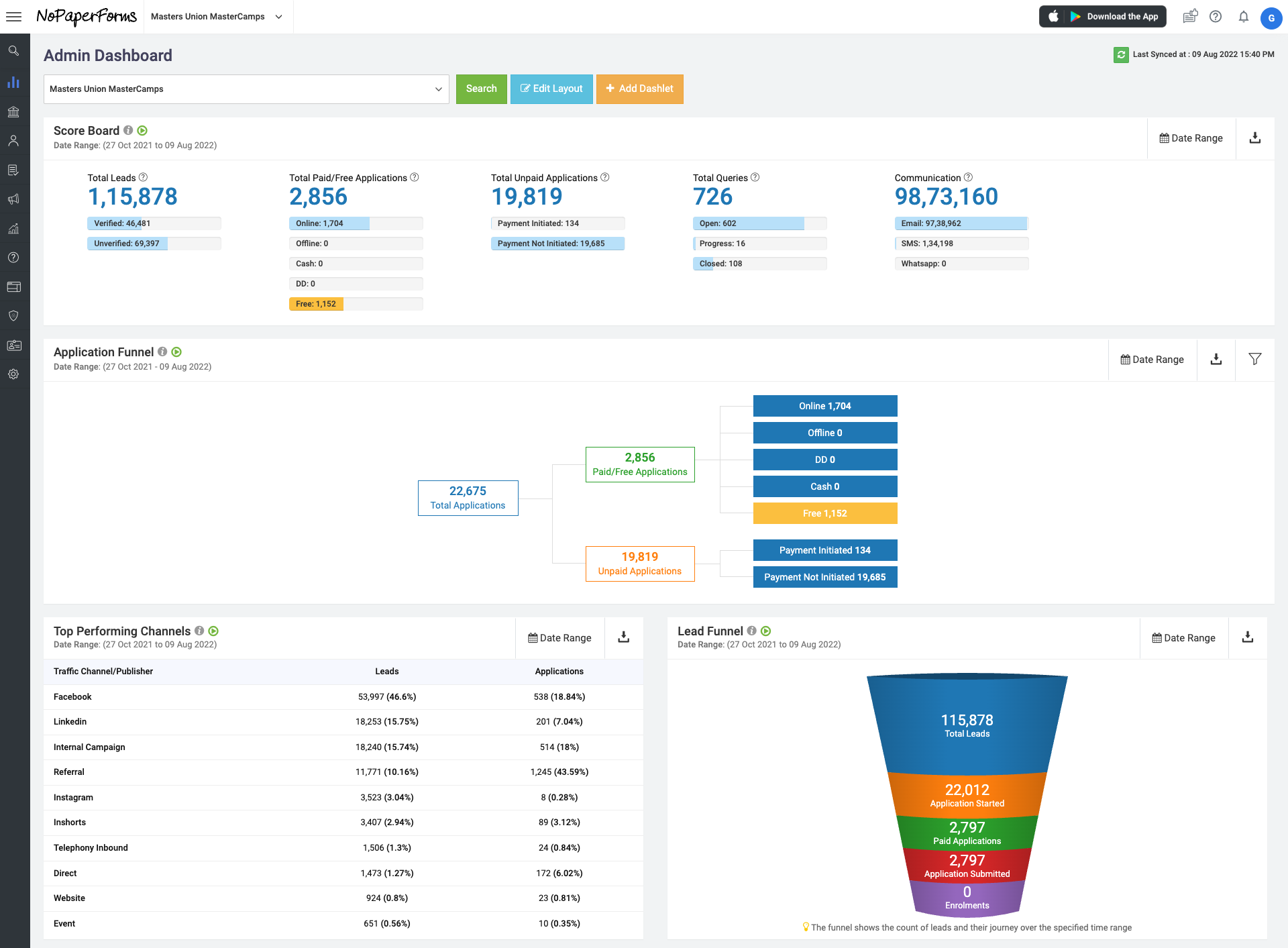

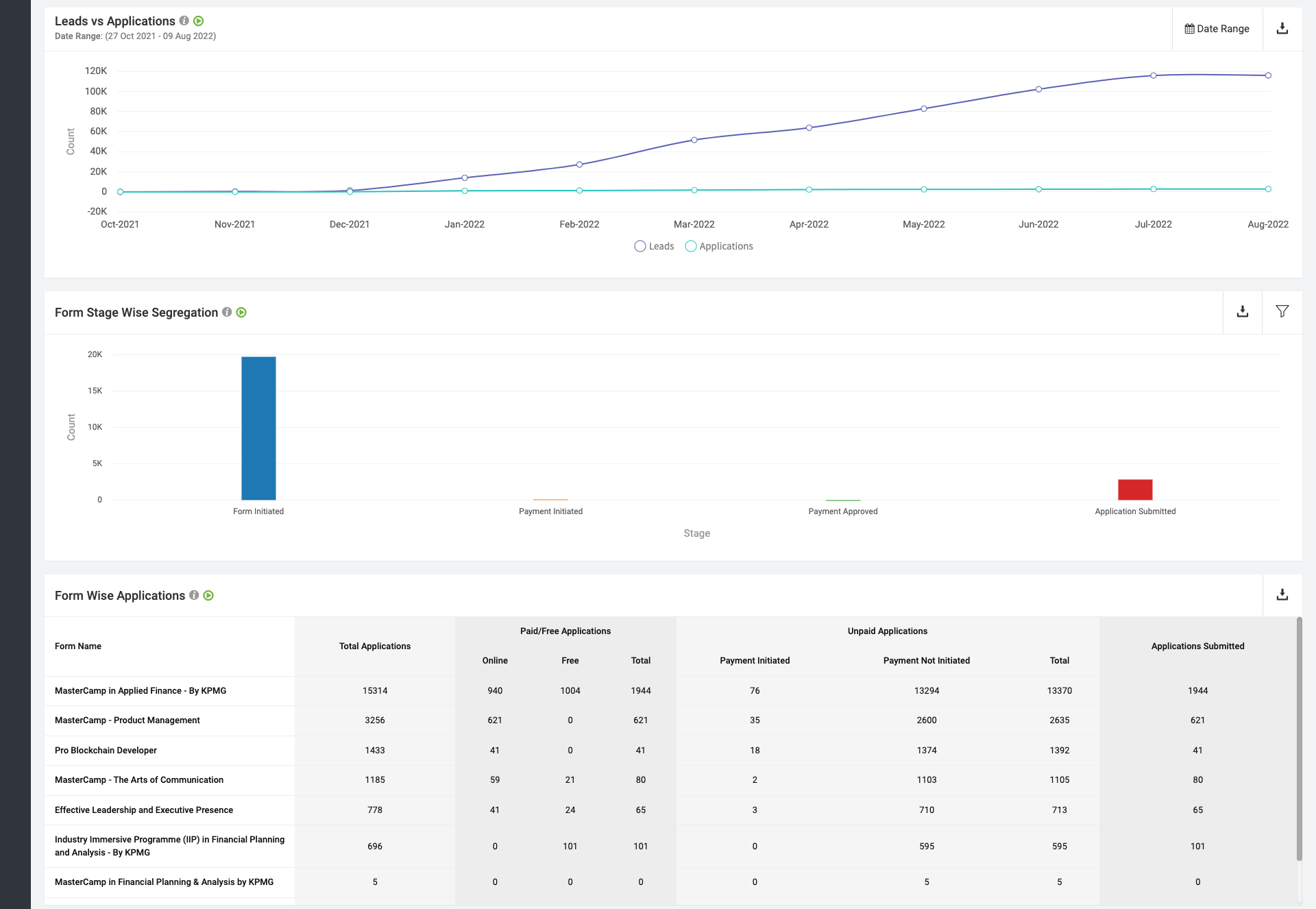

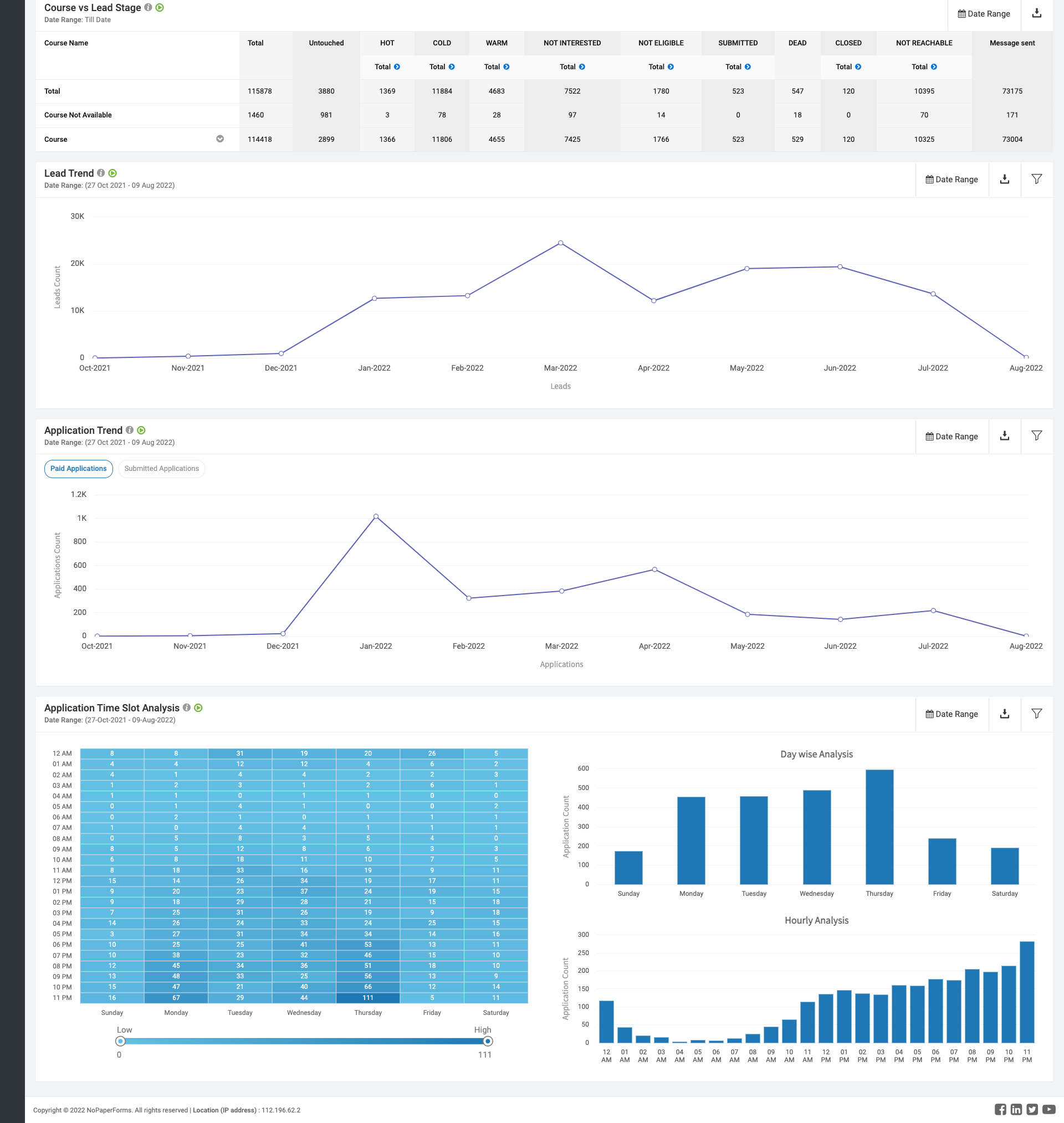

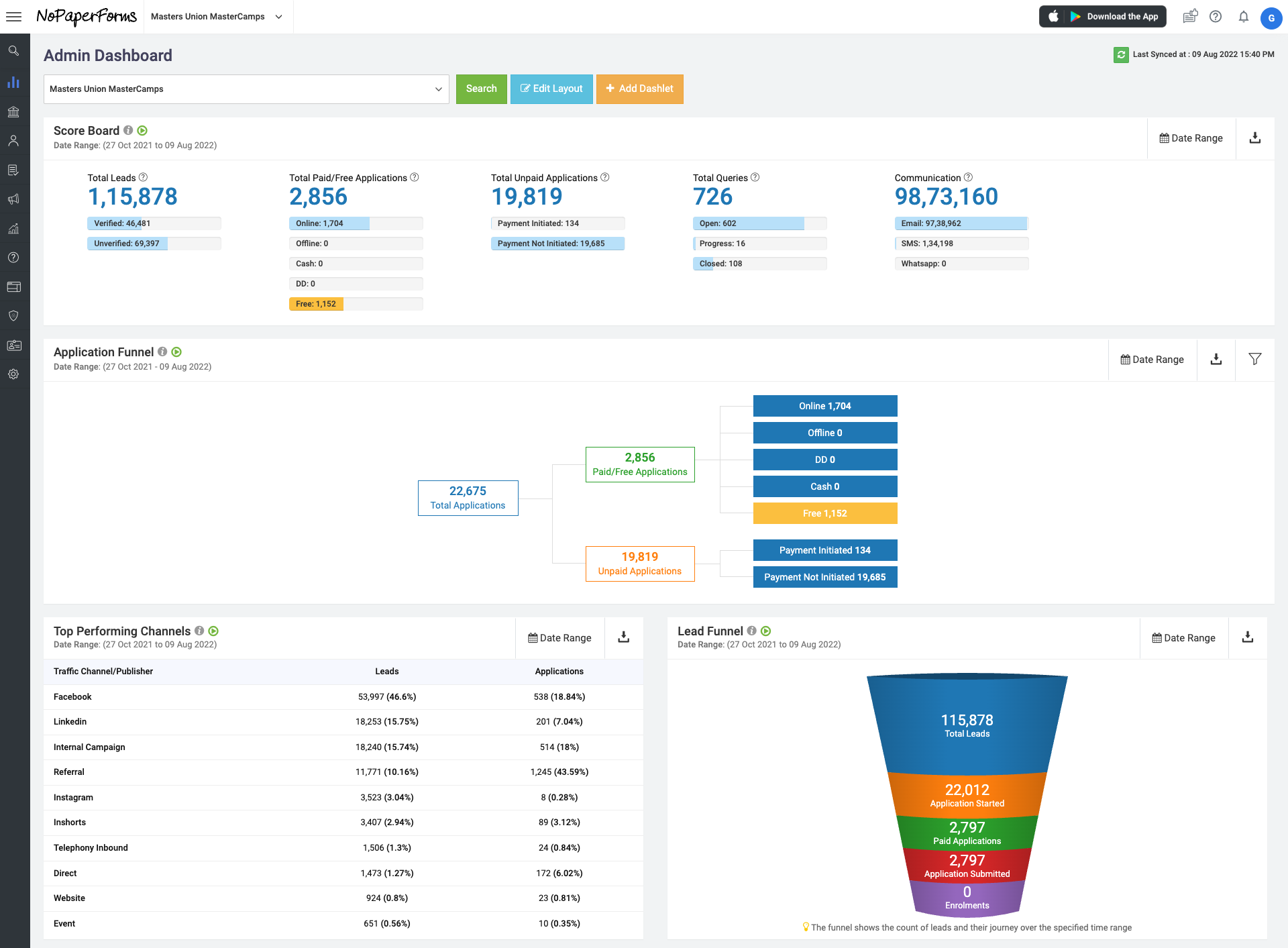

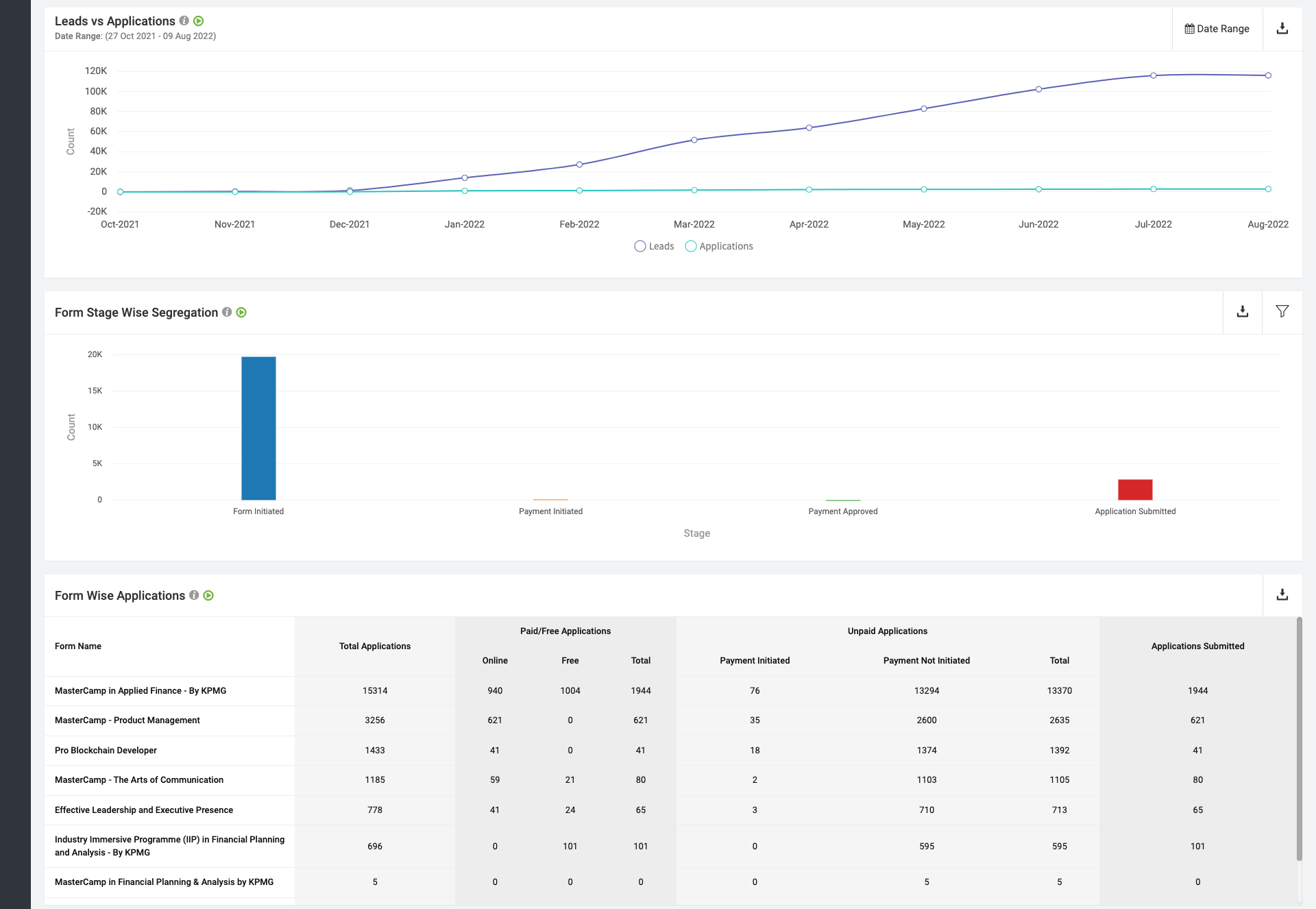

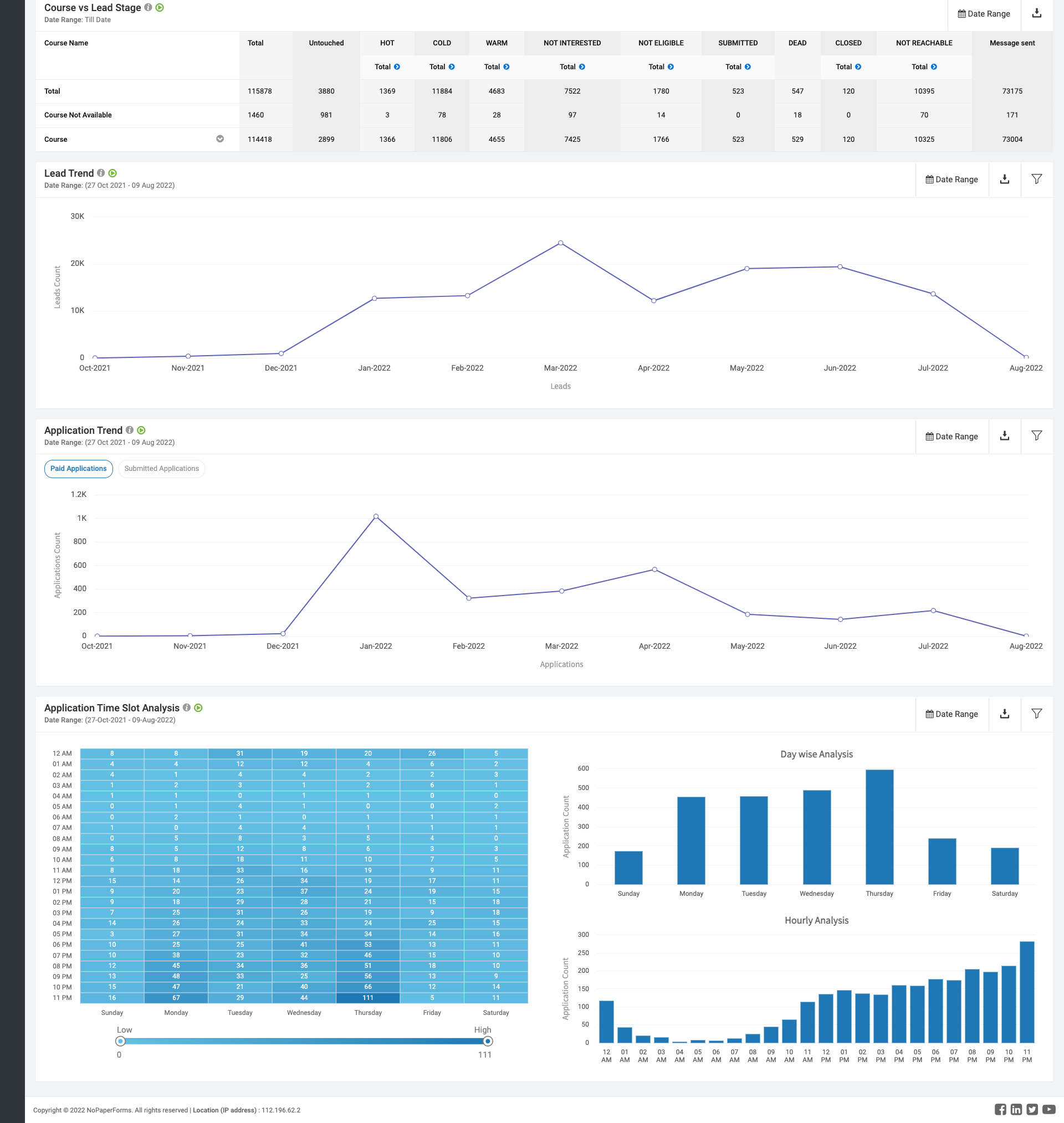

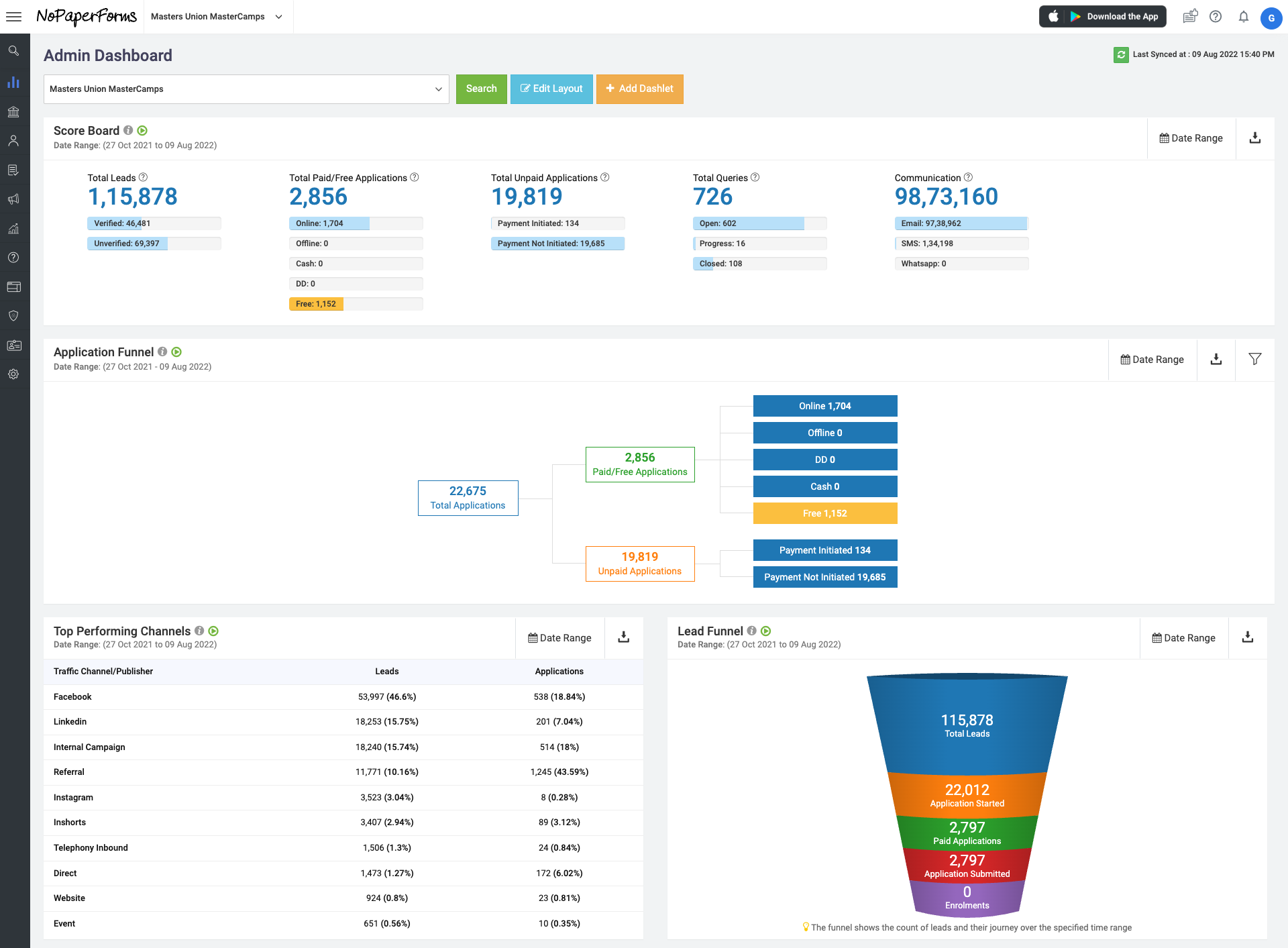

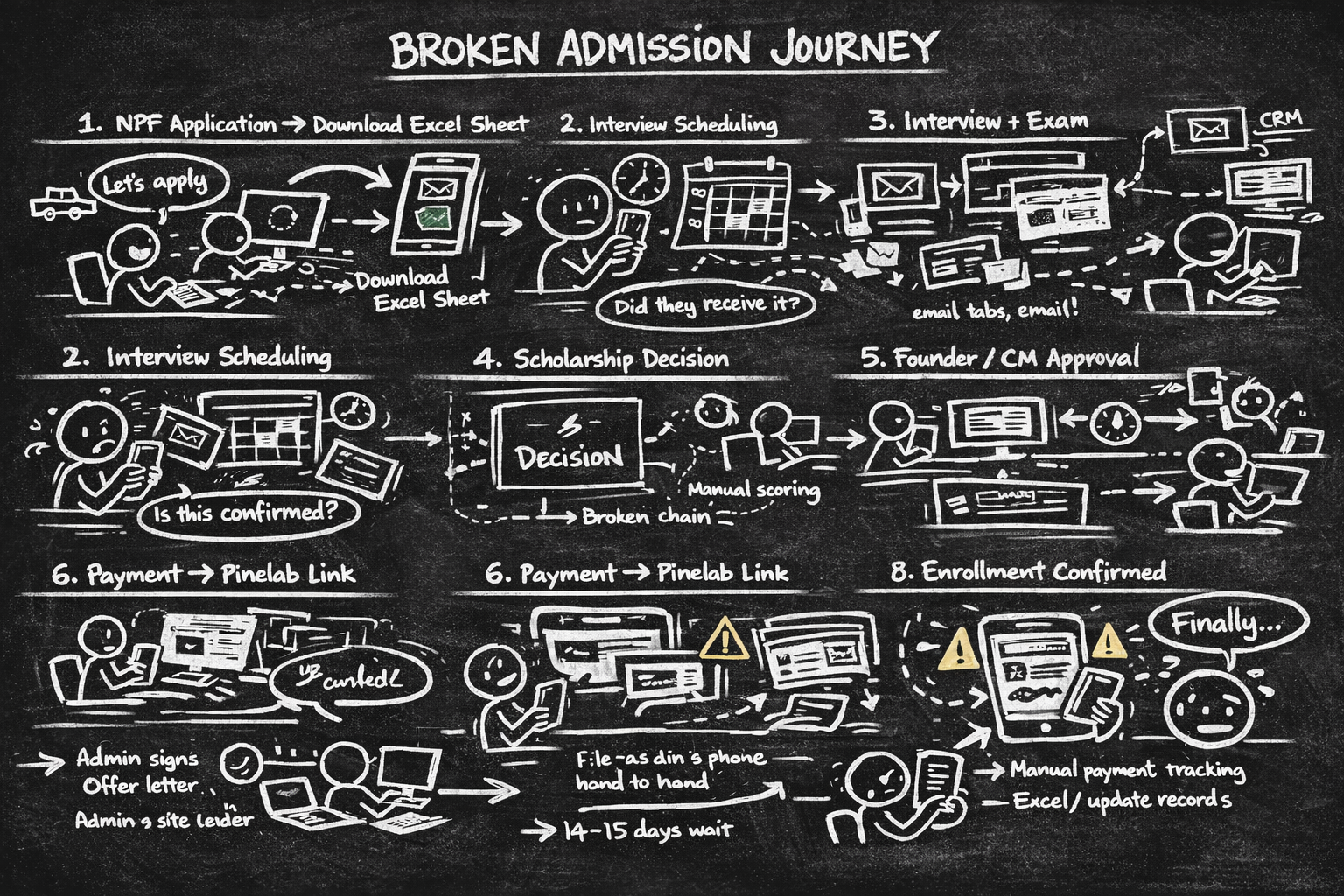

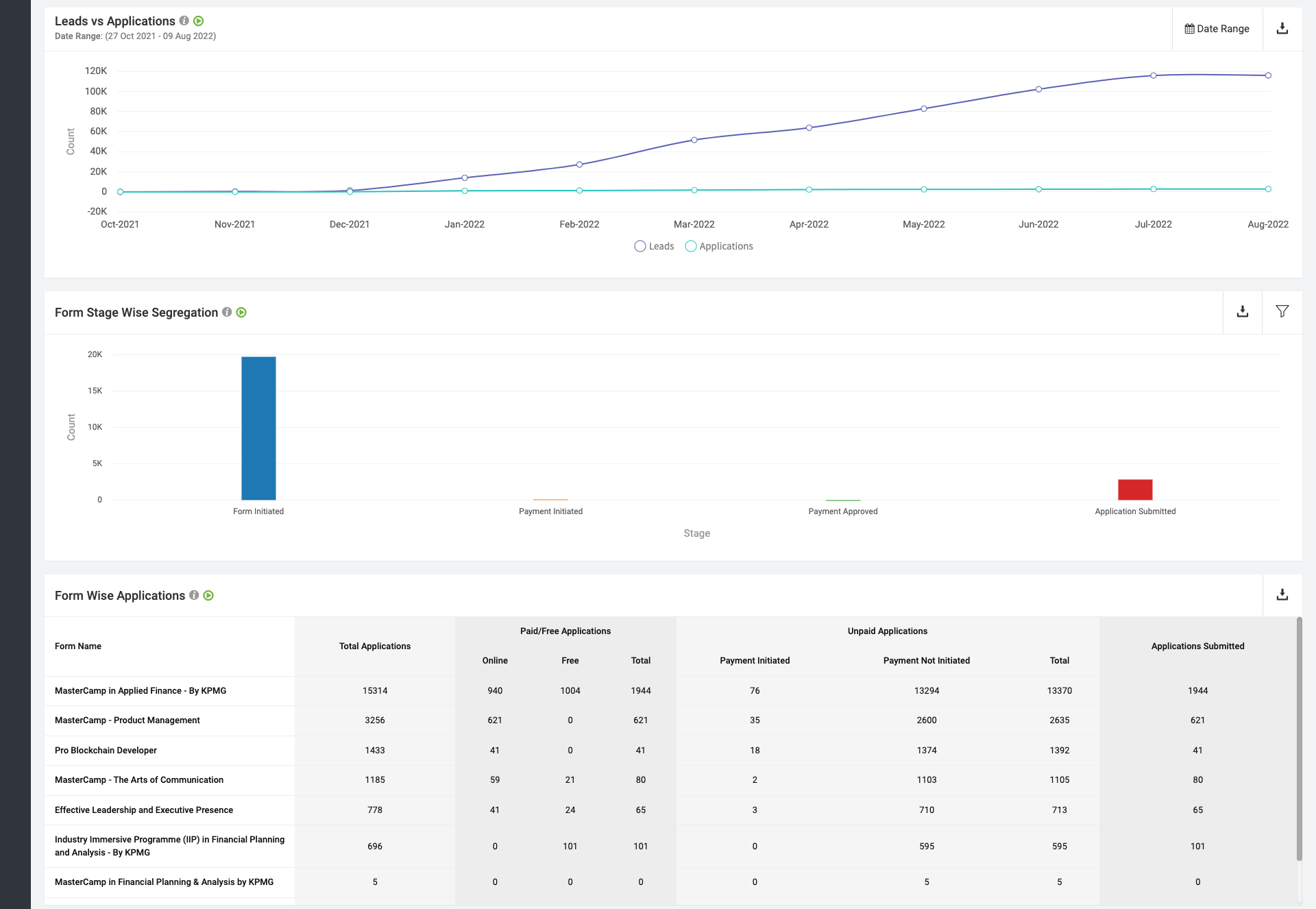

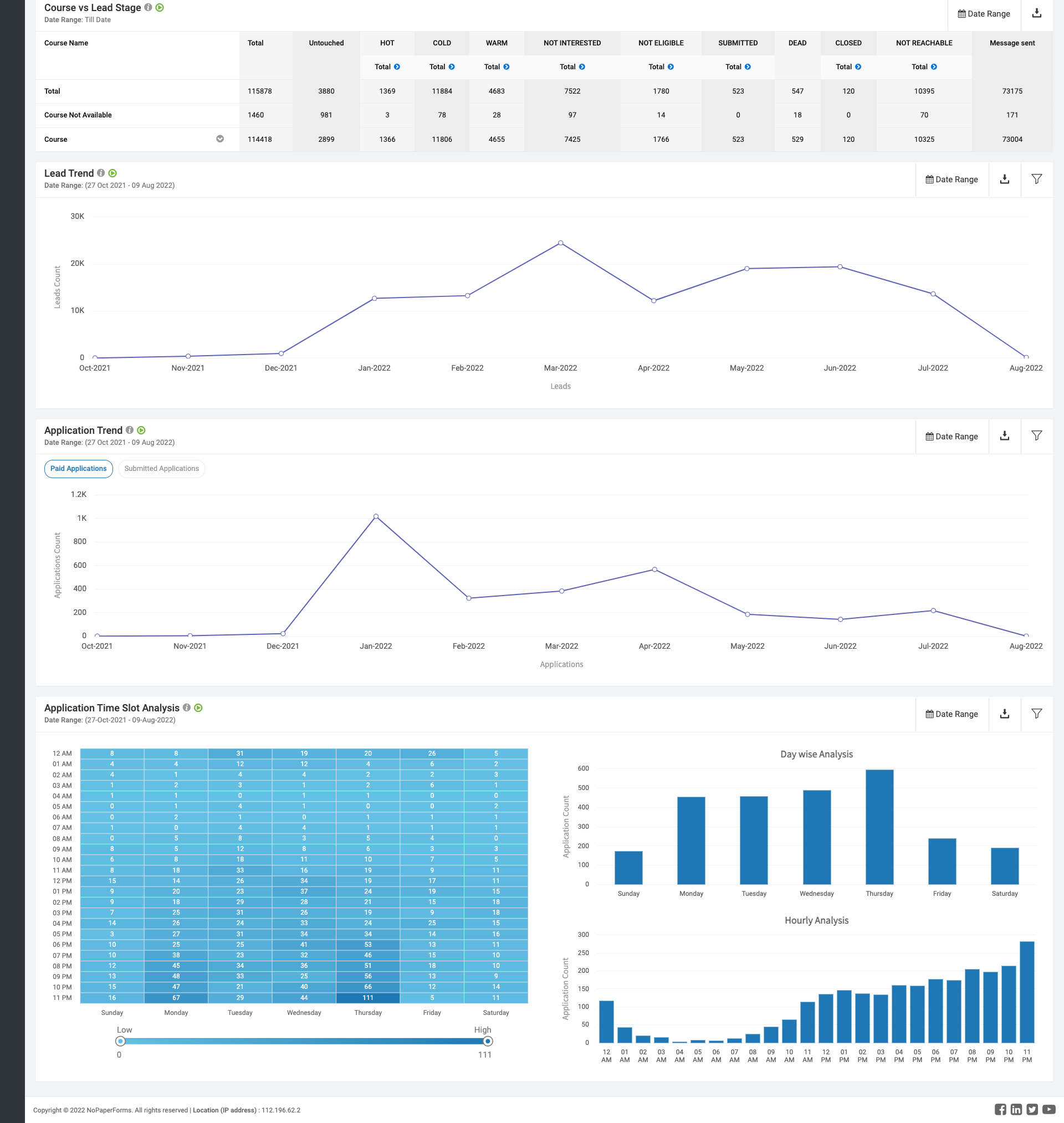

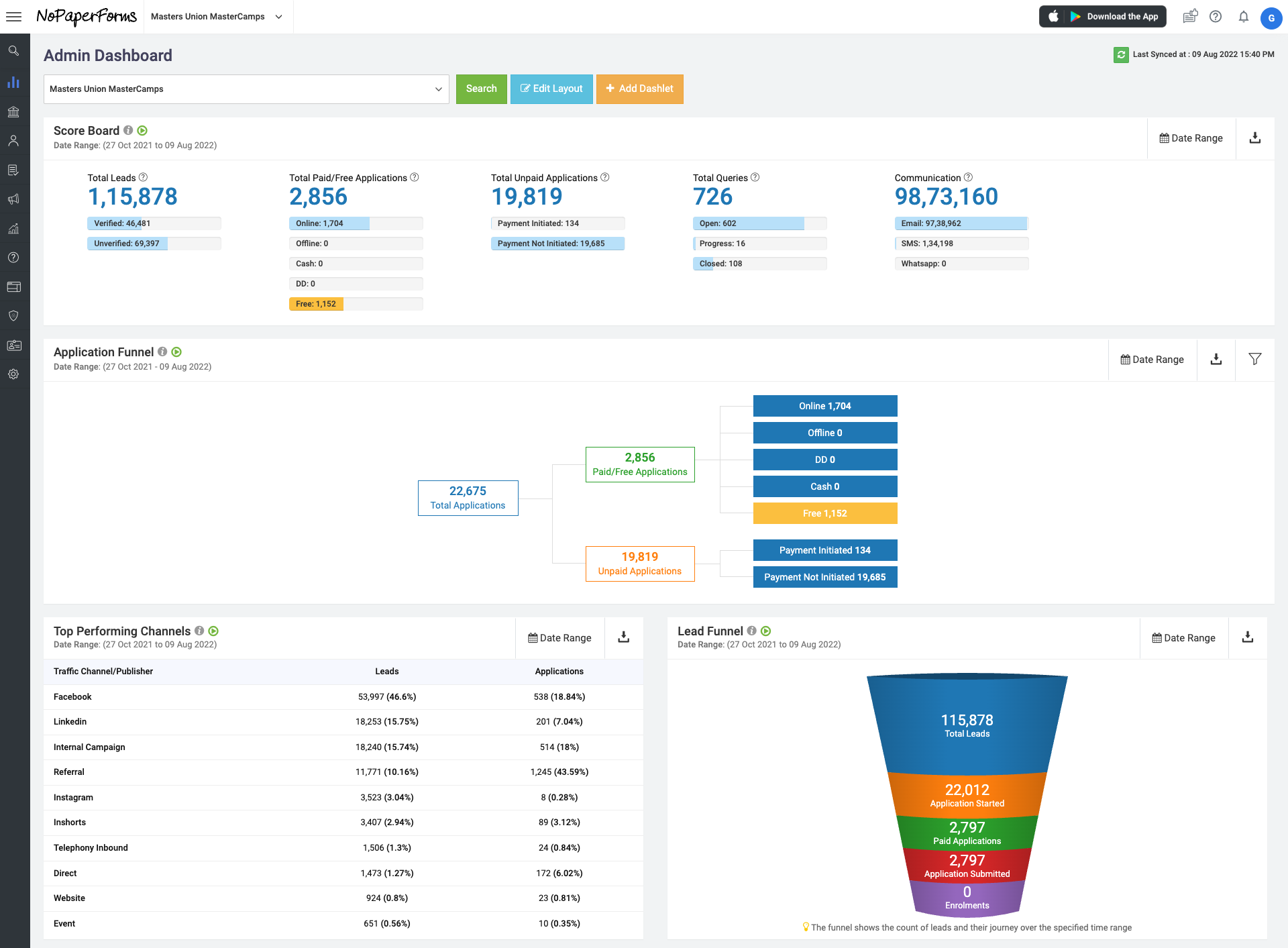

Masters' Union is a tech-first business school that scaled rapidly - but its internal operations couldn't keep pace. Before Dinero, two disconnected systems managed the entire admissions and fee cycle:

NPF handled lead generation and application tracking

Pinelab processed fee payments as an external gateway, accessed via a link in an email

Neither system talked to the other. Every handoff was manual. Every status update was a spreadsheet entry. Students clicking a payment link in an email - for a Rs.3,50,000+ transaction - were landing on an unbranded external page they had never seen before.

Dinero was built to unify this entire journey into one platform - grounded in a single core principle: you don't get to design the solution until you understand the problem.

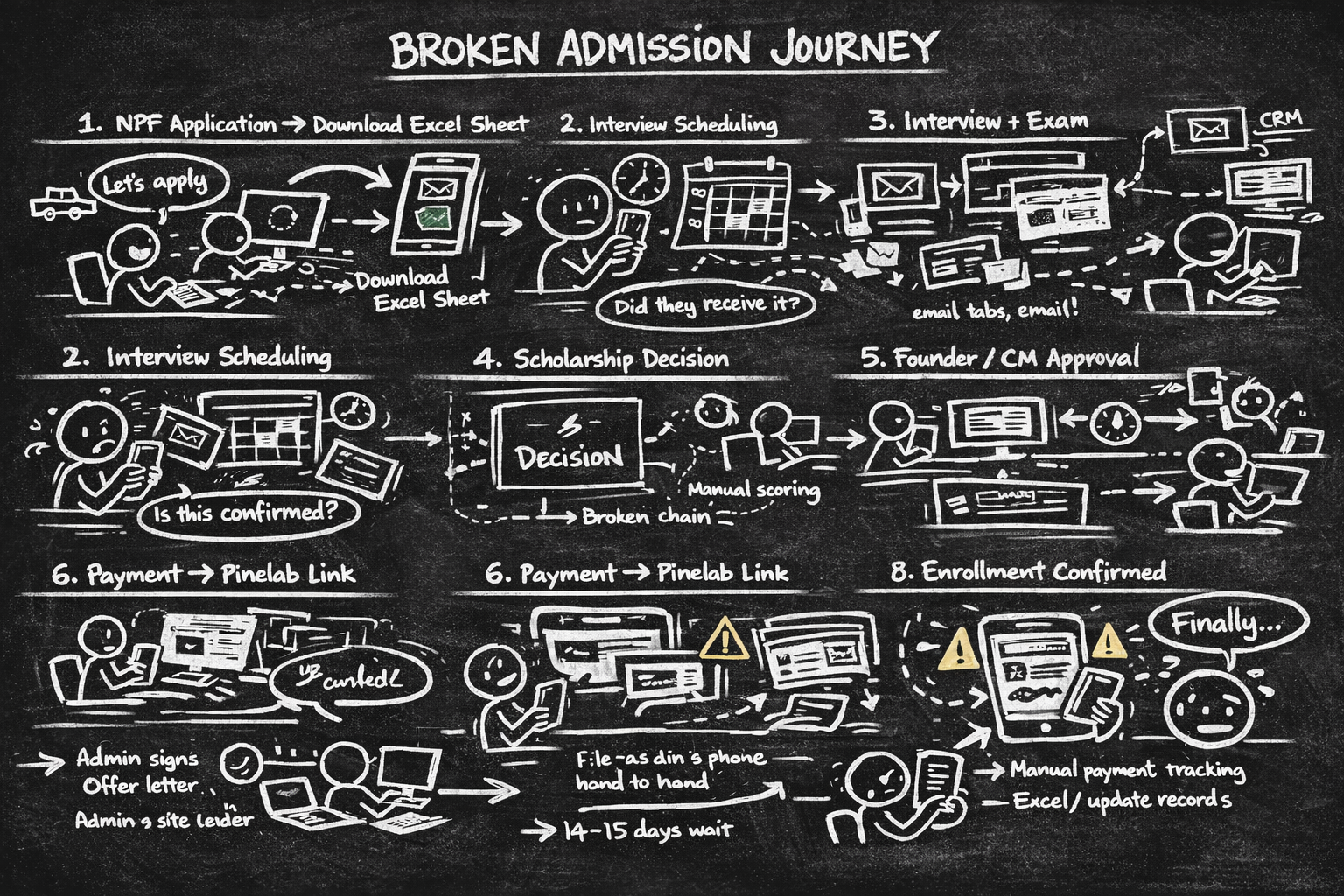

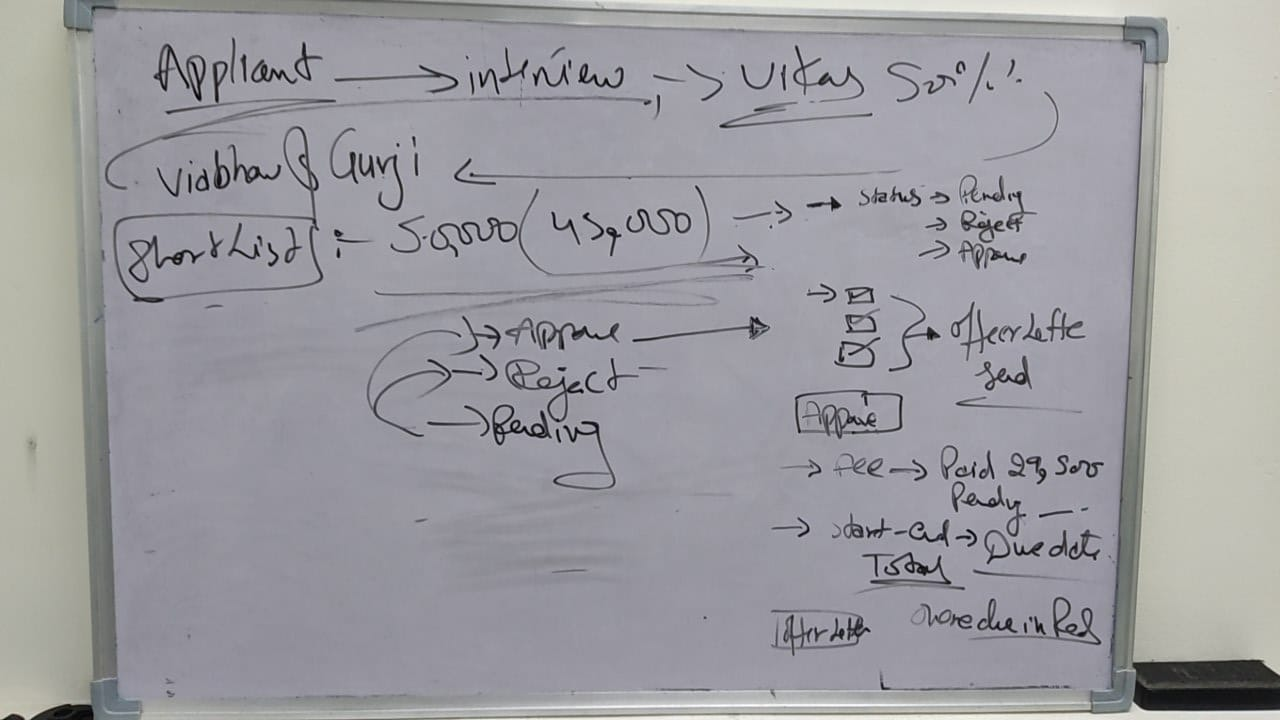

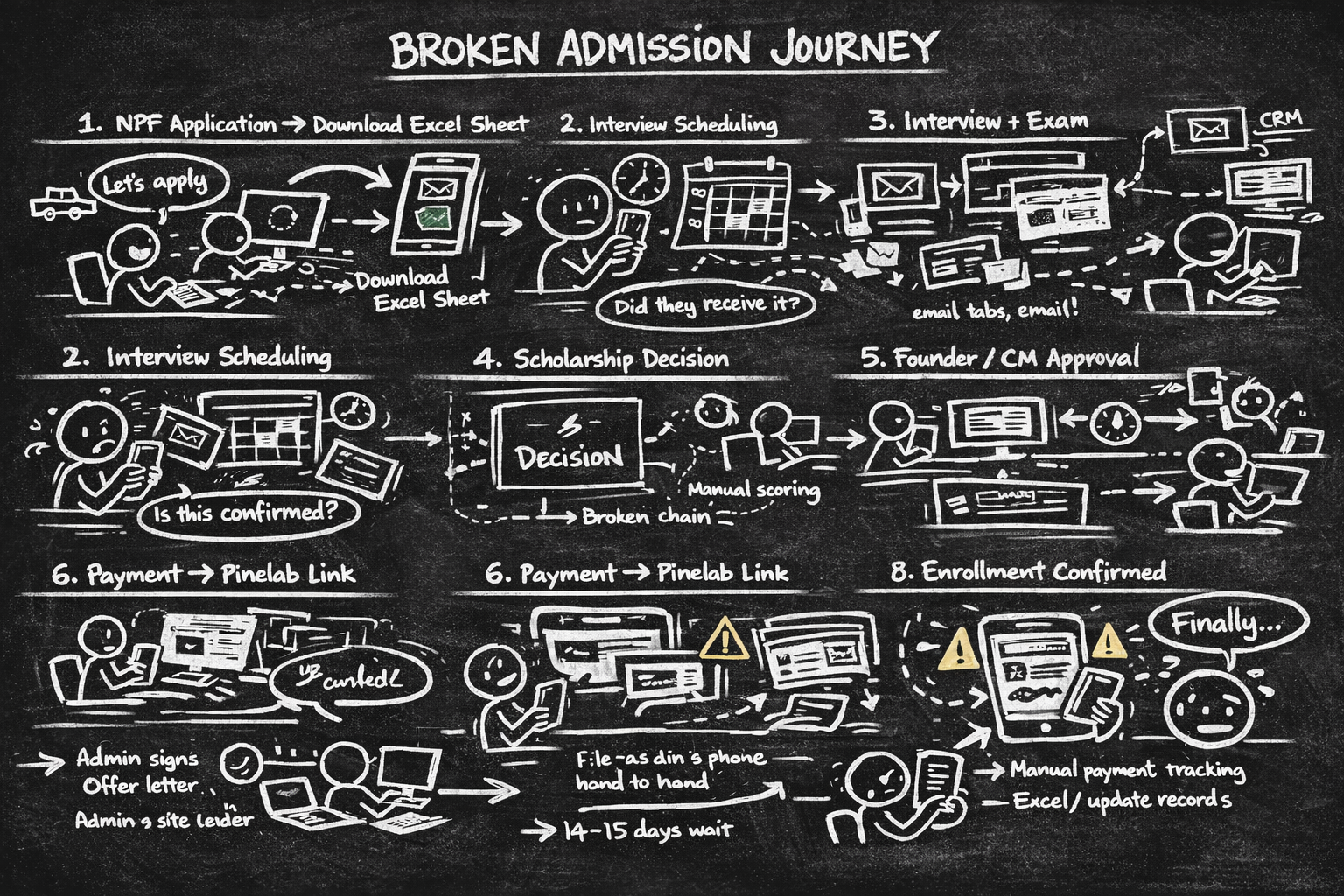

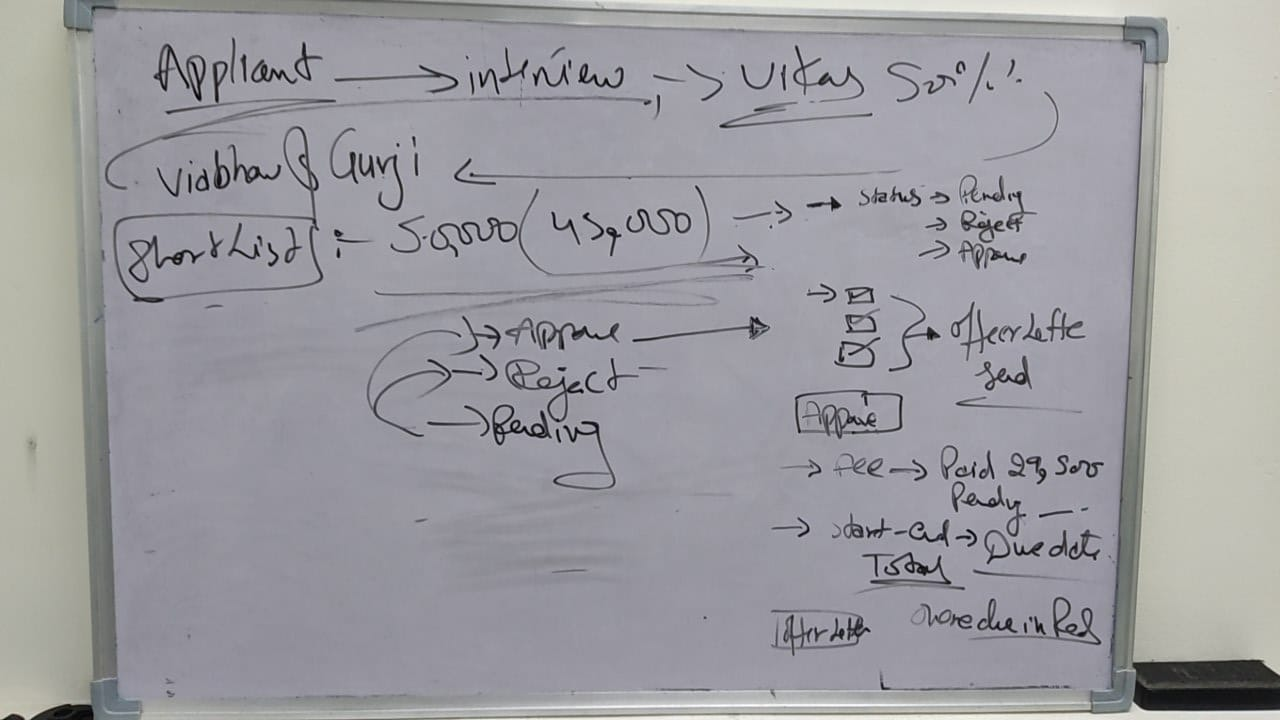

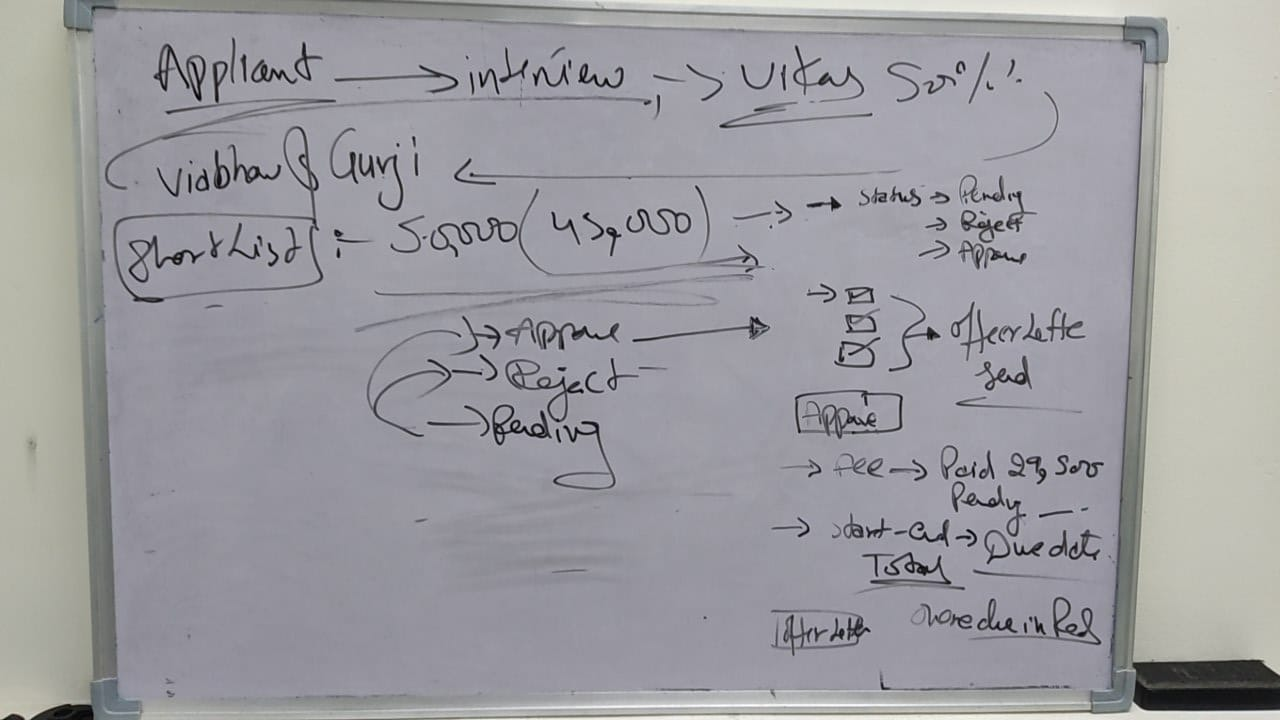

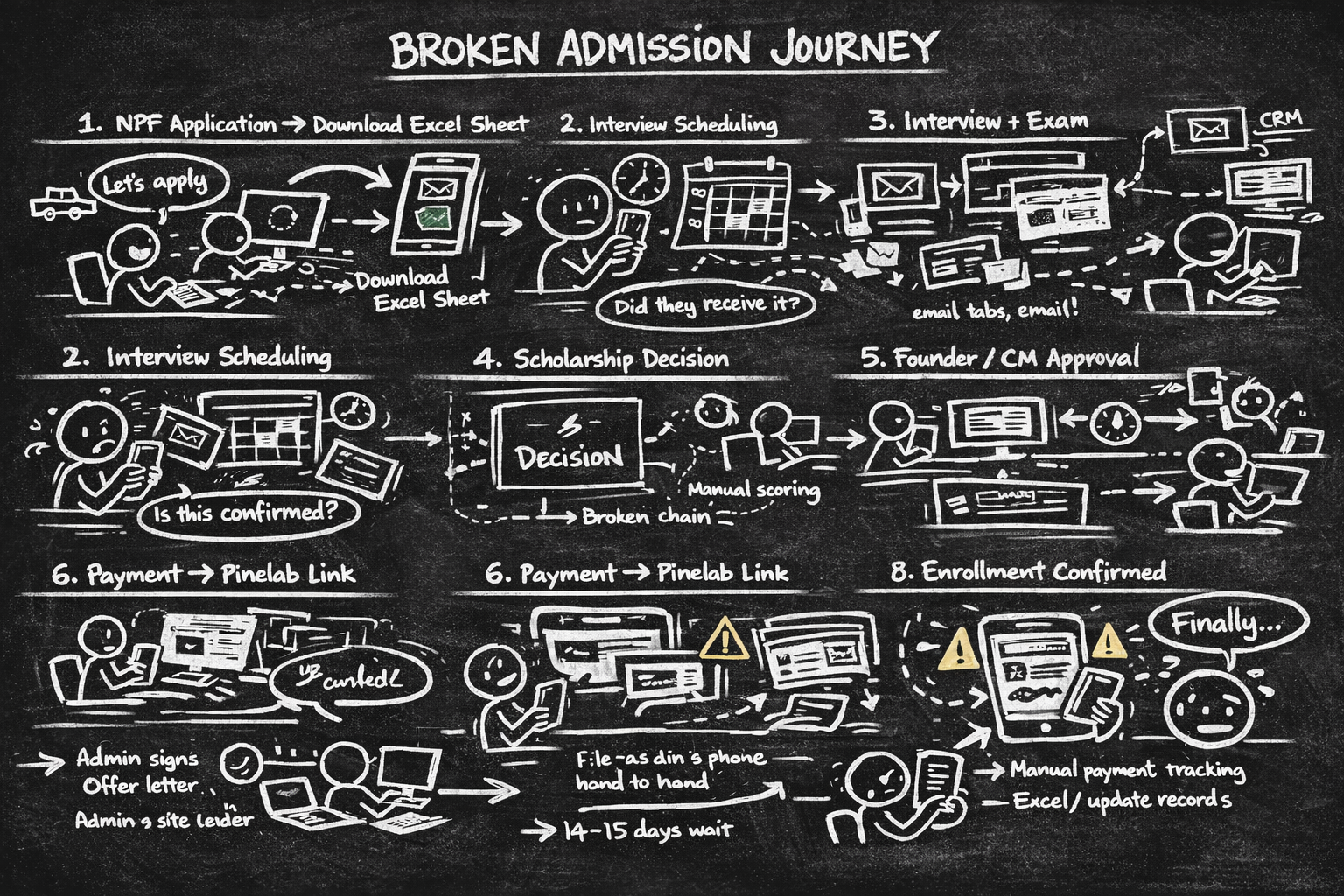

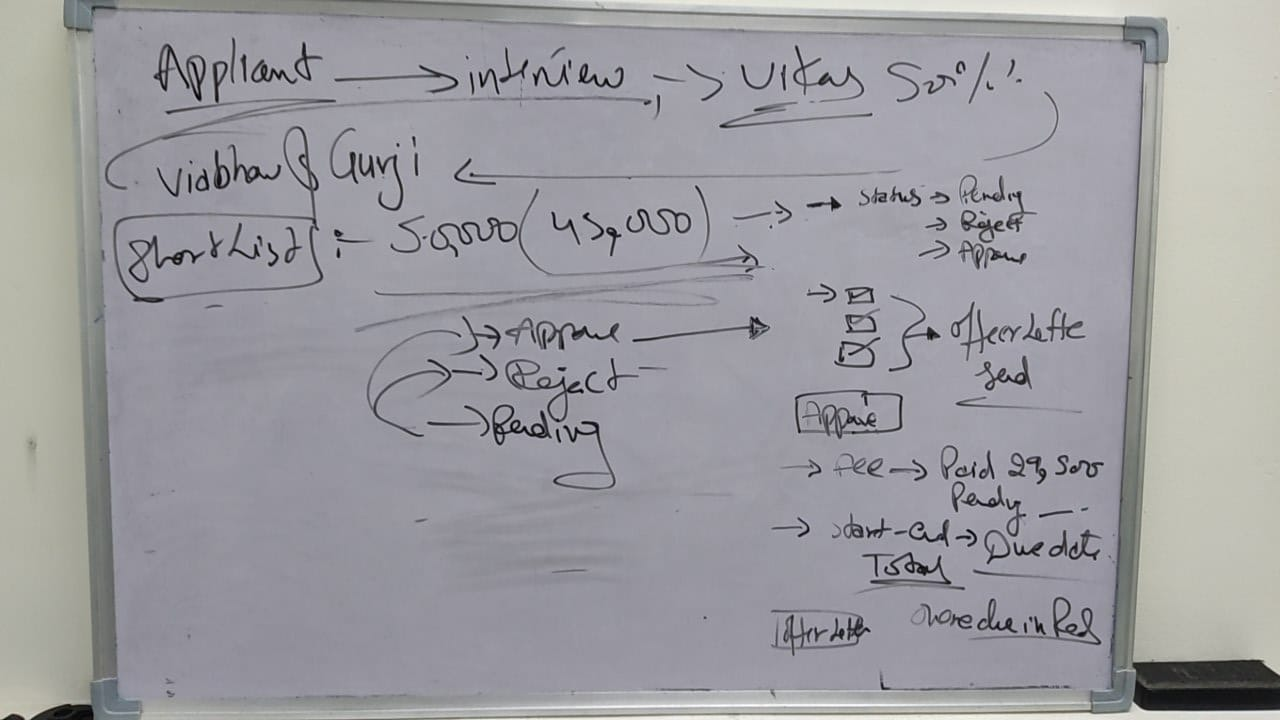

/ The Real 8-Step Manual Journey (Pre-Dinero)

STEP 1

NPF application → Export to Excel

Eliminate Excel dependency, real-time pipeline view

STEP 2

Interview slot assigned → Confirmation email sent manually

In-app slot management + automatic confirmation

STEP 3

Interview conducted + exam scored

Structured faculty scoring inside the platform

STEP 4

Scholarship decision - Approved / Rejected

Decision workflow with automated student notification

STEP 5

Student clicks payment link in email

Embed Pinelab inside Dinero. Trust signals. Instant receipt.

↳ Lands on external Pinelab page

↳ No Masters’ Union branding · No in-app confirmation · 14–15 day manual processing

↳ ~25% abandonment - students feared the link was phishing

STEP 6

Offer letter sent by business team via email

Automated offer letter trigger inside platform

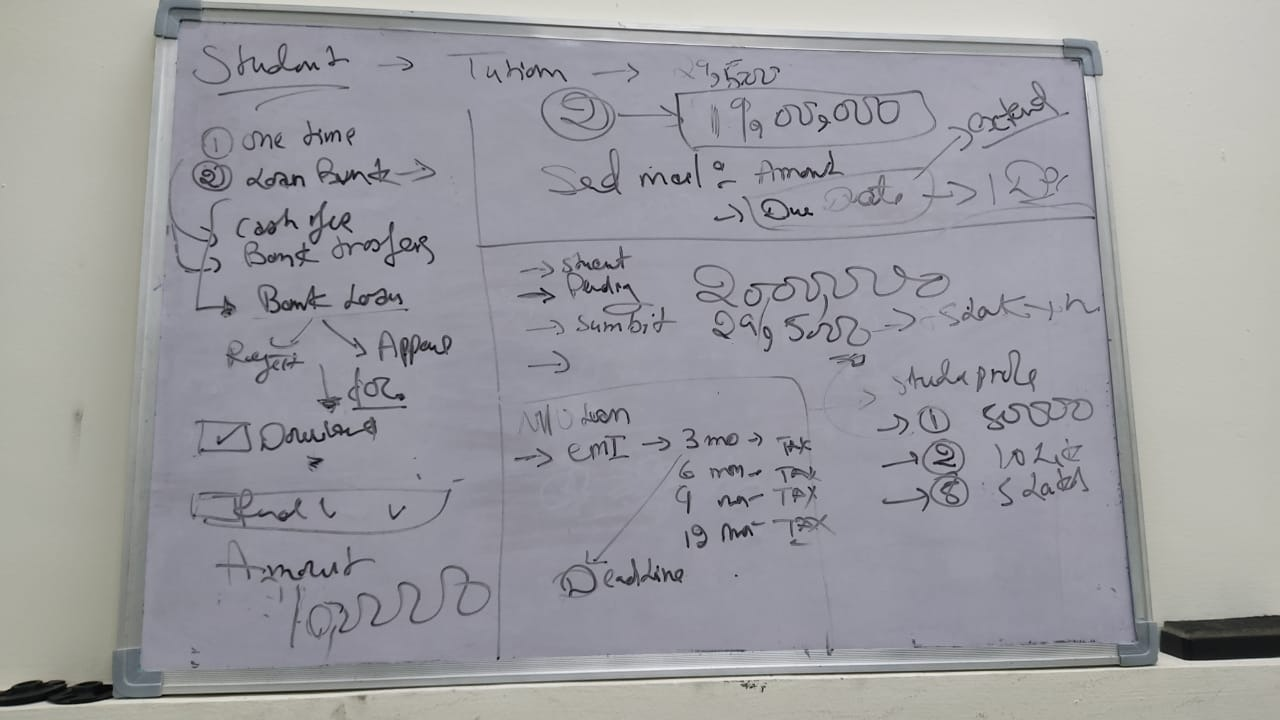

STEP 7

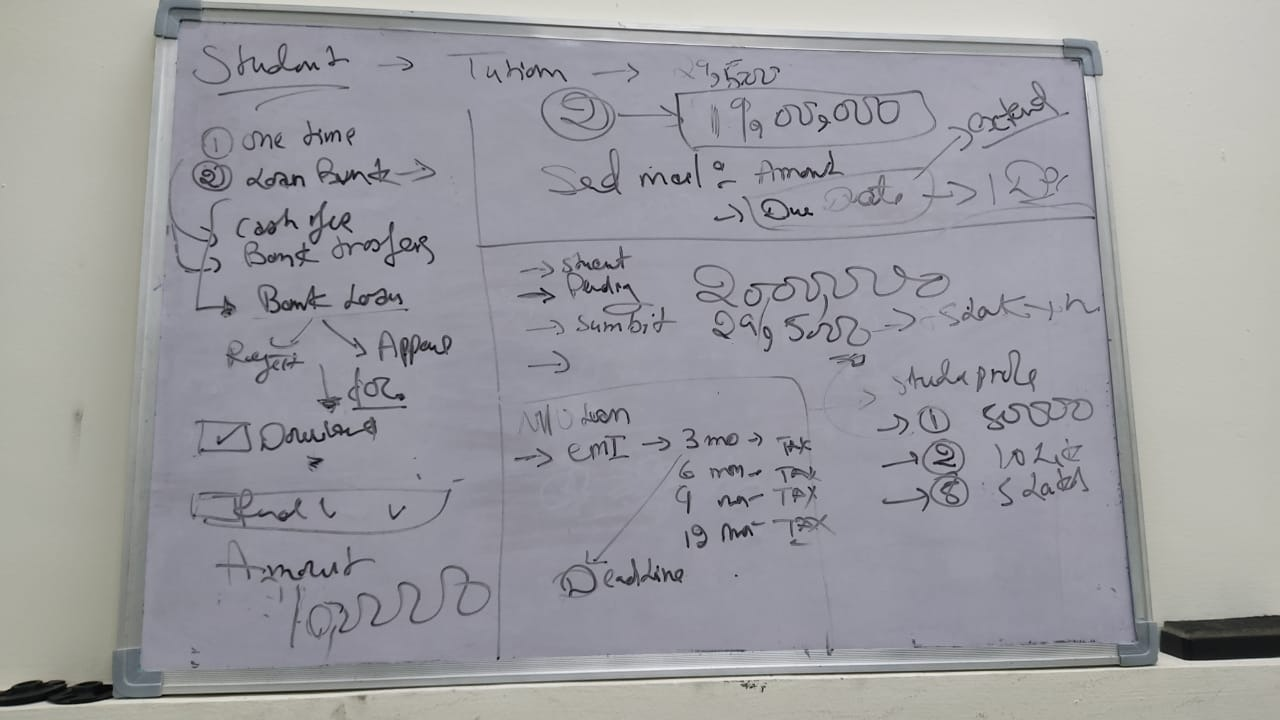

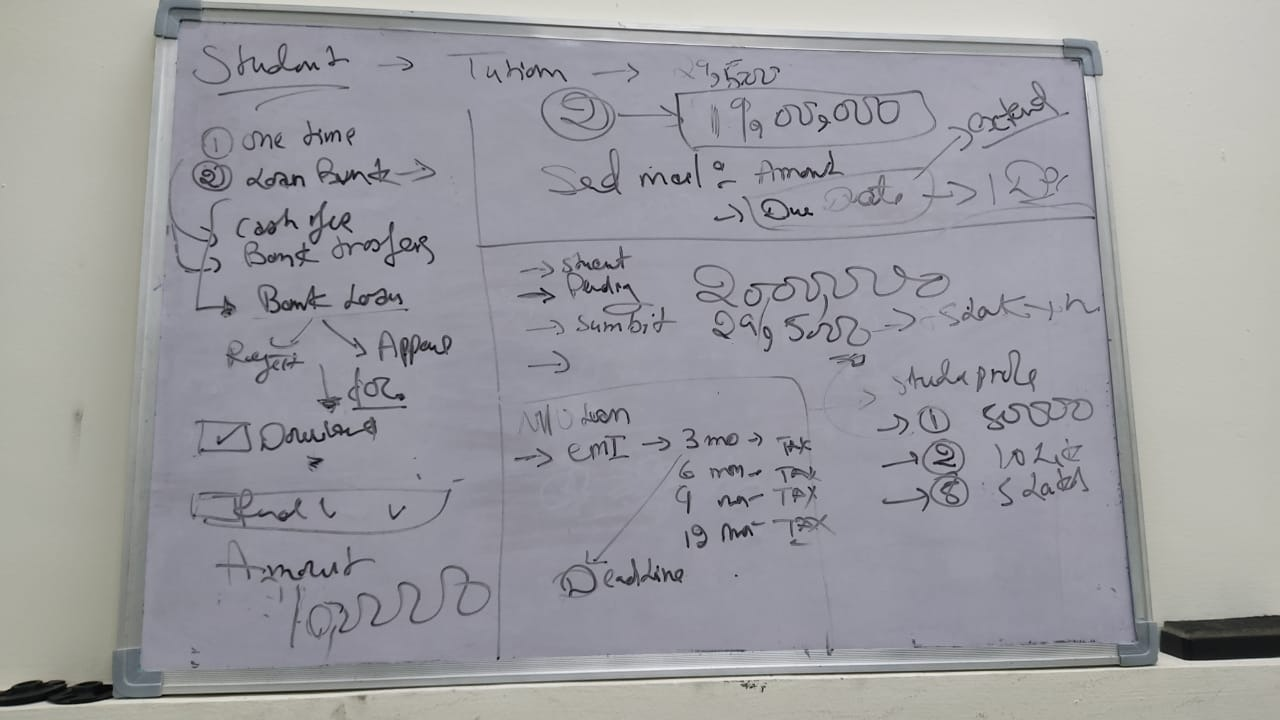

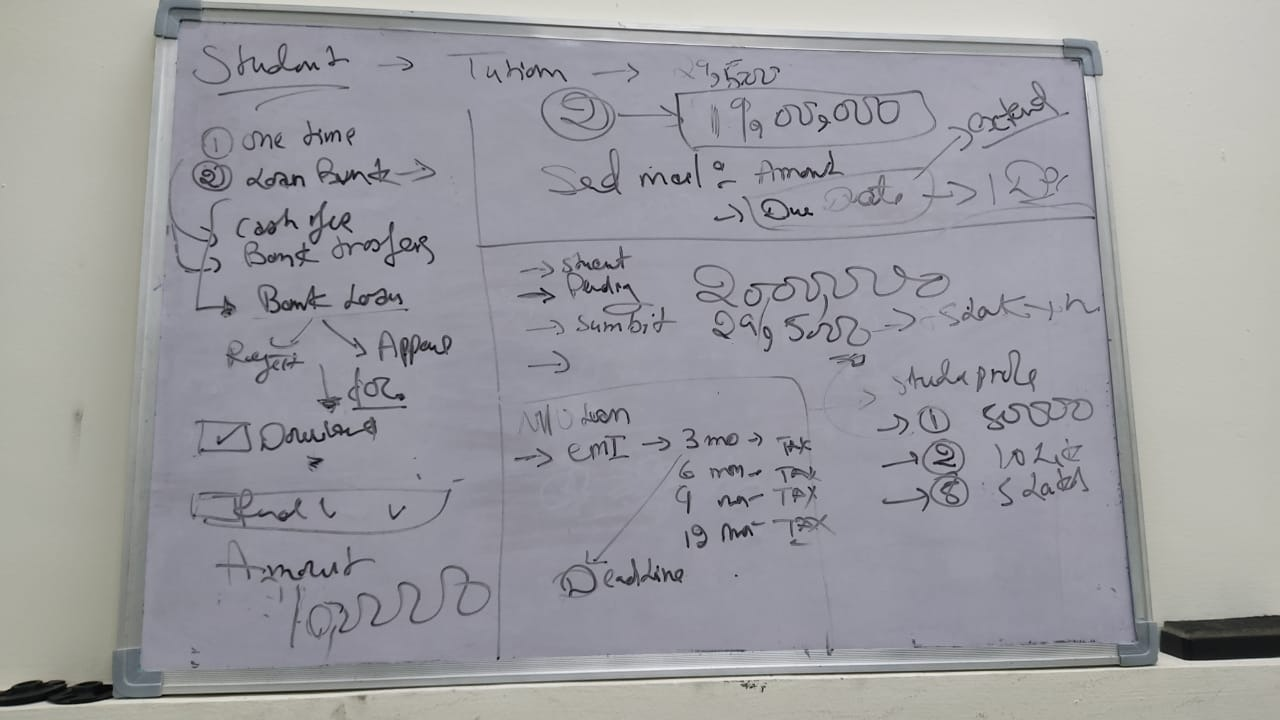

Student submits admission fee + tuition fee

In-platform fee split + loan request flow

STEP 7 or

If student cannot pay → Manual loan approval process begins

STEP 8

Payment confirmed → Enrollment updated manually across NPF + Excel + CRM

Auto-enrollment on payment confirmation. Single source of truth.

Total overhead: 3–5 hours of non-value-added admin work daily. Every step above was a design opportunity that Dinero addressed.


/ 1.2 Research Goals

G1:

Understand the end-to-end student journey across all 8 stages from NPF application to enrollment

G2:

Define what a unified platform must do to replace the fragmented NPF + Pinelab workflow

G3:

Identify the highest-severity pain points across students, admins and faculty

G4:

Validate design decisions through iterative usability testing before development

G5:

Measure UX quality post-launch using the HEART framework

/ 1.3 Research Questions

Type

Research Question

Method

Generative

What does the real 8-step admission journey look like and where does it break?

Contextual inquiry + User interviews

Generative

Why do students distrust the Pinelab payment link sent via email?

User interviews (laddering)

Generative

Where do admins lose the most time across NPF, Excel and Pinelab?

Contextual observation

Generative

How do counselors track student progress and session outcomes today?

User interviews

Evaluative

Can users complete core flows in Dinero without help?

Moderated usability testing

Evaluative

Which payment flow variant performs better?

A/B testing

/ 1.4 KPIs & Success Metrics

Admin daily manual effort

Baseline

3–5 hrs/day

Target

< 1 hr/day

Outcome

< 1 hr/day - all workflows unified

Payment abandonment rate

Baseline

~25%

Target

< 10%

Outcome

Target met - 93% in-app payment completion in the A/B test

Platforms in daily use

Baseline

2+ disconnected

Target

1 platform

Outcome

Single platform shipped

Task success rate (usability)

Baseline

N/A

Target

≥ 80%

Outcome

87% in Round 2

Students onboarded

Baseline

0

Target

2,000+

Outcome

1,000+ onboarded

CSAT score

Baseline

N/A

Target

≥ 4.2 / 5

Outcome

Positive across all roles

/ 1.5 Methodology

Mixed Methods, Two Phases

We used a two-phase mixed-methods approach: Generative research to discover and frame the problem, then Evaluative research to validate and refine the solution. The rule was simple - understand before you design, test before you ship.

01

Discover

Qualitative

Stakeholder Alignment Interviews

Business goals, constraints, success definition

02

Discover

Qualitative

Contextual Inquiry

Real admin workflow map - NPF → Pinelab → Excel

03

Discover

Qualitative

Semi-structured User Interviews

Pain points, mental models, JTBD per role

04

Discover

Secondary

Competitive Analysis

Admission feature gap matrix

05

Define

Synthesis

Affinity Mapping (KJ Method)

200+ observations → 5 insight clusters

06

Define

Synthesis

Jobs to Be Done (JTBD)

JTBD map per role × use case

07

Define

Synthesis

User Personas

3 research-backed personas

08

Define

Synthesis

Experience / Journey Mapping

8-step emotional arc - application to enrollment

09

Ideate

Quantitative

Card Sorting (Open)

User-defined IA - confirmed role-based dashboards

10

Test

Evaluative

Moderated Usability Testing

Round 1: 63% · Round 2: 87% task success

11

Test

Expert Review

Heuristic Evaluation

Nielsen-rated issue list - 4 violations resolved

12

Test

Quantitative

A/B Testing

74% → 93% payment completion

13

Measure

Quantitative

HEART Framework

UX quality across 5 dimensions post-launch
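Item 12 above reports the A/B result as rates (74% → 93% payment completion), not as the number of students in each variant, and whether a gap like that is more than noise depends on those counts. A minimal sketch of a pooled two-proportion z-test using only Python's standard library; the function name and the 100-students-per-variant counts are hypothetical placeholders, not the study's data:

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z, two-sided p-value)."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled completion rate under the null hypothesis that both variants perform the same
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both variants at 0% or 100%: no variation to test
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A = external link (74%), variant B = embedded checkout (93%).
z, p = two_proportion_z_test(success_a=74, n_a=100, success_b=93, n_b=100)
print(f"z = {z:.2f}, two-sided p = {p:.4f}")
```

The same two percentages give a larger or smaller z as the number of students per variant changes, which is why the per-variant sample size is worth reporting next to the rates.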

/ 1.6 Participants

5

Students

User interviews + Usability testing

3

Admins

User interviews + Contextual observation

3

Faculty / Counselors

User interviews + Card sorting

/ Screening Criteria

→

Active users of NPF or Pinelab payment flow

→

Minimum 2 months tenure with the institution

→

Informed consent obtained for all sessions

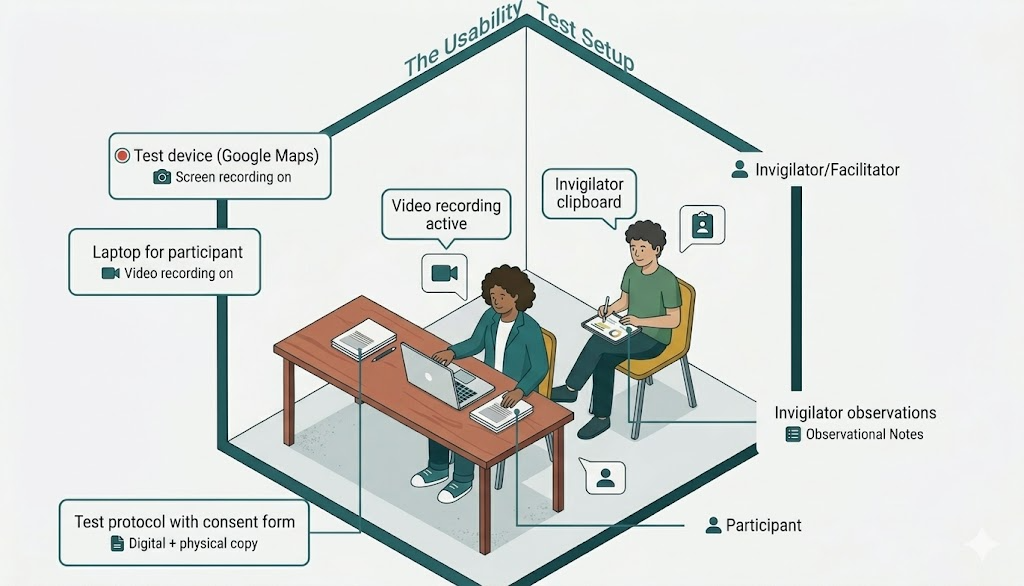

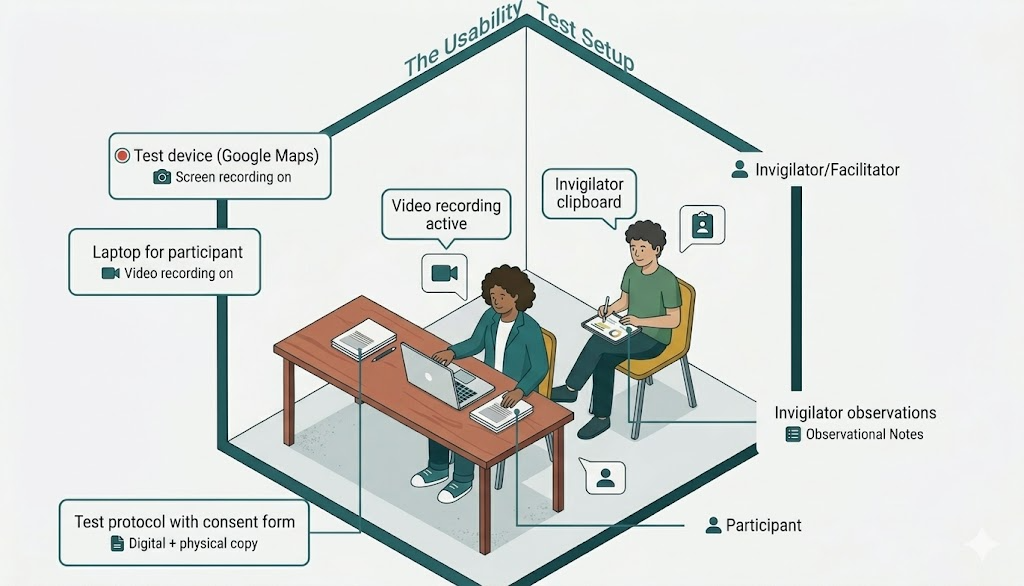

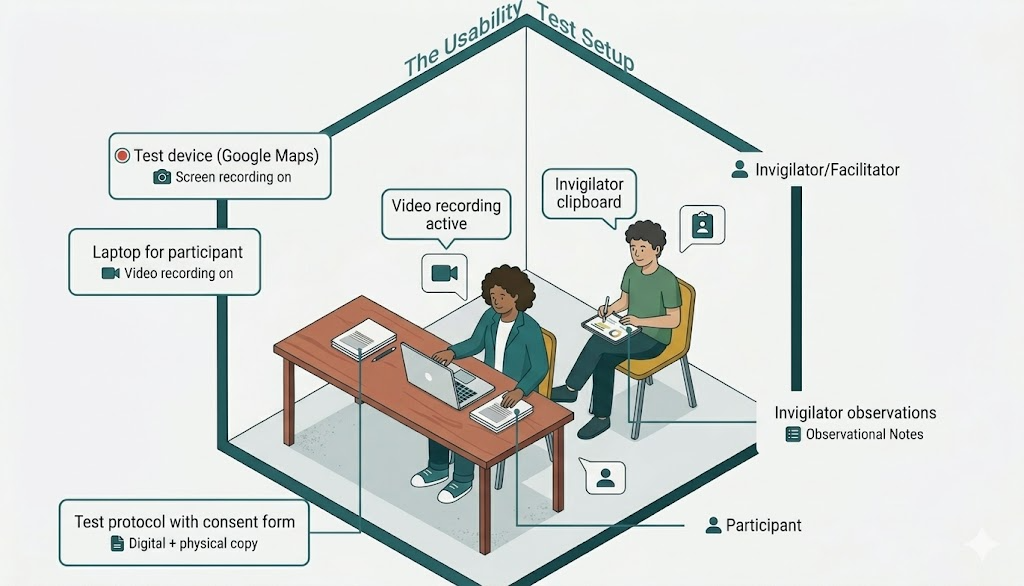

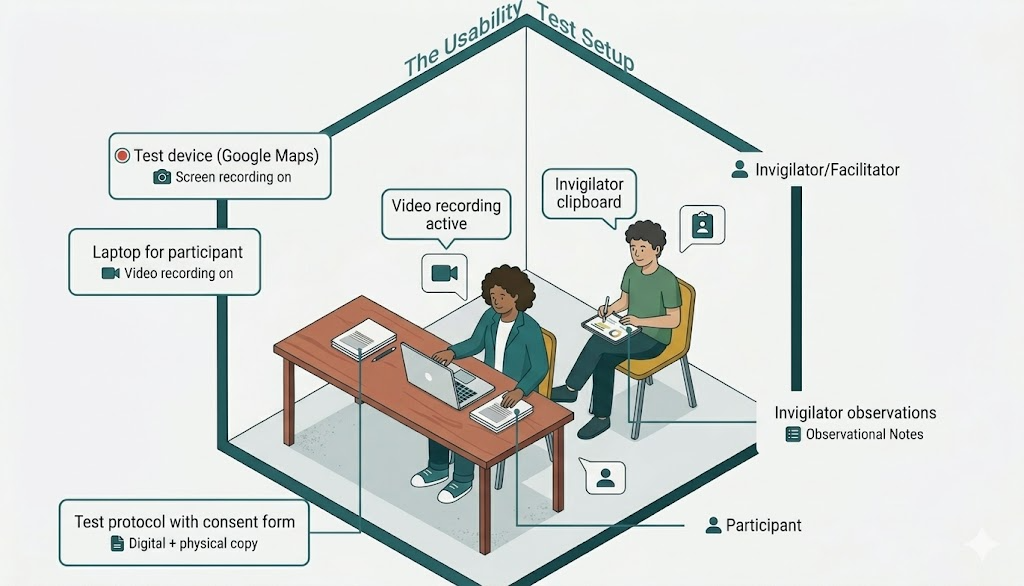

/ 1.7 Usability Test Script

Moderator Introduction - Read Verbatim

"Hi, thank you for joining us. I'm Dhiraj - a designer on the Dinero team. We're testing the product today, not you. There are no wrong answers. Please think out loud - tell us what you're reading, what you expect, what surprises you. You can stop at any time. Any questions before we begin?"

Task 1 - Student: Pay Your Semester Fee

“You’ve just received your offer letter. Please pay your first semester fee using Dinero.”

- Success: Payment completed within 3 minutes, no moderator prompts

- Watch for: Trust hesitation, confusion on fee breakdown, missing confirmation

Task 2 - Admin: Update a Student Status

“A student just completed their interview. Please update their status to Shortlisted.”

- Success: Status updated within 90 seconds

- Watch for: Navigation path, search vs browse, dead ends

Task 3 - Faculty: Log a Session Note

“You just finished counseling a student. Please log your notes and set a follow-up reminder.”

- Success: Note saved + reminder set within 90 seconds

- Watch for: Where they look first, save confirmation, follow-up confusion
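The success criteria above can be scored mechanically, which keeps Round 1 and Round 2 comparable. A minimal sketch, assuming each attempt is logged with its duration, whether it was completed and how many moderator prompts it needed; the task keys, the `Attempt` fields and the sample attempts are hypothetical. Only Task 1 explicitly requires zero moderator prompts, so the sketch enforces that rule for Task 1 alone:

```python
from dataclasses import dataclass

# Hypothetical task keys -> (time limit in seconds, whether any moderator prompt fails the attempt).
# Time limits come from the test script; only Task 1 states "no moderator prompts".
CRITERIA = {
    "pay_semester_fee": (180, True),       # Task 1: within 3 minutes, no prompts
    "update_student_status": (90, False),  # Task 2: within 90 seconds
    "log_session_note": (90, False),       # Task 3: note saved + reminder set within 90 seconds
}


@dataclass
class Attempt:
    task: str
    seconds: float
    completed: bool  # for Task 3, True only if the note was saved AND the reminder was set
    moderator_prompts: int = 0


def is_success(attempt: Attempt) -> bool:
    limit_s, prompts_fail = CRITERIA[attempt.task]
    if not attempt.completed or attempt.seconds > limit_s:
        return False
    return not (prompts_fail and attempt.moderator_prompts > 0)


def success_rate(attempts: list[Attempt]) -> float:
    """Share of attempts that met their task's success criteria."""
    return sum(is_success(a) for a in attempts) / len(attempts)


# Hypothetical session log, not study data.
attempts = [
    Attempt("pay_semester_fee", 150, True),
    Attempt("pay_semester_fee", 140, True, moderator_prompts=1),  # fails: needed a prompt
    Attempt("update_student_status", 75, True),
    Attempt("log_session_note", 120, True),                       # fails: over 90 s
]
print(f"Task success rate: {success_rate(attempts):.0%}")
```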

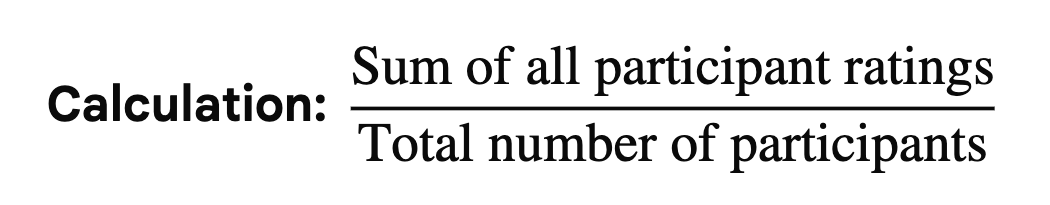

Post-Task Questions

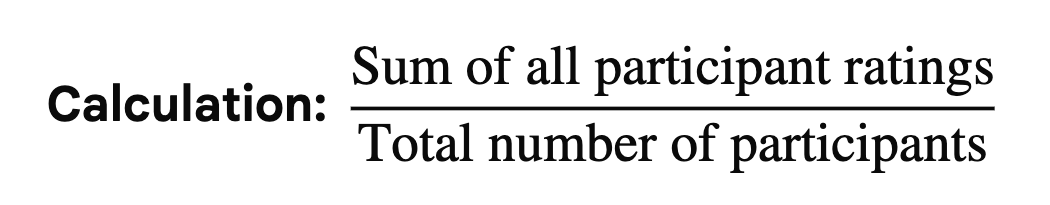

- How difficult was that task? Rate 1 (very easy) to 5 (very hard).

- Was there anything that surprised you?

- What did you expect to happen after that action?

- If you could change one thing about that flow, what would it be?

Exit Interview Questions

- Overall, how does this compare to the tools you currently use?

- What is the single most important thing this platform does for you?

- What would stop you from using this daily?

/ 1.8 Study Schedule

2022–2023

Week 1–2

Week 3–4

Week 5–6

Week 7–8

Week 9–10

Week 11–12

Week 13–14

Week 15–16

Week 17–18

Stakeholder interviews · Research plan finalisation · Participant recruitment

User interviews: Students (5 sessions) · Admins (3 sessions)

User interviews: Faculty (3 sessions)

Card sorting

Competitive analysis

Affinity mapping · JTBD framework · Persona development · Journey mapping

Round 1 usability testing: 5 participants

Design iteration based on Round 1 findings

Round 2 usability testing · Heuristic evaluation

Wireframing · Lo-fi to mid-fi prototype (35 screens)

Hi-fi UI · Design system (30 components) · Figma Dev Mode handoff

A/B testing · HEART measurement · Post-launch analytics · Continuous iteration

/ 2.1 Stakeholder Interviews

Before talking to any end users, we ran alignment sessions with the Product Manager and two leads from Admissions and Finance. The goal was not to gather requirements - it was to understand the business context, the constraints and how each stakeholder defined success.

60 min

Product Manager

Business goals, success definition, scope constraints

45 min

Admin Lead

Current NPF → CRM workflow, daily pain points, time estimates

45 min

Finance

Payment tracking, reconciliation process, error frequency

Key Tensions Surfaced

- Leadership believed the primary problem was "students not completing payments" - research revealed it was a trust design problem, not a student behaviour problem

- Admins underreported their daily manual effort in initial interviews (said "1-2 hours") - contextual observation revealed the real number was 3-5 hours

- No stakeholder had mapped the full 8-step journey end-to-end before this project - it had never been documented in one place

/ 2.2 Competitive Analysis

We mapped the tools the team was actually using - NPF for admissions tracking and Pinelab (alongside Flywire and Blackbaud as market alternatives) for payments - against what the team actually needed. The gap became the design brief.

Unique Value Proposition

What makes this company unique?

Company Advantages

What are the things that provide a leg up?

Company Disadvantages

Where might drawbacks exist?

NPF

- A lead tracking platform that helps institutions manage student applications and financial processes.

- Primarily a lead management system, helping institutions handle student inquiries, applications and enrolment.

- Focuses on CRM-style automation for student recruitment and communication.

- Handles lead tracking and applicant approvals.

- May include basic financial tracking for student payments.

- Automates admissions and lead funnel management with CRM-style tracking.

- Provides real-time applicant status updates for universities.

- Integrates AI-driven analytics for student recruitment trends.

- Reduces manual follow-ups by automating communication workflows.

- Limited admin-side financial tracking (refunds, reports and approvals).

- Lacks post-admission features, making it only useful for lead management.

- No fee tracking, refund approvals, or payment integration.

- Requires third-party integrations for financial & engagement tools.

Flywire

- A secure global tuition payment platform that simplifies university transactions, loan processing and refunds.

- A global education payment solution, allowing universities to accept international and domestic tuition payments securely.

- Focuses on fraud prevention and currency conversion for global students.

- Provides secure fee payments & refunds.

- Supports loan approvals and payment verification.

- Supports multi-currency transactions with localized payment methods.

- Offers secure, institution-approved fee collection with built-in fraud detection.

- Provides detailed financial reporting dashboards for universities.

- Ensures compliance with financial regulations in different regions.

- No lead tracking or interview process.

- No student engagement features (meetings, clubs, help desk, etc.).

- Not an internal tool; relies on external payment providers.


Apparent Differences

What are the differences between the products?

NPF automates communication & recruitment, but Flywire automates tuition processing & compliance.

NPF focuses on pre-admission (lead tracking, CRM) while Flywire focuses on post-admission (fee payment, financial tracking).

Global Fee Payments → Flywire is the only platform with a strong global payment system.

Lead Tracking & Admissions → Only NPF focuses on lead management, while Flywire and Blackbaud do not.

Similar Capabilities

What do all the companies have in common?

Fee & Payment Tracking → All platforms (NPF, Flywire, Blackbaud) provide financial transaction management.

Basic Student Data Management → Most competitors offer some form of student tracking (admissions, fee details, or loan approvals).

Secure Data Handling → Both platforms follow data security & compliance regulations for handling student information.

Administrative Support → Platforms allow admins to monitor payments, refunds and approvals.

CRM & Communication Automation → NPF and Flywire both help institutions communicate with students.

Custom API Integrations → Both offer API-based solutions, allowing institutions to connect their existing tools.

Key Learnings

What can we learn from this process?

High Dependence on External Integrations → Both competitors require third-party add-ons to handle a full student lifecycle.

There is no all-in-one solution → Competitors specialize in lead tracking, finance or student engagement, but not all three together.

Most platforms rely on third-party tools → Institutions often have to use multiple services to manage different aspects (NPF for leads, Flywire for payments, Blackbaud for academics).

Lack of an End-to-End Student Management Solution → No company combines admissions, financial tracking and student engagement in one tool.

Finance vs. Admissions Gap → Universities must manage payments and student services separately, leading to inefficiencies.

Admin workflows are still highly manual → Even Blackbaud (which offers reporting) lacks automation for student feedback, counselor interactions and engagement tracking.

Opportunities

Where can we progress or create value?

Centralized Platform → Develop a single internal tool that integrates lead tracking, finance, interview tracking and student engagement.

Automation & Smart Workflows → Streamline admin operations by reducing manual approval processes and real-time tracking of applications, finances and reports.

Holistic Student Experience → Provide a student-friendly interface where they can track interviews, fees, counselor meetings and participation in clubs/events, all in one place.

All-in-One University Lifecycle Management → Instead of just lead tracking (NPF) or payments (Flywire), an internal tool can streamline everything from admissions to student engagement.

Integrated Workflow Optimization → Reduce manual approvals and fragmented processes by connecting admissions, finance and engagement into one structured platform.

Automated Student-Centric Platform → Unlike Flywire, which only supports payments, a platform can include counselor feedback, interview tracking and real-time student interaction features.

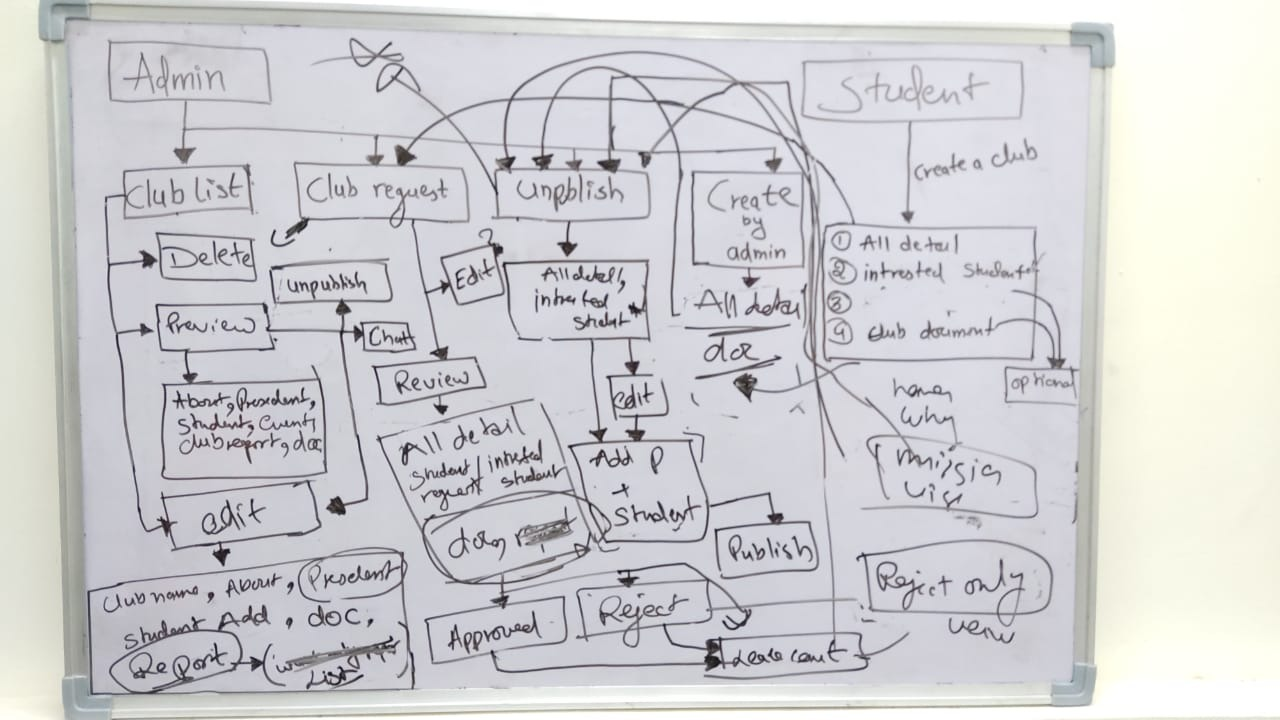

/ 2.3 Card Sorting

Information Architecture Discovery

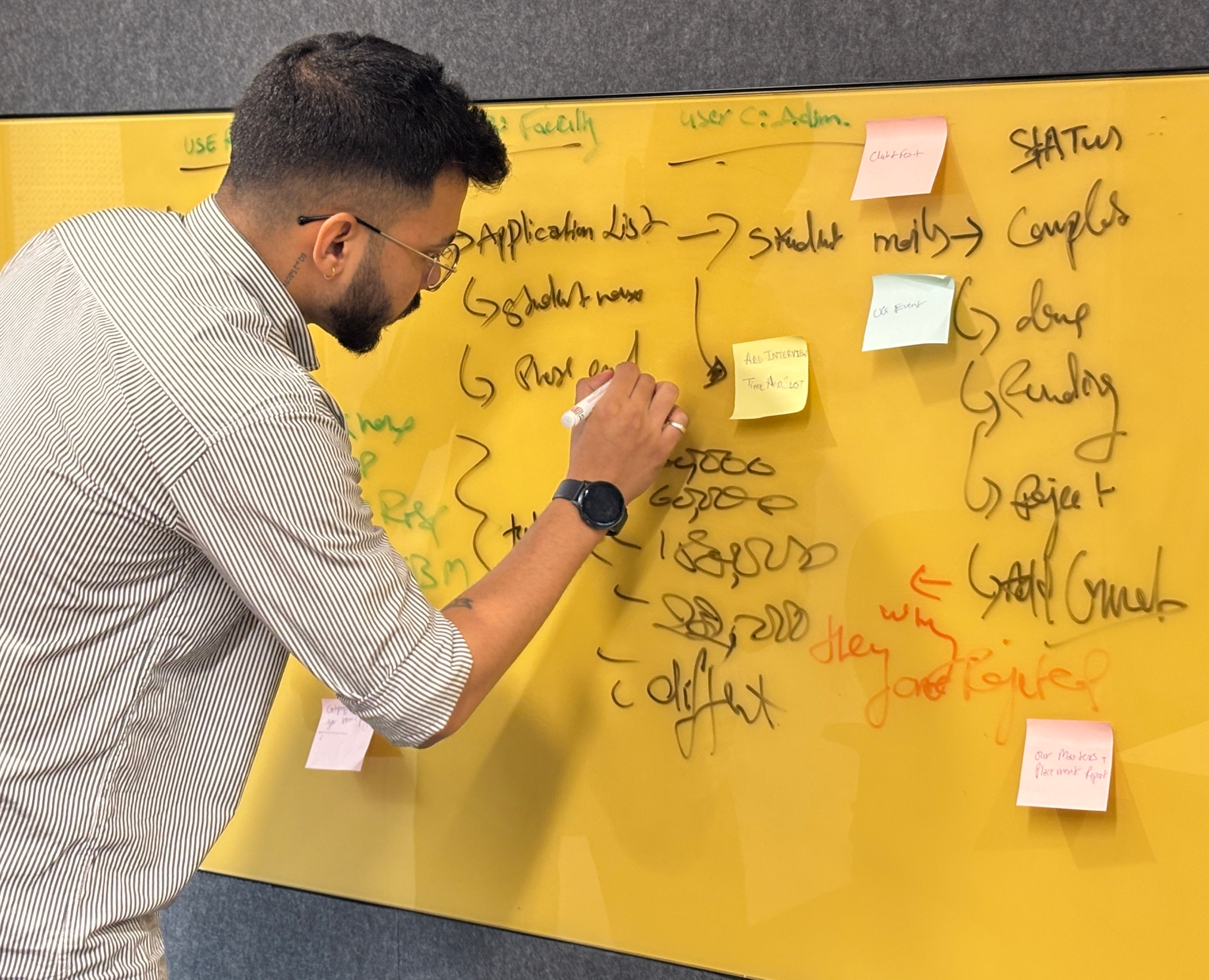

Before sketching a single screen, we ran open card sorting with all 11 participants to let users define the information architecture. We weren’t going to impose a structure - we were going to discover the one that already existed in users’ minds.
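A common way to analyse open card sorts (not necessarily the analysis run for Dinero) is a pairwise co-occurrence score: for each pair of cards, the share of participants who put both cards in the same group. Pairs that most participants group together point to categories users already share. A minimal sketch; the card labels and the three sorts are hypothetical:

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts: list[list[set[str]]]) -> dict[tuple[str, str], float]:
    """For each card pair, the share of participants who placed both cards in the same group.

    Each participant's sort is a list of groups; every card is assumed to appear once per sort.
    """
    counts: Counter = Counter()
    for groups in sorts:
        for group in groups:
            # Sorting makes ("A", "B") and ("B", "A") count as the same pair
            for pair in combinations(sorted(group), 2):
                counts[pair] += 1
    return {pair: n / len(sorts) for pair, n in counts.items()}


# Hypothetical open sorts from three participants (labels are illustrative).
sorts = [
    [{"Fee status", "Payment receipt"}, {"Interview stage", "Application status"}],
    [{"Fee status", "Payment receipt", "Application status"}, {"Interview stage"}],
    [{"Fee status", "Payment receipt"}, {"Interview stage", "Application status"}],
]

# Strongest agreements first
for (a, b), share in sorted(co_occurrence(sorts).items(), key=lambda kv: -kv[1]):
    print(f"{a} + {b}: {share:.0%}")
```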

/ 2.4 Affinity Mapping

KJ Method + Jobs to Be Done

After 11 interviews and 2 contextual observation sessions, we had 200+ individual data points. Every observation, quote and pain point went onto its own sticky note. Then we grouped. The clusters don't come from analysis - they emerge from the grouping. That's the KJ method.

Cluster

Representative Quote

Notes

Fragmentation Fatigue

“I use 4 tabs before 9am - NPF, Pinelab, Excel, email”

48 notes

Visibility Anxiety

“I check email every hour just to know where I stand”

41 notes

Payment Trust Deficit

“The link looks fake. What if it’s phishing?”

26 notes

Admin Info Overload

“I’m firefighting every morning before I do any real work”

41 notes

Counselor Invisibility

“My session notes live in my personal email drafts”

32 notes

Jobs to Be Done

JTBD moves the conversation away from features and toward motivation. Instead of “what do users want?”, you ask: what job are they hiring this product to do?

User

When I…

I want to…

So I can…

STUDENT

Check my admission status

See it instantly - no emails

Stop anxiously checking my inbox

STUDENT

Pay my semester fee

Complete it inside one trusted platform

Have proof and peace of mind

ADMIN

Start my workday

See every pending action in one view

Stop opening NPF, Pinelab and Excel

ADMIN

Update a student status

Do it in one click with confirmation

Move to the next task immediately

FACULTY

Finish a counseling session

Log notes right there in the platform

Not lose context or forget follow-ups

/ 2.5 User Personas - 3 Research-Backed Roles

These personas were built directly from interview data - not from assumptions. Every goal, pain point and JTBD below was mentioned by at least 2 of the participants in that role group.

Aditya Verma

MBA Student - Age: 22 | Location: Pune, India

Aditya is a driven MBA student focused on securing a great job post-graduation, but he finds the admission and financial process confusing. He struggles to track interview progress, fee payments and counselor feedback - often missing important updates. He prefers digital solutions but gets frustrated when he has to check multiple platforms. He wants one place where everything just works.

Goals

→

Track interview progress and feedback in real time - no more waiting for email updates

→

Pay fees quickly without worrying about third-party links or whether payment went through

→

Access counselor reports and meeting history in one place

→

Join clubs and events without long approval processes or scattered notifications

Pain Points

×

Confusing interview process - unclear next steps, no visibility into stage

×

Third-party payment issues - external link felt untrustworthy, no receipt

×

Scattered feedback from counselors - difficult to access reports from previous sessions

HEARS

→

You need to pay your fees via this third-party link.

→

Your interview status will be updated soon.

→

You missed the deadline for your counselor feedback session.

SEES

Multiple emails from admissions with unclear instructions. Screenshots and spreadsheets to track interview progress. Other students discussing the process in WhatsApp groups.

SAYS & DOES

→

Where do I check my interview status?

→

Did my payment go through? How do I get a receipt?

→

Asks peers for updates. Logs into multiple platforms.

THINKS & FEELS

→

I wish there was one place to track everything.

→

Why do I need to use third-party payment links?

→

Am I missing important deadlines?

Priya Sharma

Admissions & Finance Administrator - Age: 42 | Location: New Delhi, India

Priya is responsible for managing student admissions, financial records and interview tracking. She works with multiple tools daily to approve applications, verify transactions and track refund requests. The lack of a centralized system makes her job exhausting - she handles 2-4 data discrepancy errors daily across NPF, Pinelab, Excel and email.

Goals

→

Streamline the admission approval process to reduce manual work

→

Get real-time insights into student finances, transactions and interview status

→

Automate fee processing and refunds to avoid delays and student complaints

→

Ensure data accuracy in reports without switching between multiple platforms

Pain Points

×

Too many manual approvals - time-consuming and error-prone

×

Scattered data across NPF, Pinelab, Excel and email - hard to get a complete student picture

×

Delayed fee verification and refund processing - leading to daily student complaints

×

No single dashboard for managing student interactions across all touchpoints

HEARS

→

We need to approve 50+ applications today

→

How many students have completed fee payments?

→

Students are complaining about missing payment confirmations

SEES

Multiple spreadsheets tracking student fee payments. Unorganized email requests for refund approvals. Confusion about fee statuses across different platforms.

SAYS & DOES

→

I need to check multiple systems to track student applications

→

Tracks counselor interactions separately from financial records. Compiles manual reports each morning.

THINKS & FEELS

→

There must be a better way to manage all of this.

→

I spend 4 hours a day just reconciling data.

→

Why can't I see a student's full journey in one place?

Dr. Rajeev Menon

Faculty & Student Counselor - Age: 40 | Location: Bangalore, India

Dr. Rajeev Menon has been mentoring students for over a decade, helping them navigate academic and career paths. Without a structured system, his feedback gets lost in emails. He struggles to track student engagement across counseling sessions and has no way to flag at-risk students early.

Goals

→

Provide timely and structured feedback to students after interviews and sessions

→

Have a centralized system to track student history, progress and counselor interactions

→

Improve student engagement in career mentorship and academic guidance

→

Reduce the need for manual note-keeping and fragmented communication

Pain Points

×

No centralized student tracking system - feedback often gets lost in email drafts

×

Manual documentation is inefficient and takes too much time between sessions

×

Hard to ensure students are following up on counseling sessions and interview feedback

HEARS

→

Can you check in on this student? I think they're struggling

→

Your session notes weren't attached to the student record

→

A student from last month missed their follow-up.

SEES

SAYS & DOES

→

My session notes live in my professional email drafts.

→

I have to reconstruct context from memory each time

→

Manually tracks follow-ups in a personal notebook.

THINKS & FEELS

→

I'm losing context between sessions - I can't be an effective mentor this way.

→

I want to flag at-risk students but there's no channel

→

This should all be in one place.

/ 2.6 Experience & Journey Mapping

We mapped the emotional arc of the full 8-step journey for each role - not just the tasks, but how users felt at each stage. This is where the design priorities became undeniable.

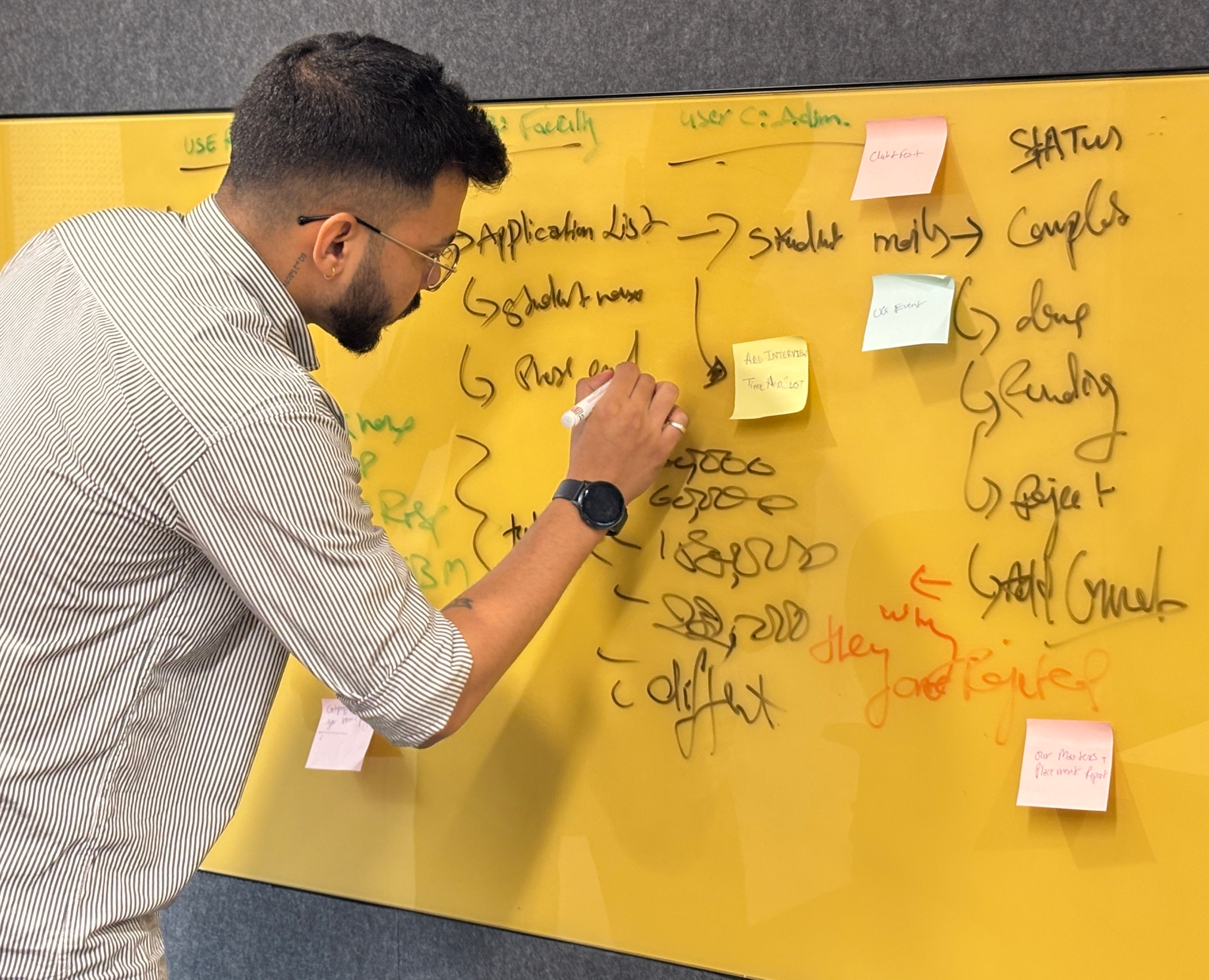

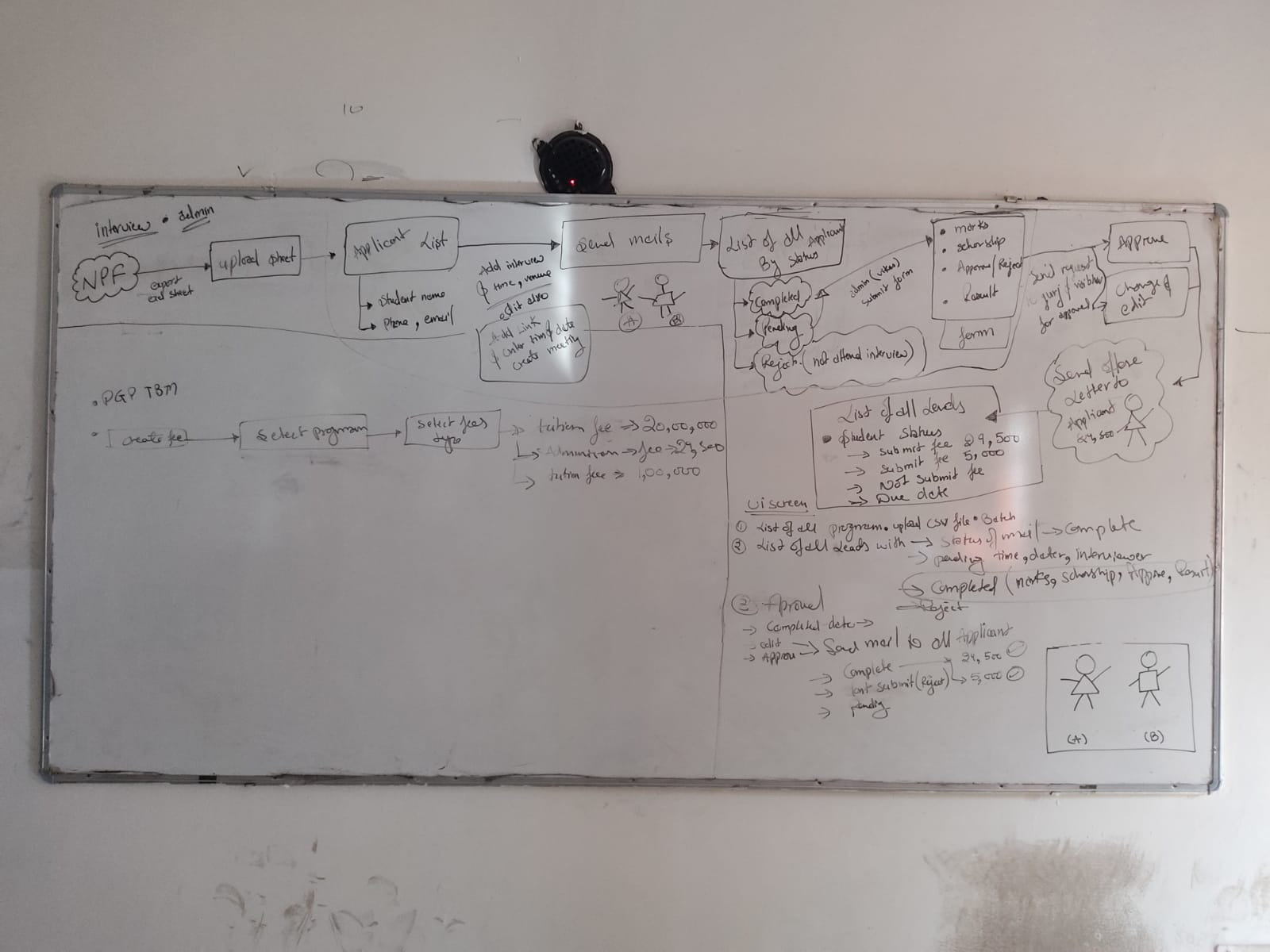

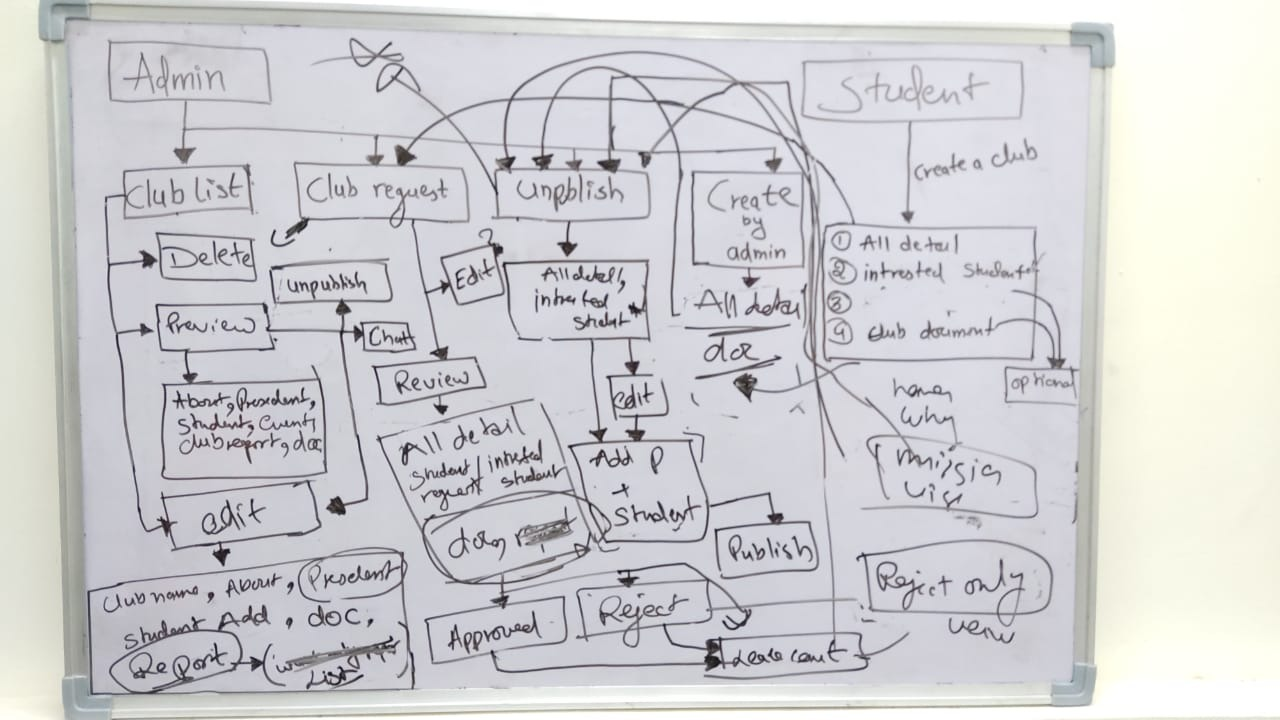

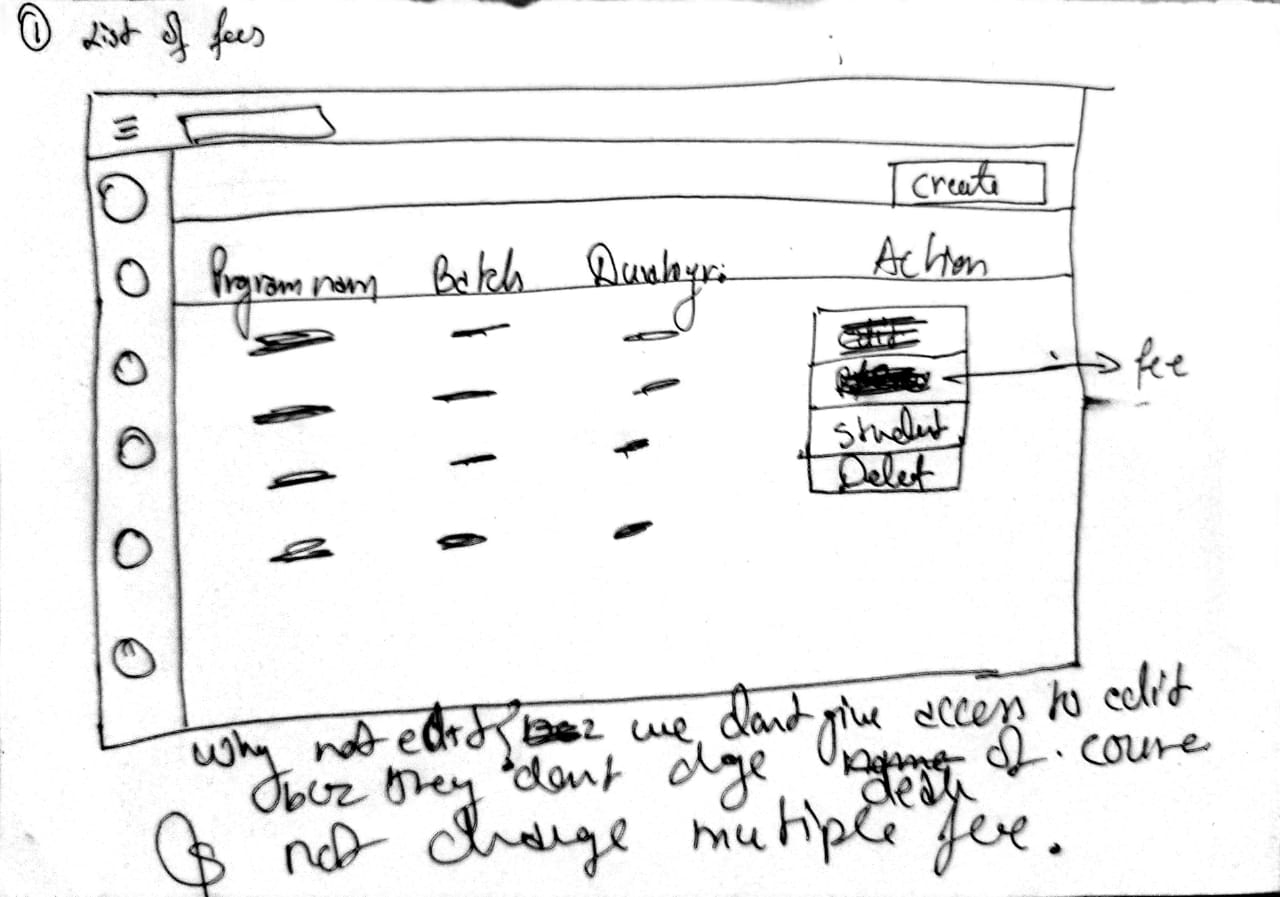

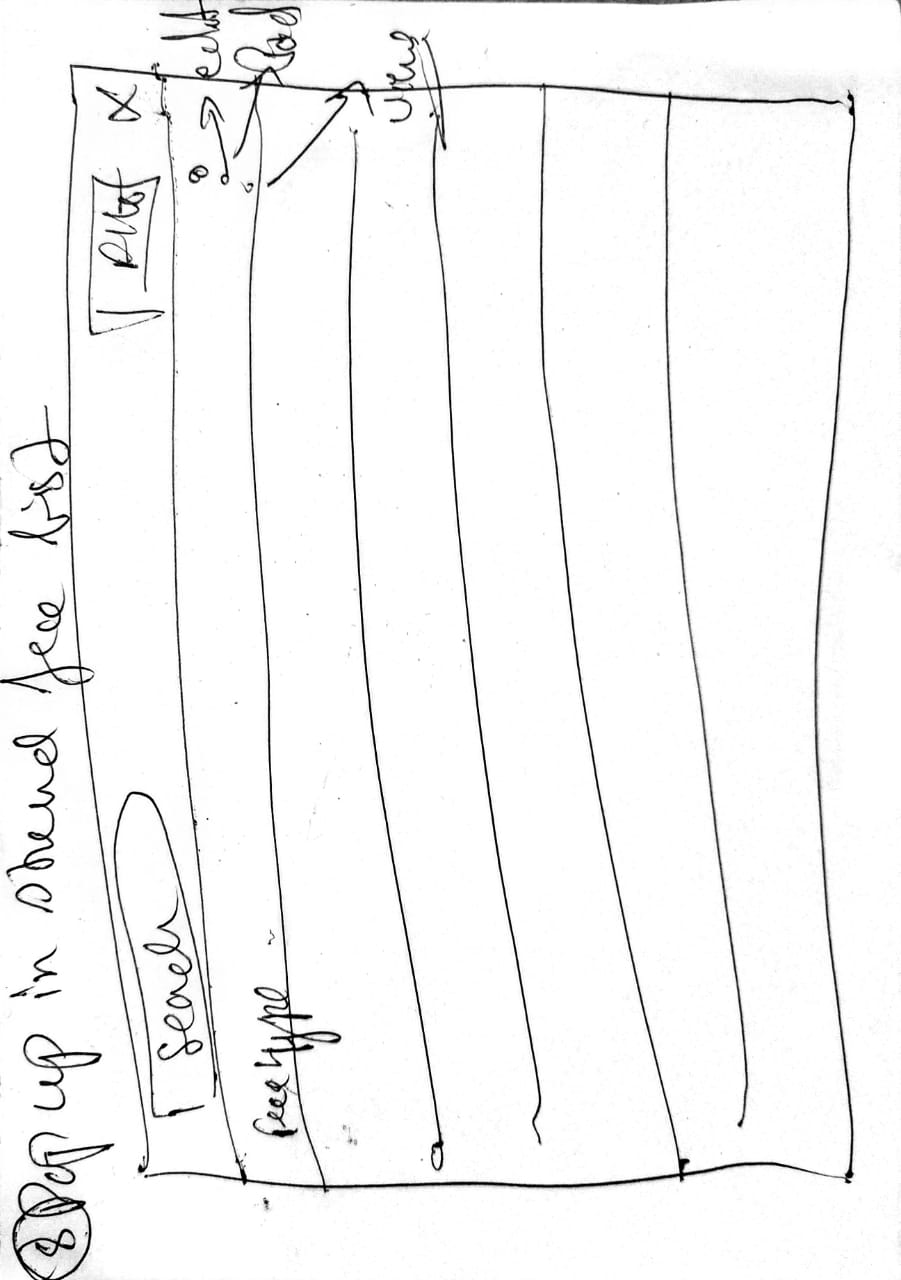

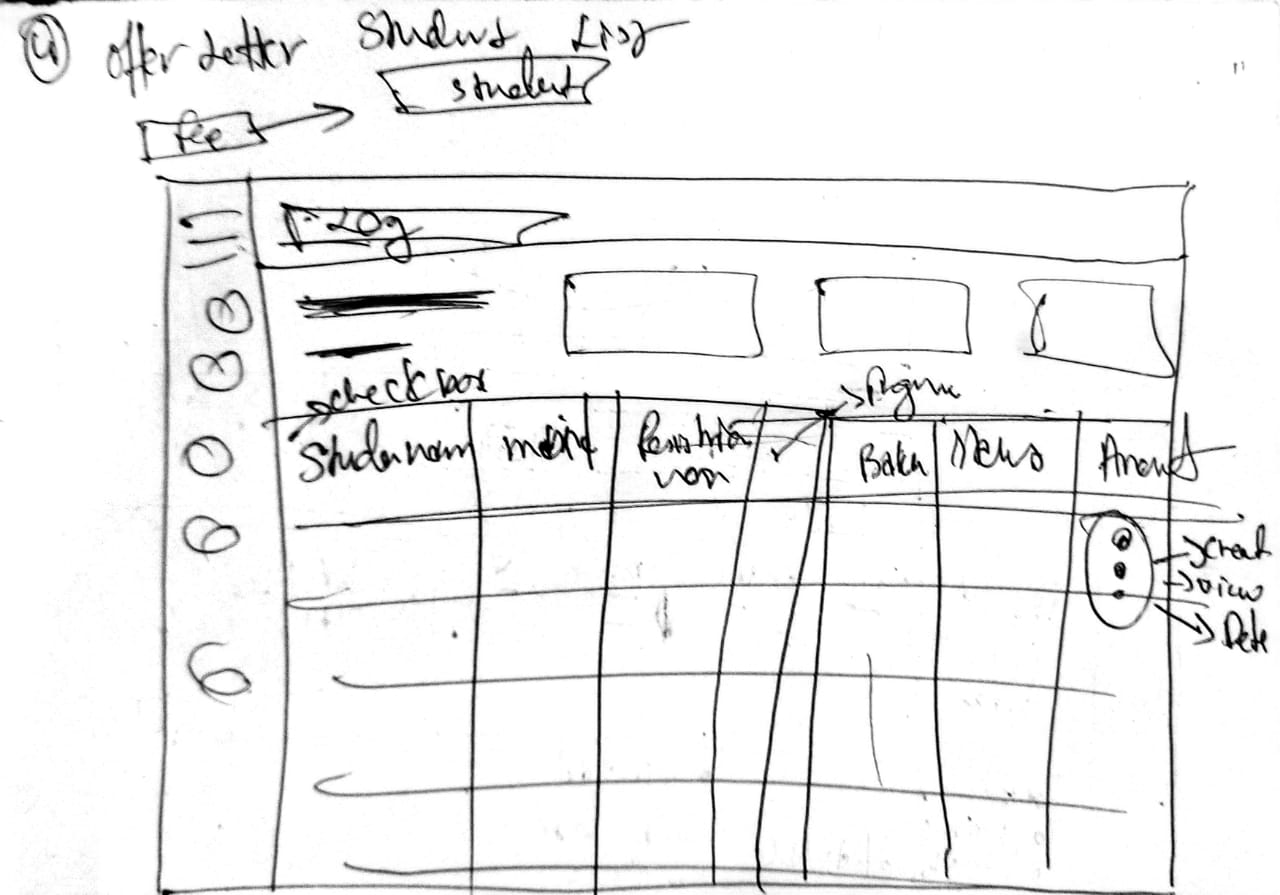

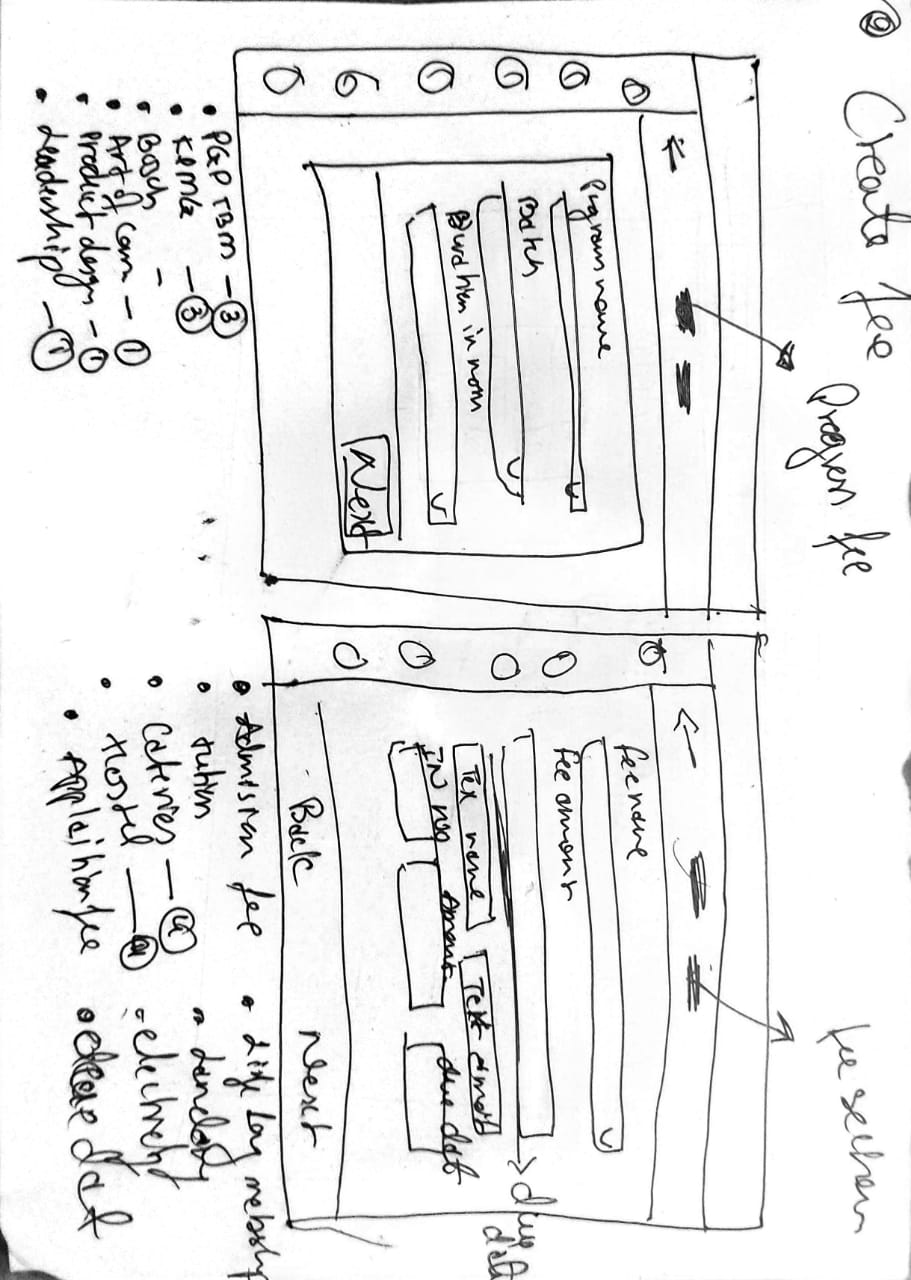

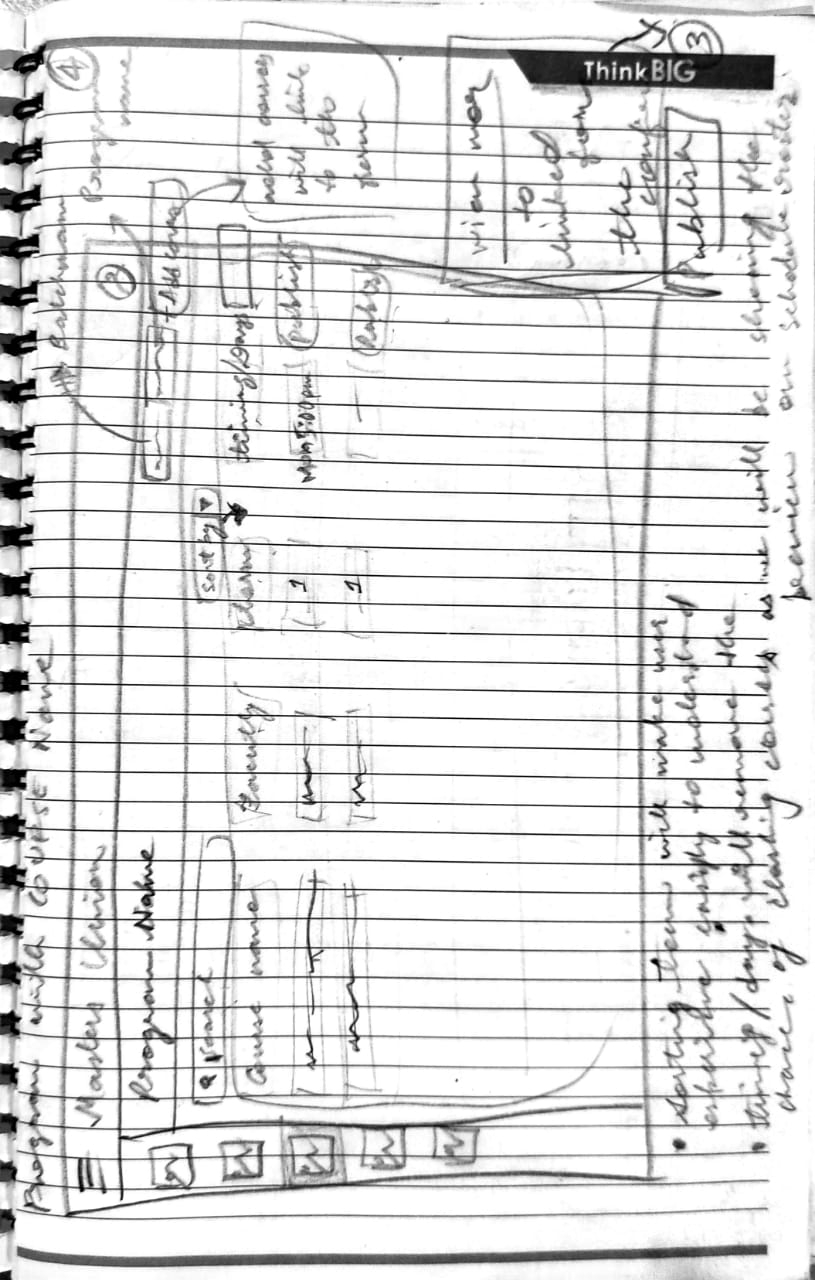

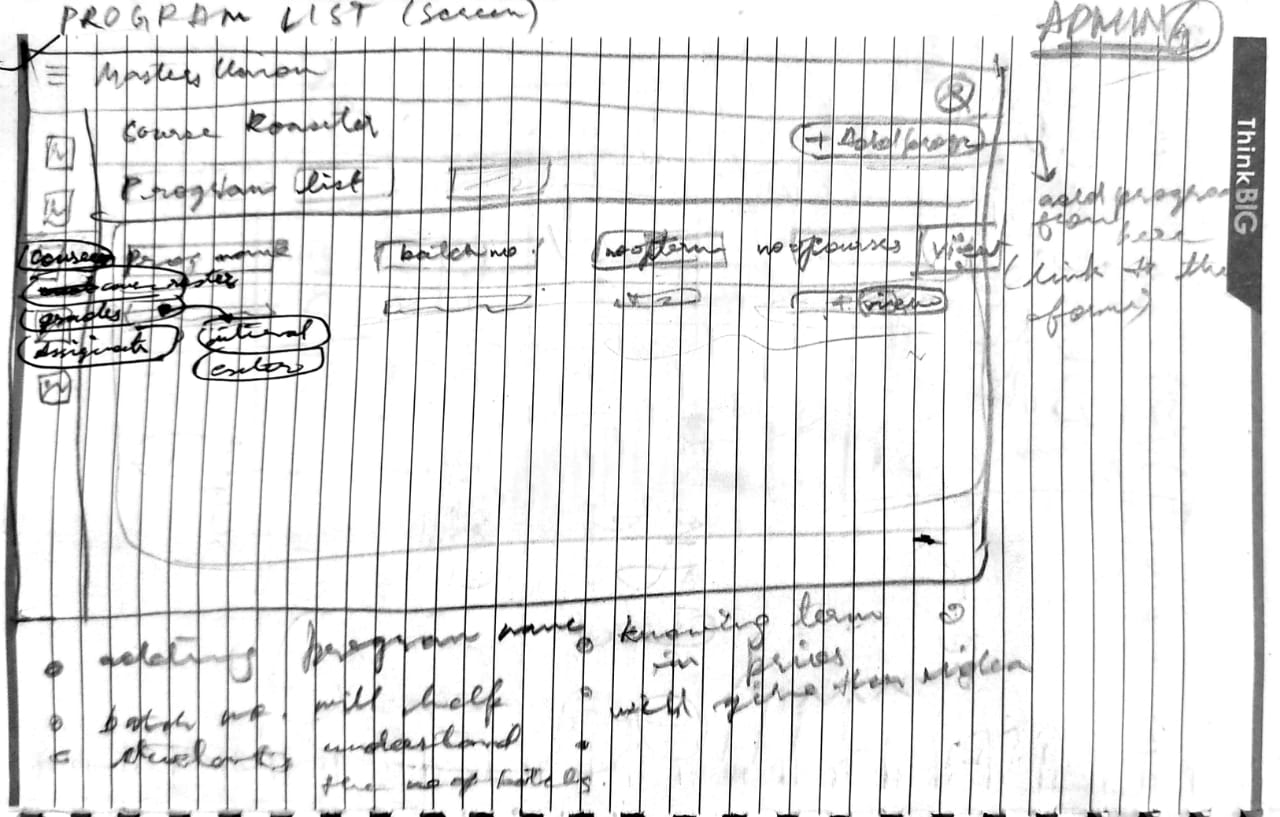

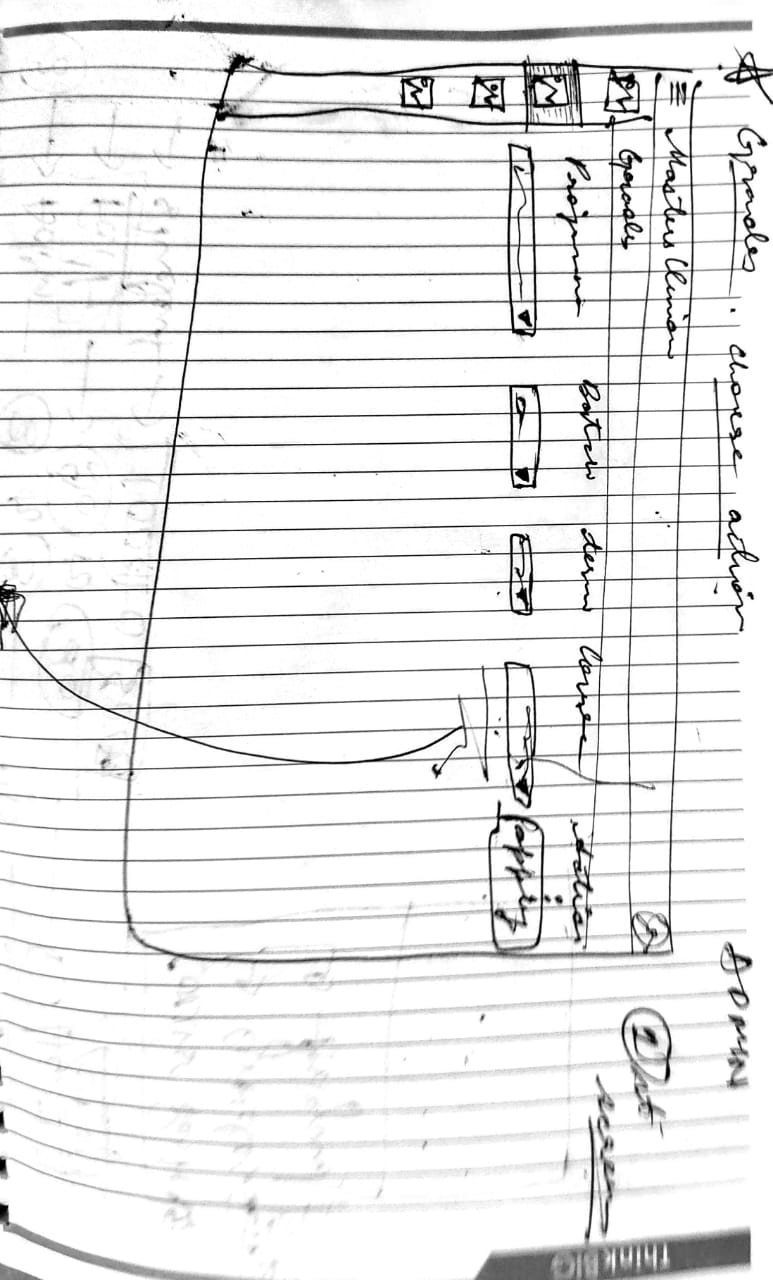

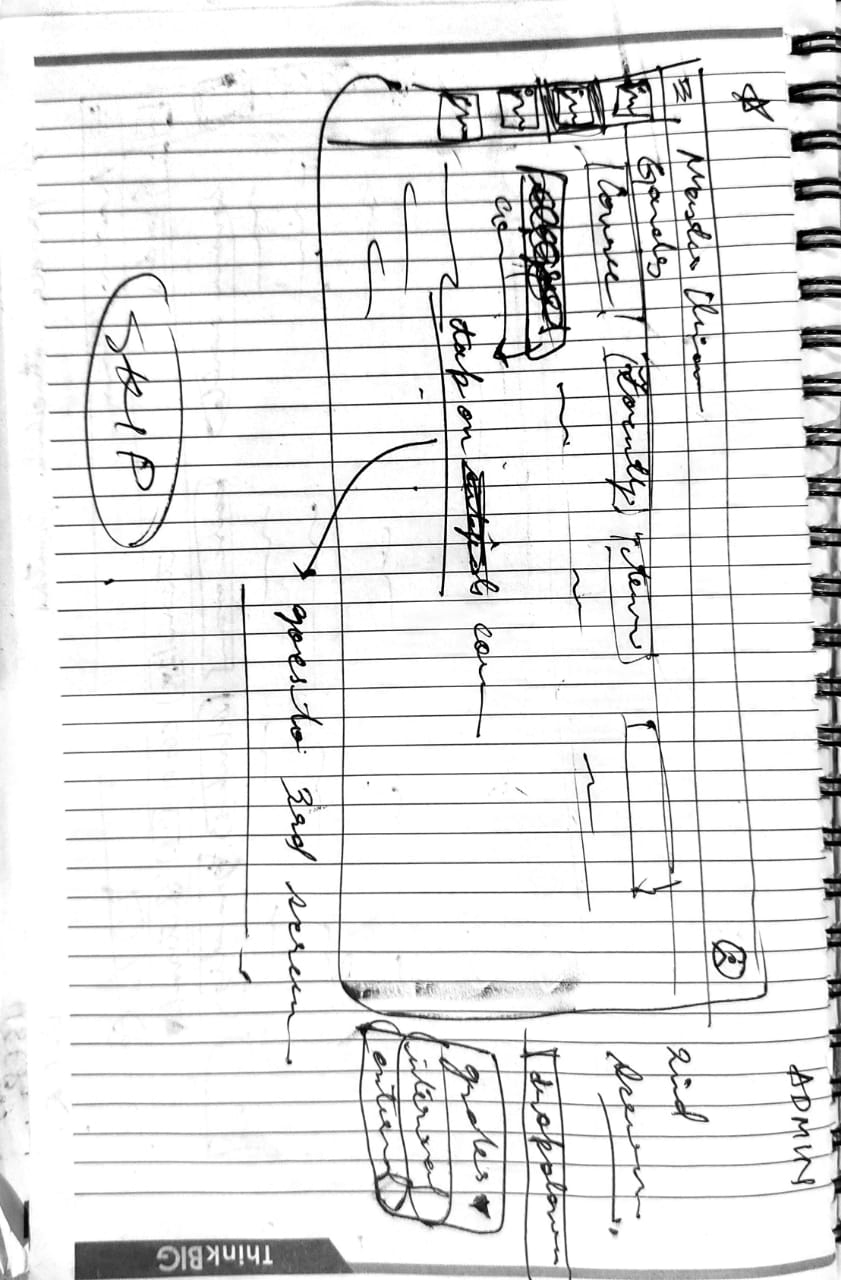

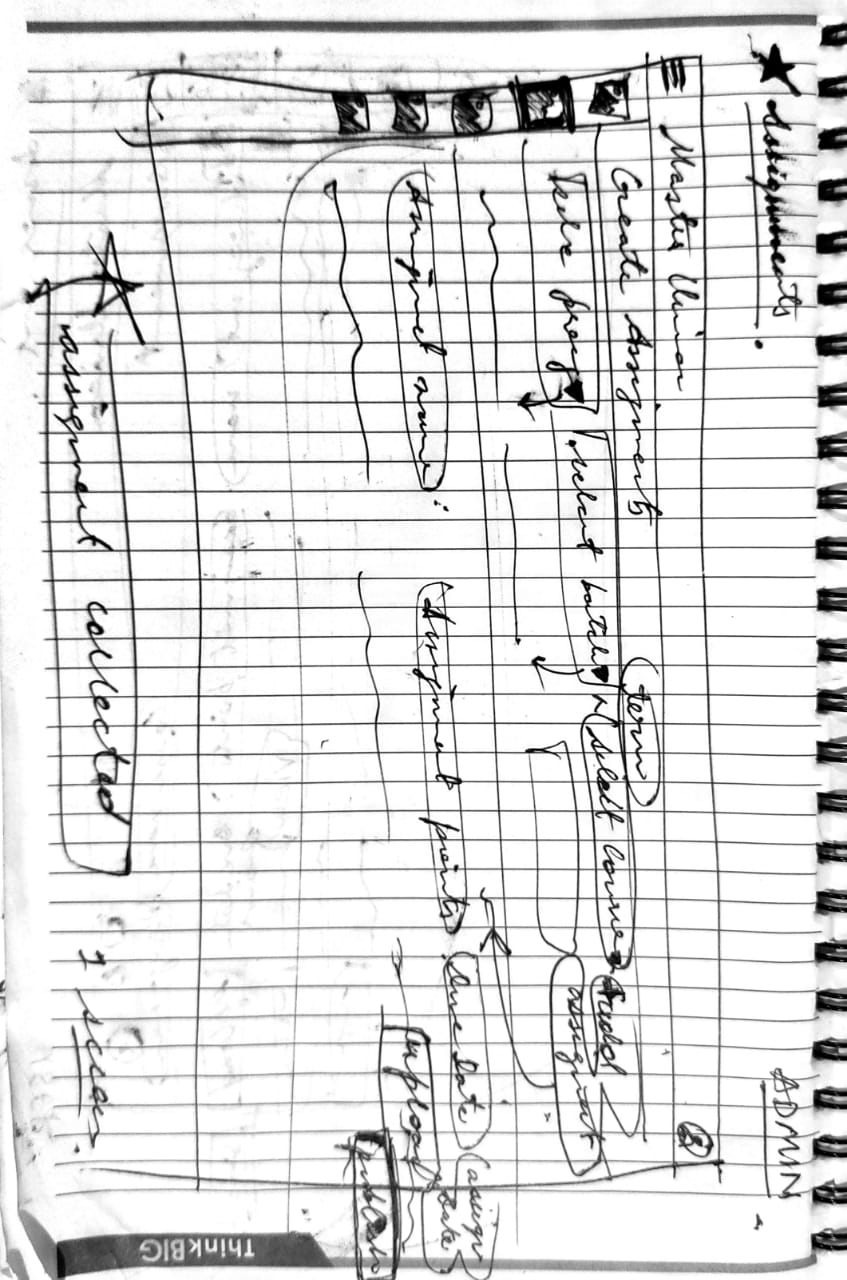

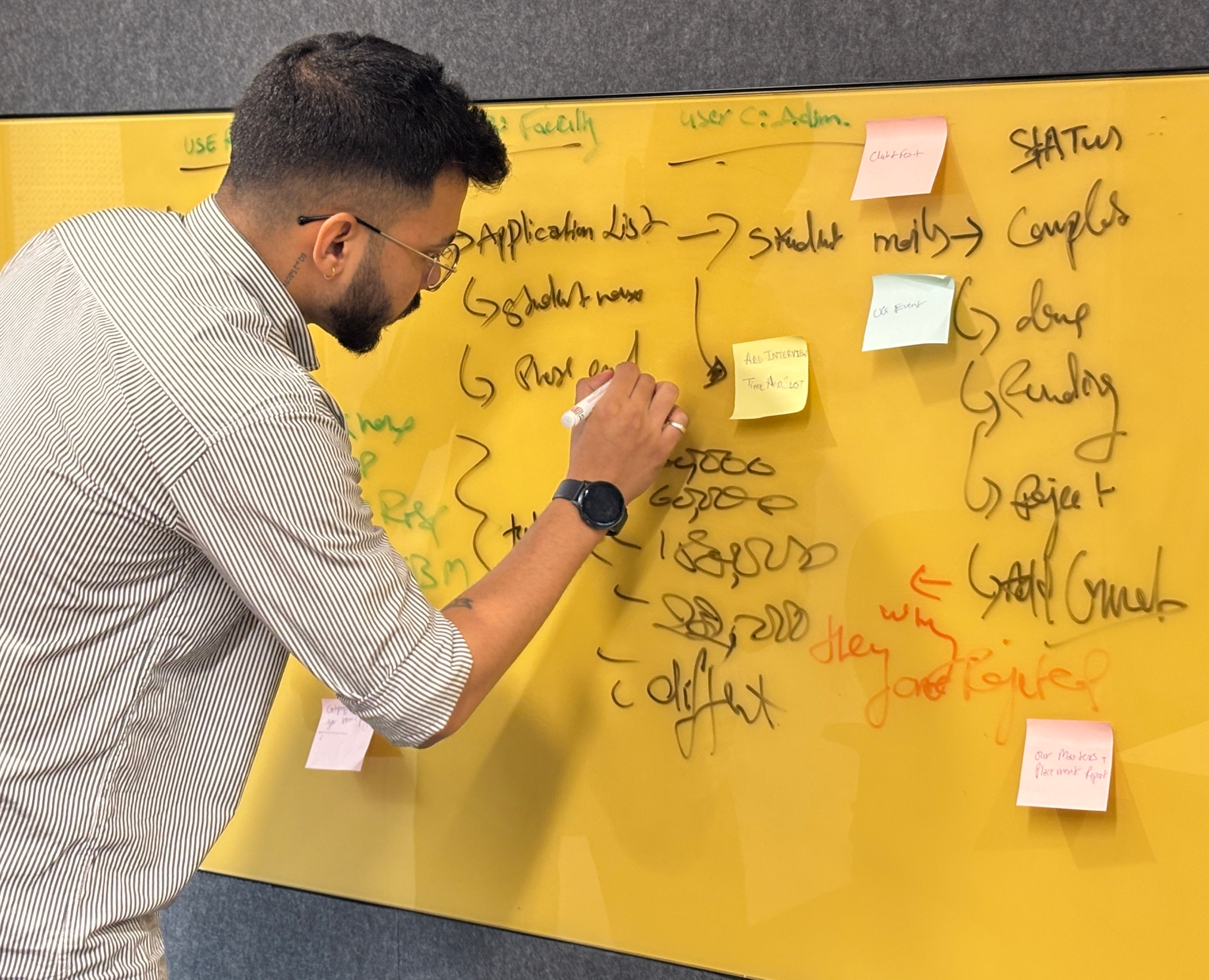

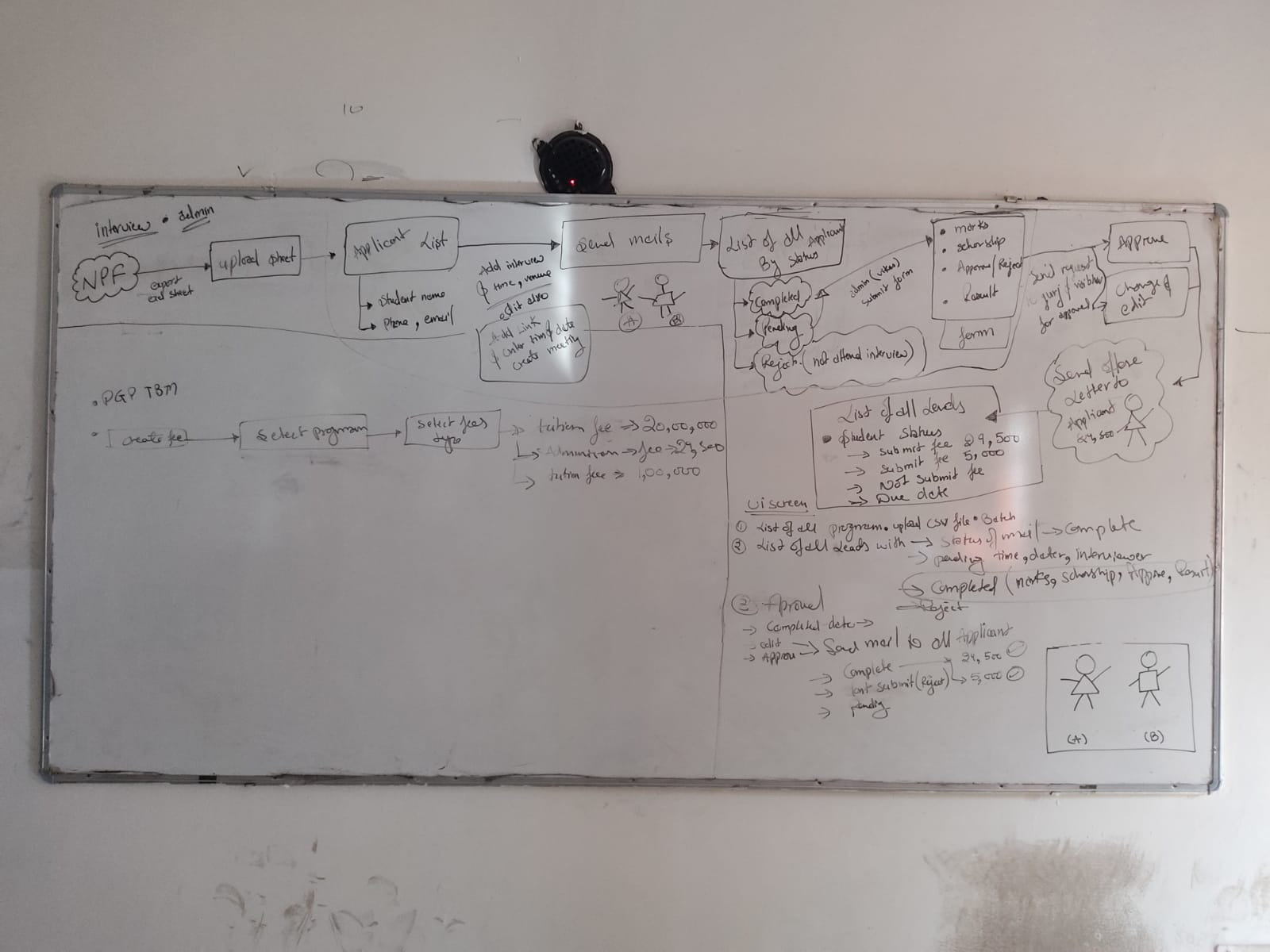

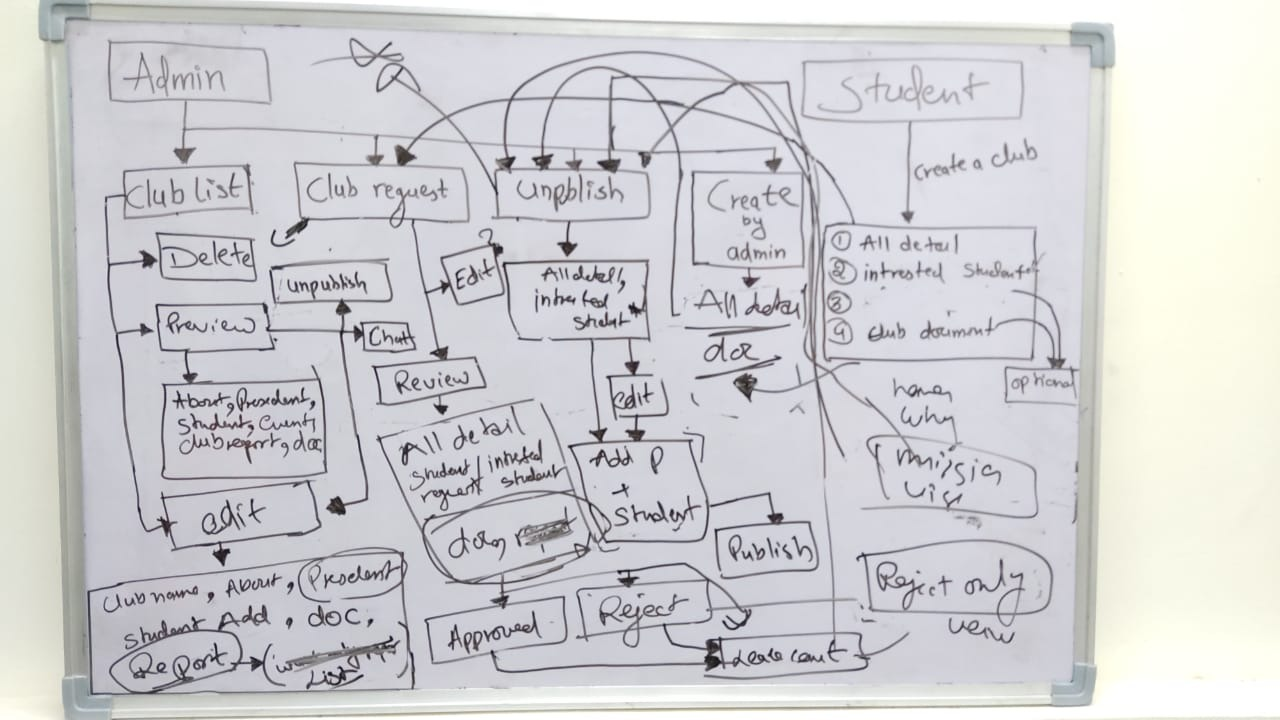

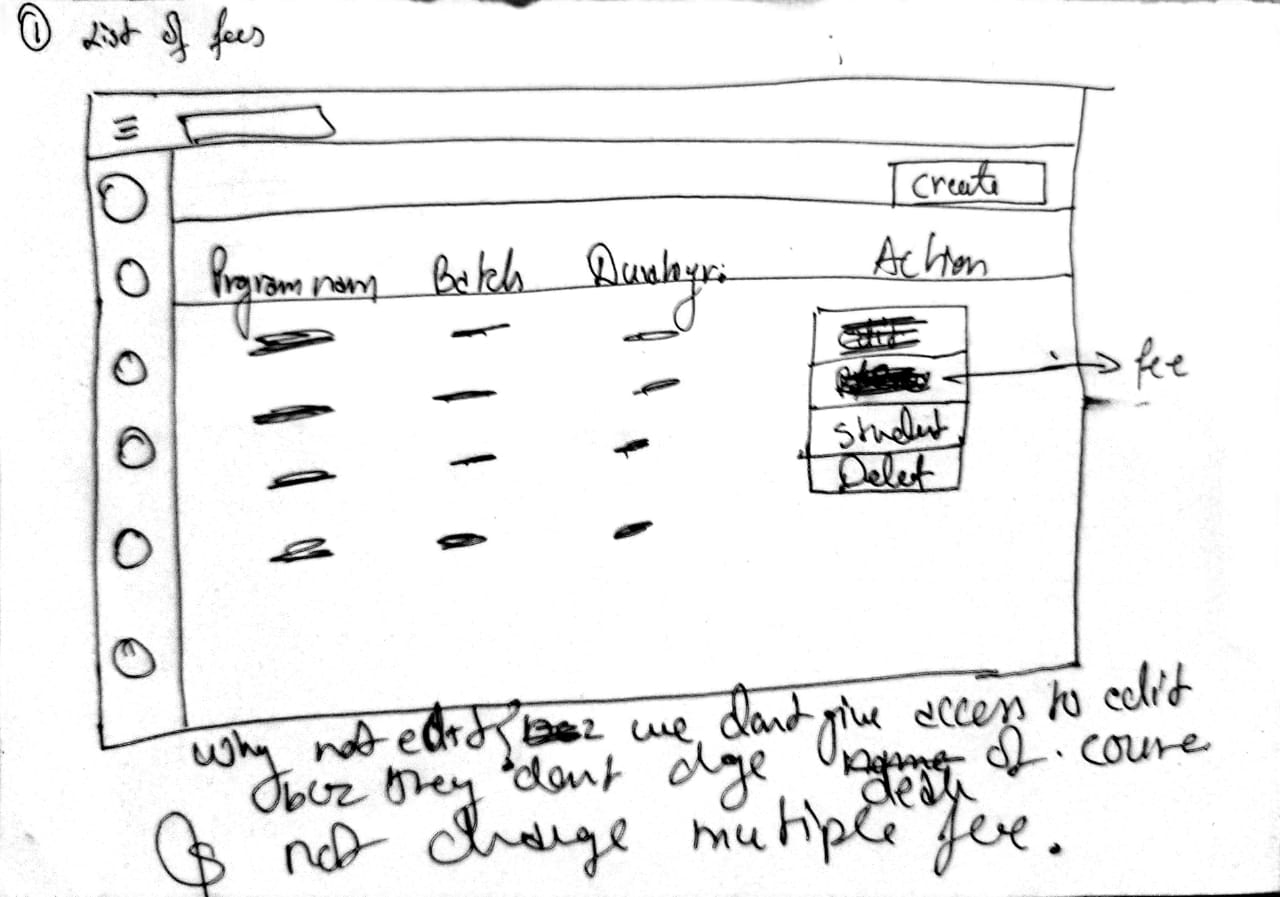

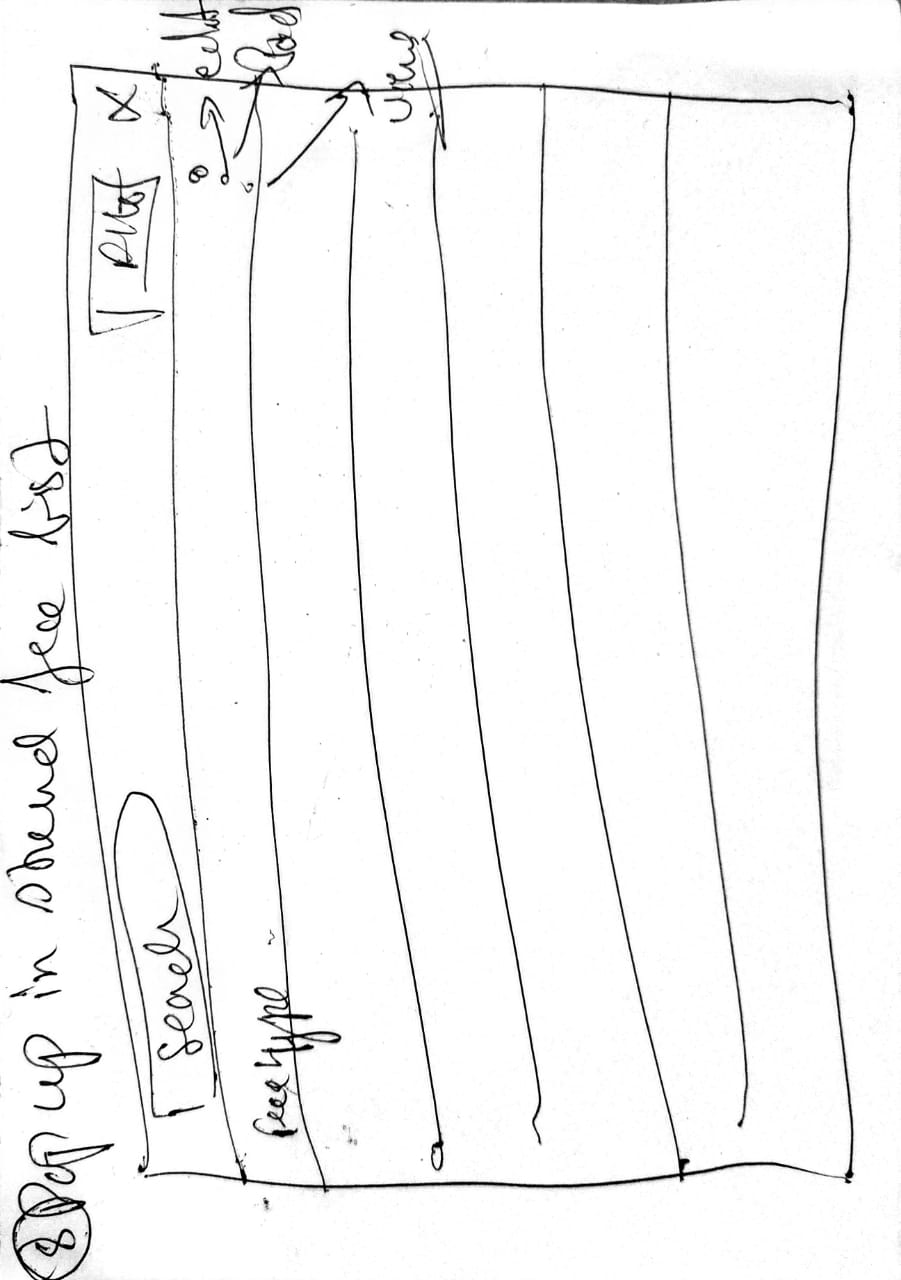

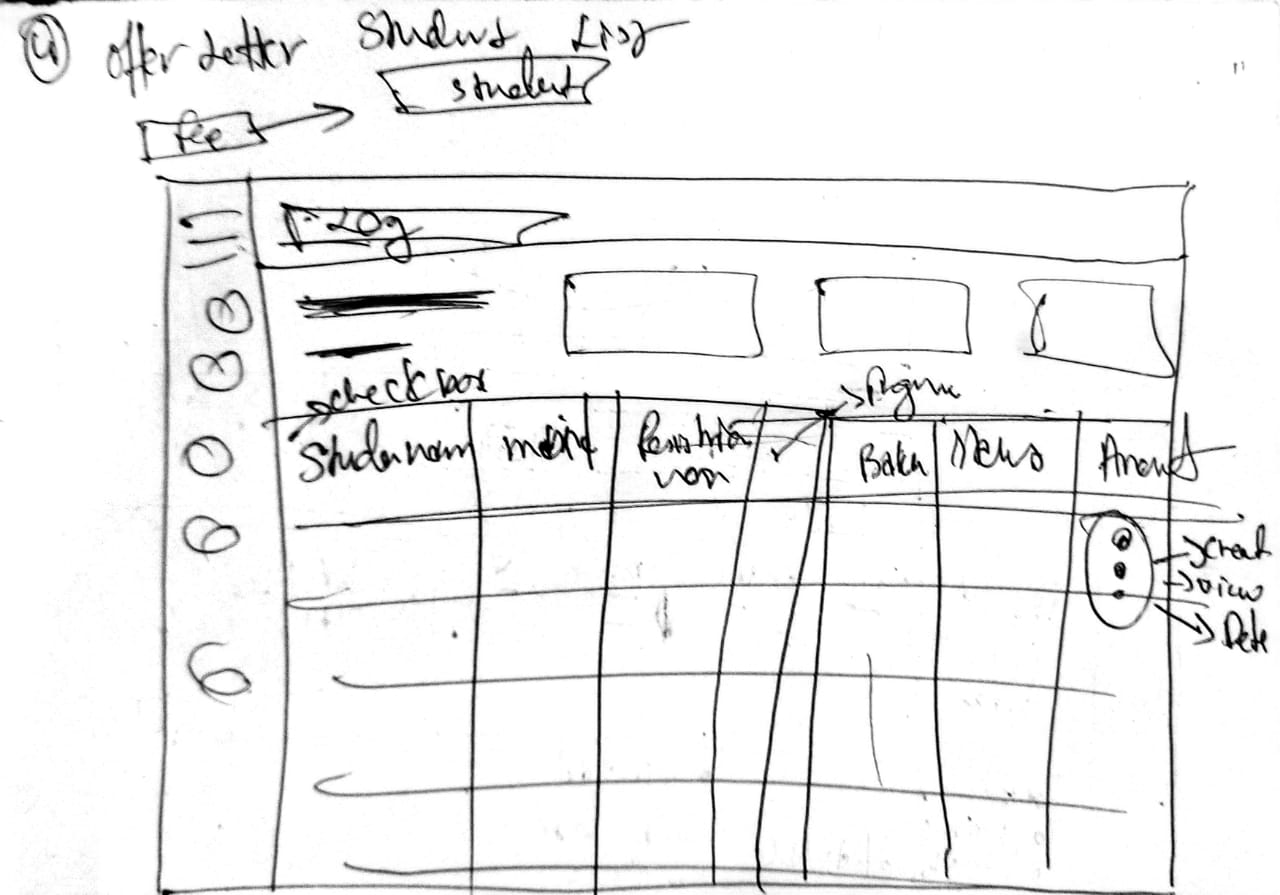

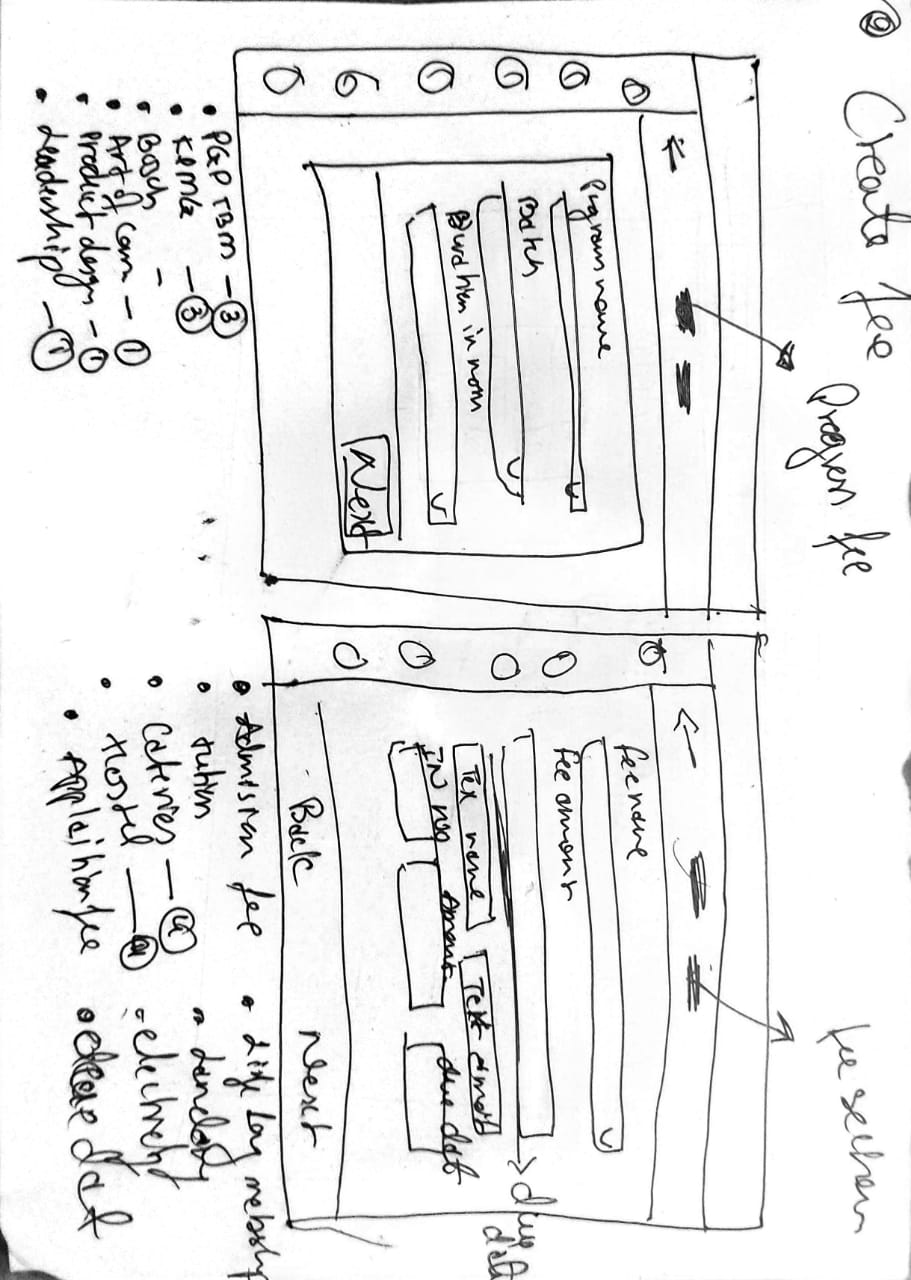

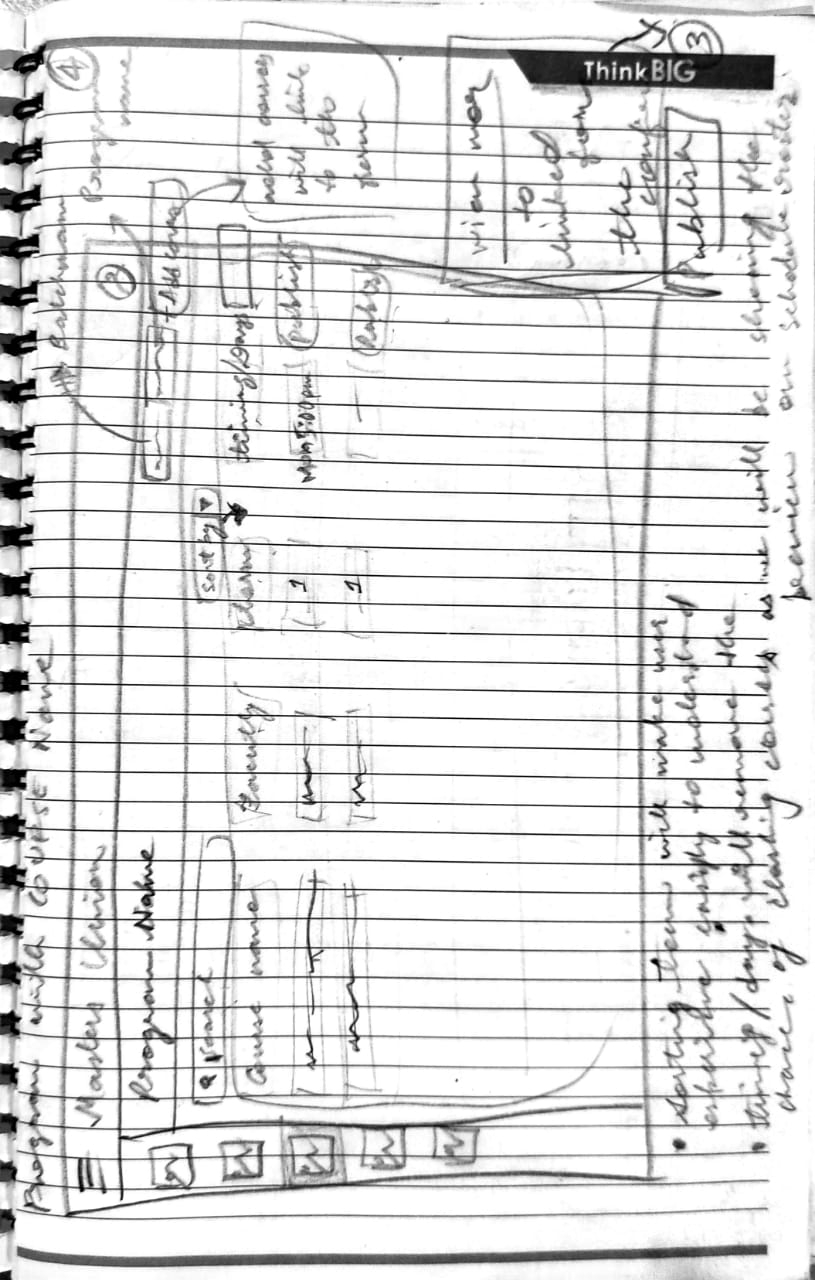

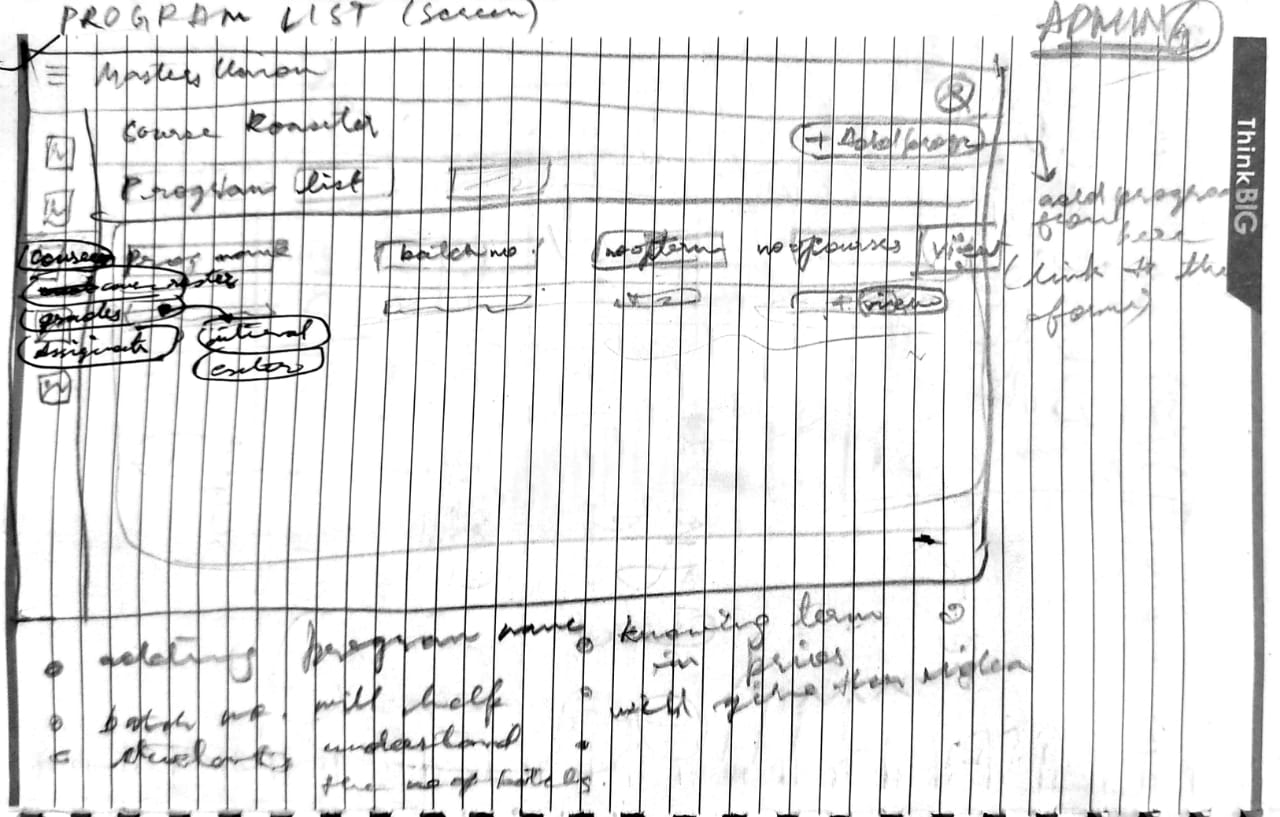

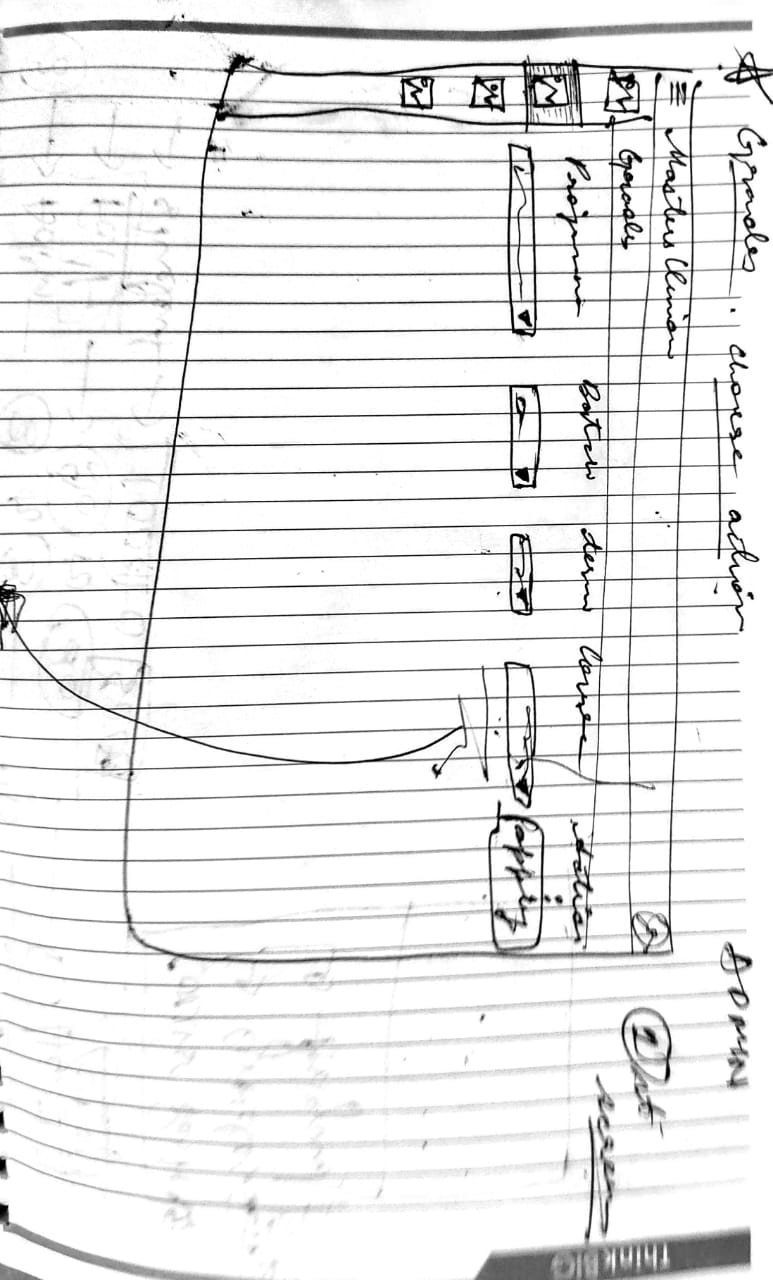

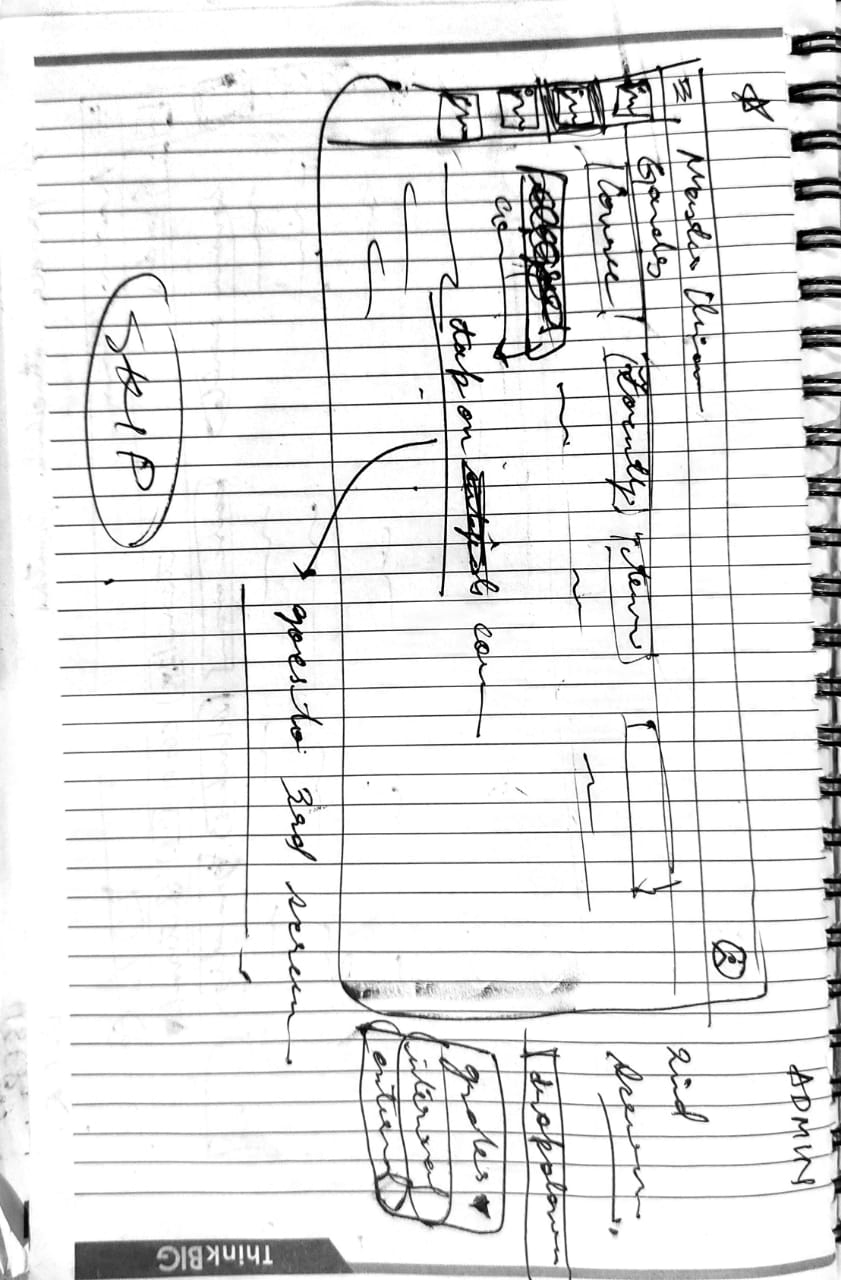

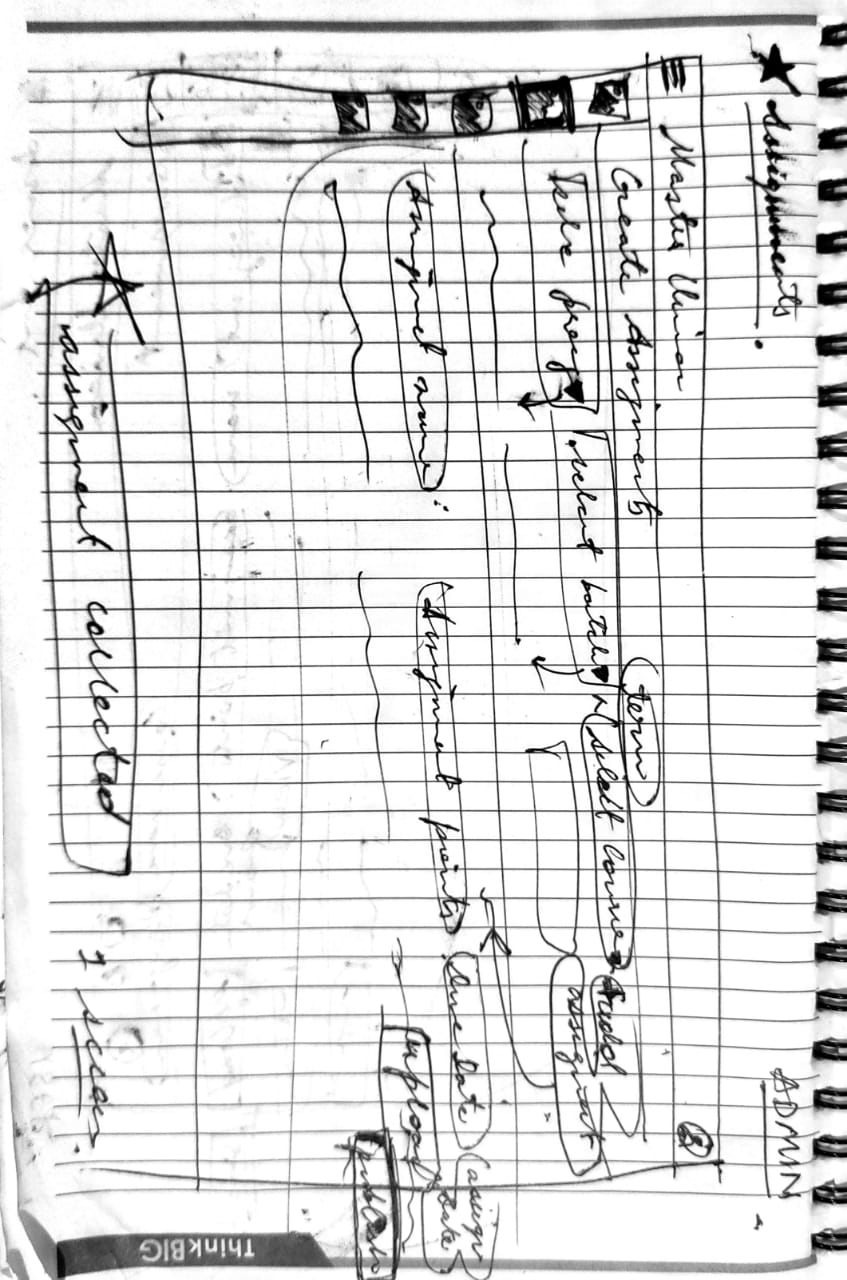

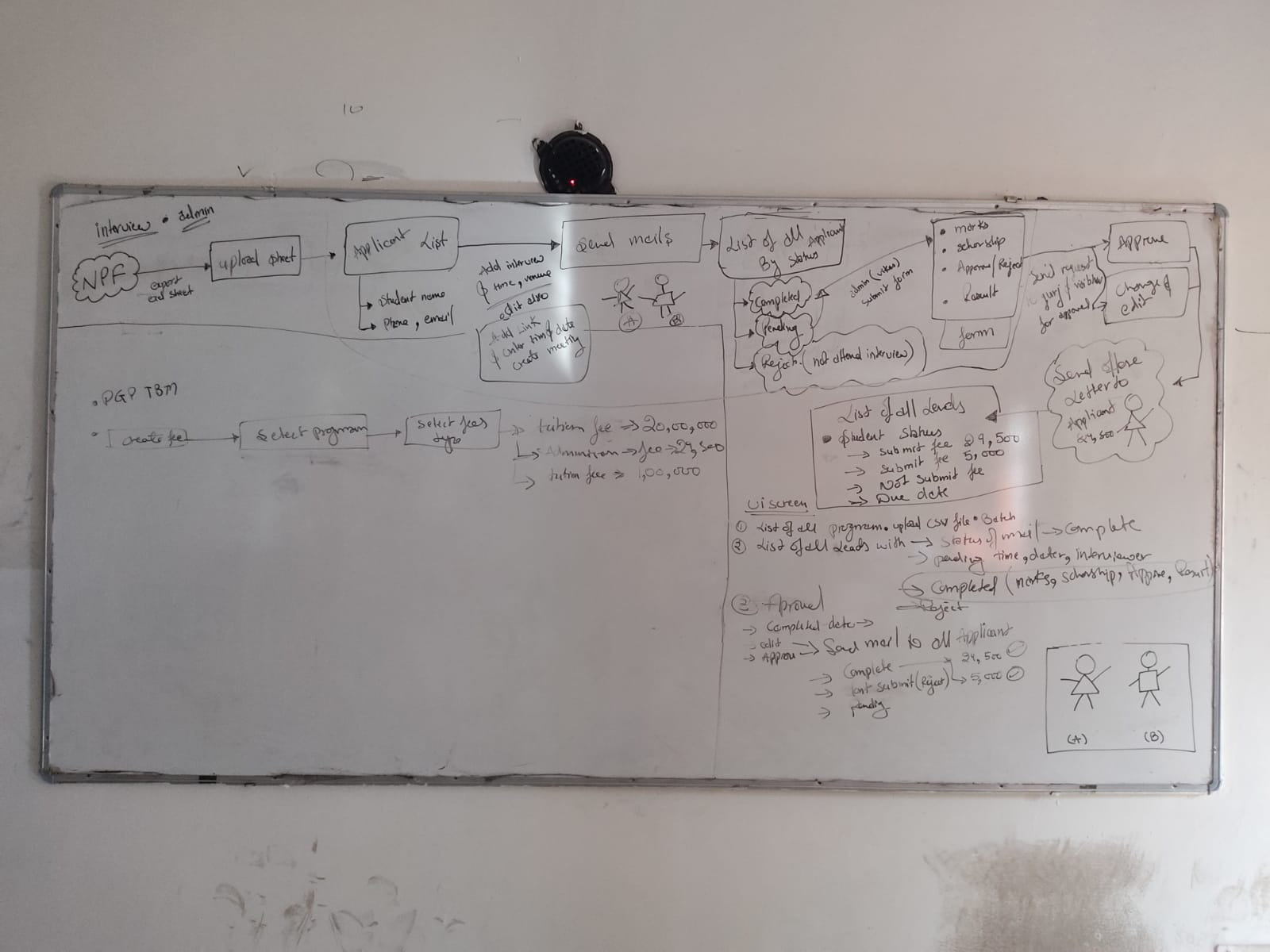

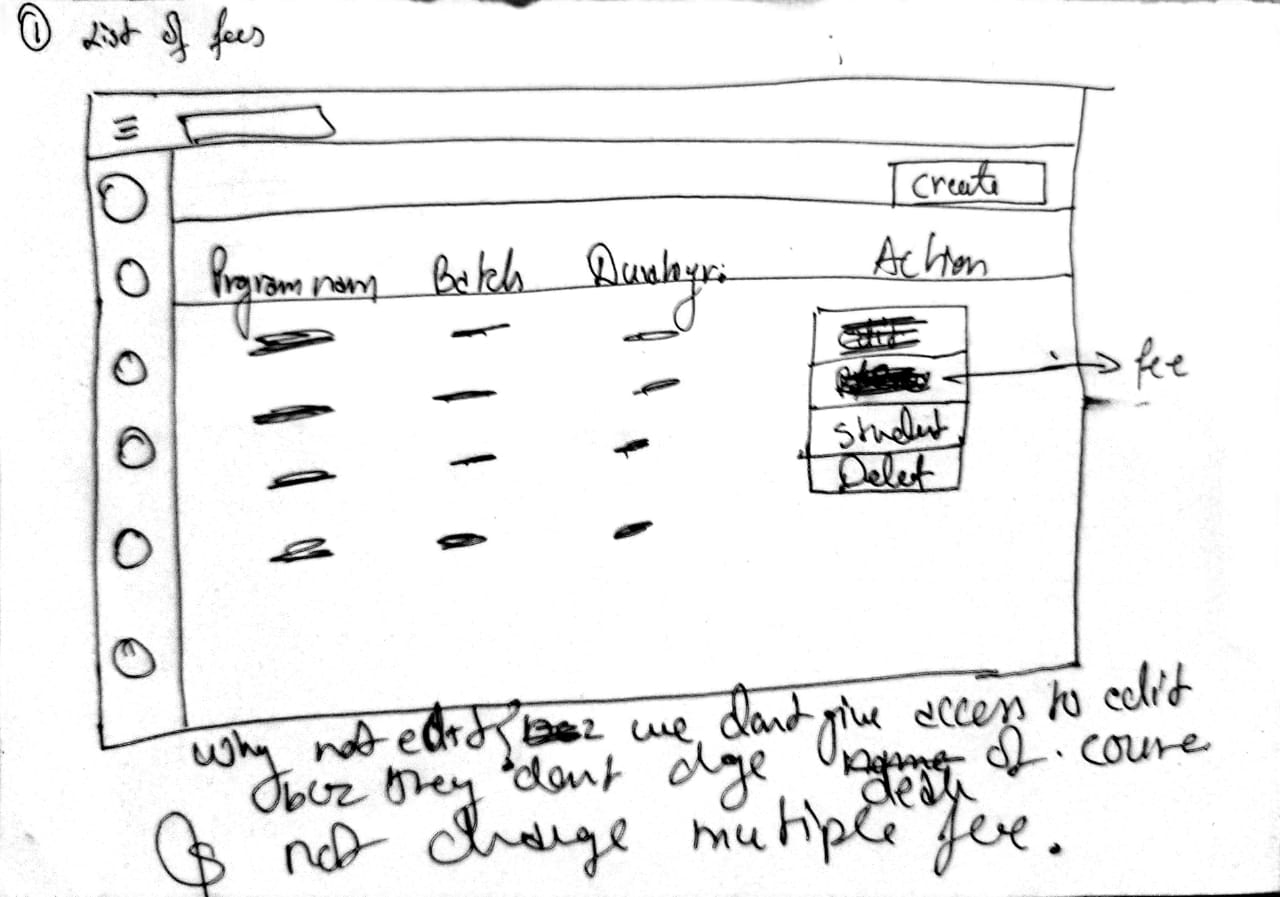

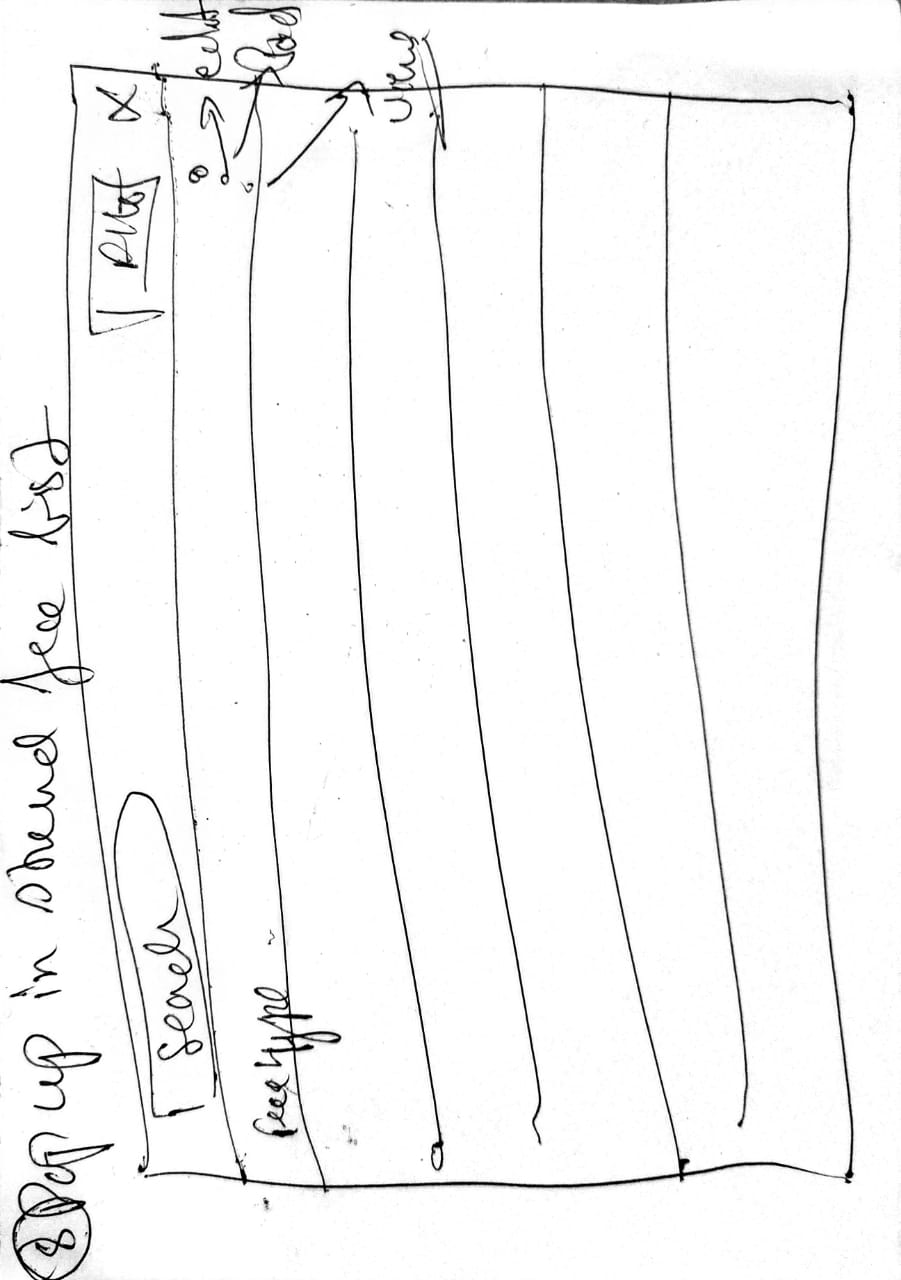

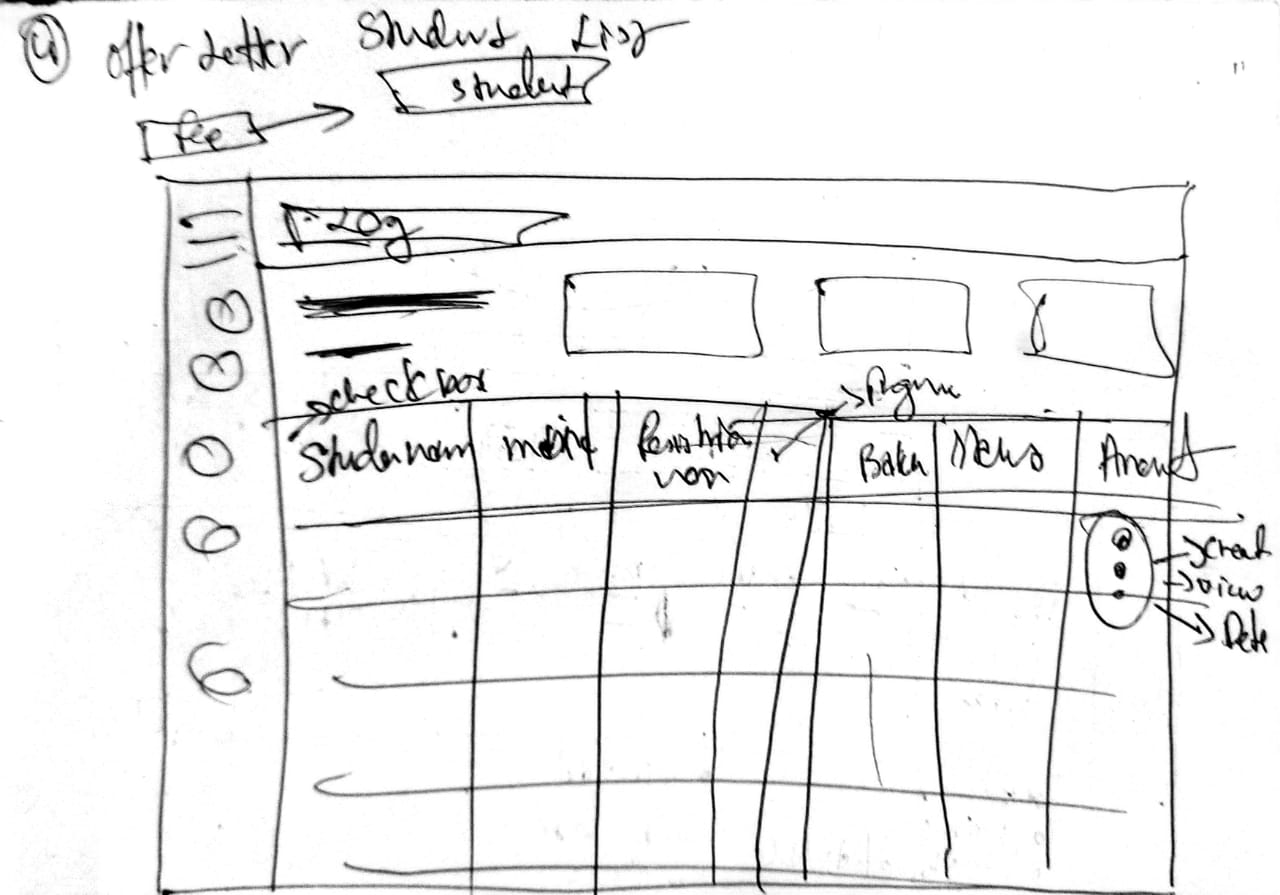

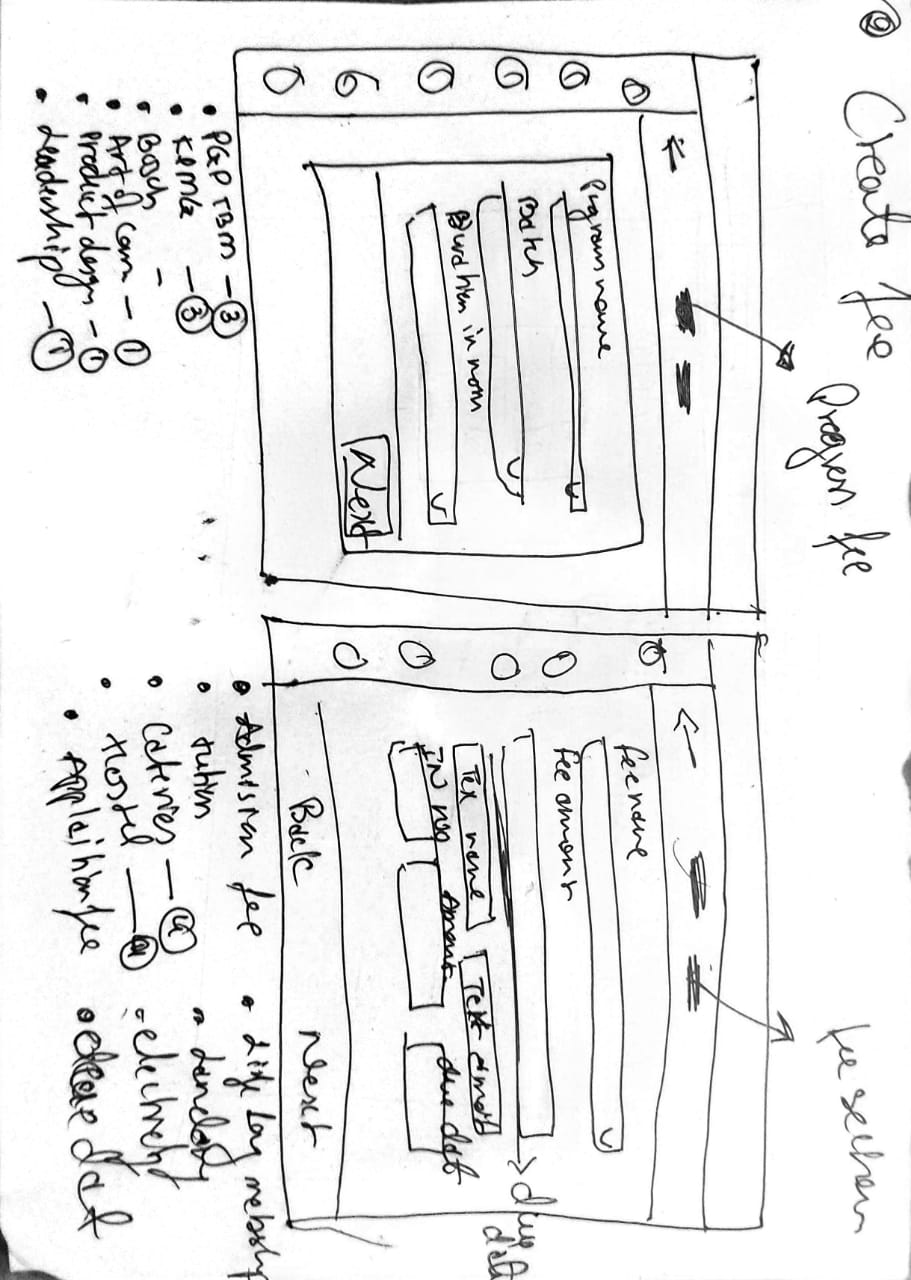

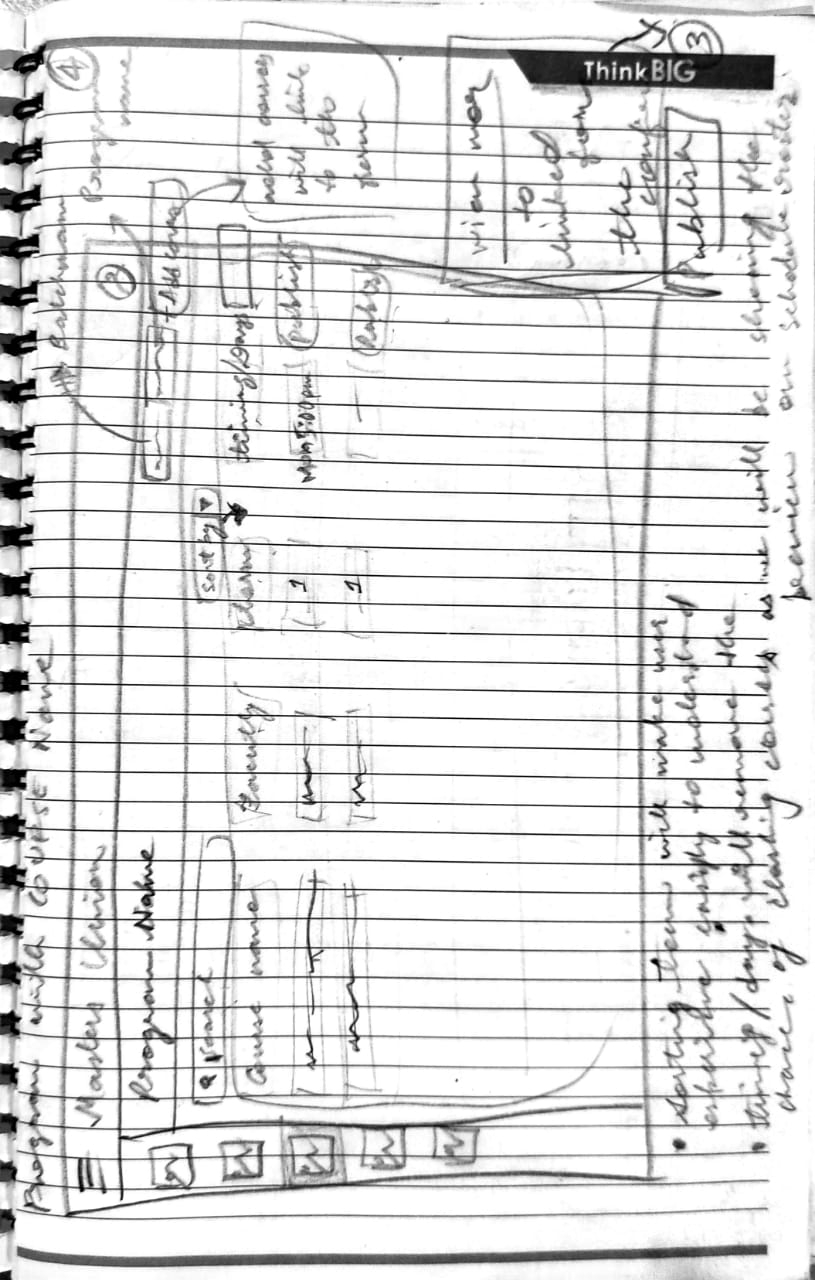

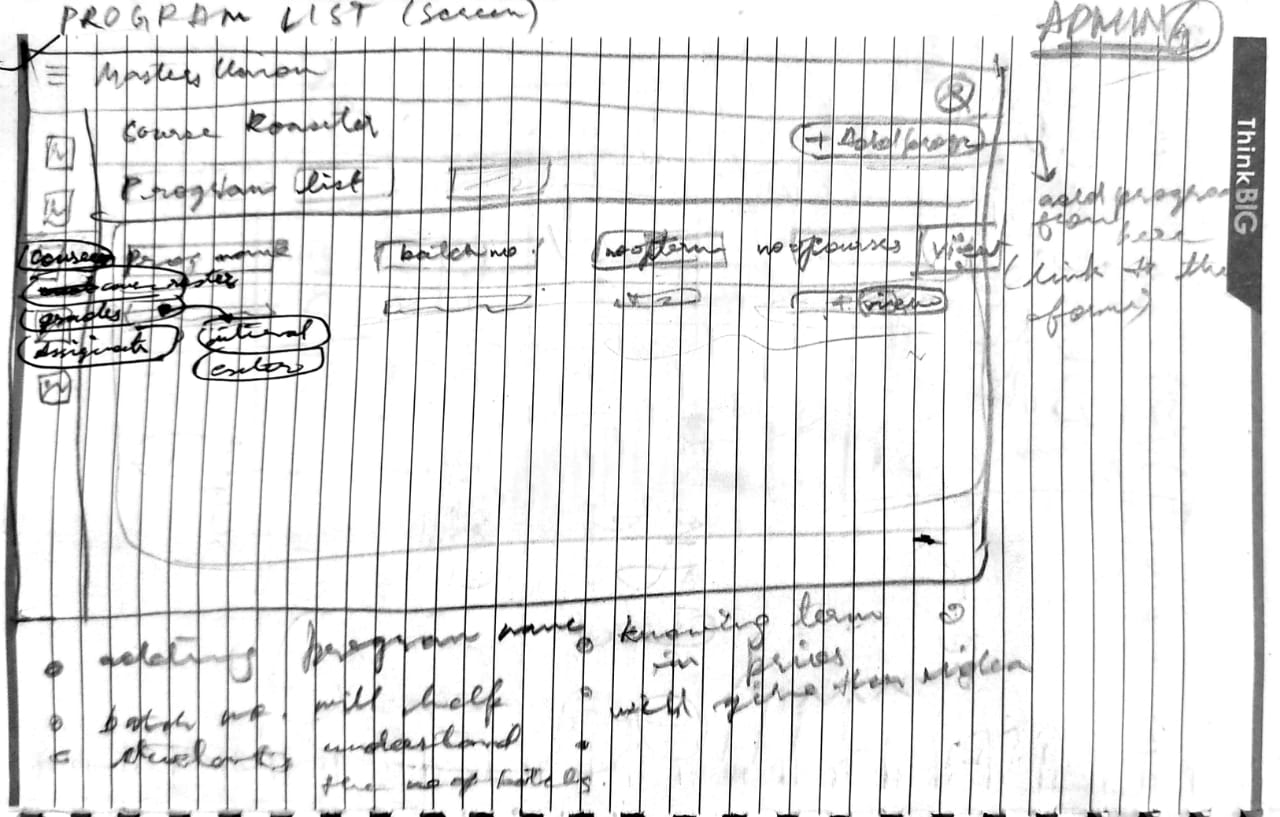

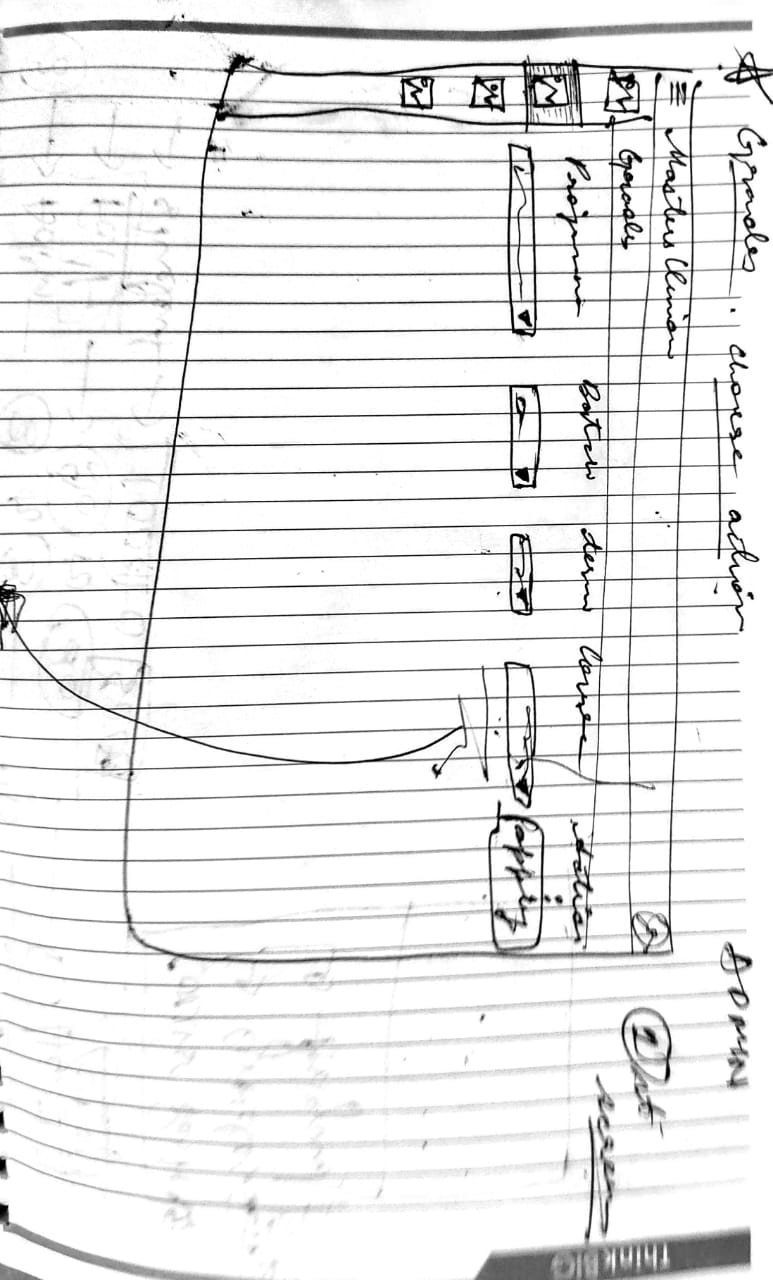

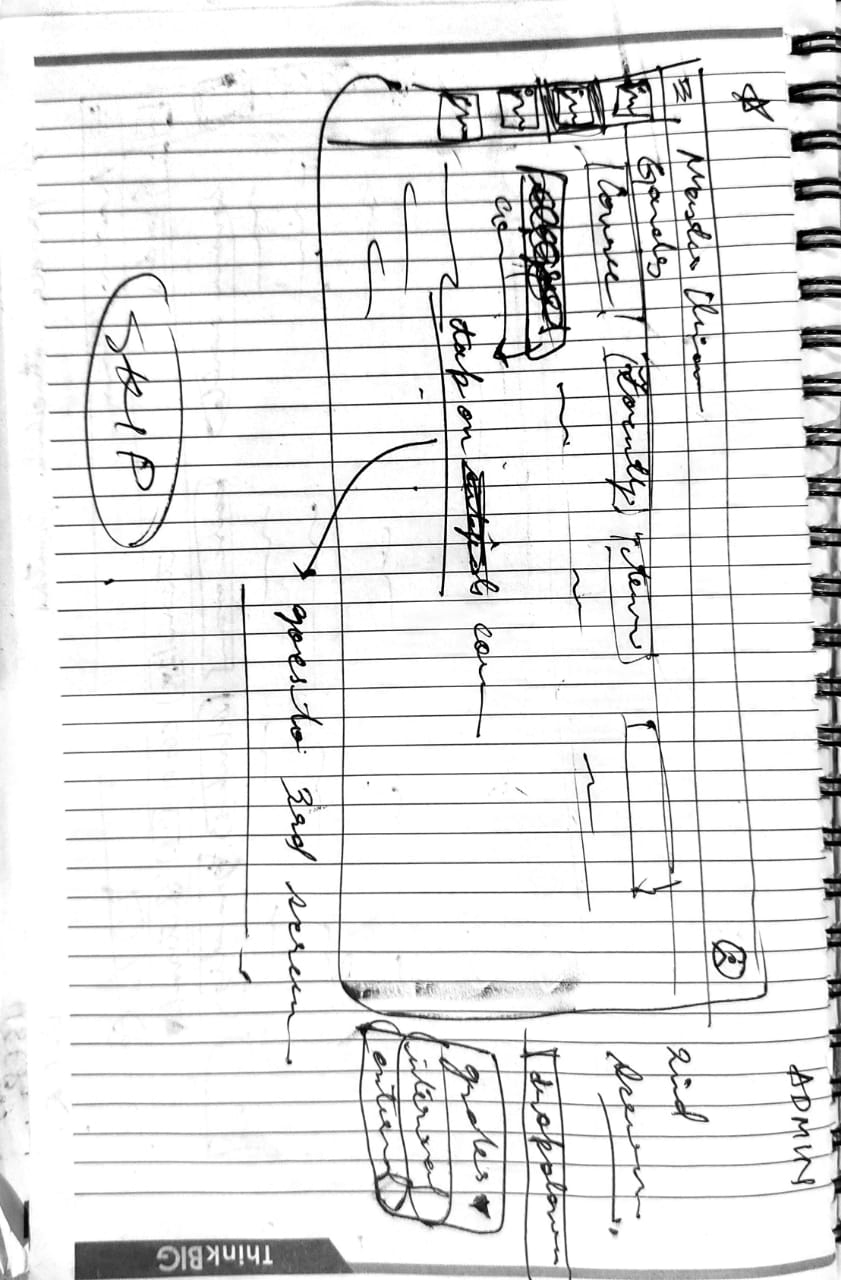

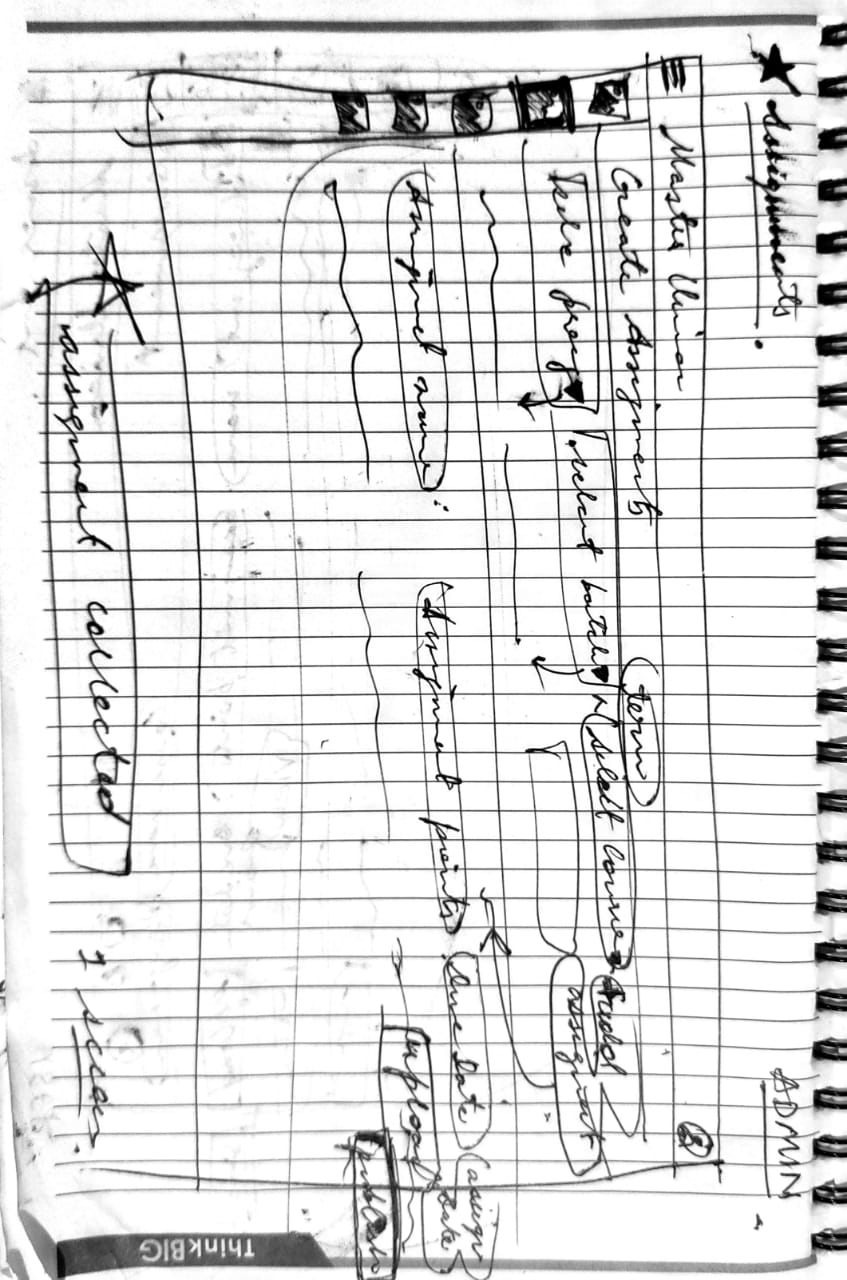

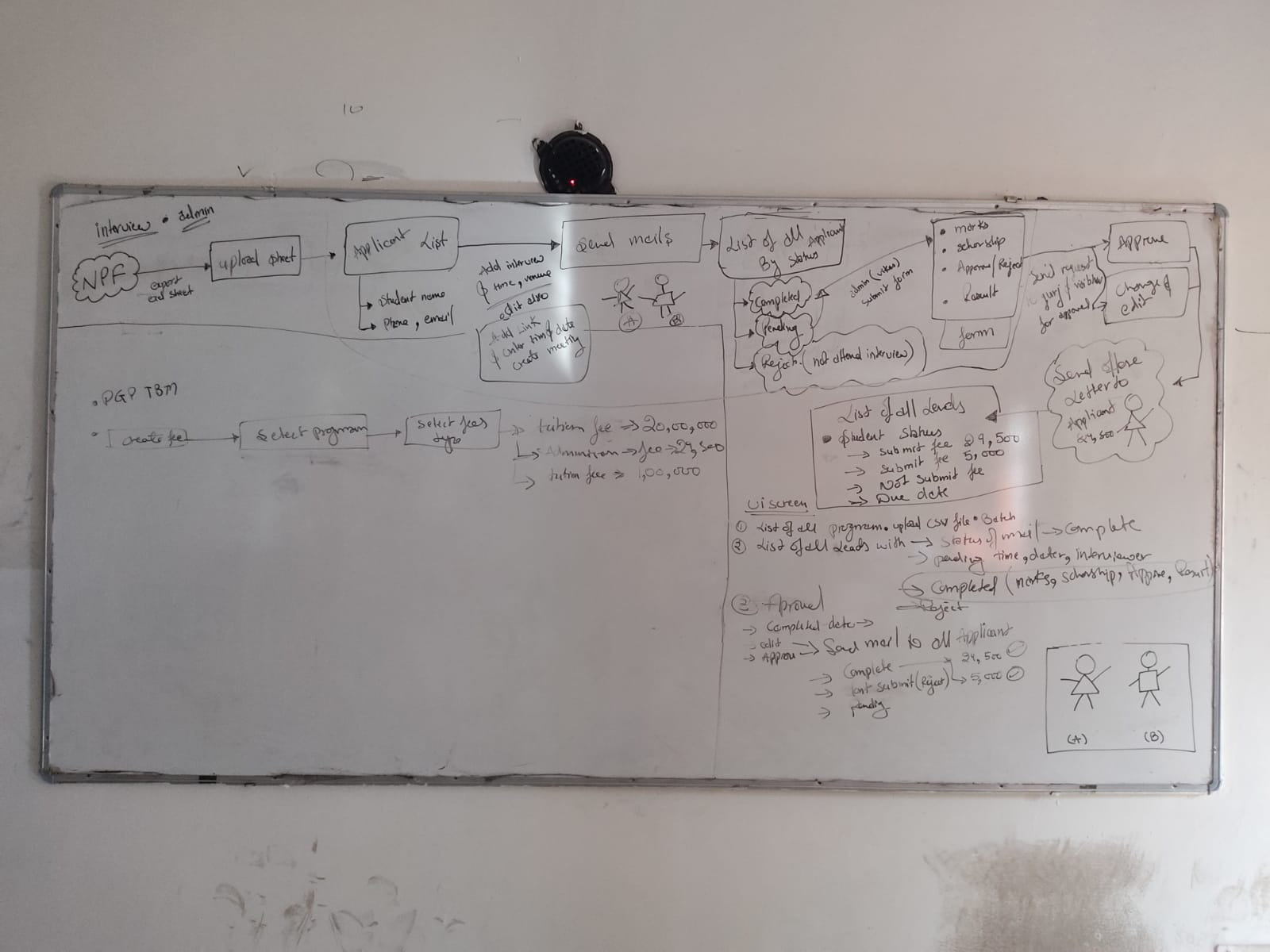

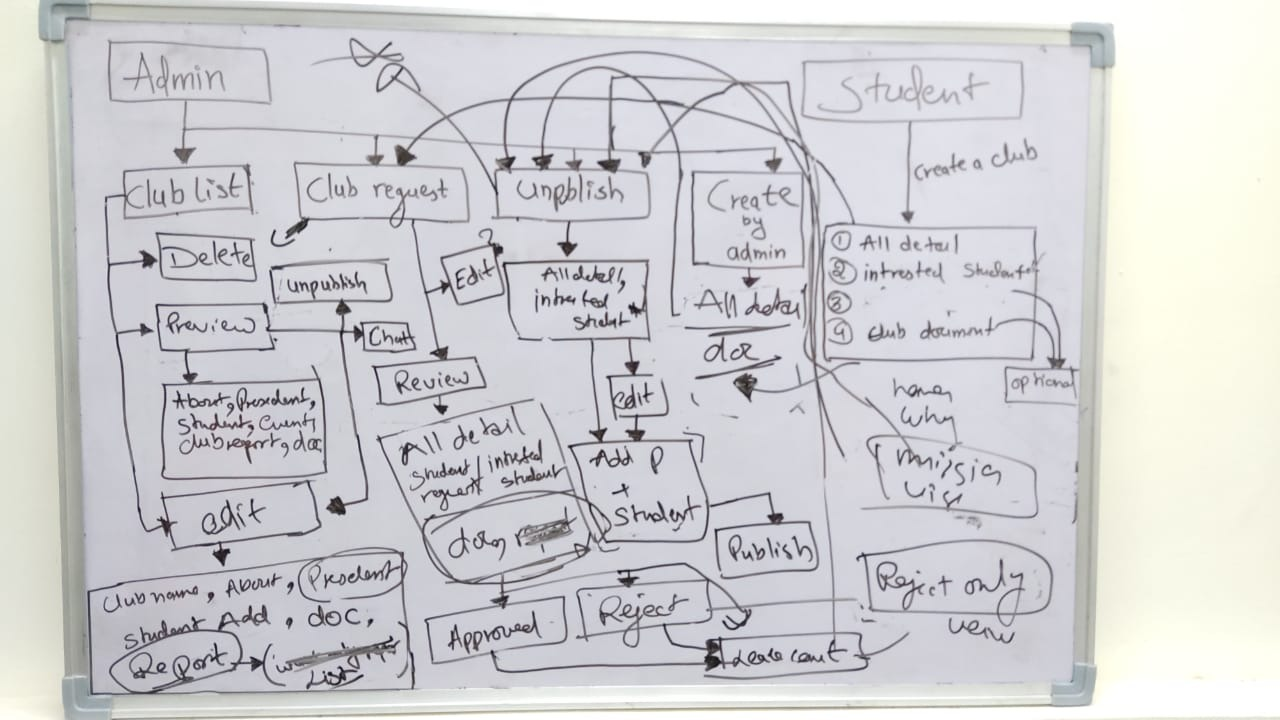

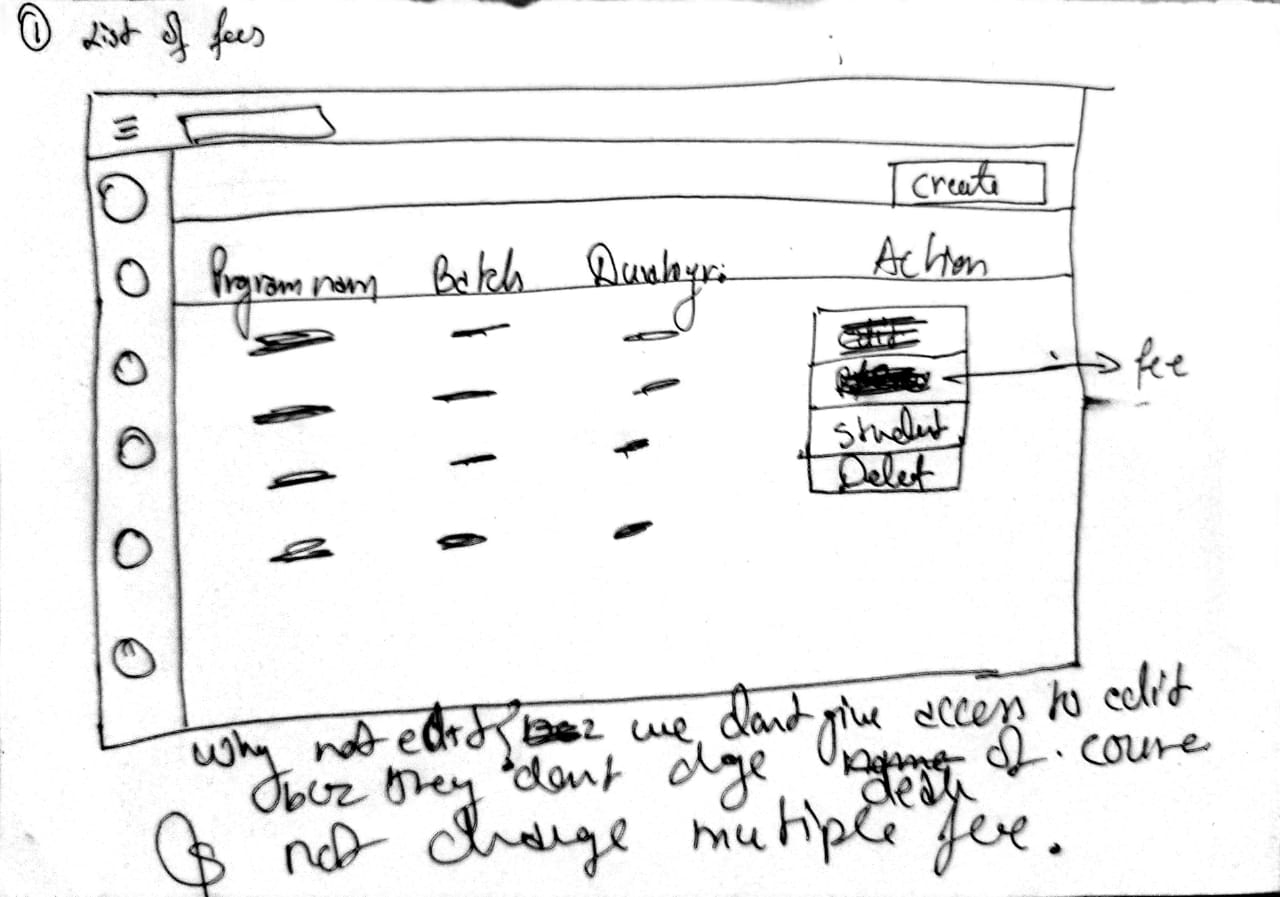

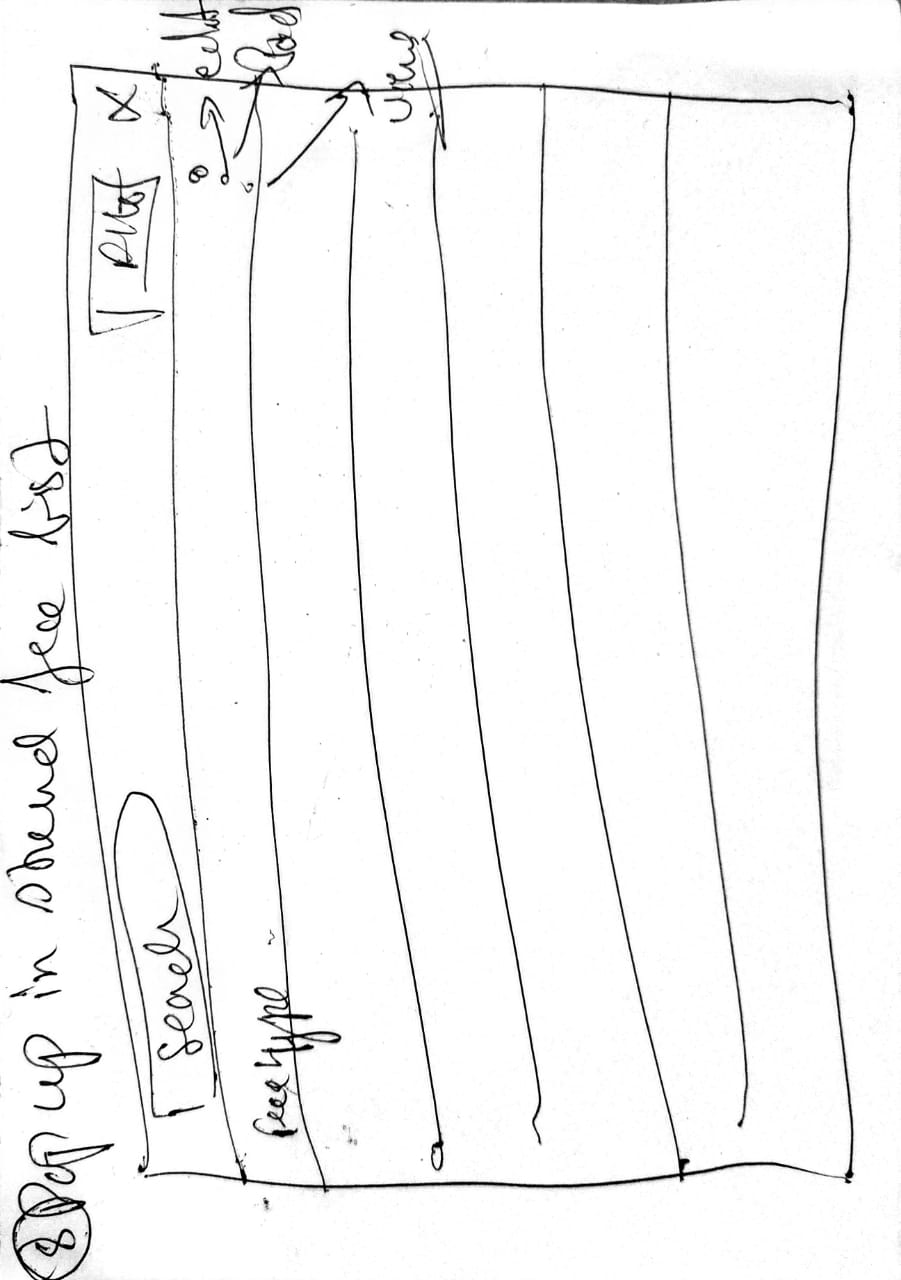

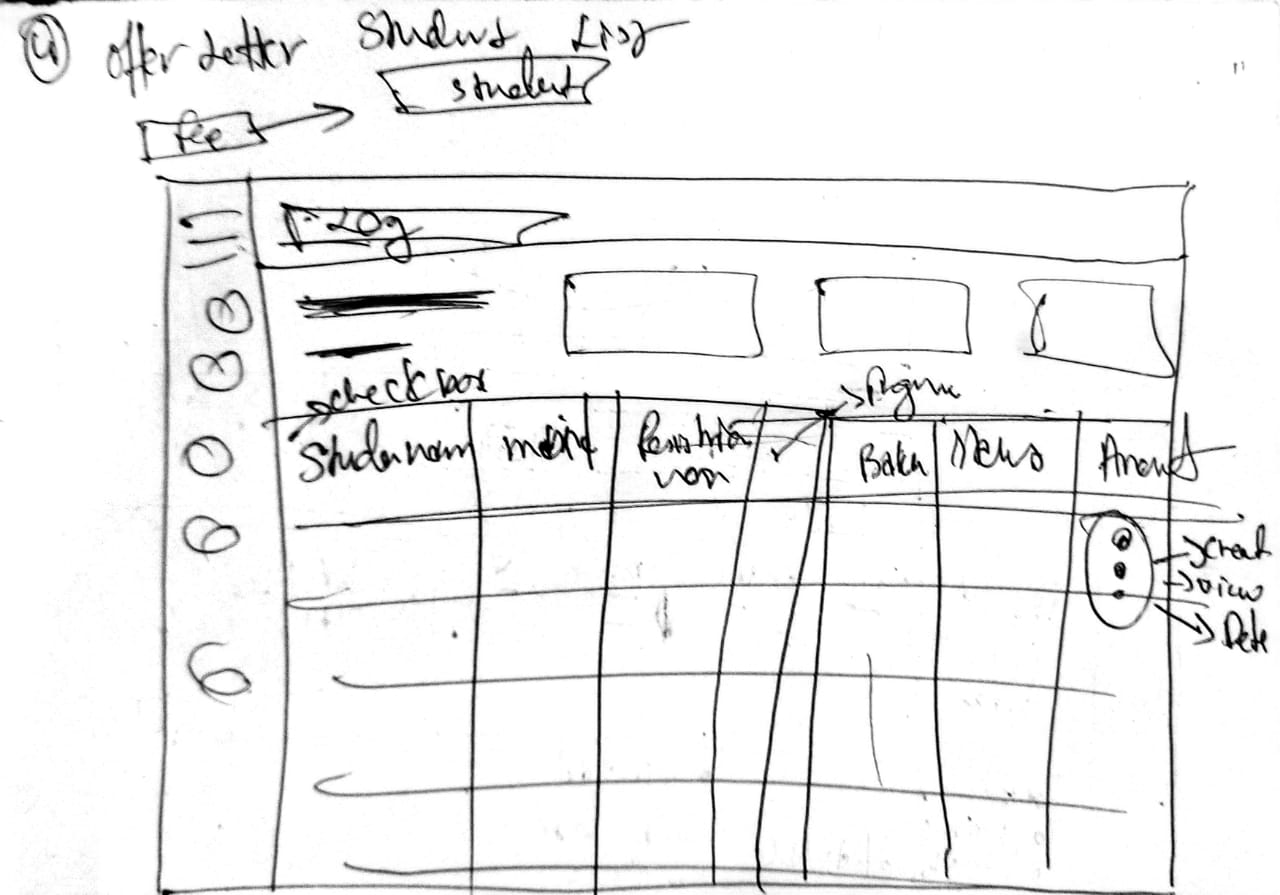

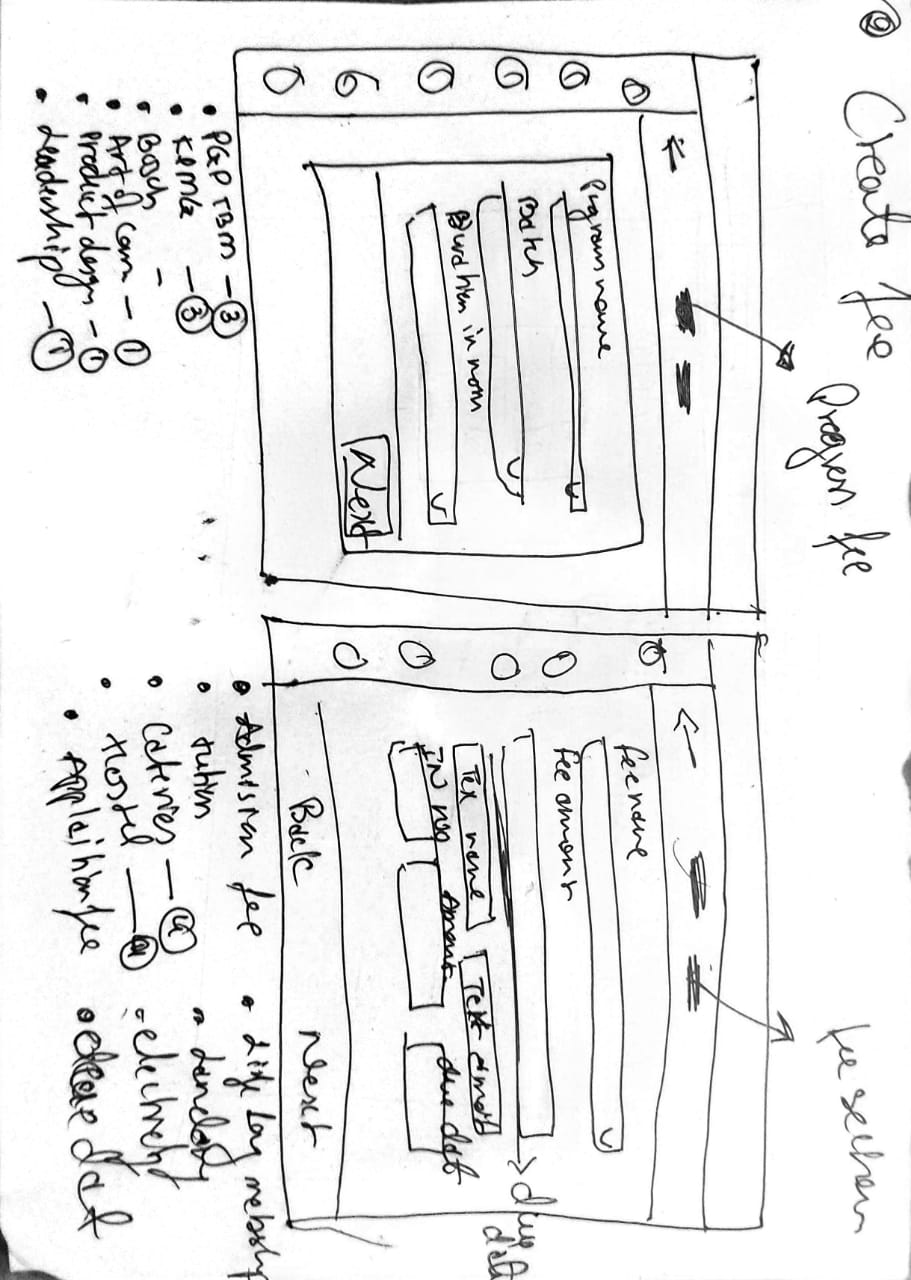

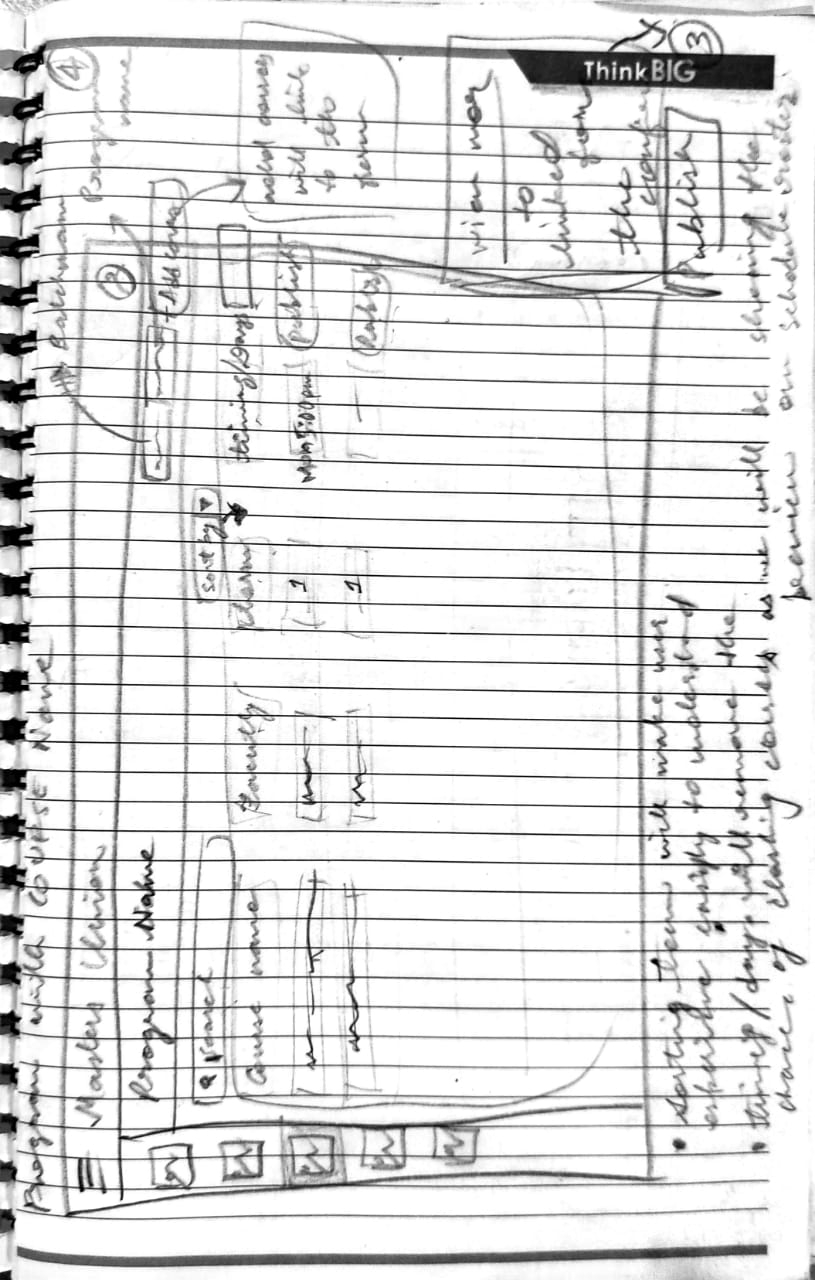

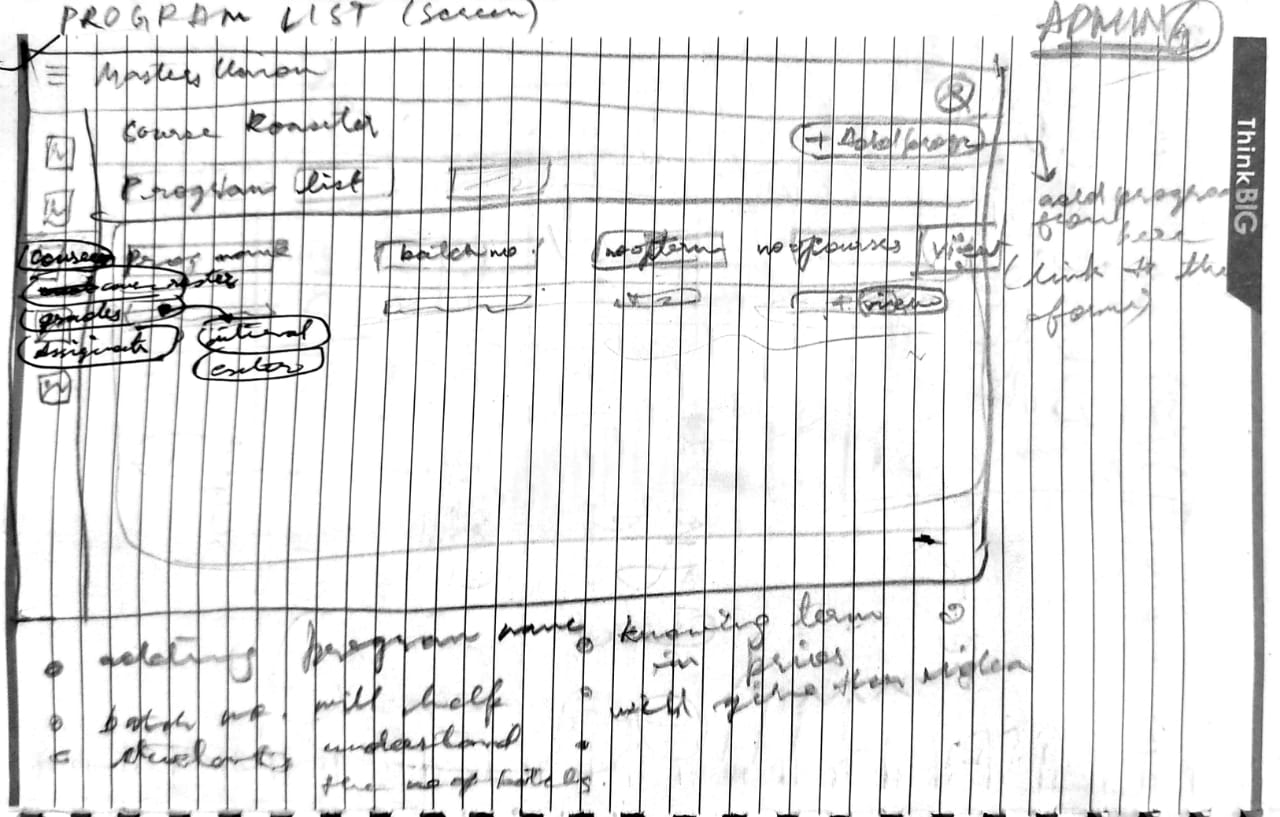

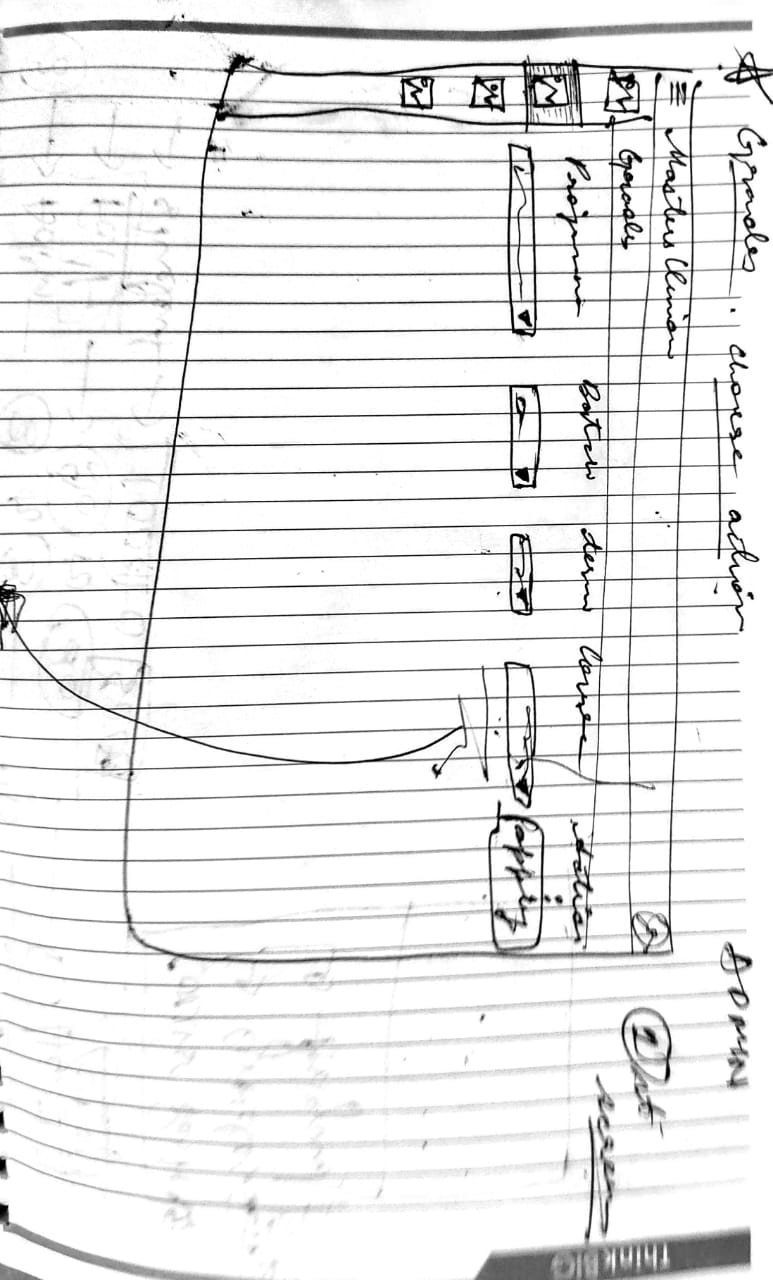

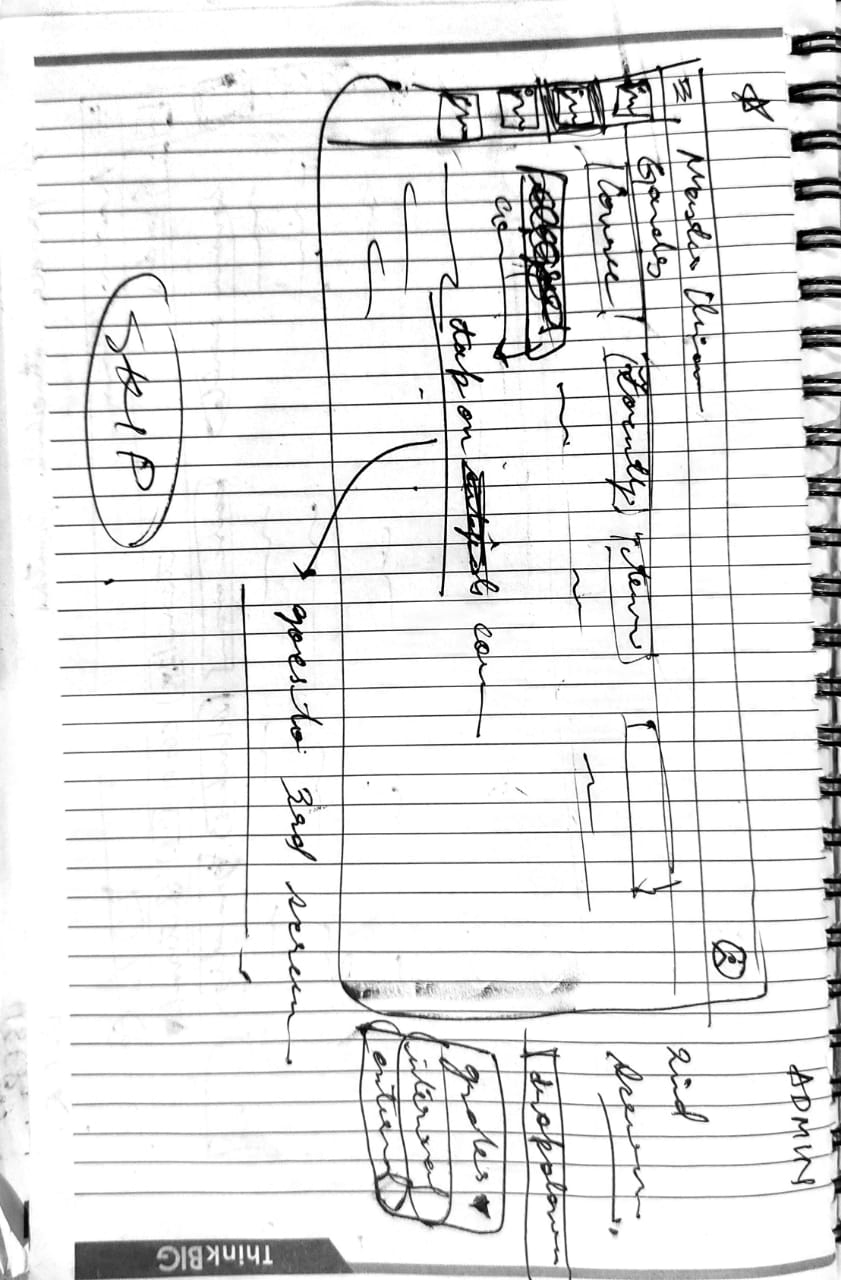

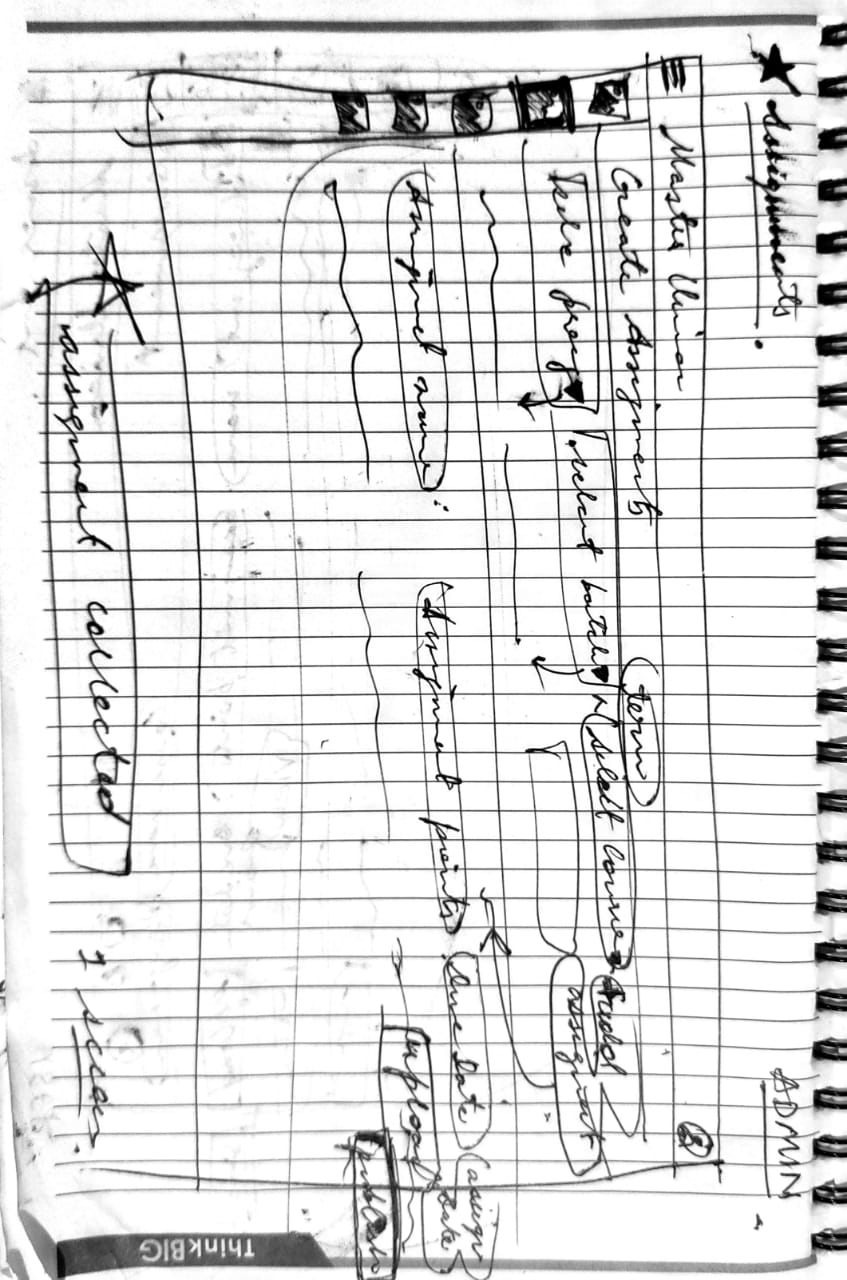

/ 2.7 Wireframing - Lo-Fi

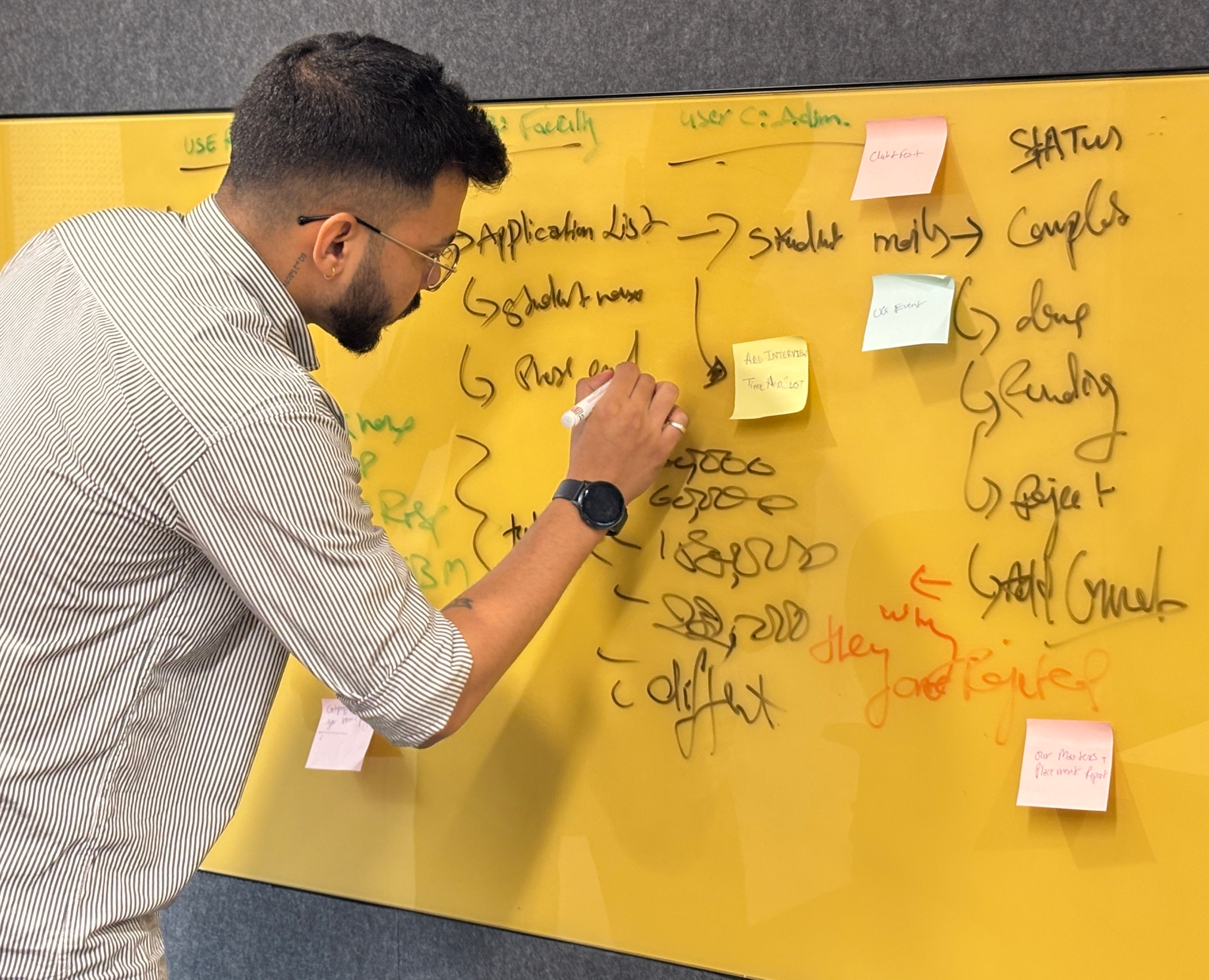

With card sorting results defining the IA and research findings defining the priorities, we moved into solution space. The rule: generate first, judge later.

/ 2.8 Usability Testing - Two Rounds

Two rounds of moderated usability testing: Round 1 on a mid-fidelity prototype before any iteration, Round 2 on a hi-fidelity prototype after design changes. Testing early meant failing cheaply; testing again proved the iteration worked.

Parameter

Round 1 (Mid-Fi)

Round 2 (Hi-Fi)

Participants

5 (mixed roles)

5 (matched to Round 1)

Prototype fidelity

Mid-fi Figma clickthrough

Hi-fi Figma - real content

Tasks

Fee payment · Status check · Admin update

All Round 1 tasks + Session log + Report export

Duration

45–60 min

45 min

Format

Moderated in-person

Moderated remote (video call)

Task success rate

63%

87%

Issues found

7 critical · 4 high · 3 medium

1 critical · 2 high · 5 medium (resolved)

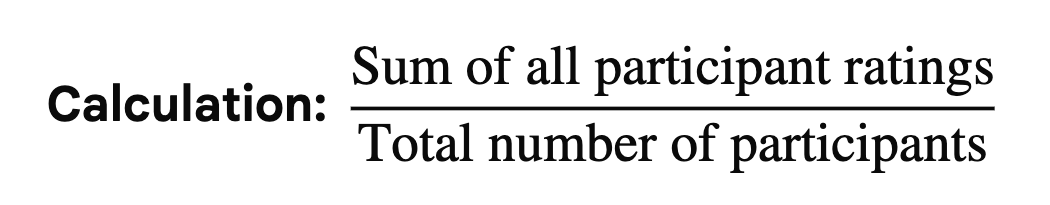

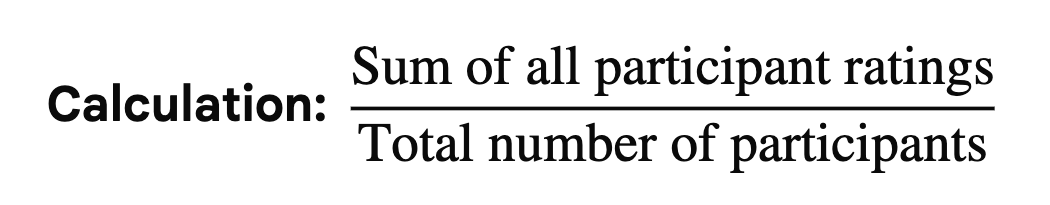

Task Difficulty Scores

Task

Round 1 Difficulty

Round 2 Difficulty

Key Change Made

Student: Pay semester fee

4.2 / 5 (hard)

1.9 / 5 (easy)

Pinelab embedded inside Dinero + itemised fee breakdown + trust signals

Admin: Update student status

2.4 / 5

1.6 / 5

Simplified dropdown + inline confirmation modal

Faculty: Log session note

4.1 / 5 (hard)

2.1 / 5

Dedicated Log Session CTA + autosave + follow-up reminder inline

Report export (added R2)

N/A

1.8 / 5 (easy)

One-click export with 3 pre-built templates

/ 2.9 User Interviews - Laddering Technique

11 semi-structured interviews across 3 user roles. Sessions were 45–60 minutes each. We used laddering: start with behaviour, move to consequence, then feeling, then the underlying need. This is where the real insight lives.

Laddering Example - How One Question Unlocked the Payment Trust Insight

Level

Exchange

L1 Behaviour | What they do

“How do you pay your semester fee?” → “I get an email with a link and just click it.”

L2 Consequence | What happens next

“What happens after you click it?” → “It goes to a page I’ve never seen before. Looks sketchy.”

L3 Emotion | How they feel

“How does that make you feel?” → “Anxious. I’m paying 3.5 lakhs - what if it’s phishing?”

L4 Value | What they actually need

“What would make you feel confident?” → “If it was inside the college portal. Official-looking.”

Insight

Payment trust is a design problem, not a behaviour problem. → Design decision: Embed Pinelab inside Dinero so students never leave the platform.

Student Interview Areas

Fee Payment: How did you pay your semester fee? Did you feel confident the payment went through? Why or why not?

Admission Process: Walk me through your application journey. How did you know what stage your application was at?

Post-Admission: After admission, how did you track your progress? How easy was it to connect with your counselor?

Admin Interview Areas

Managing Admissions: Take me through a typical morning. How do you track who has completed interviews? Where do you lose the most time?

Financial Management: How do you track fee payments? What happens when a student says they paid but it hasn't shown up?

Data & Reports: What information do you need at a glance first thing in the morning?

Faculty / Counselor Interview Areas

Student Progress: How do you manage your student meeting schedule? Where do you log session notes?

At-Risk Flagging: What would change if you had real-time visibility into each student's journey? How do you flag a student you're worried about?

/ 2.10 Data Collected

11 interview recordings (45–60 min each)

2 contextual observation sessions of the real admin workflow

3 card sorting result sets - 11 participants across 3 role groups

NPF vs Flywire competitive gap analysis

Usability test recordings - Round 1 (5 sessions) + Round 2 (5 sessions)

200+ individual sticky note observations from all interviews and sessions

Research Limitations

Naming limitations is not a weakness - it tells the reader exactly how far to generalise the findings.

Limitation

Impact

Mitigation

Small qualitative sample (n=11)

Findings are directional, not statistically significant

Triangulated across 3+ methods before elevating to design decision

Recruitment from single institution

Mental models may differ at other EdTech companies

Findings validated through usability testing - not assumed to transfer

Admin participants self-selected (volunteered)

May skew toward more engaged, tech-comfortable admins

Contextual observation sessions compensated for self-report bias

/ 3.1 Identify Patterns

Synthesis is where raw data becomes design direction. With 200+ observations, 11 interviews, 2 observation sessions and card sorting results, the risk was finding patterns that weren't really there - or missing the ones that were. This is how we separated signal from noise.

Step 1 - Role-Based Sorting

Every sticky note was first tagged by role (Student / Admin / Faculty) and by data type (Observation / Quote / Behaviour / Pain Point). This prevented cross-role noise from masking role-specific insights - and revealed which problems were universal vs. role-specific.

Step 2 - Open Clustering (KJ Method)

Notes were clustered by affinity - no predefined categories. Clusters that appeared independently across multiple sessions were flagged as candidate patterns. Clusters with notes from only one participant were kept as individual observations, not elevated to patterns.

Cluster Name

Note Count

Roles Represented

Elevated to Pattern?

Reason

Fragmentation Fatigue

48

Admin, Faculty

Yes

Appeared in interviews + contextual observation (2 methods)

Visibility Anxiety

41

Student, Faculty

Yes

Appeared in interviews + journey map + card sorting (3 methods)

Payment Trust Deficit

26

Student

Yes

Same quote structure from 3 of 5 students independently

Admin Info Overload

41

Admin

Yes

Corroborated by contextual observation (4 hrs vs 1-2 hrs self-reported)

Counselor Invisibility

32

Faculty

Yes

Consistent across all 3 faculty participants

Leadership Reporting Gap

11

Admin

No

Stakeholder need, not user pain. Shipped as low-priority in v1.5.

Step 3 - Triangulation Gate

Before a pattern became a design decision, it had to pass through a triangulation gate: corroboration by at least 2 independent methods. This prevented a single vivid interview quote from driving a design decision.

Visibility Anxiety

- User interviews (41 notes)

- Journey mapping (peak frustration at Stage 1)

- Card sorting (students separated Journey from Money)

Payment Trust Deficit

- User interviews (phishing 3/5 unprompted)

- Laddering (L4: want official portal, not external link)

- Usability test R1 (Task 1 hardest: 4.2/5 difficulty)

Admin Fragmentation

- User interviews (1-2 hrs self-reported)

- Contextual observation (real: 3-5 hrs)

- Usability test R1 (admin status update: 2.4/5 difficulty)

Counselor Invisibility

- User interviews (32 notes, all 3 faculty)

- Usability test R1 (session-log task: 4.1/5 difficulty)

- Card sorting (faculty grouped by student, not by task)
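The triangulation gate is a simple counting rule, sketched below under one assumption: every piece of evidence is tagged with the method that produced it, and the gate counts distinct methods rather than notes. `Evidence`, `passes_gate`, `MIN_METHODS` and the "Single-method hunch" entry are hypothetical names; the other entries transcribe the triangulation list above (laddering is kept as its own method, as listed). The sketch is intended only to make the rule concrete.

```python
from dataclasses import dataclass

# Minimum number of independent methods before a pattern may drive a design decision.
MIN_METHODS = 2


@dataclass(frozen=True)
class Evidence:
    method: str  # research method that produced the evidence
    detail: str  # what that method showed


def passes_gate(evidence, min_methods=MIN_METHODS):
    """True if the evidence spans at least `min_methods` distinct methods."""
    return len({e.method for e in evidence}) >= min_methods


# Evidence transcribed from the triangulation list; the last entry is hypothetical.
PATTERNS = {
    "Visibility Anxiety": [
        Evidence("User interviews", "41 notes"),
        Evidence("Journey mapping", "peak frustration at Stage 1"),
        Evidence("Card sorting", "students separated Journey from Money"),
    ],
    "Payment Trust Deficit": [
        Evidence("User interviews", "phishing 3/5 unprompted"),
        Evidence("Laddering", "L4: want official portal, not external link"),
        Evidence("Usability test R1", "Task 1: 4.2/5 difficulty"),
    ],
    "Admin Fragmentation": [
        Evidence("User interviews", "1-2 hrs self-reported"),
        Evidence("Contextual observation", "real: 3-5 hrs"),
        Evidence("Usability test R1", "admin status update: 2.4/5 difficulty"),
    ],
    "Counselor Invisibility": [
        Evidence("User interviews", "32 notes, all 3 faculty"),
        Evidence("Usability test R1", "session log: 4.1/5 difficulty"),
        Evidence("Card sorting", "faculty grouped by student, not by task"),
    ],
    # Hypothetical: two vivid quotes, but both from the same method.
    "Single-method hunch": [
        Evidence("User interviews", "vivid quote"),
        Evidence("User interviews", "another vivid quote"),
    ],
}

for name, evidence in PATTERNS.items():
    verdict = "passes gate" if passes_gate(evidence) else "held back"
    print(f"{name}: {verdict}")
```

Counting a set of methods, not a list of notes, is what stops a single vivid interview quote, however often it is repeated, from clearing the gate on its own.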

/ 3.2 Key Findings - 5 Findings, Severity-Rated

Findings rated by severity. Critical and High findings drove v1.0 priorities. Medium findings shipped in v1.5.

Finding

Design Decisions

F1

CRITICAL

Status Visibility: No Self-Serve Journey View

Students had no visibility across any of the 8 admission stages. Every status check required emailing admin, creating dual pain: student anxiety + admin call volume.

- Status timeline covering all 8 stages always visible on student homepage

- Auto-notification in-app + email on every stage change

- Admin pipeline refreshes in real-time

Impact: Eliminated status-query emails to admin. Students self-serve their journey tracking.

F2

CRITICAL

Payment Trust: External Pinelab Link Caused 25% Abandonment

Students received a payment link via email, landed on an unbranded external Pinelab page and abandoned the payment for fear of phishing. A 14-15 day manual processing cycle made it worse. Three of five students used the word "phishing" unprompted.

- Pinelab gateway embedded inside Dinero - student never leaves the platform.

- Mandatory itemised breakdown: admission fee + tuition fee before confirmation.

- Masters' Union branding throughout.

- Instant in-app receipt + email confirmation.

Impact: 74% -> 93% payment completion (A/B tested), bringing abandonment below the 10% target.

F3

CRITICAL

Admin Fragmentation: 3-5 Hours of Manual Daily Overhead

Admins opened 4+ tools before 9am daily (NPF, Pinelab, Excel, email). Every workflow required manual reconciliation across disconnected systems. 2-4 data errors per day from record mismatches.

- Unified admin dashboard: single view of all pending actions.

- Automated status sync from NPF to Dinero - no manual Excel uploads.

- One-click report export replacing manual compilation.

Impact: Admin daily overhead reduced from 3-5 hrs to <1 hr. No platform switching for core flows.

F4

HIGH

Counselor Invisibility: Session Notes Living in Email Drafts

Faculty had no system for logging session notes. Notes lived in personal email drafts or notebooks. Follow-ups relied on memory. No way to flag at-risk students or track whether advice was acted upon.

- Dedicated Log Session CTA as primary action on faculty dashboard.

- Per-student session history - all notes in one place.

- Follow-up reminder inline with note entry.

- At-risk flag visible to admin on shared pipeline.

Impact: Faculty task difficulty dropped from 4.1/5 to 2.1/5 in Round 2. No session notes lost post-launch.

F5

HIGH

IA Mismatch: One Navigation Cannot Serve Three Mental Models

Card sorting confirmed that students, admins and faculty grouped identical information in completely different ways. Students separated Journey from Money. Admins thought in pipeline stages. Faculty thought in student relationships.

- Role-based entry point at login - 3 separate dashboards, not a shared navigation.

- Student: Journey timeline + Fee tracker as primary tabs.

- Admin: Pipeline columns as primary view.

- Faculty: Student roster as primary view.

Impact: All 3 dashboards actively adopted (70%+ DAU within 30 days). No user reported navigation confusion post-launch.

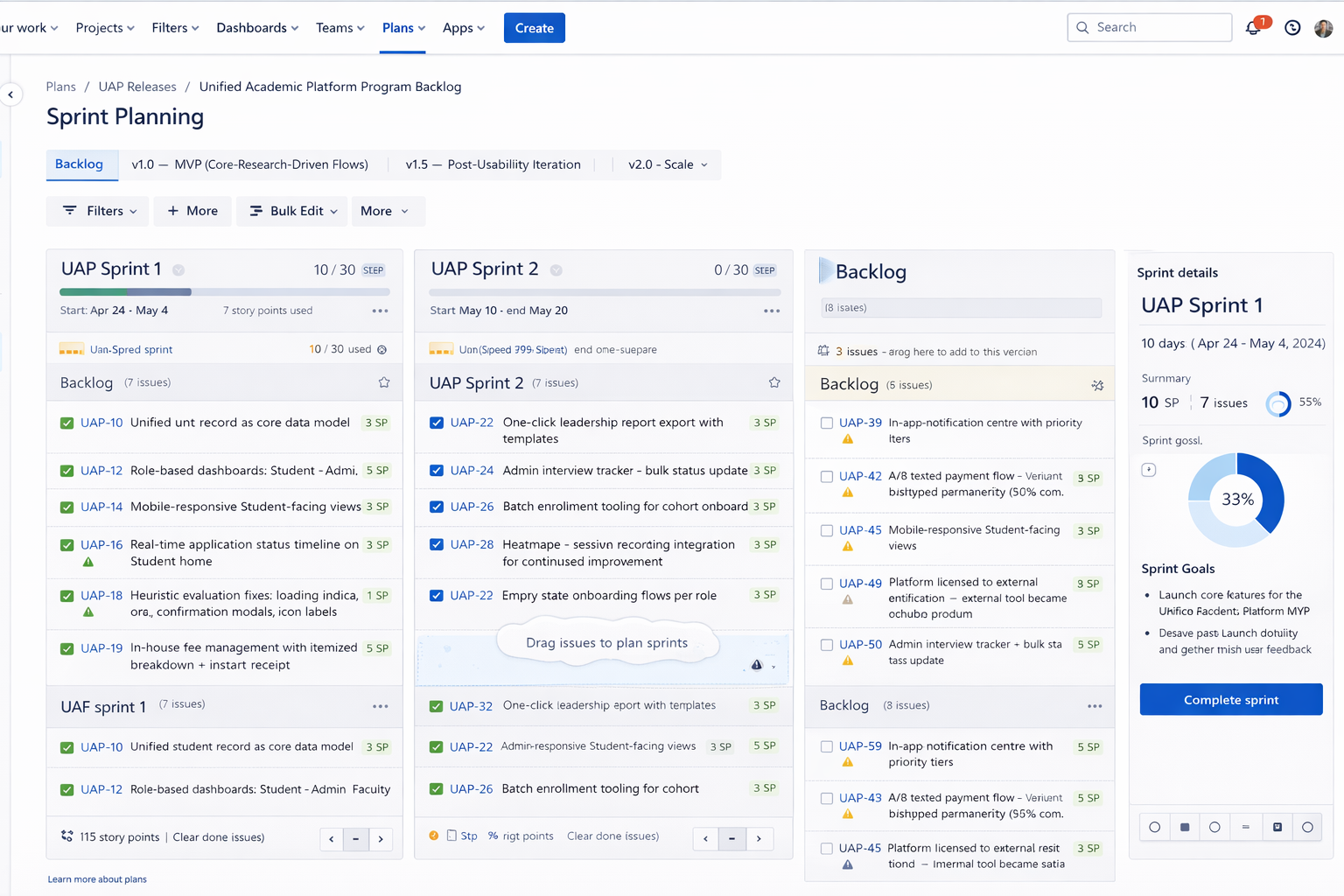

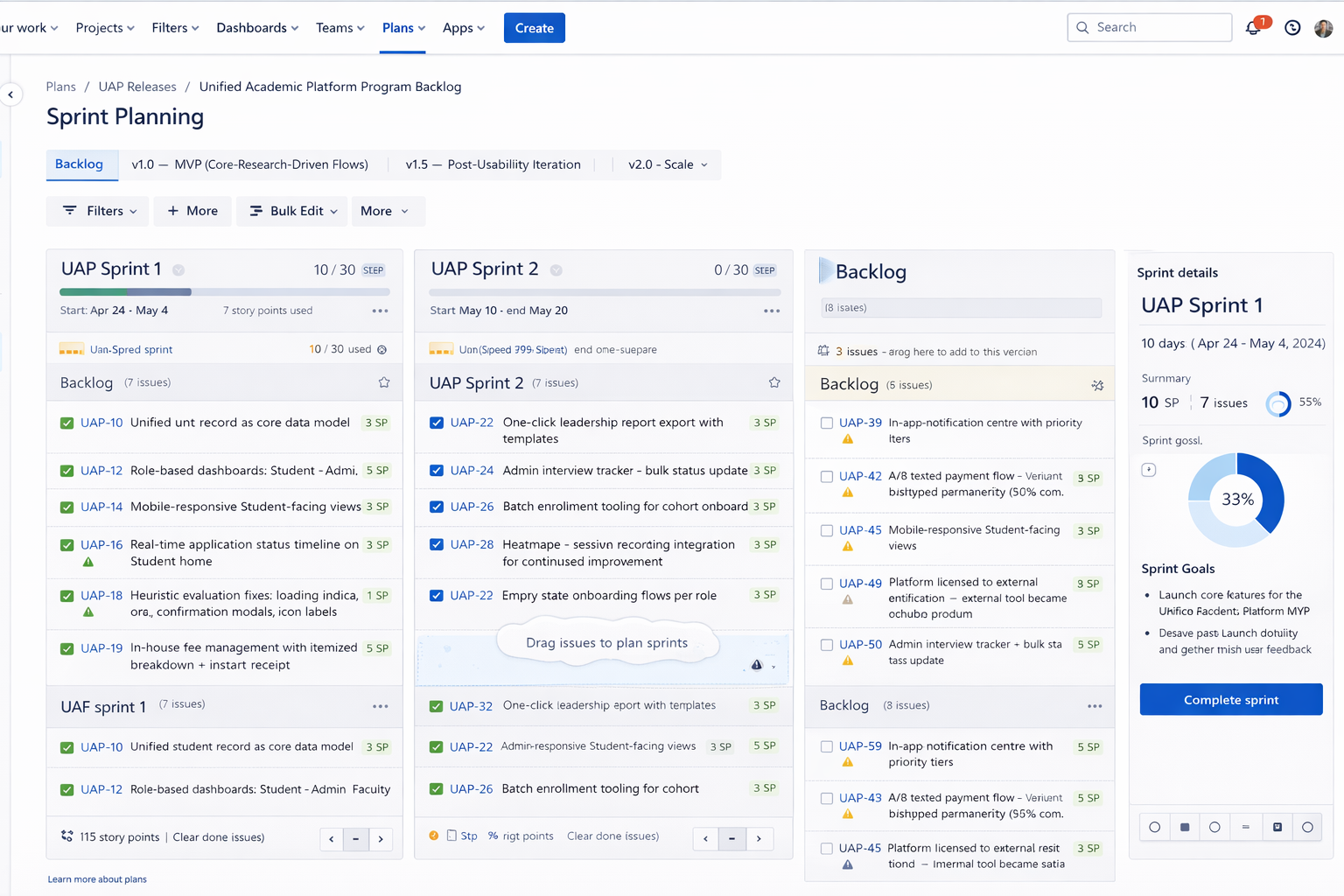

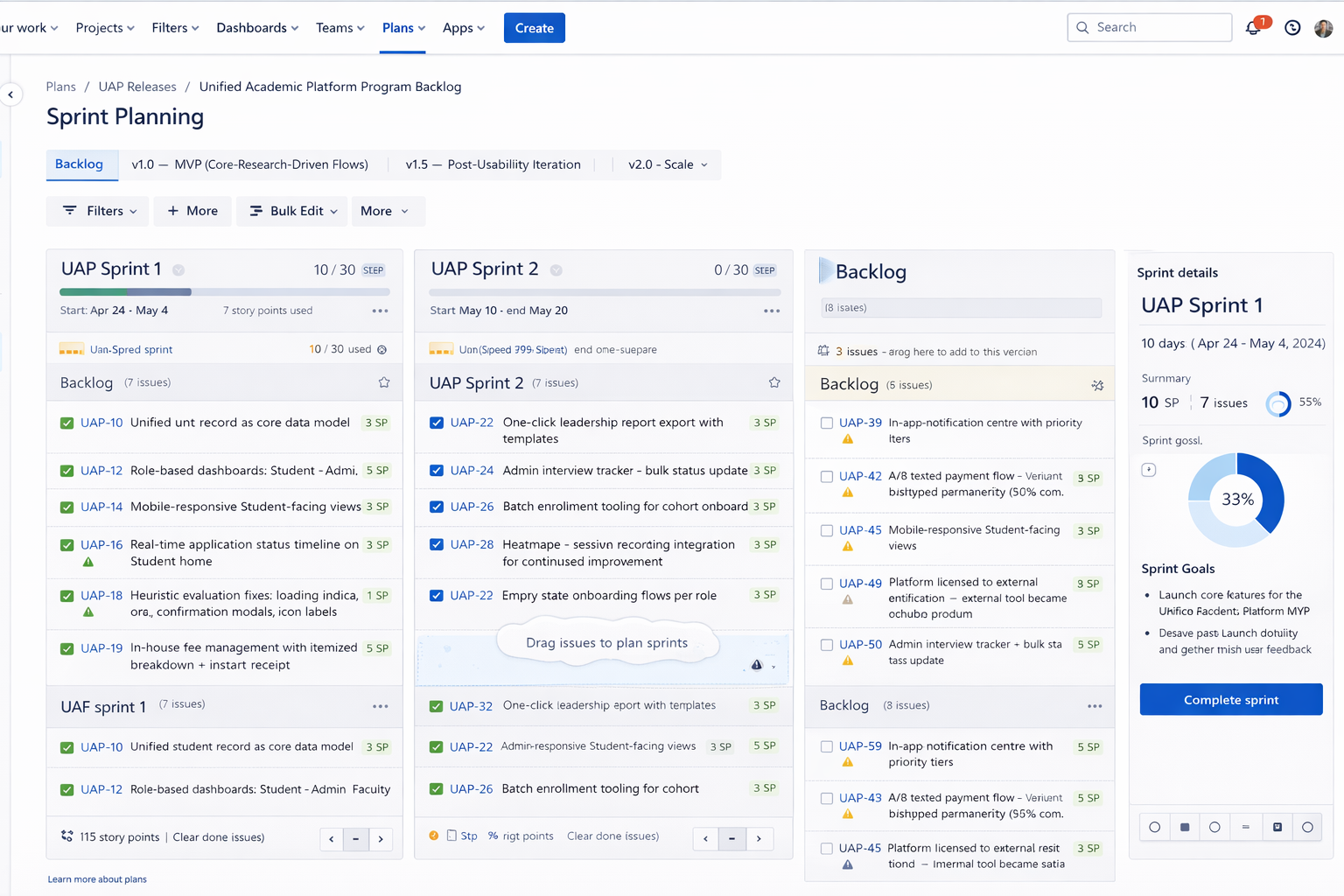

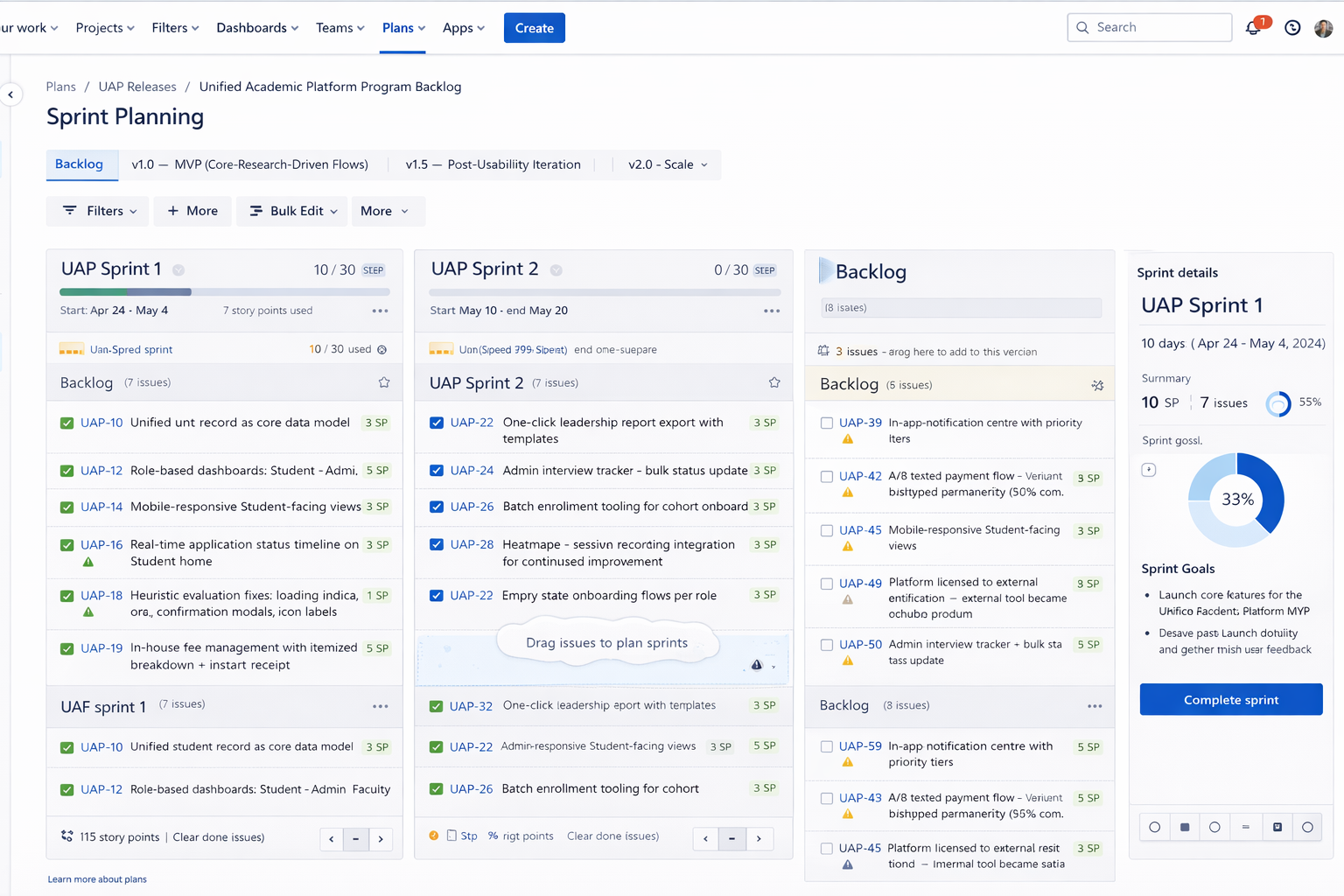

/ 4.1 Sprint Planning

v1.0 → v1.5 → v2.0 · Research Drove Every Release

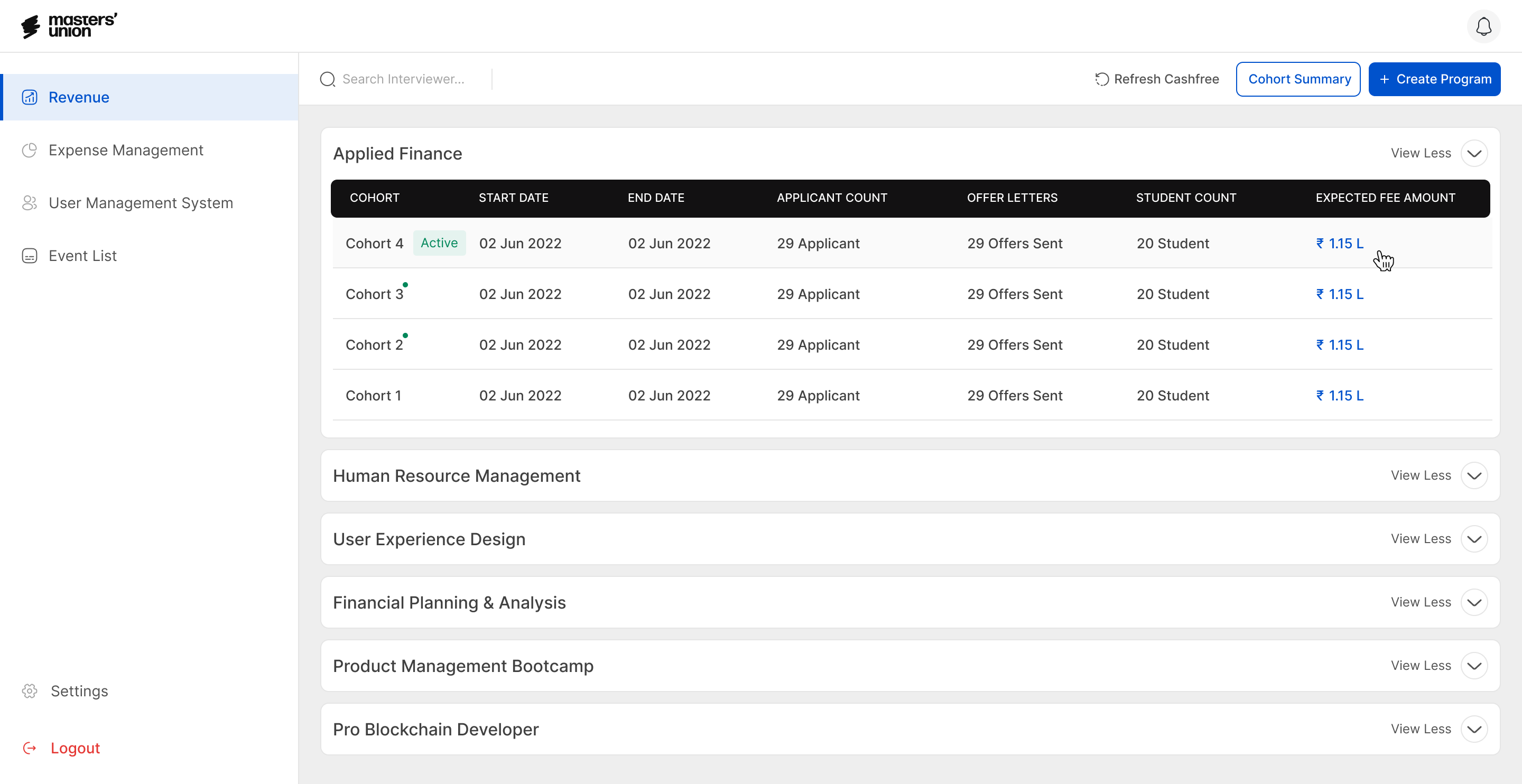

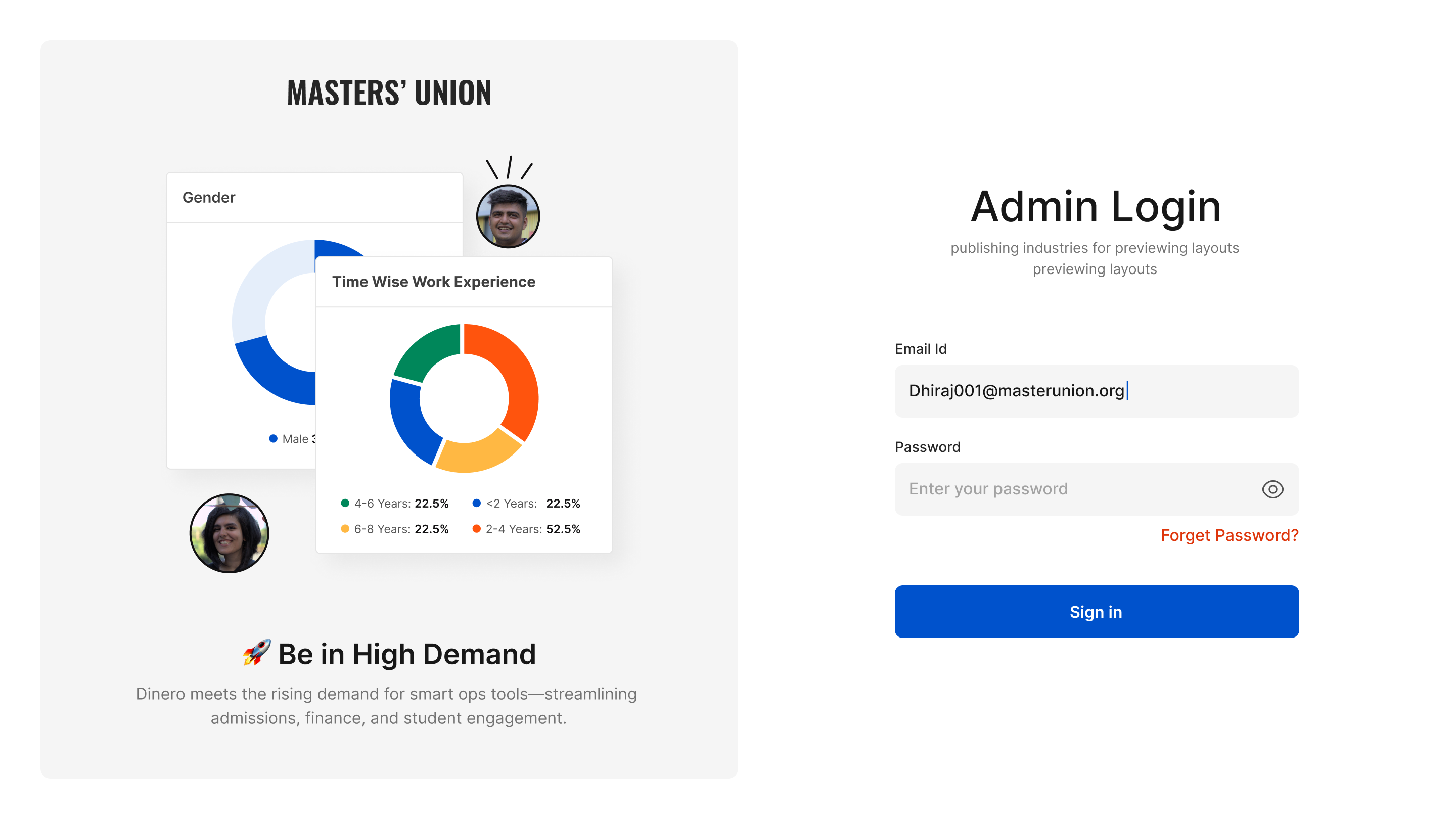

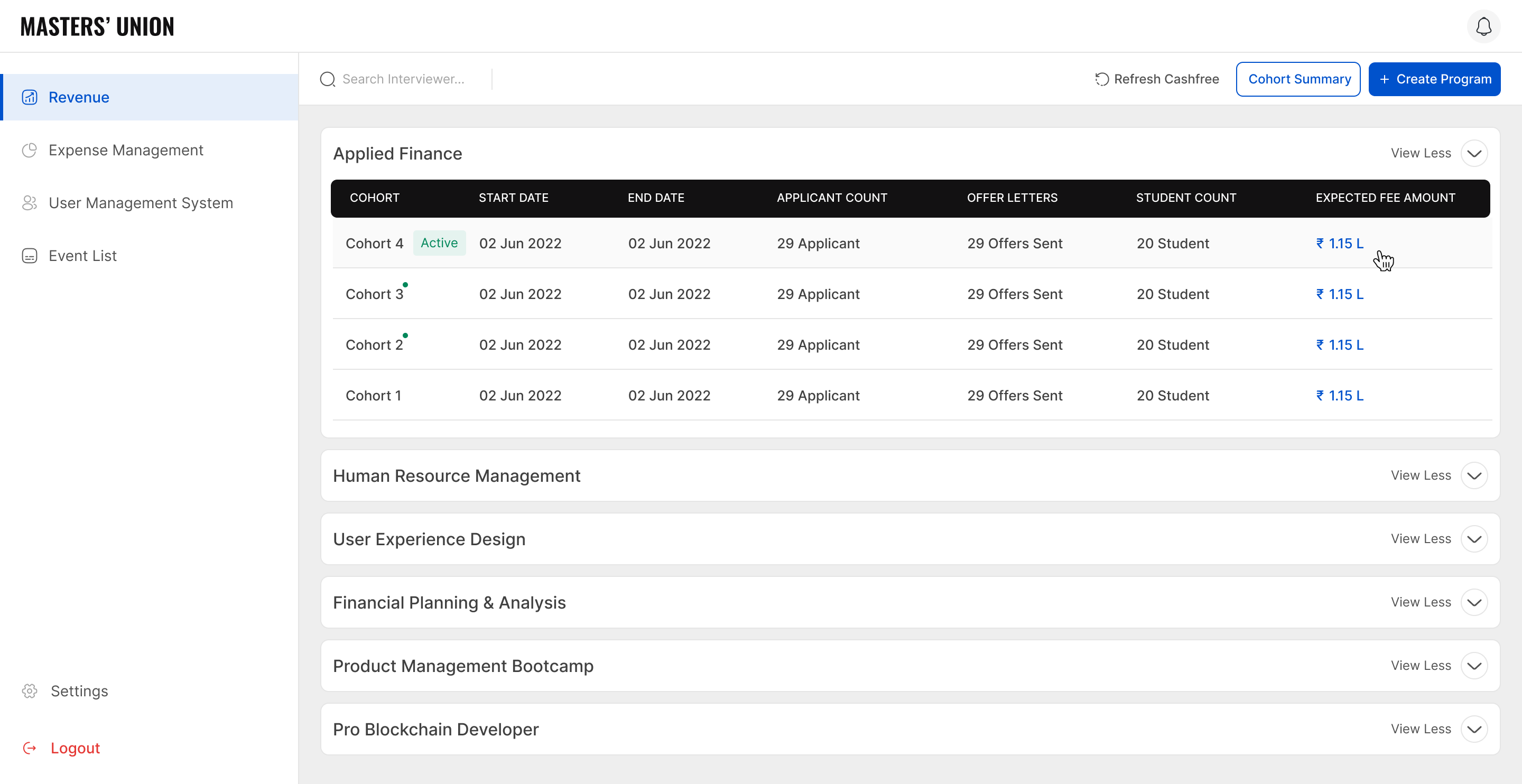

/ 4.2 UI Screens

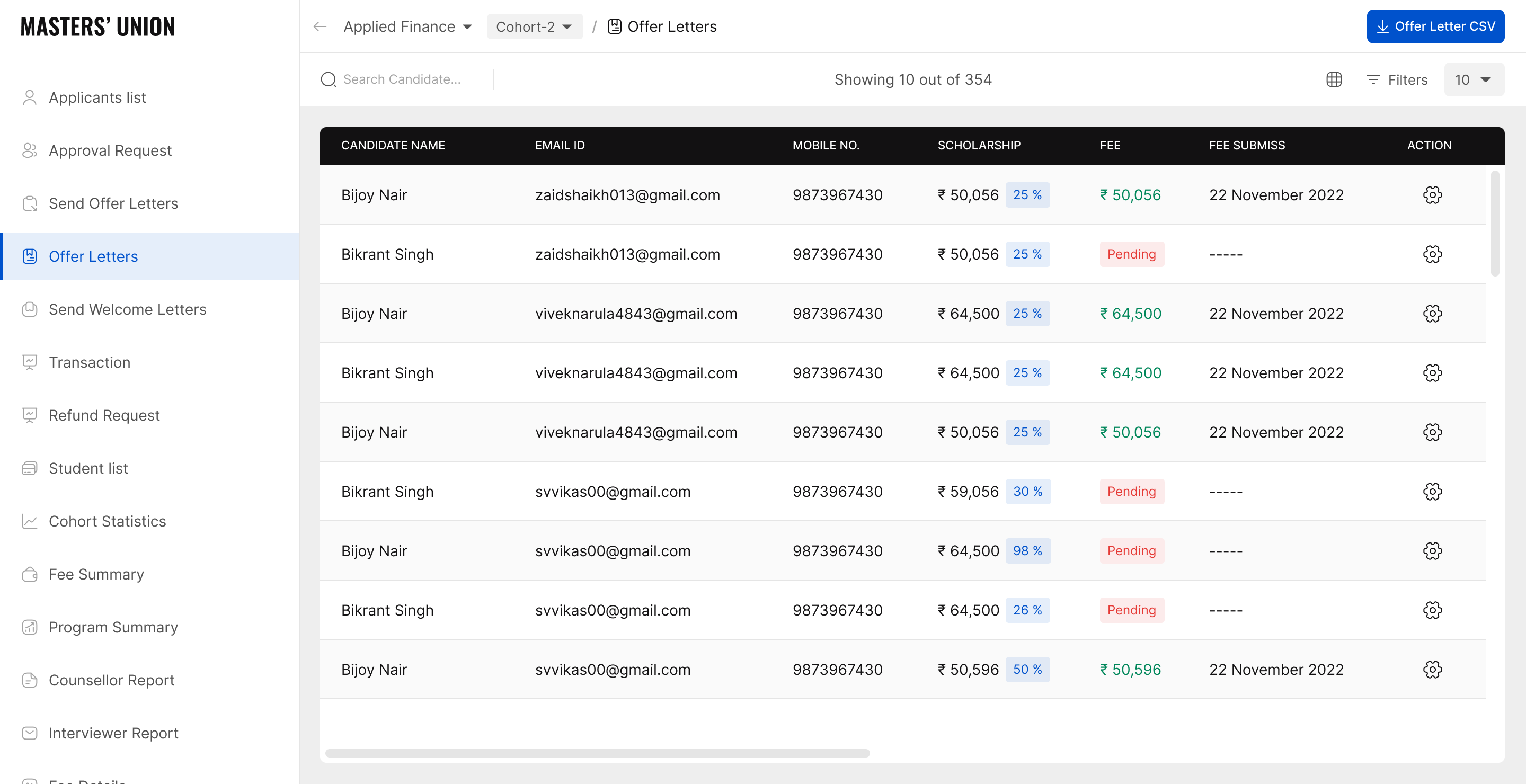

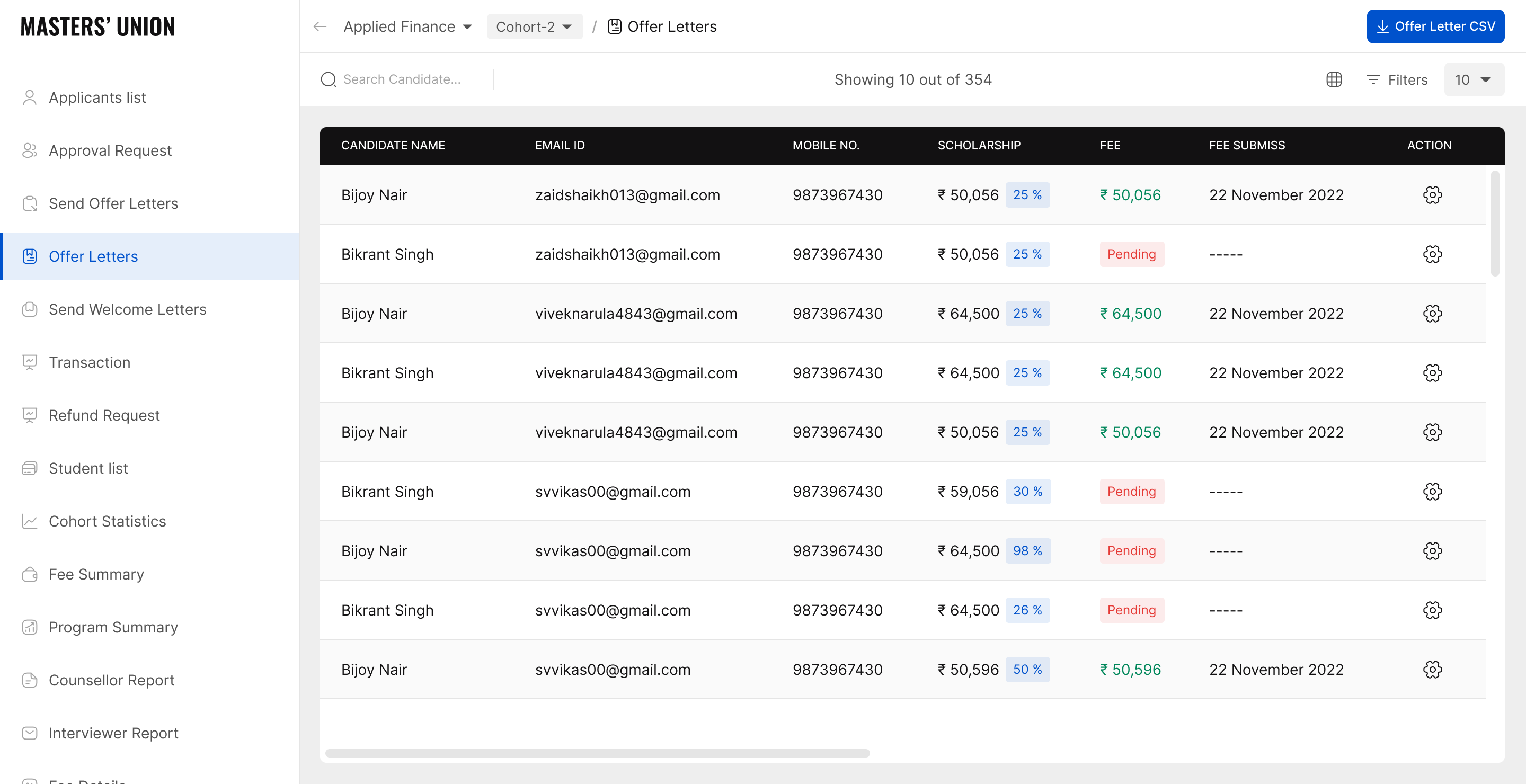

Due to company confidentiality policy, only a representative selection of UI screens from the Dinero platform can be shared publicly in this case study. The screens shown illustrate the core flows documented in this research. The full screen set is available for review in a confidential setting upon request.

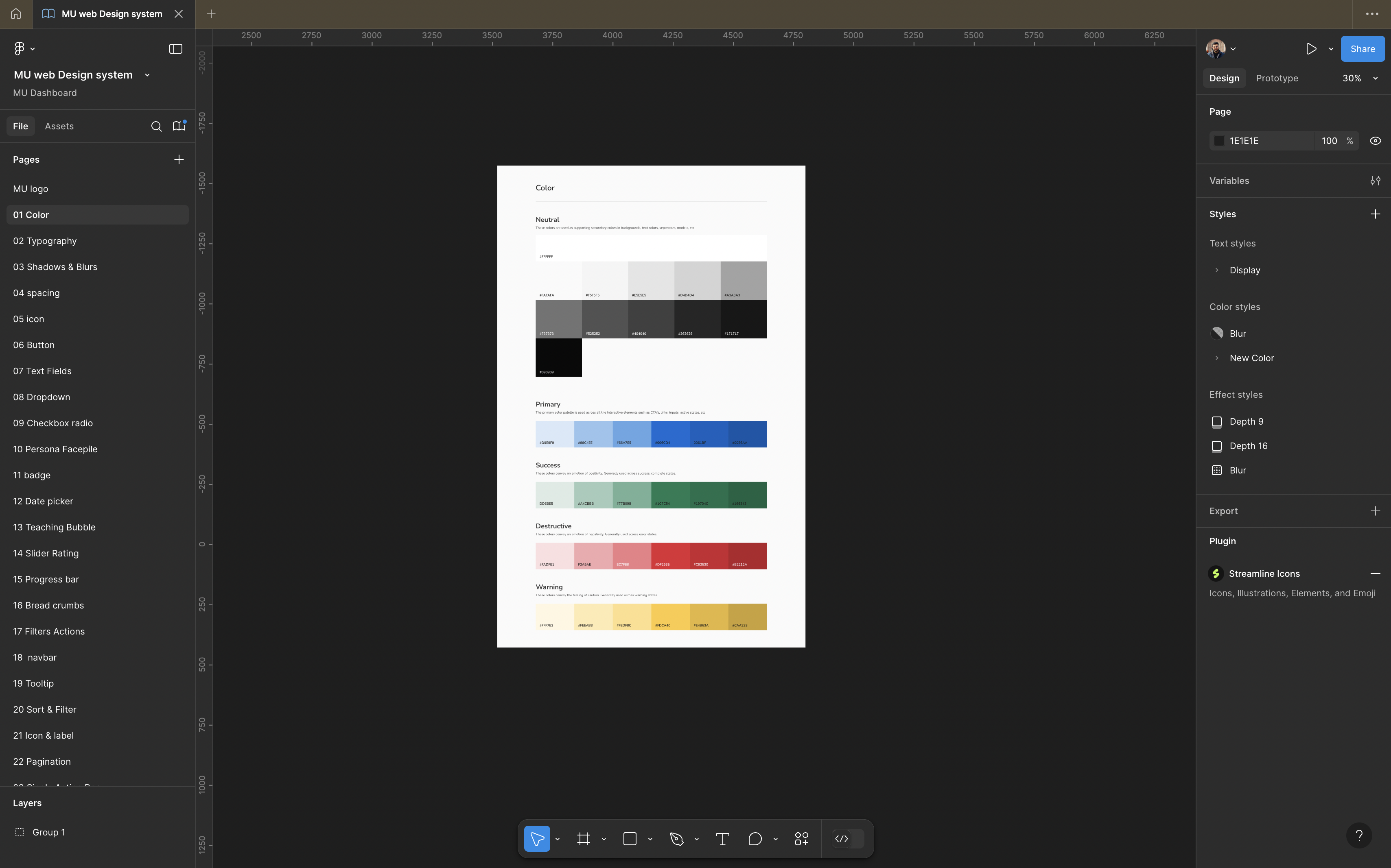

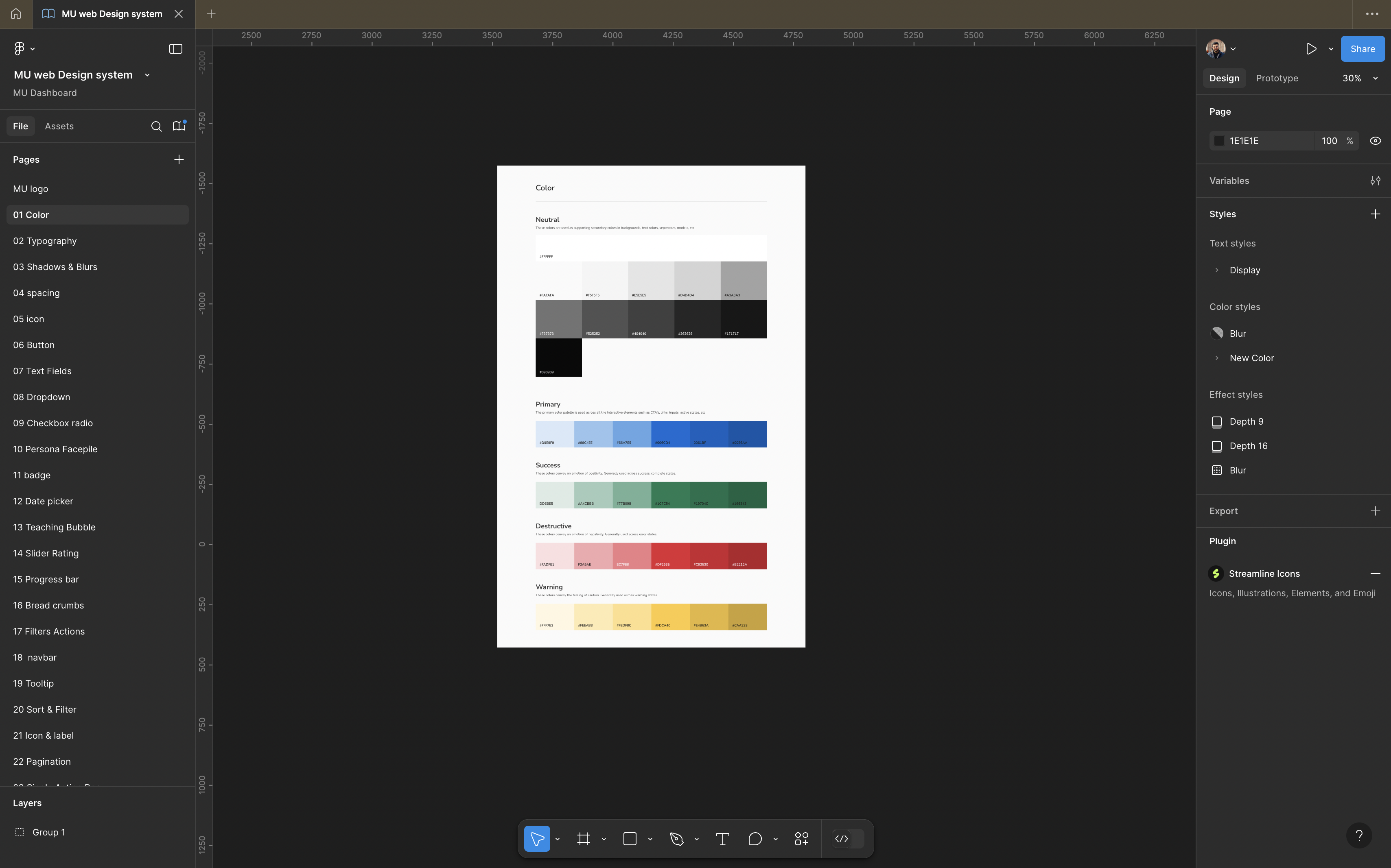

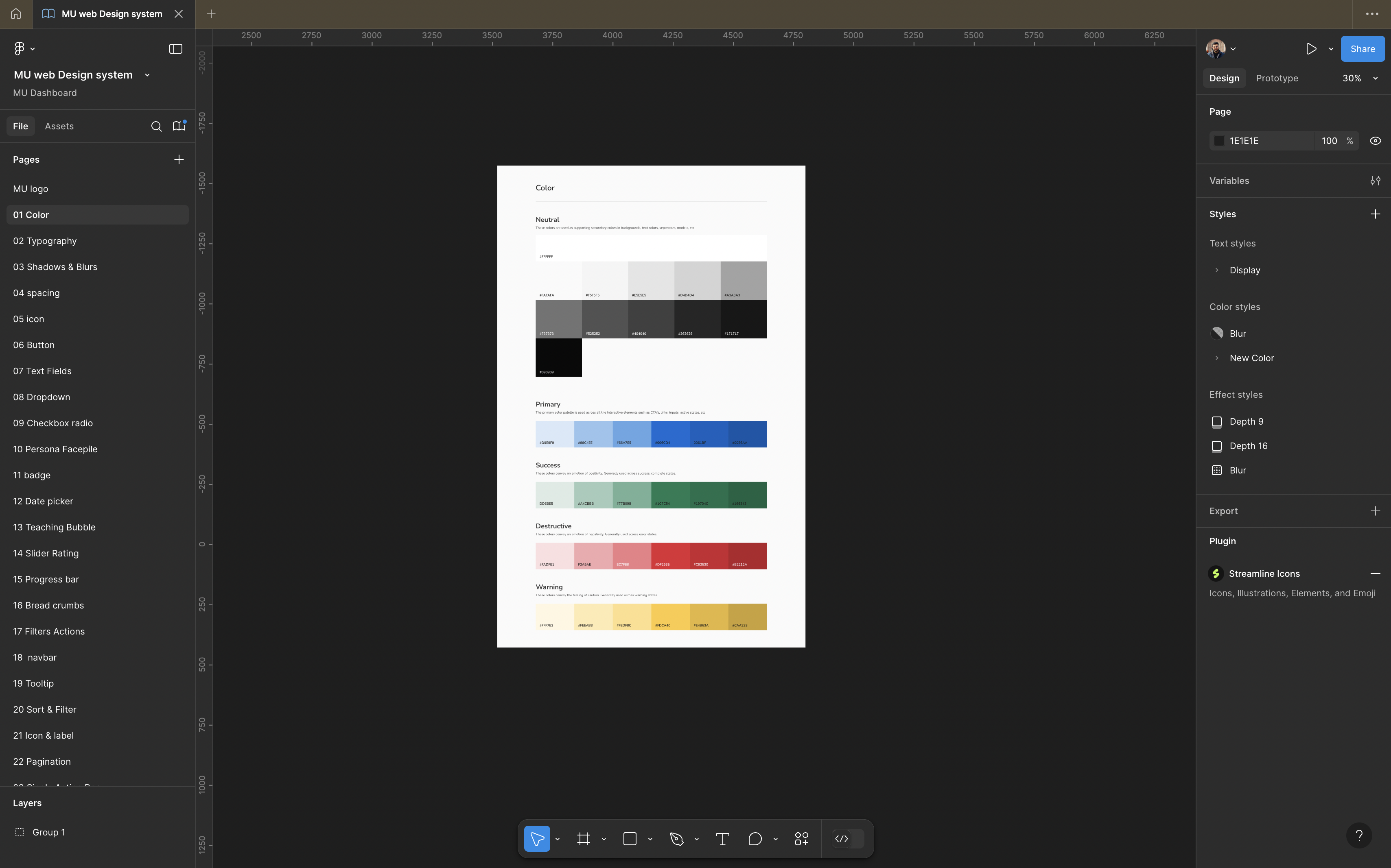

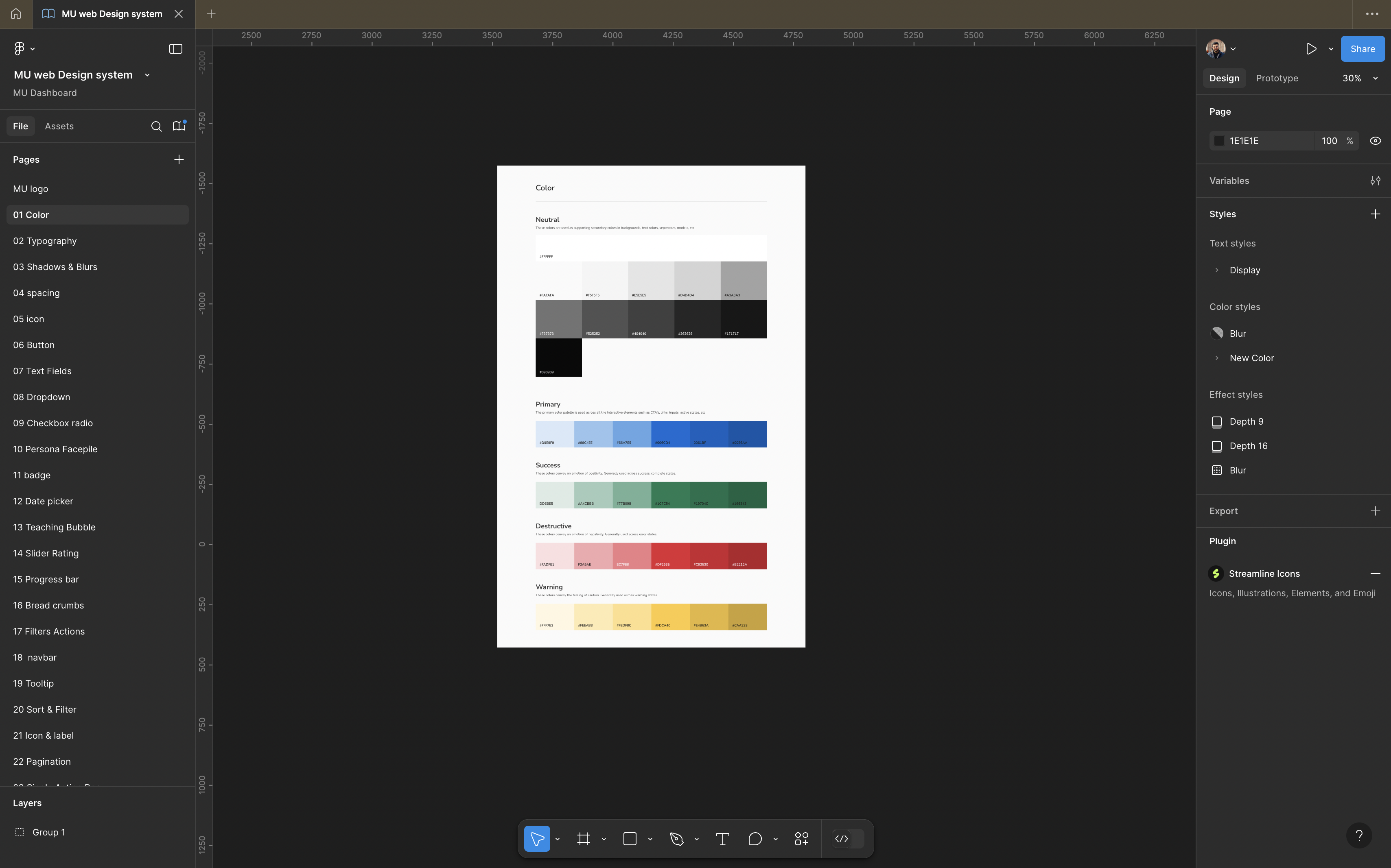

- 30 components built with Atomic Design: atoms -> molecules -> organisms

- Token-based: colour, spacing and typography fully tokenized for consistency across 3 dashboards

- Figma Dev Mode handoff to 8 frontend engineers, with zero post-handoff design questions

- Component library: buttons, inputs, cards, pipeline chips, status badges, modals, tables, toast notifications

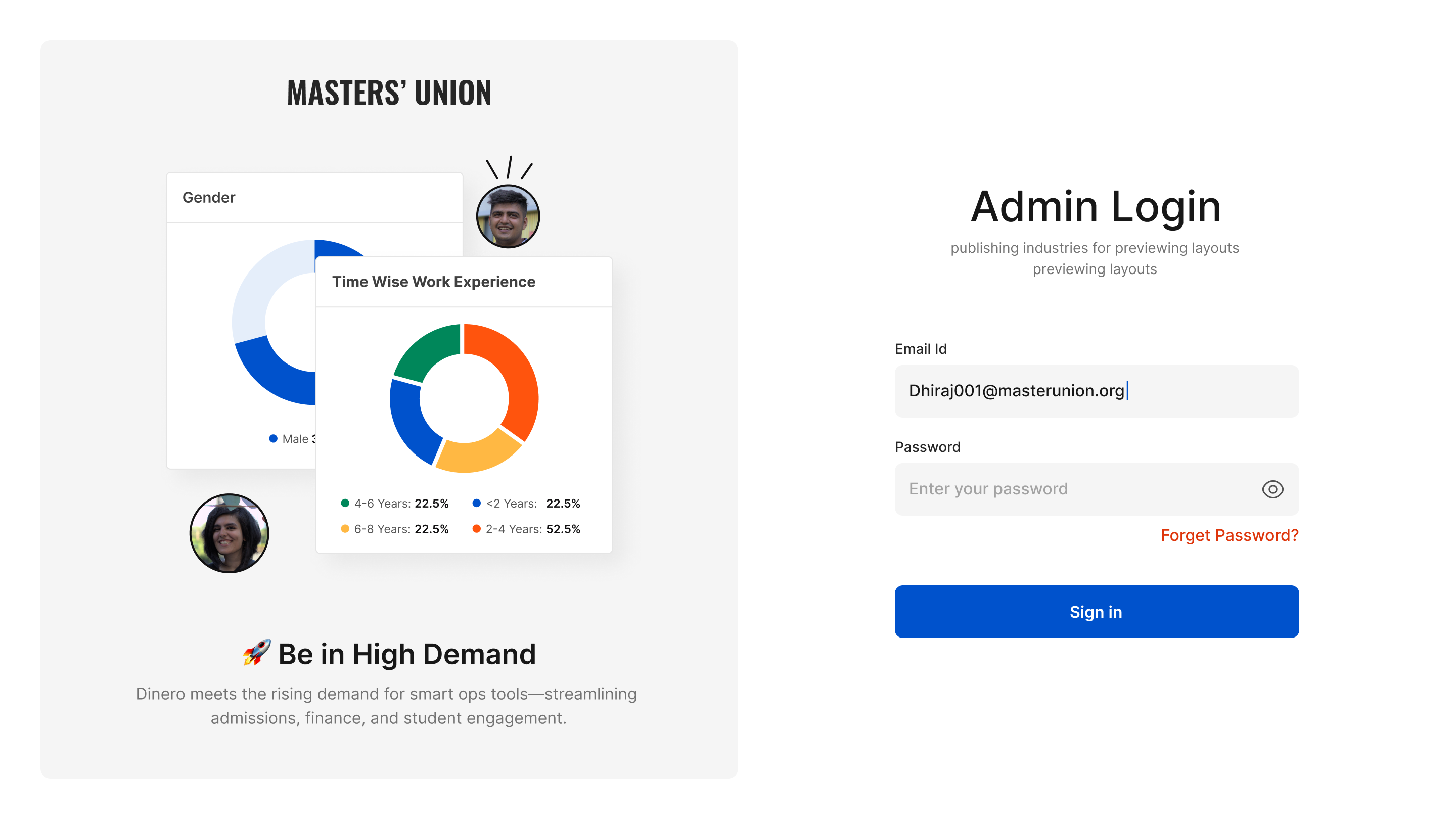

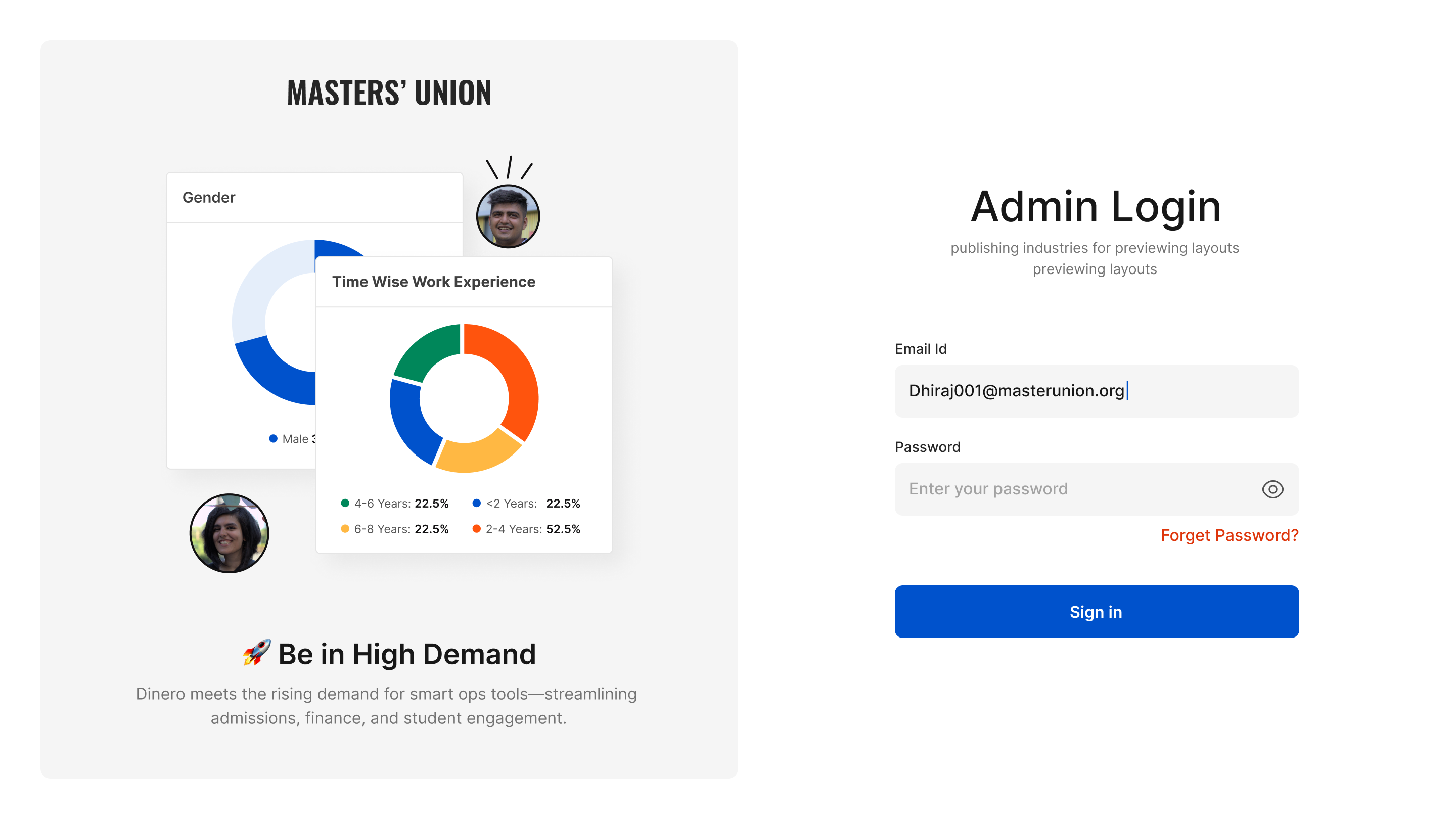

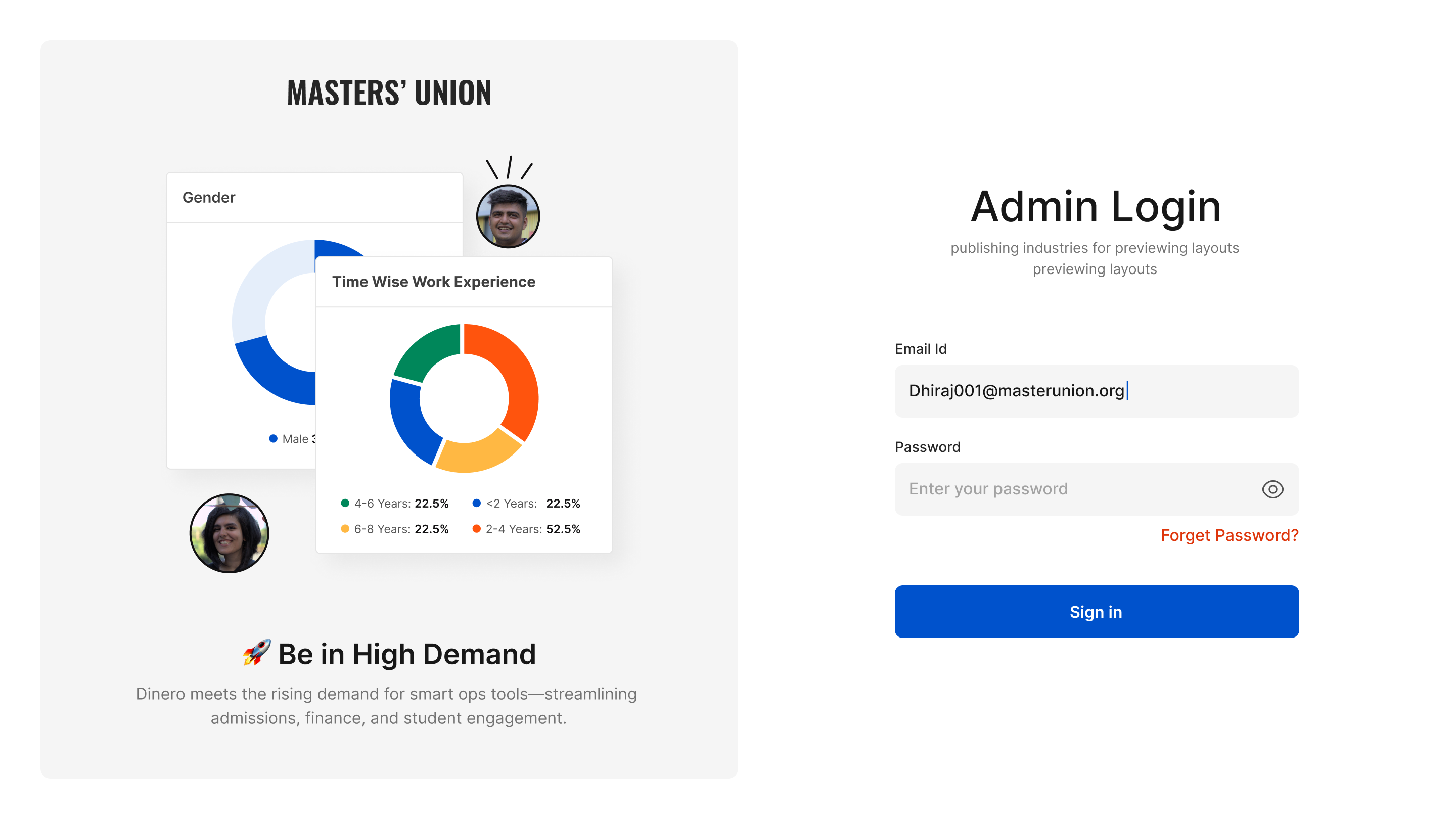

Admin Login: Role-based entry point. Admins authenticate with their institutional email.

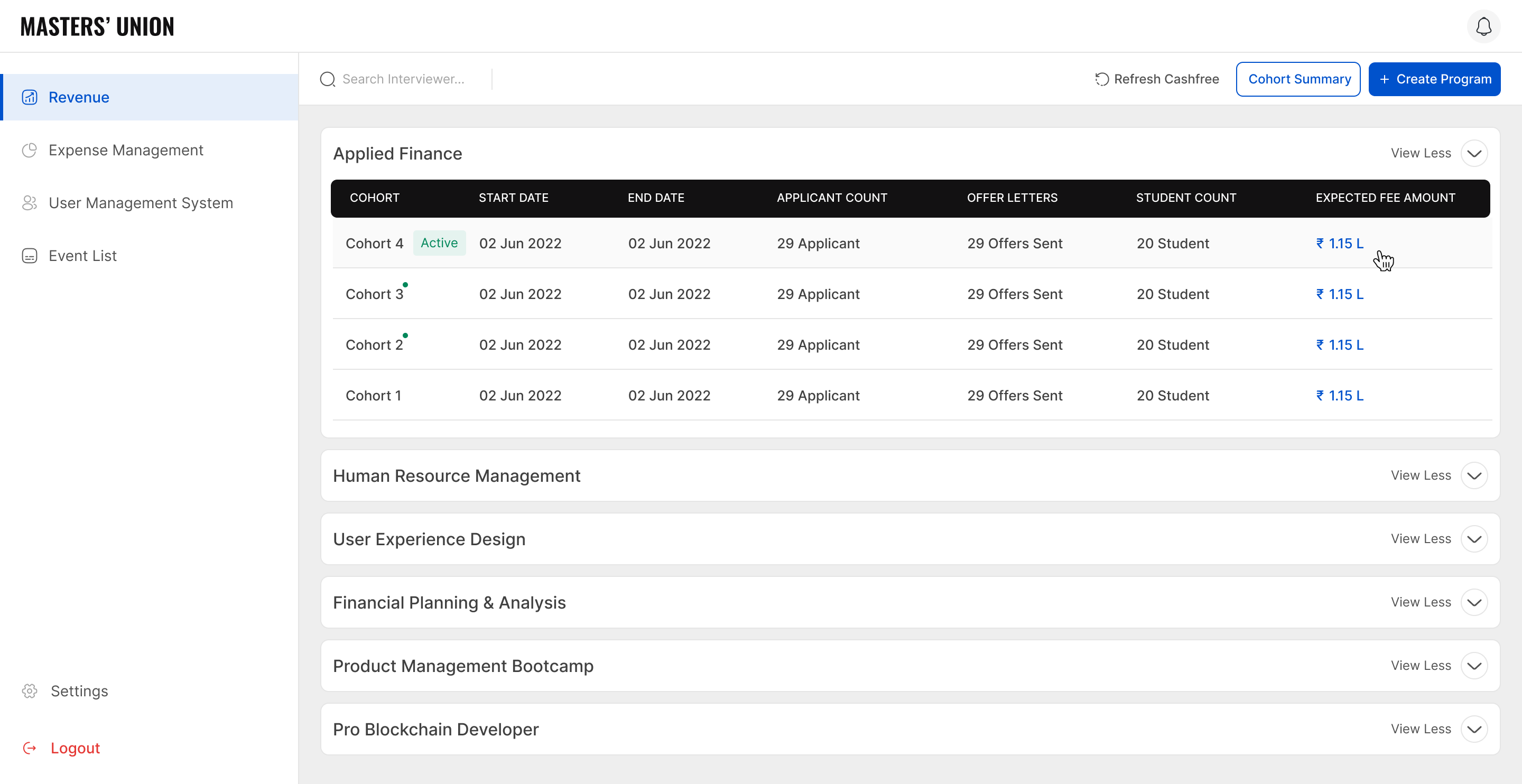

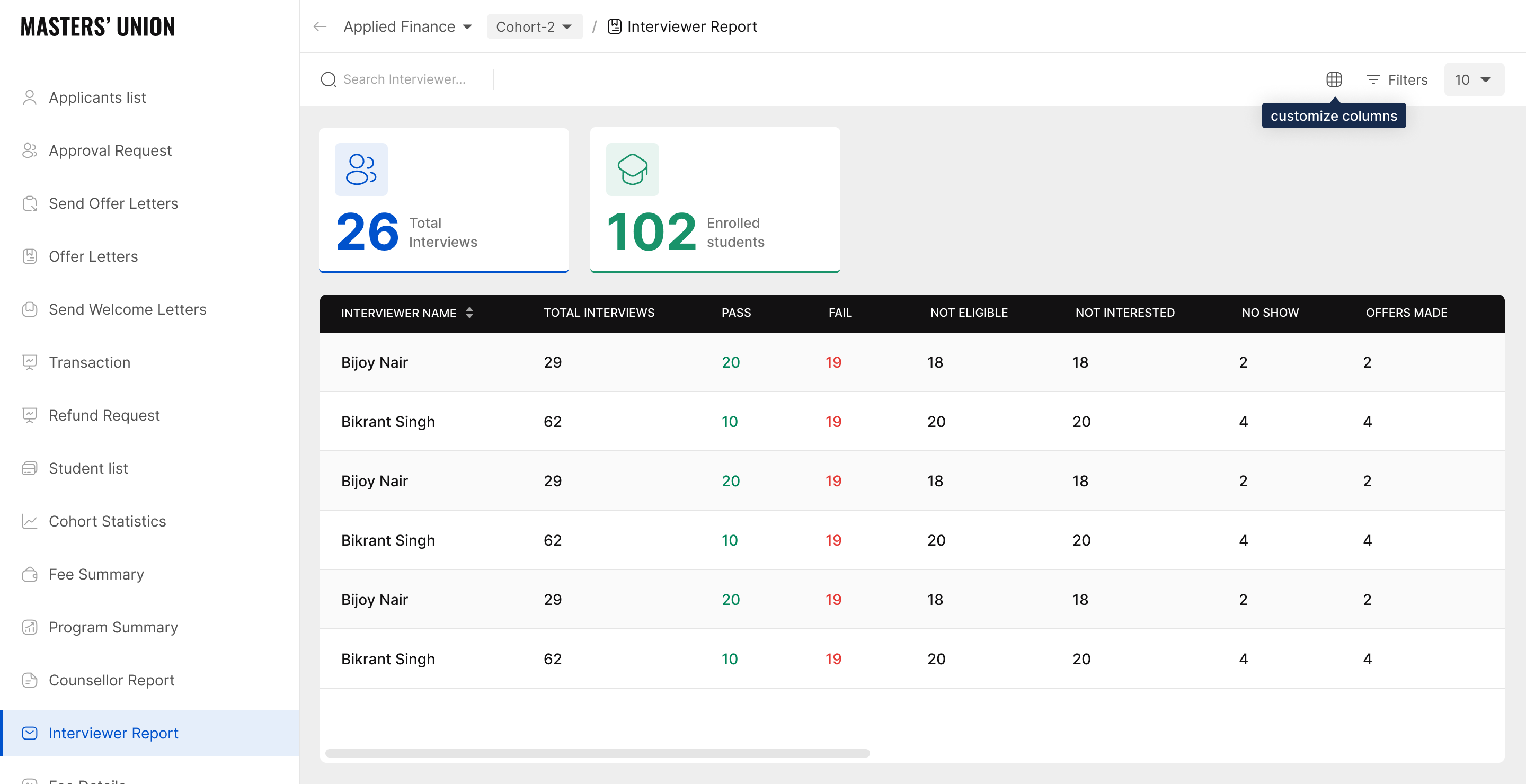

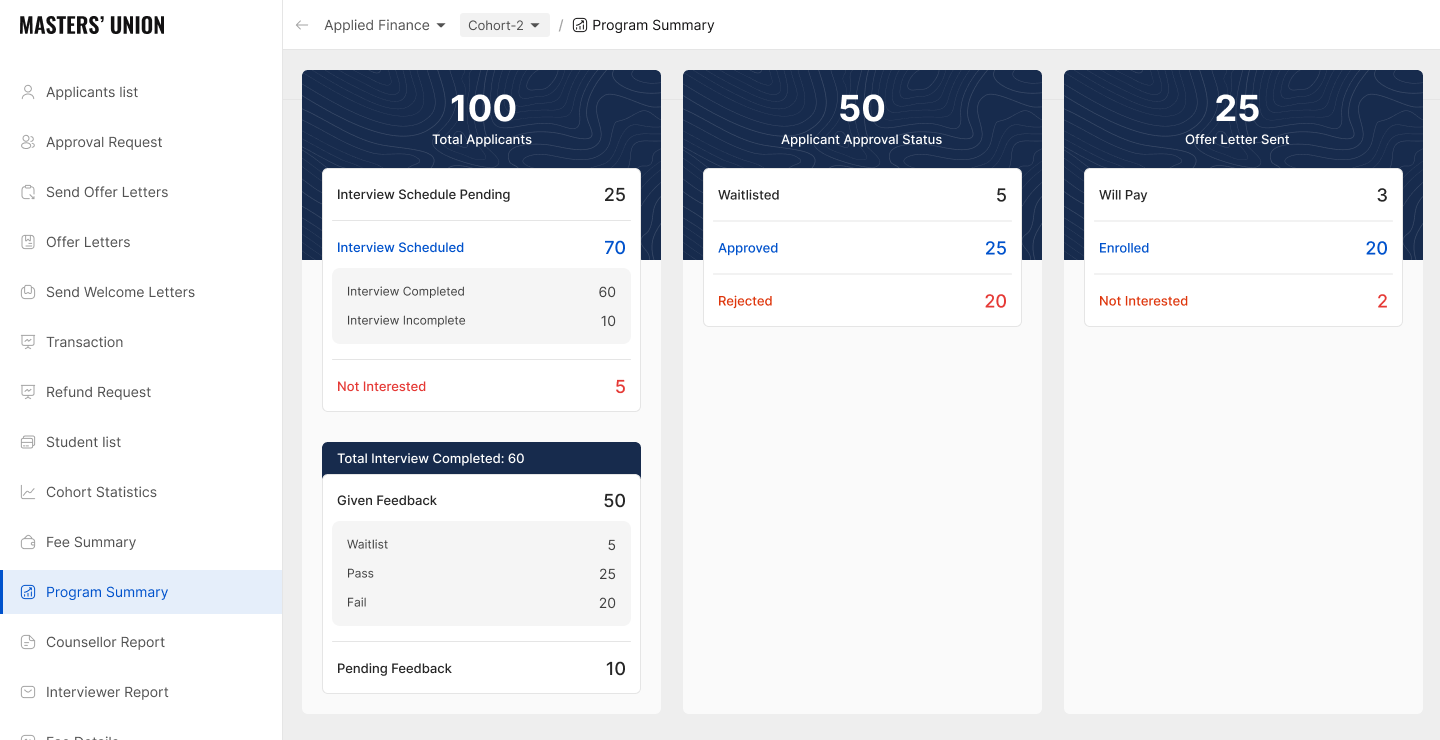

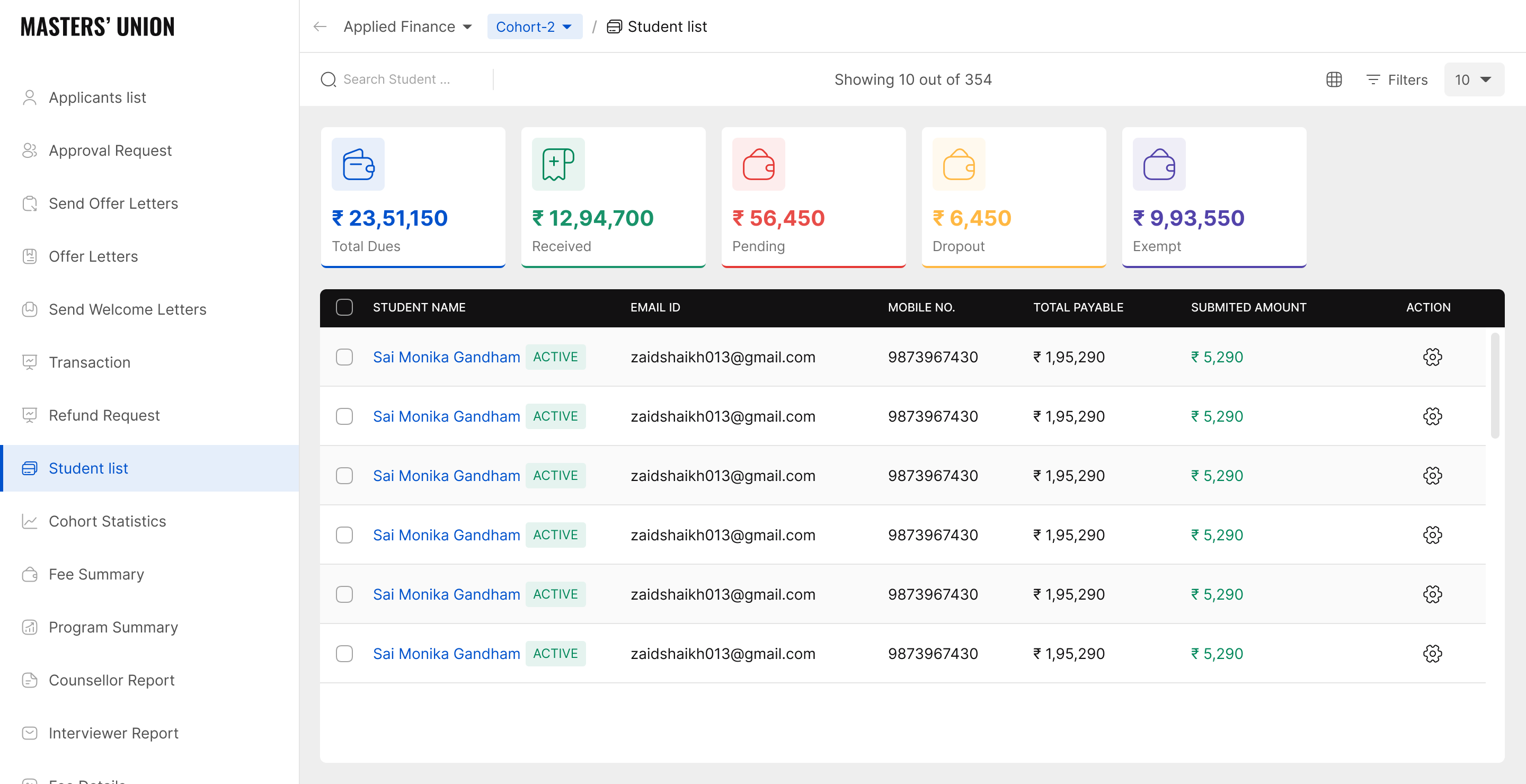

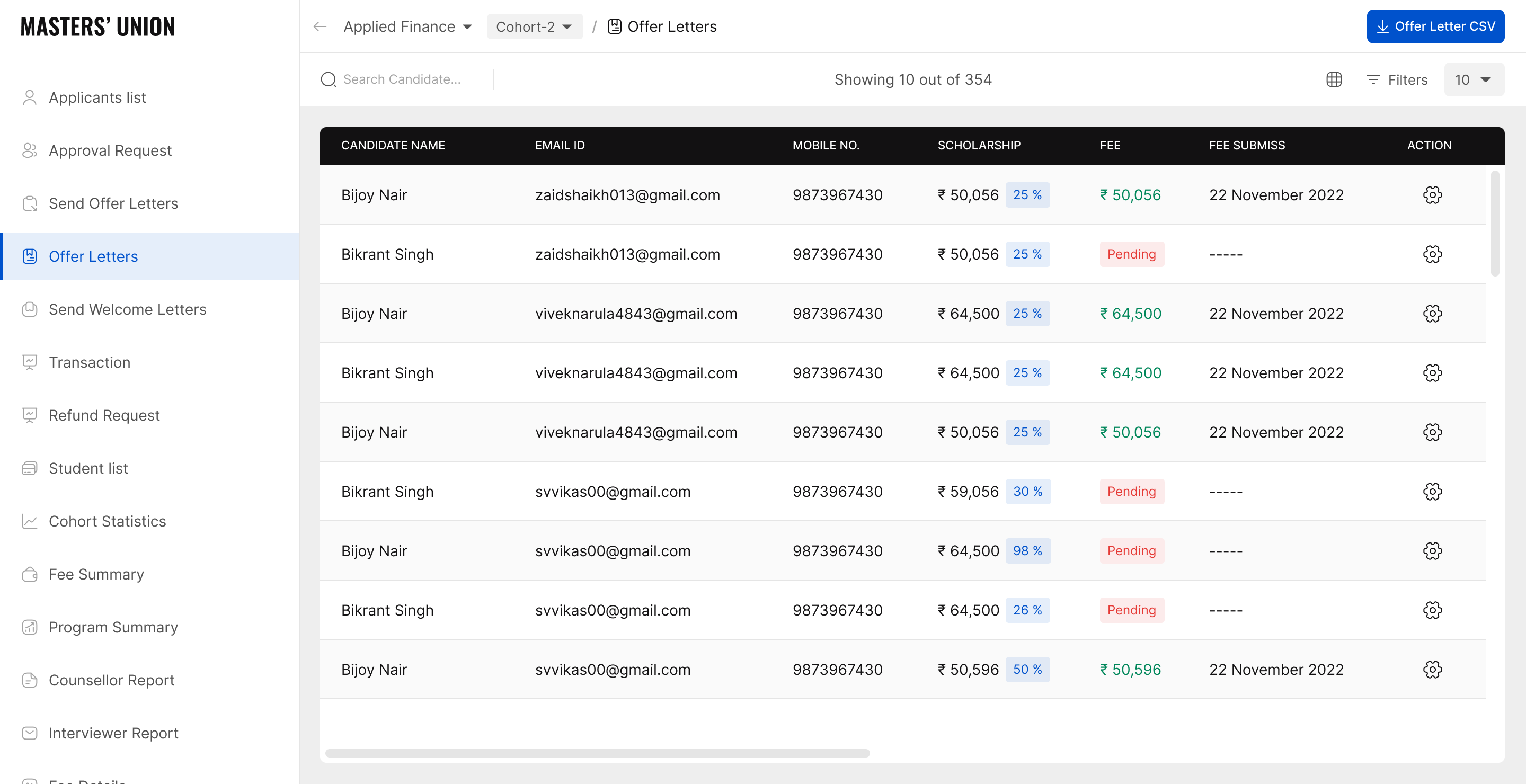

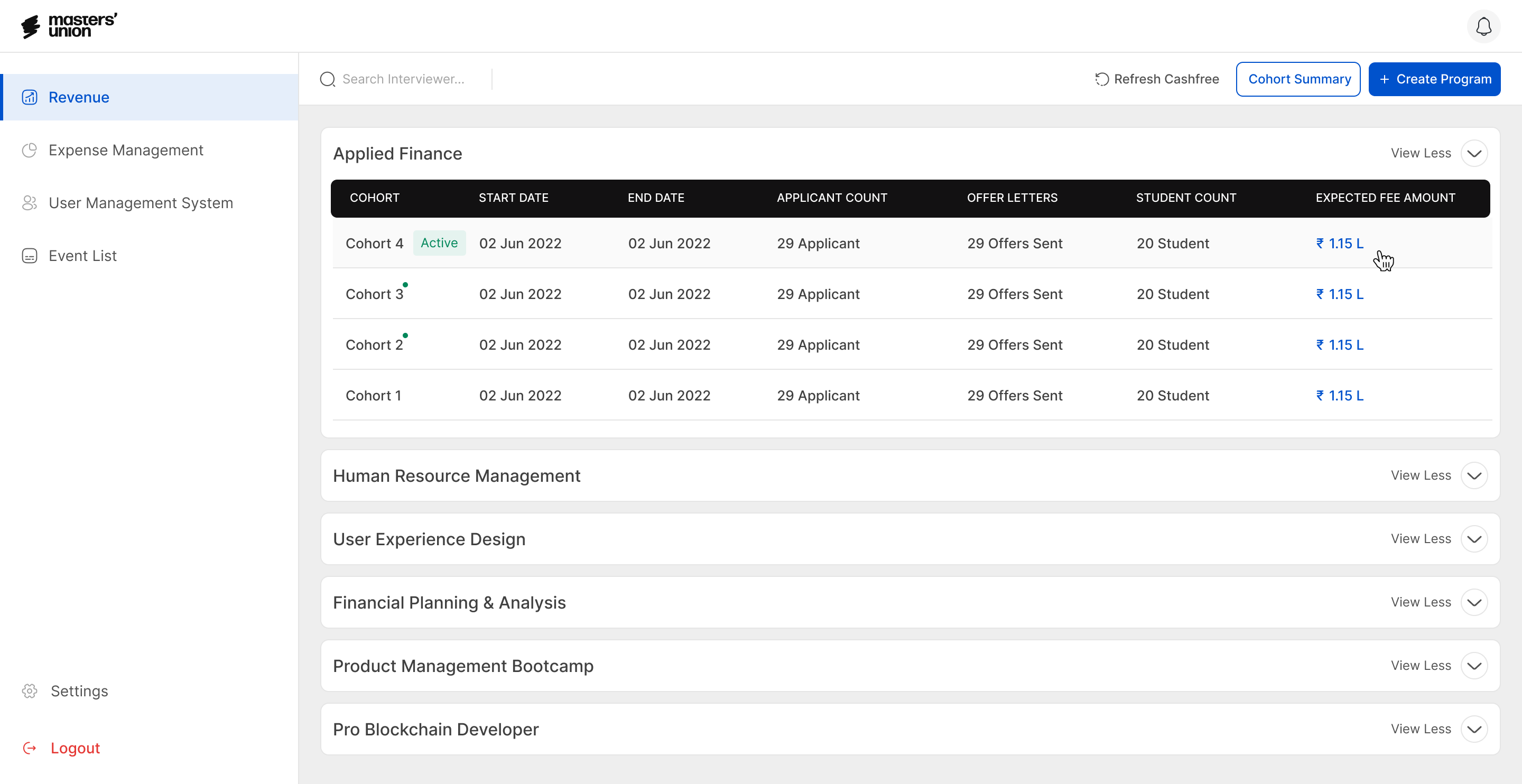

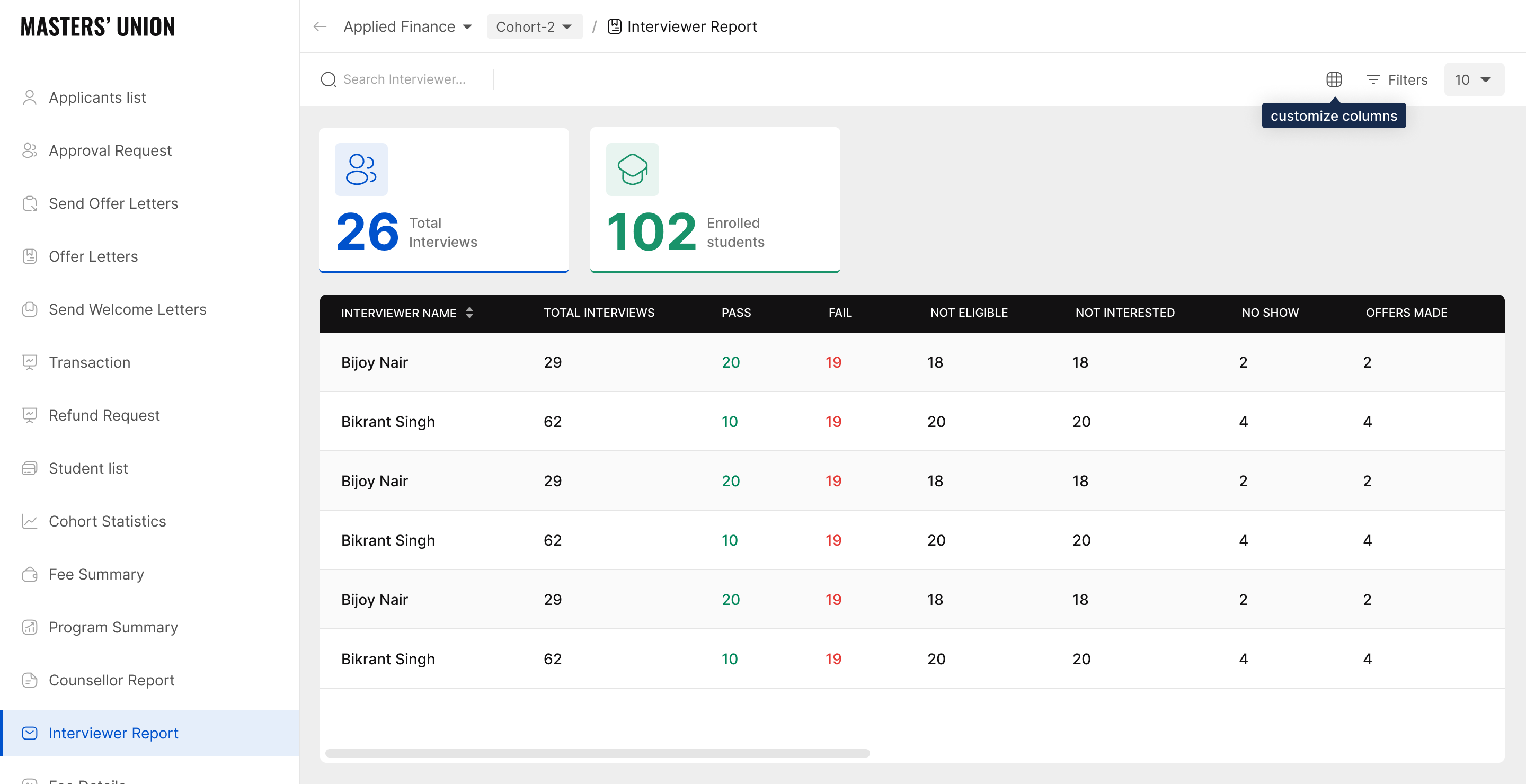

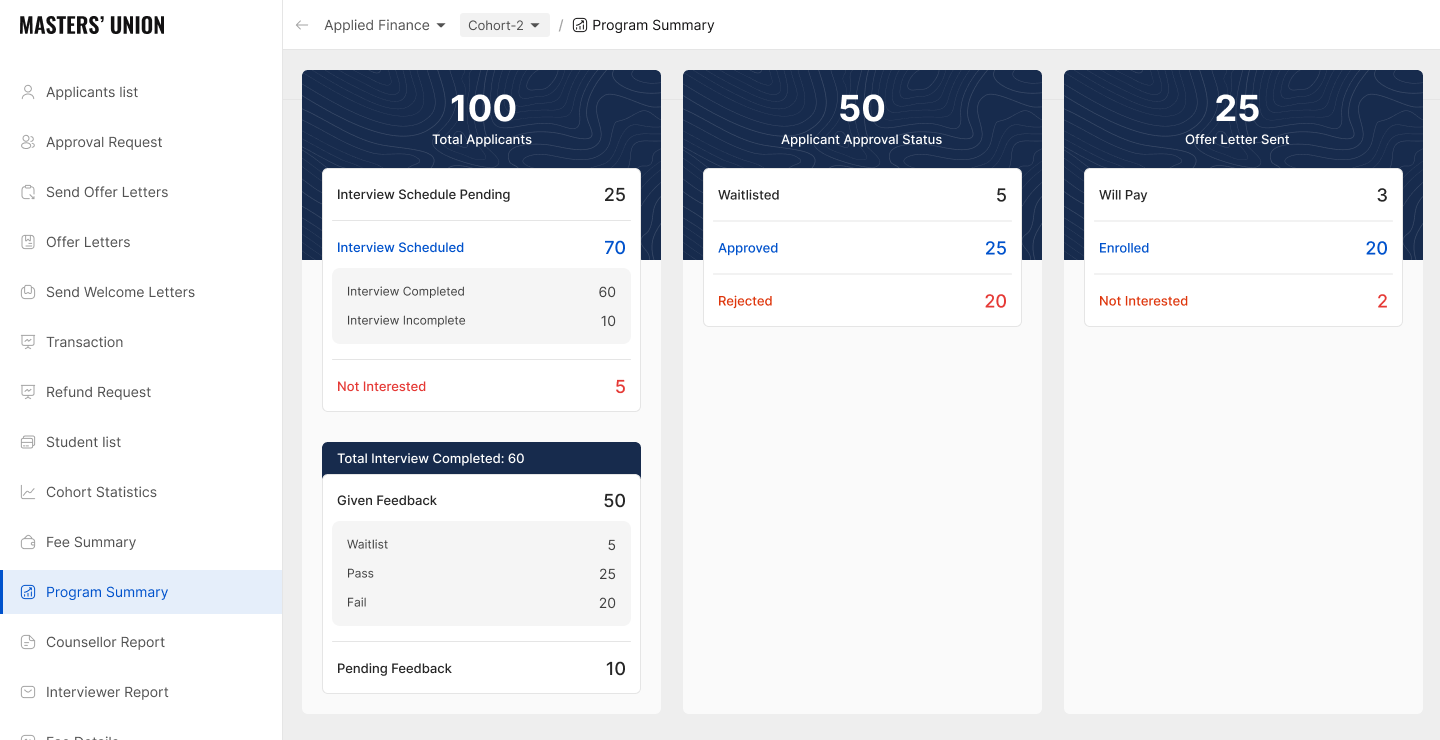

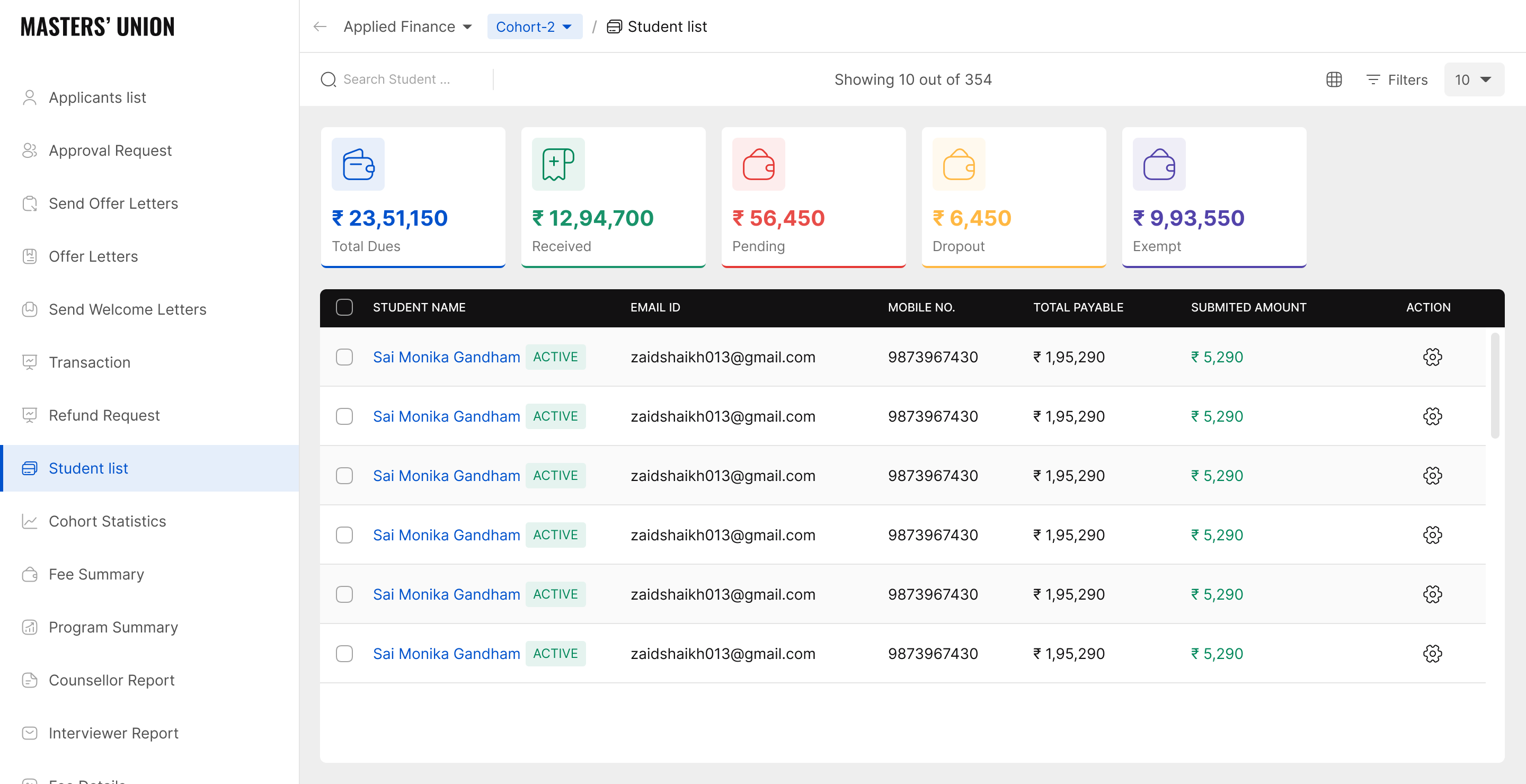

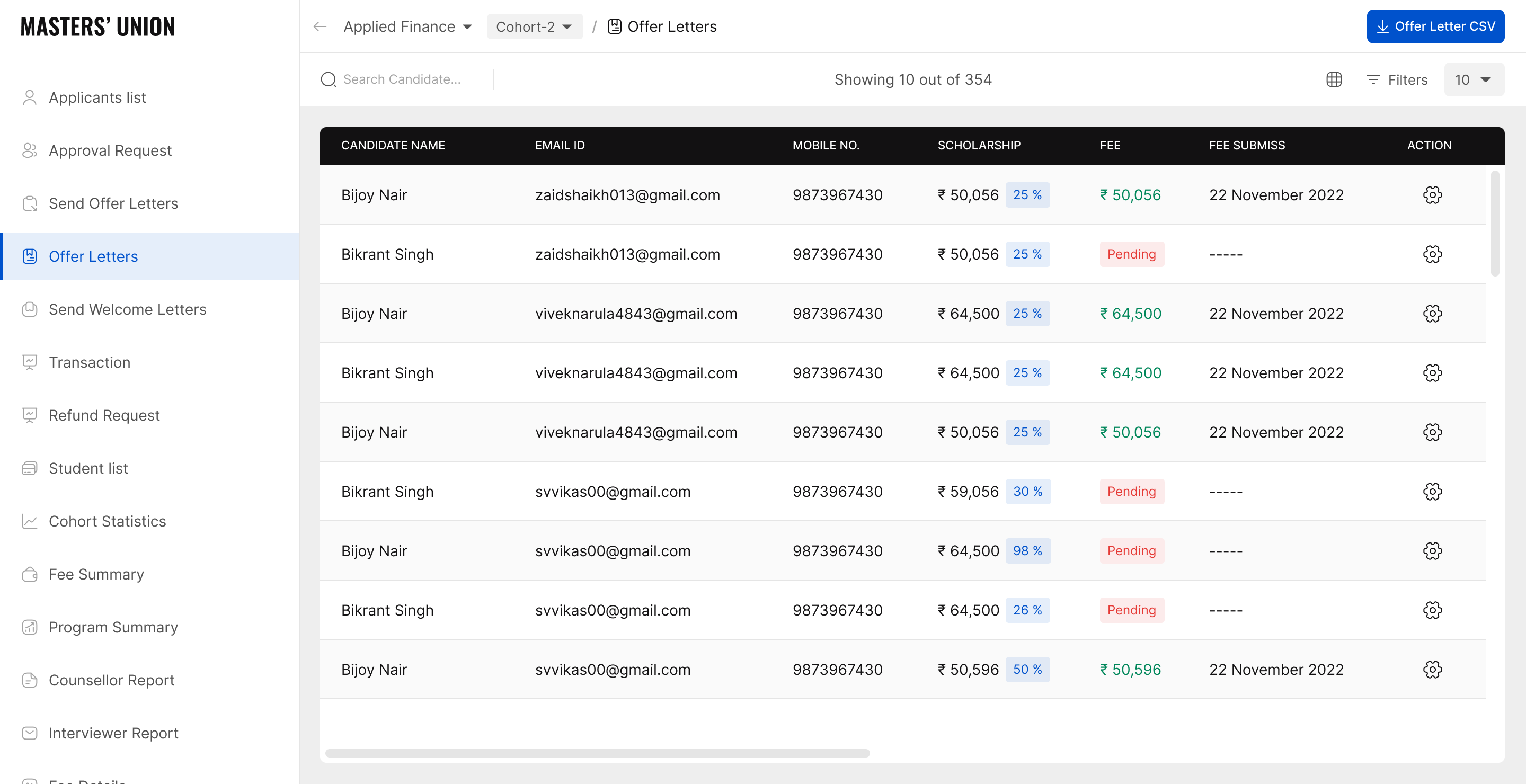

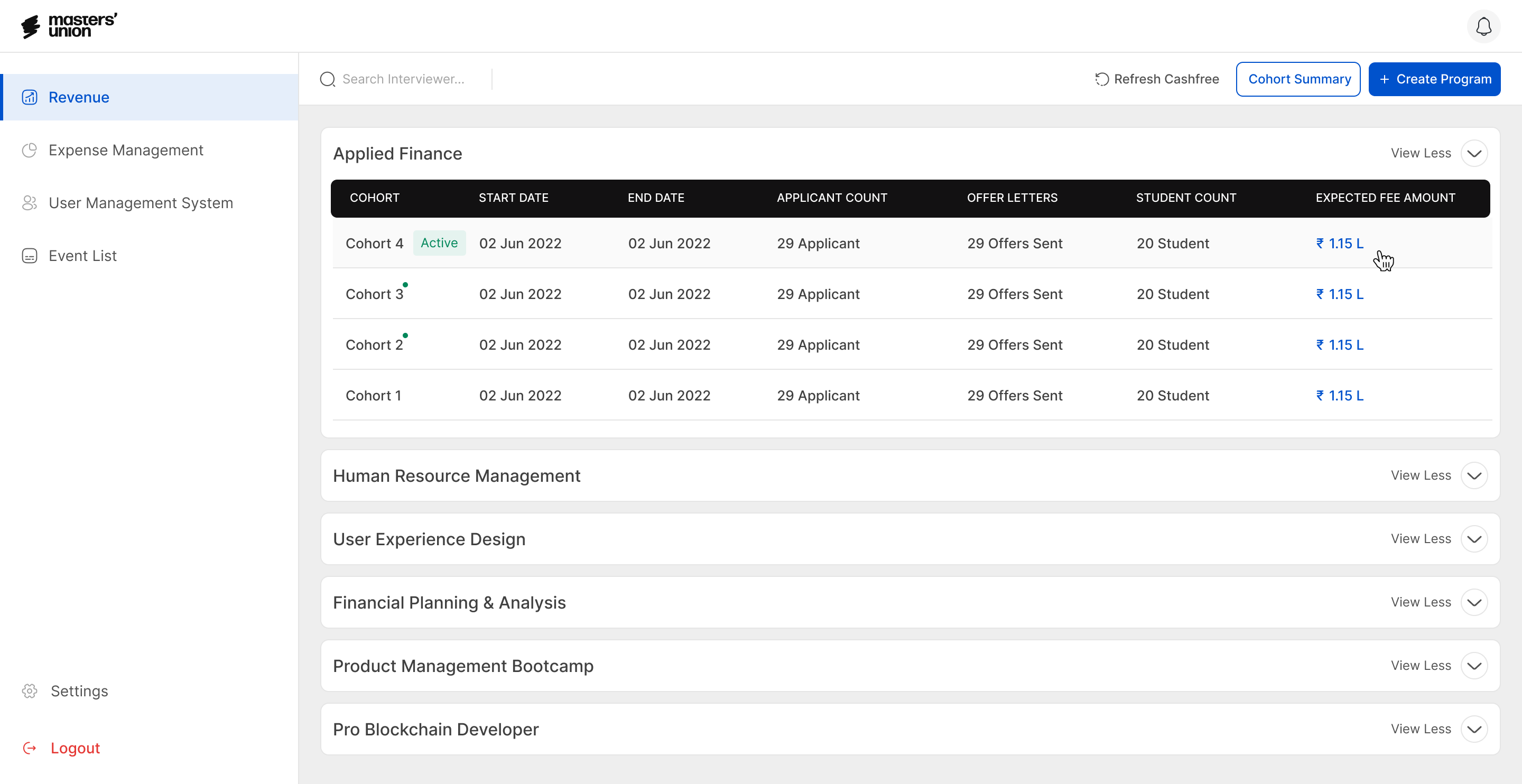

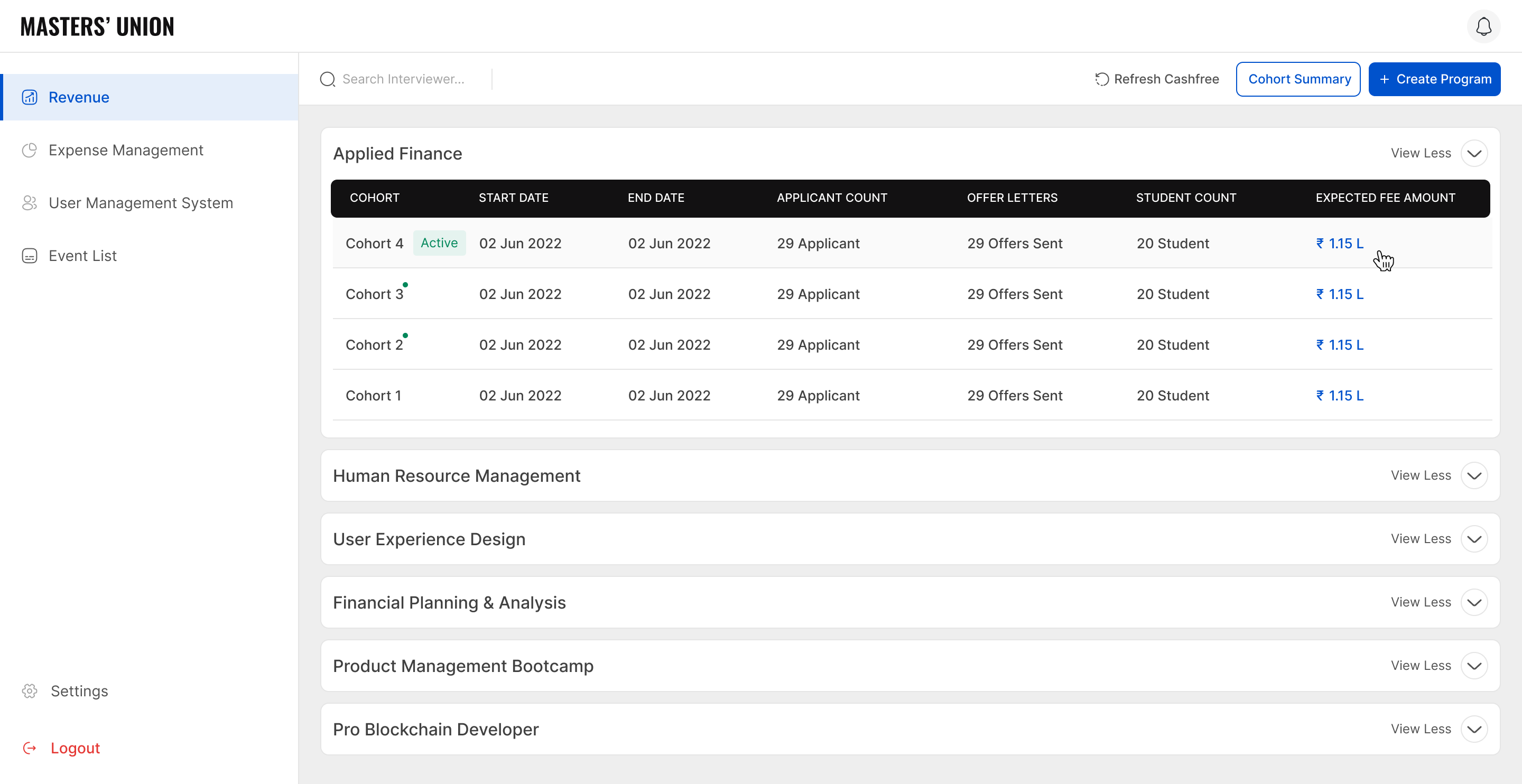

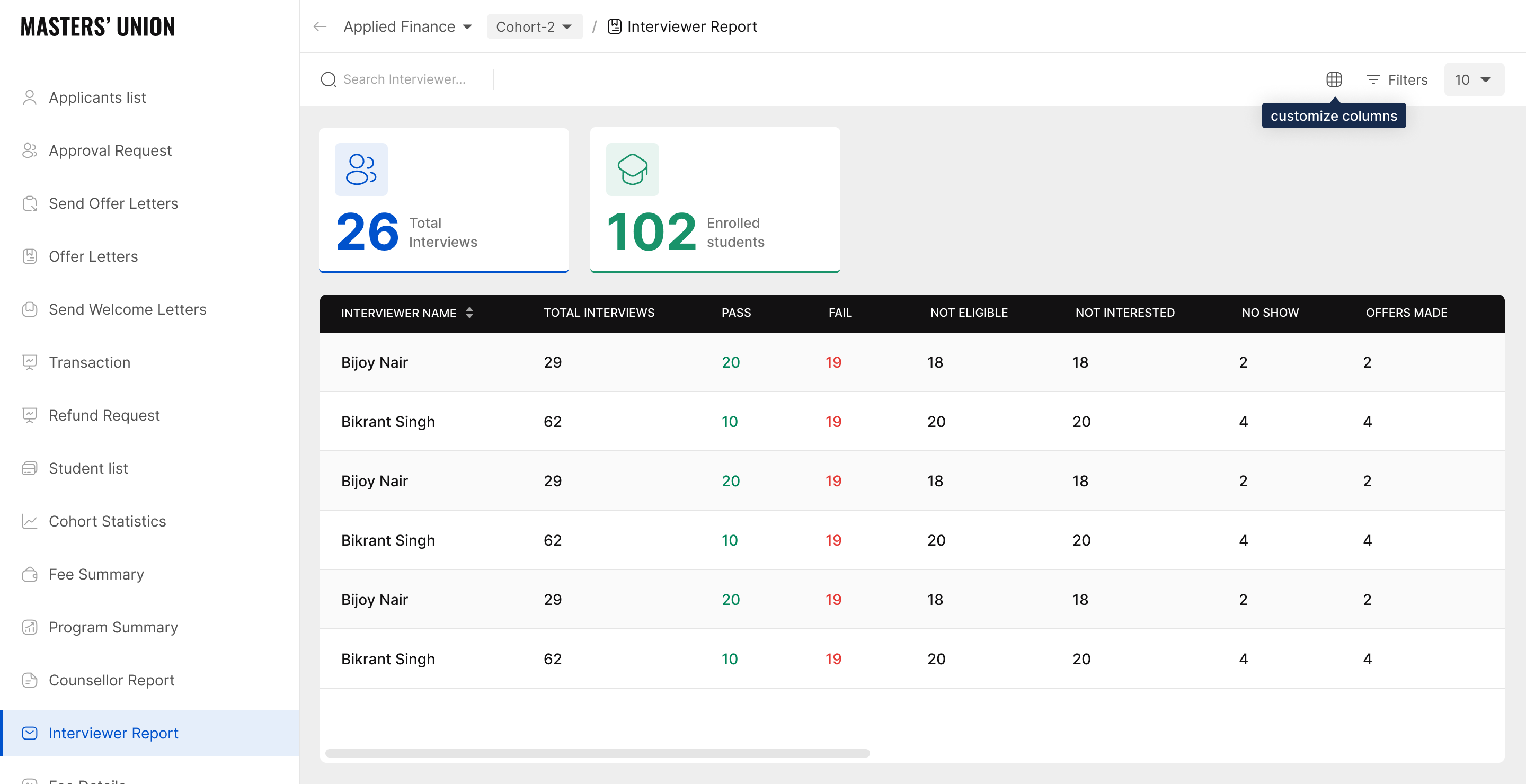

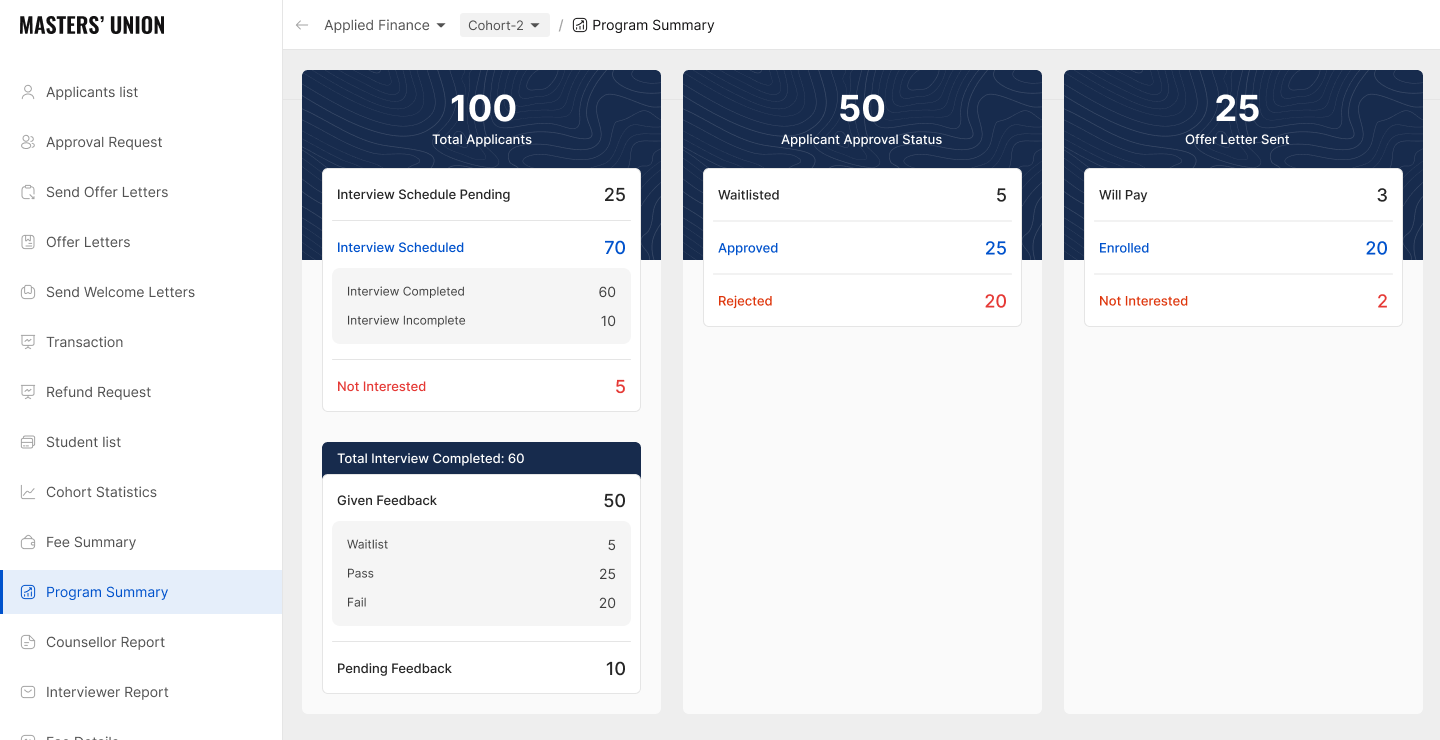

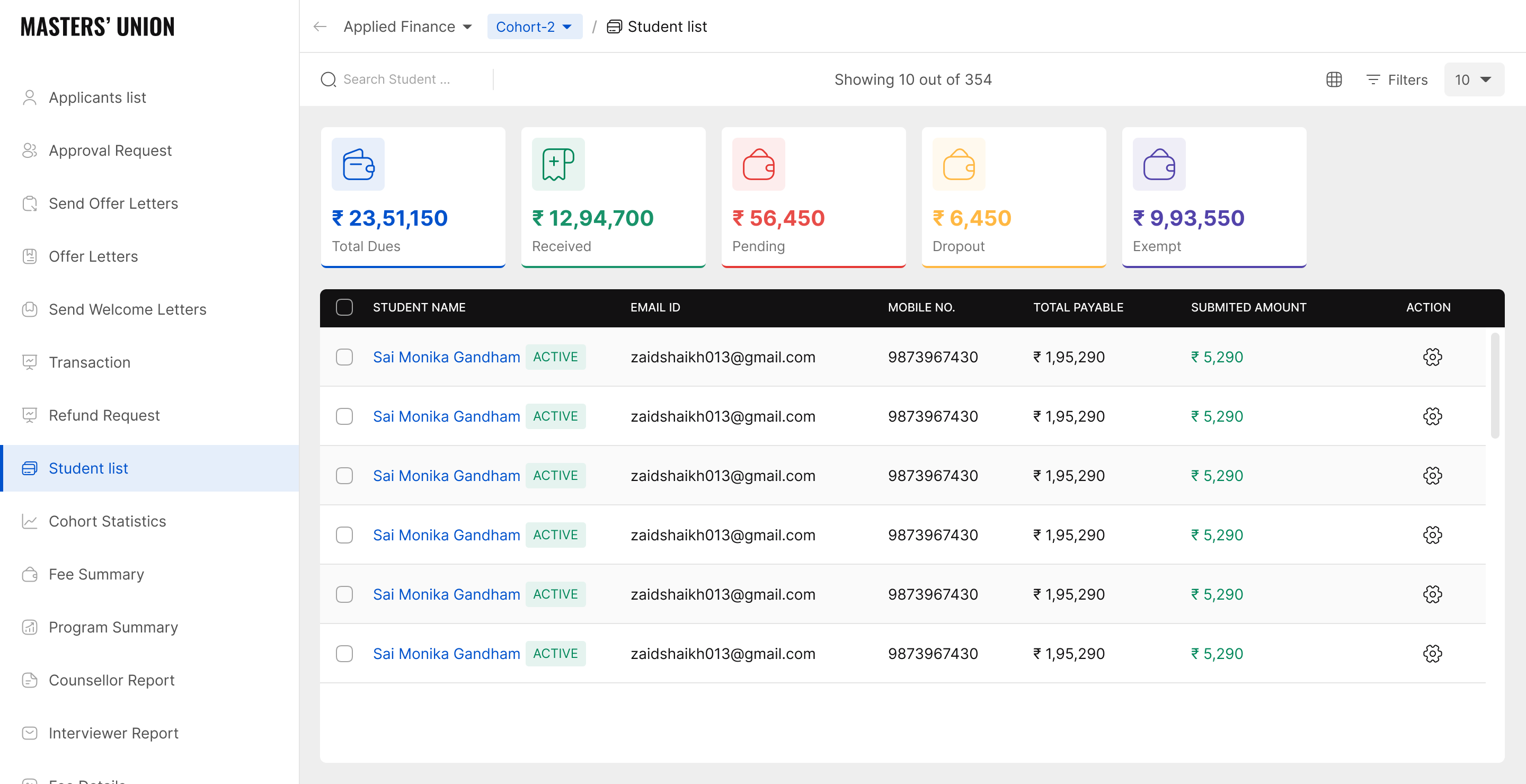

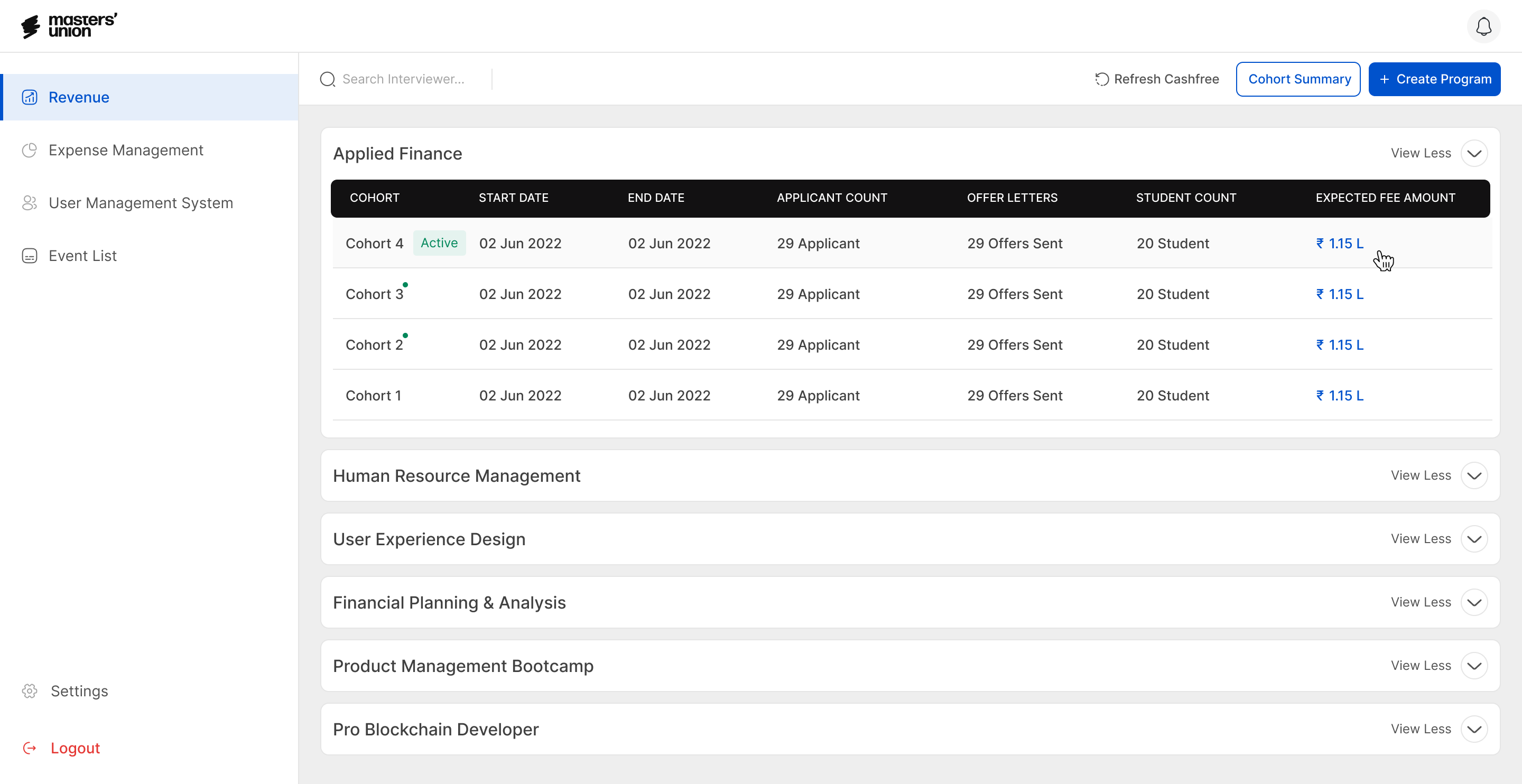

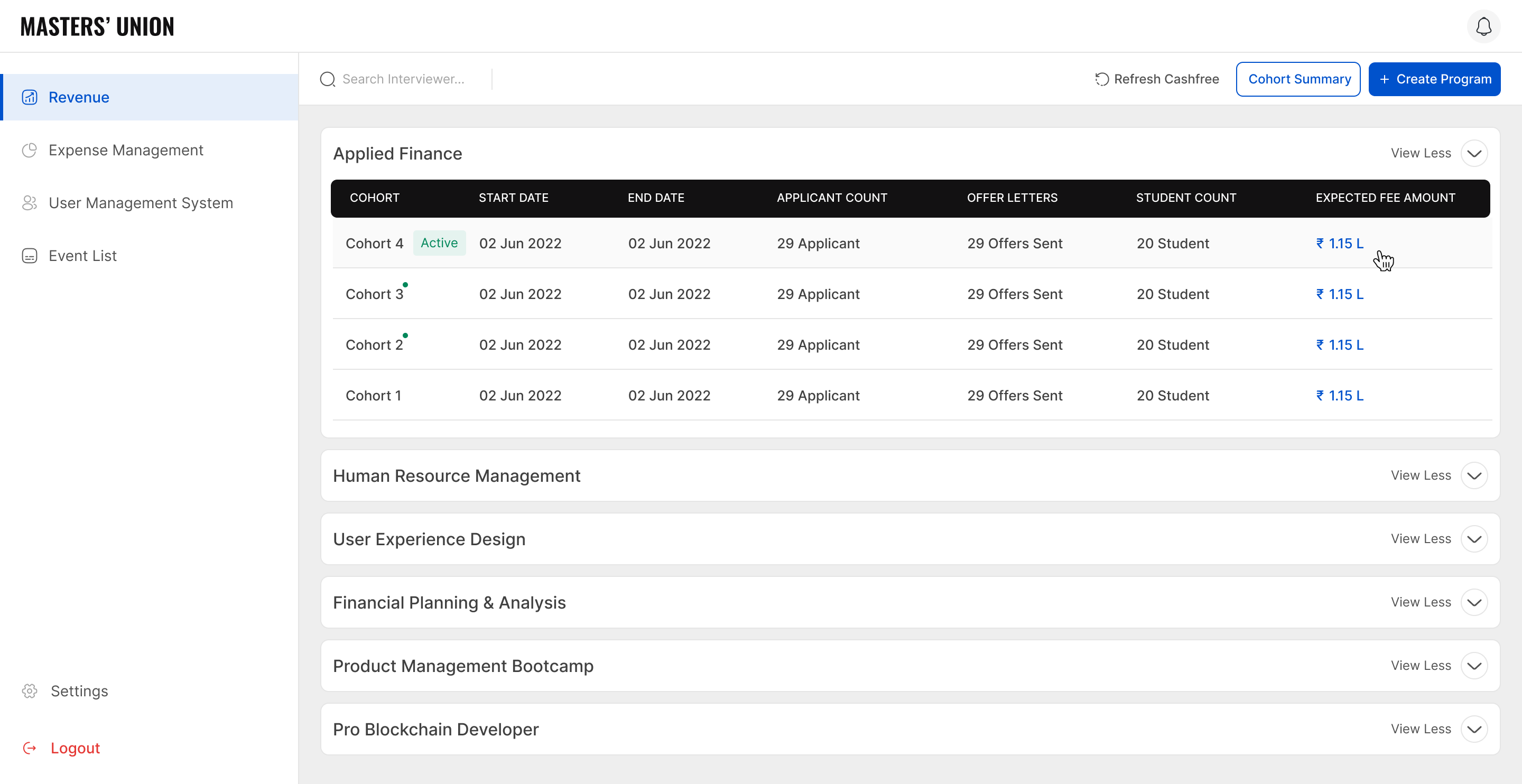

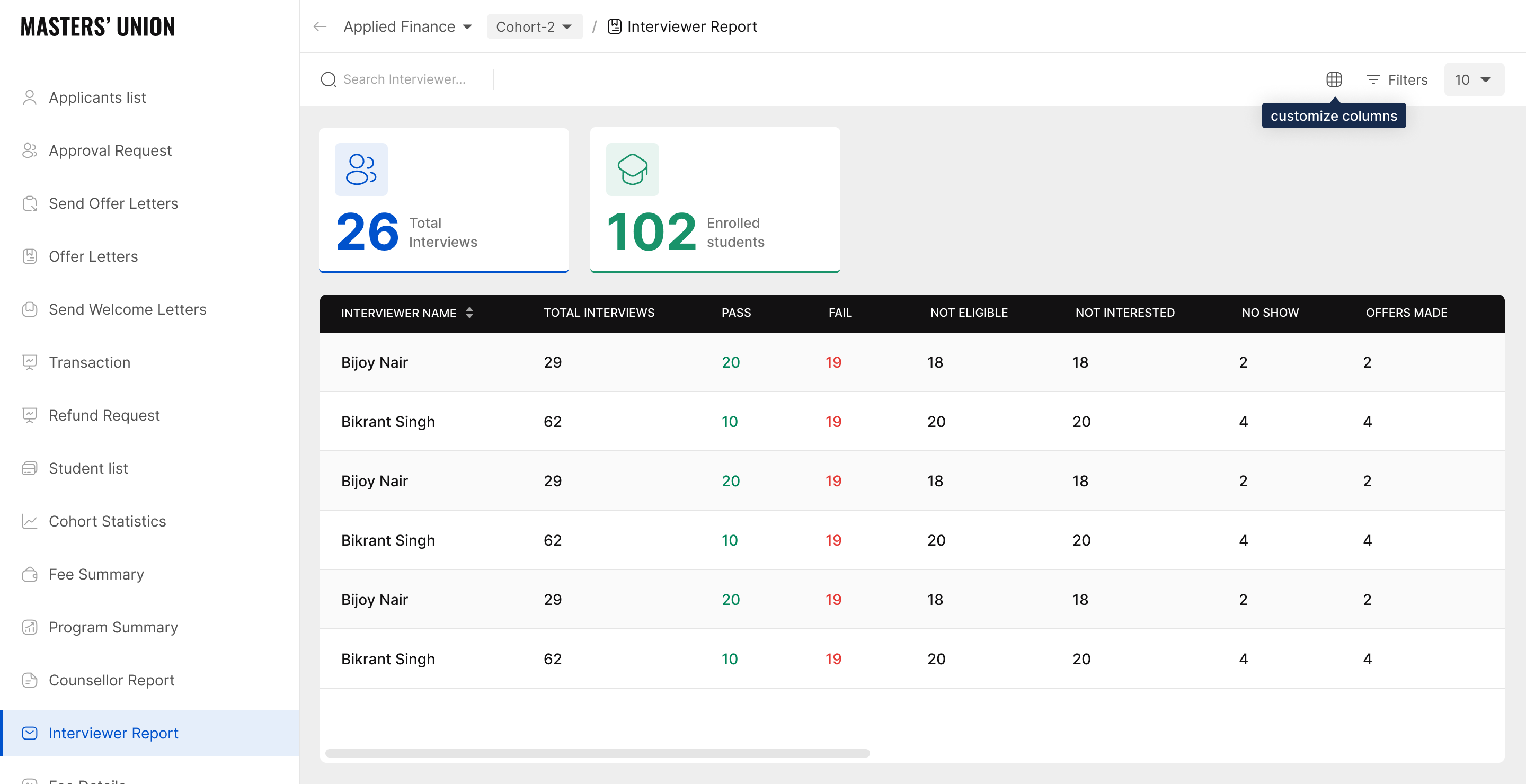

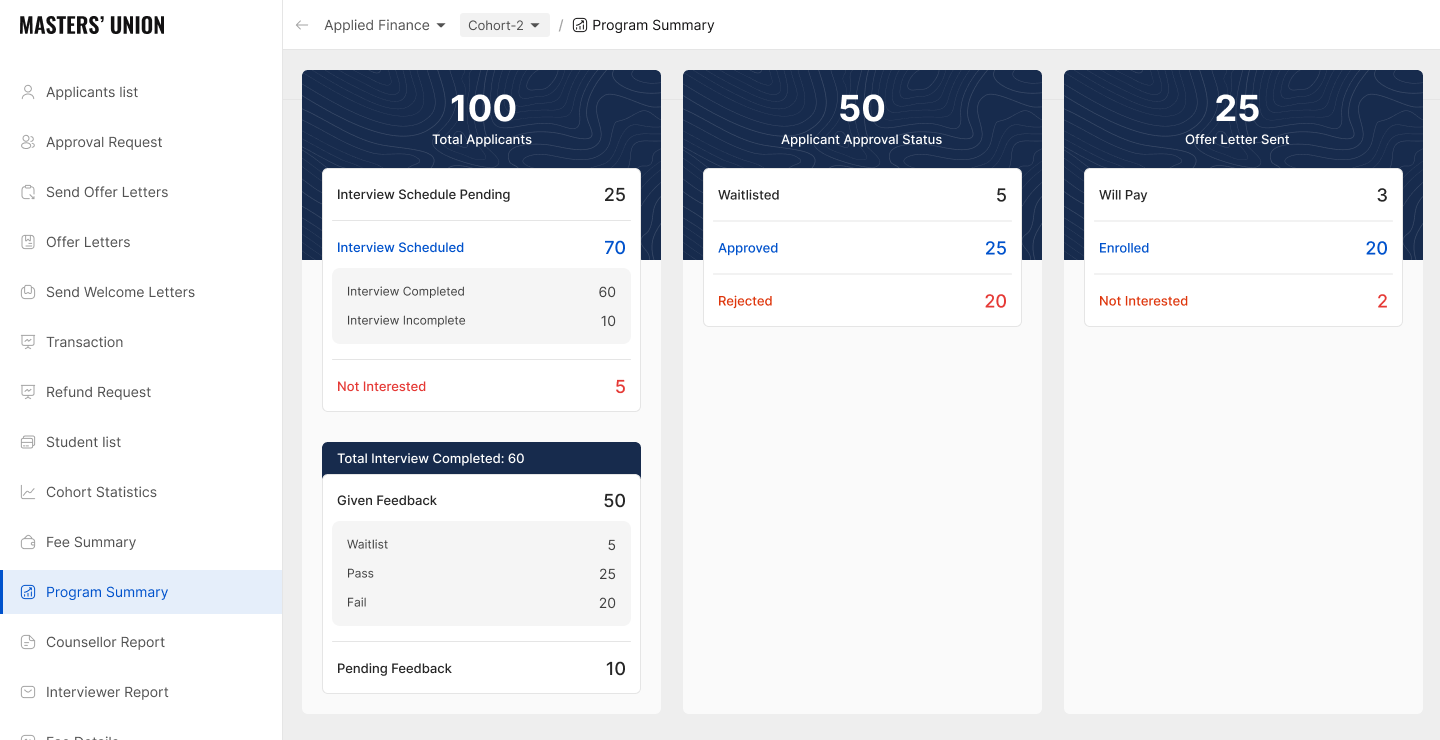

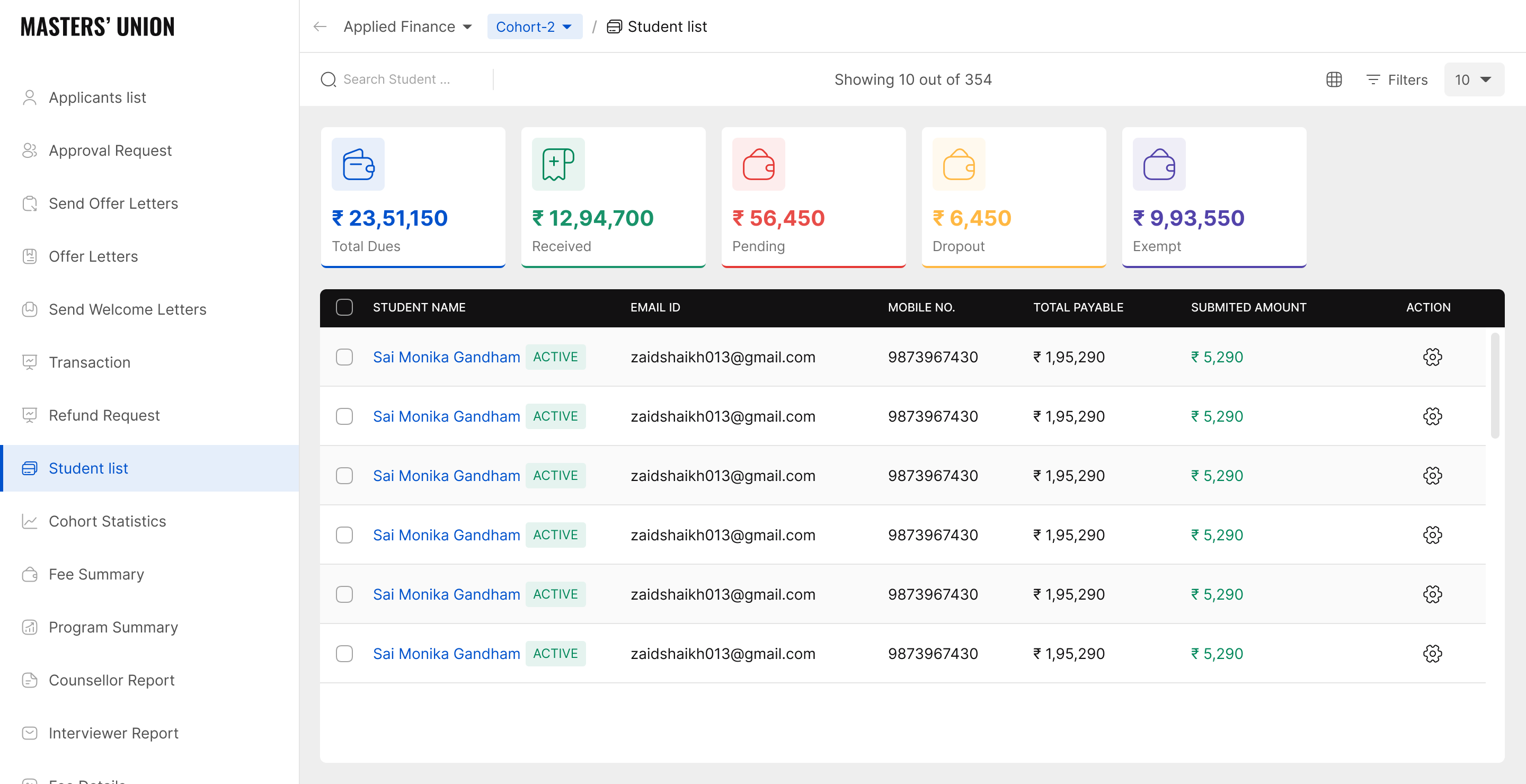

Finance Dashboard: Aggregate financial view. Three primary metric cards: Total Applications | Fee Collected | Offers Pending. Replaces the daily manual Pinelab export and Excel reconciliation, which previously took 45 minutes; the figures are now visible on login. (Note: Values shown are representative. Actual cohort data not shared due to institutional confidentiality.)

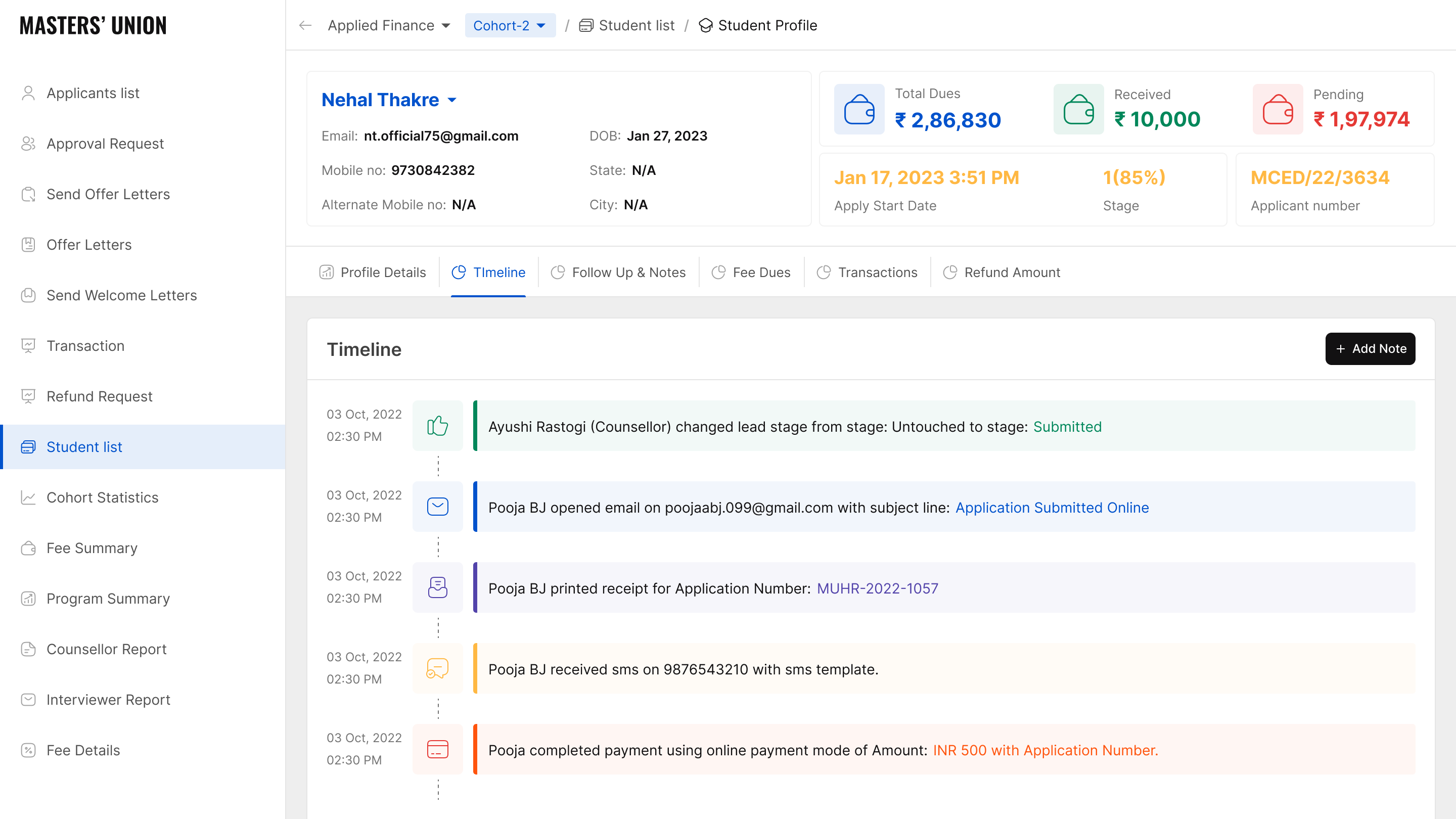

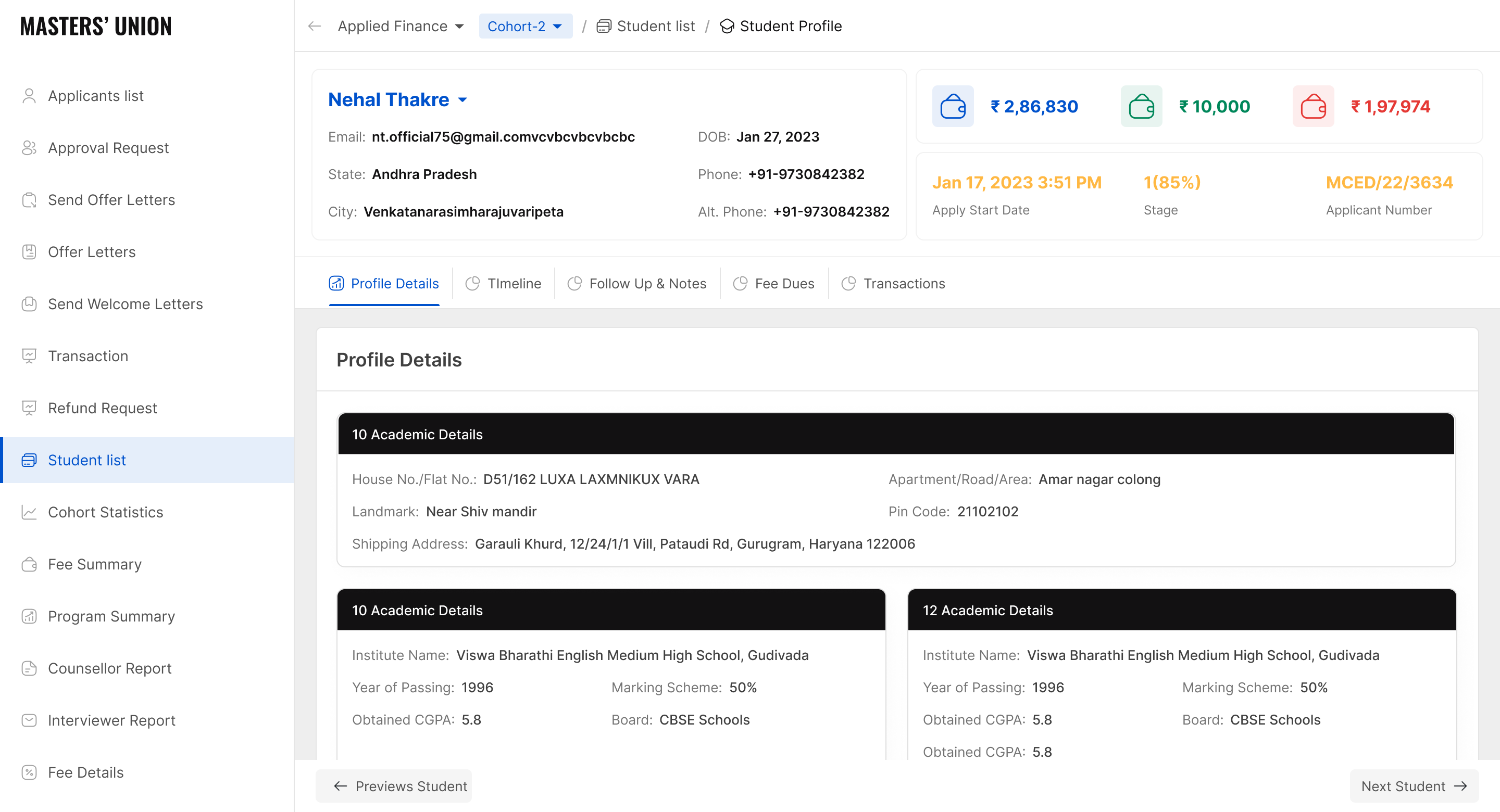

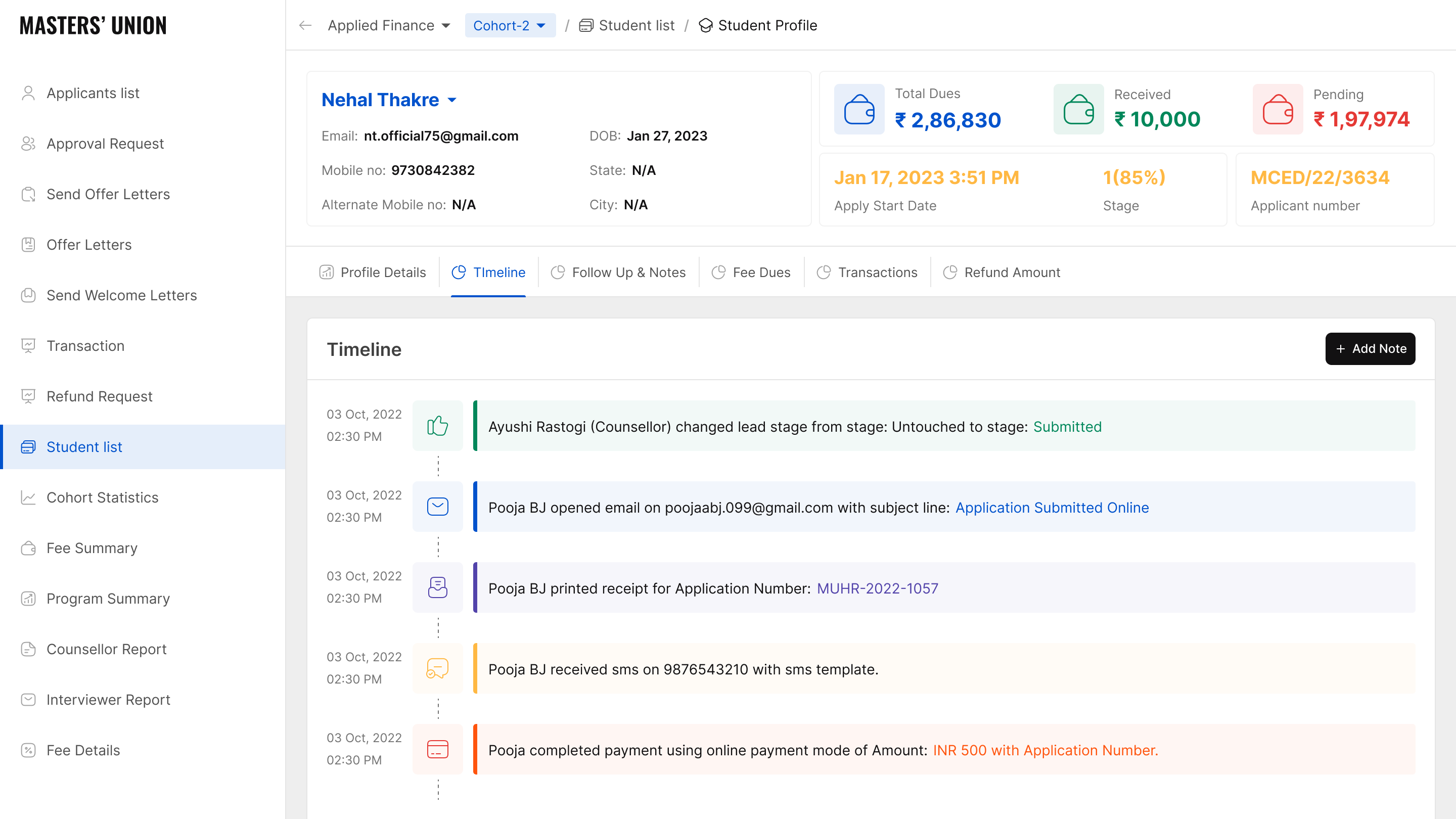

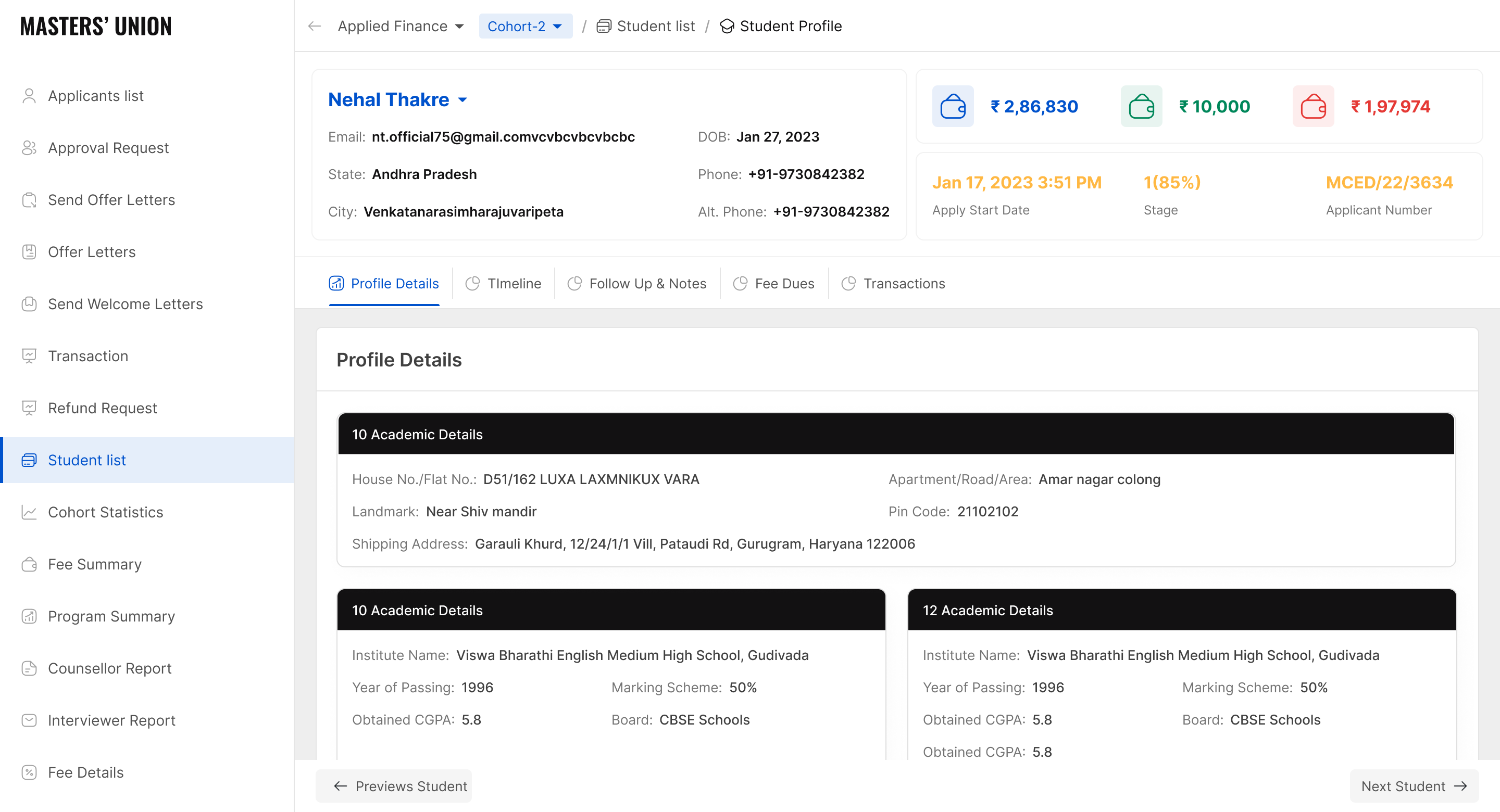

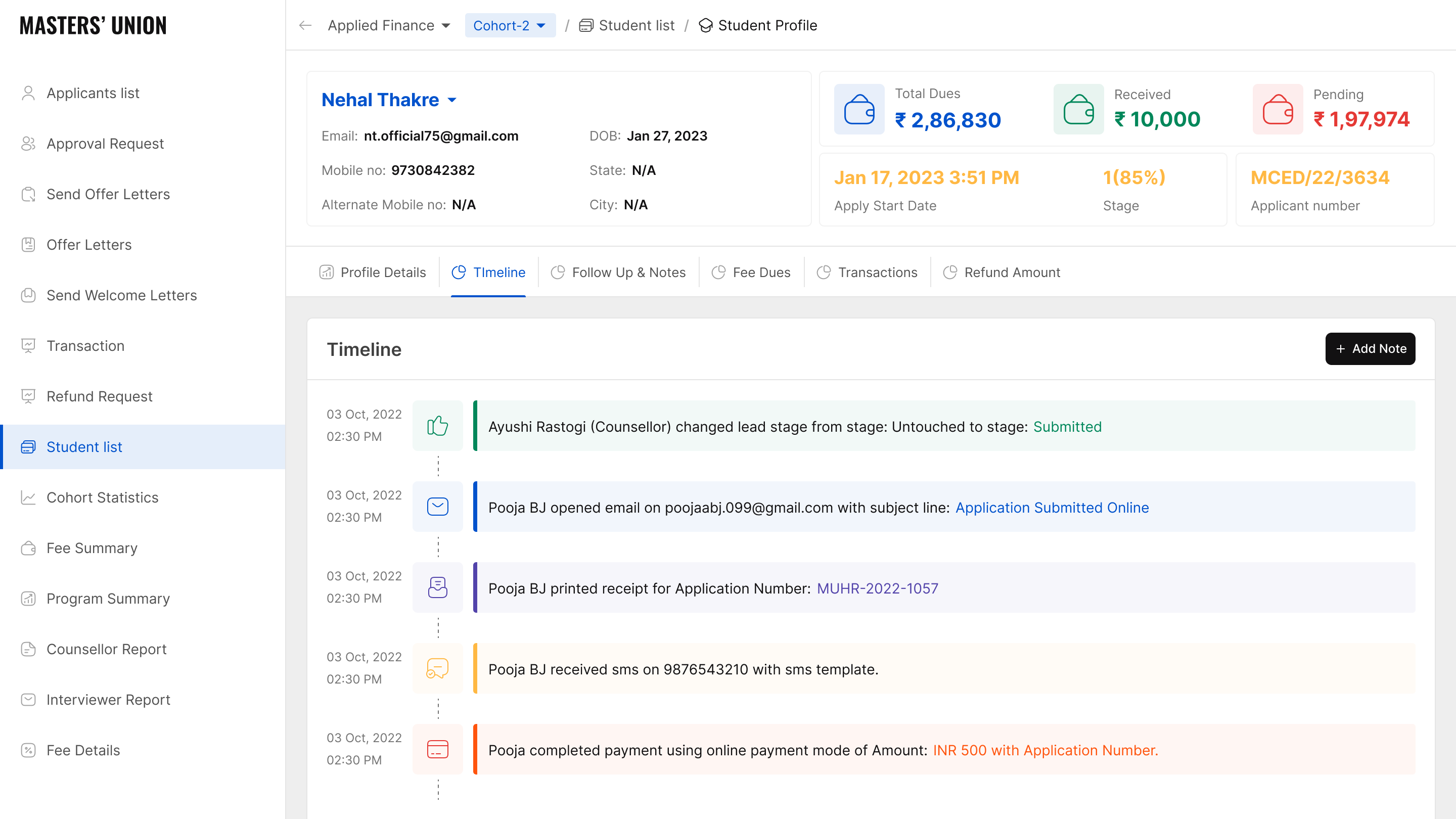

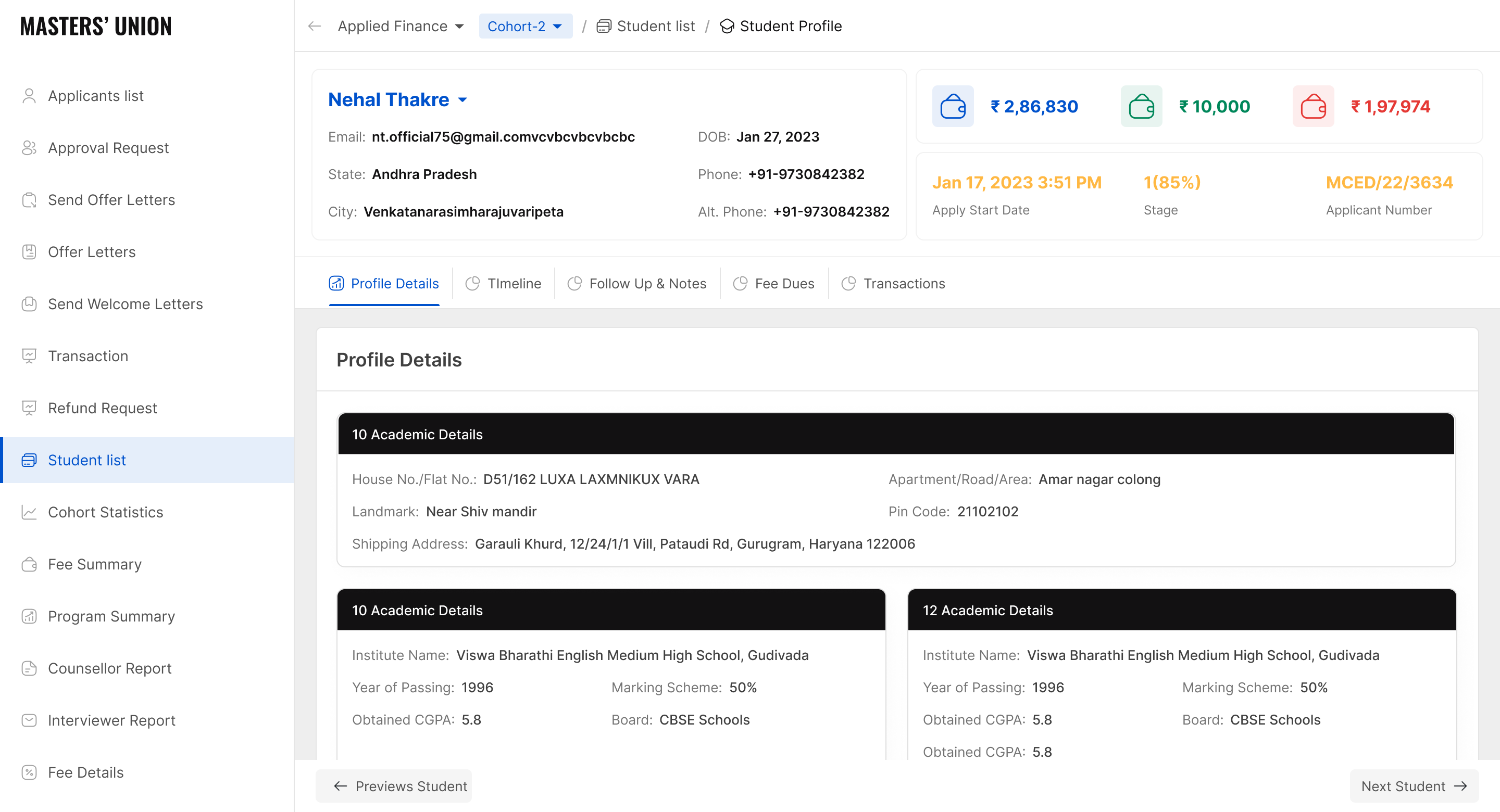

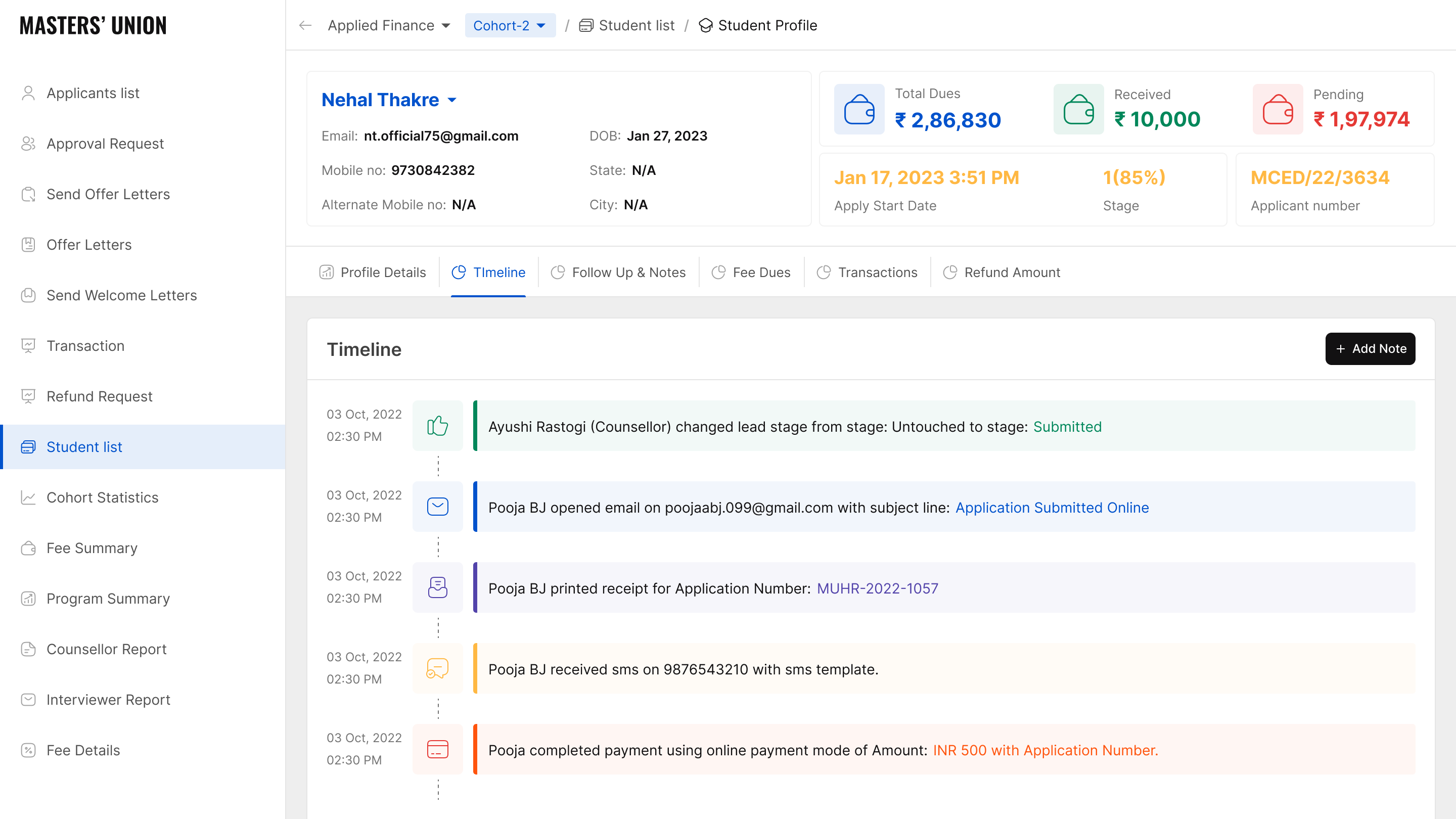

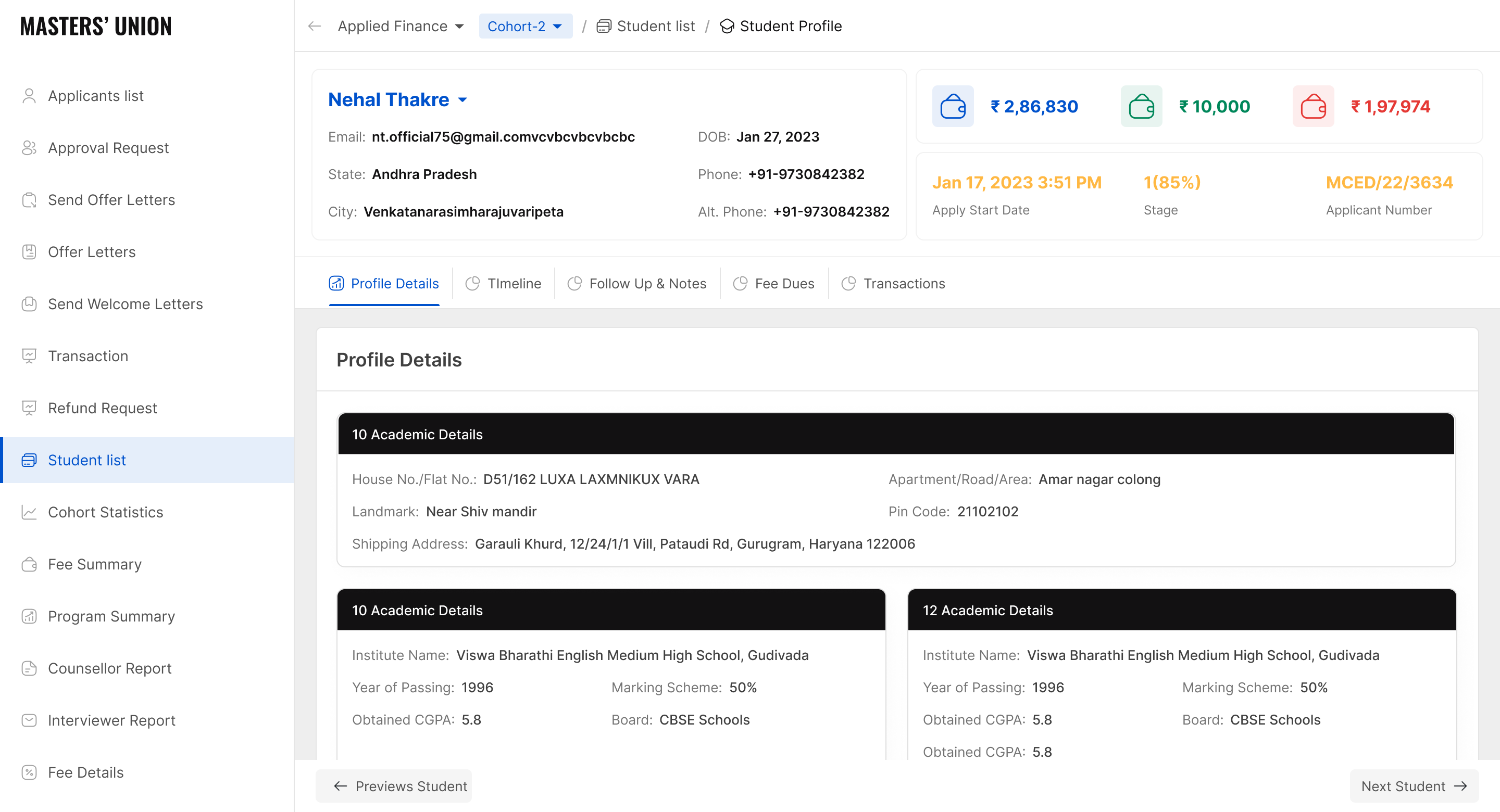

Student Detail: Status Timeline. Individual student view. All 8 stages appear with timestamps in a persistent header, visible on every tab, so admins can see what happened and when without reconstructing it from email threads. An in-app + email notification fires automatically on every stage change, replacing manual admin emails.

Finance Overview: Revenue Tracking. Total revenue, collected, pending, refunds and adjustments in one screen. Auto-synced from Pinelab, with no manual calculation. Replaced the 30-minute morning Excel compilation.

(Note: Values shown are representative. Actual financial data not shared due to institutional confidentiality.)

Student List: Full Payment Table. Full-cohort view with payment amounts, dates and status per student. Sortable, filterable and exportable in one click. This table replaced the manual NPF + Pinelab reconciliation entirely: a report that took 30–45 minutes now takes 10 seconds.

/ 4.3 Results & Impact

1,000+

Students Onboarded

Validated platform scalability across full cohort cycle

87%

Usability Task Success

Up from 63% in Round 1 - direct outcome of iteration

93%

Payment Completion

Up from 74% with the in-app payment flow

<1 hr

Admin Daily Overhead

Down from 3–5 hrs/day - all workflows unified

0

Platform Switching

For core user flows - students, admins, faculty

Licensed

Platform Scaled Externally

Internal tool became a licensable product

/ 4.4 HEART Framework - UX Quality Post-Launch

5 Dimensions · All Targets Achieved

H

Happiness

Post-session CSAT score

CSAT ≥ 4.2 / 5

✓ Positive - admin satisfaction notably high

E

Engagement

Daily active users per dashboard

70%+ DAU within 30 days

✓ All 3 dashboards actively adopted

A

Adoption

Time-to-first-task completion

< 5 min for all role types

✓ 1,000+ students onboarded

R

Retention

Weekly retention rate post-launch

85%+ weekly retention at 90 days

✓ No return to spreadsheets reported

T

Task Success

Task completion rate in usability testing

≥ 80% unassisted completion

✓ 87% avg. after Round 2 iteration

/ 4.5 Core Learnings

After organising data by role, cross-role synthesis revealed 5 patterns that cut across user groups; each appeared in at least 2 of the 3 roles.

01

Stakeholders misattribute problems - research locates them

Leadership came in believing the problem was students not completing payments. Research revealed it was a trust design failure: students were willing to pay, but the experience made them feel unsafe. The fix wasn't a reminder email - it was embedding the gateway. This project taught me to hold the question open longer before accepting a stakeholder's problem definition.

02

Observation reveals what interviews cannot

Admins told us in interviews they spent 1-2 hours on daily overhead. Contextual observation showed the real number was 3-5 hours. They weren't lying - they had normalized the effort and lost calibration on how long tasks actually took. Self-report and observation are different data sources. Both are necessary. This project made me default to observation-first for workflow-heavy user groups.

03

Card sorting isn't just an IA exercise - it's a conflict resolution tool

When I proposed role-based dashboards in early stakeholder reviews, the PM pushed back: "Can't we just have one view with filters?" The card sorting data ended that conversation. Participants didn't group the same cards the same way - the data made the case I couldn't make from intuition alone. Using research as a decision-making tool, not just a discovery tool, is the shift this project crystallized.

04

Test early enough to fail cheaply

Round 1 testing at mid-fi found 7 critical issues before a single line of code was written. The cost to fix those in Figma was hours. The cost to fix them post-development would have been weeks. The 63% -> 87% improvement wasn't just a UX win - it was a product risk mitigation. This is the argument I now use internally when engineering timelines push back against early testing.

05

The design system is a research artifact, not just a component library

The 30-component token-based system wasn't built for aesthetic consistency - it was built because 3 role-based dashboards serving different mental models still needed to feel like one product. Every token decision (colour, spacing, type) was constrained by the requirement that all 3 roles could trust the same visual language while having completely different information architectures. The system encoded the research.

/ My Role & Contributions

UX Research & Problem Mapping

Led all 11 user interviews, 2 contextual observation sessions, stakeholder interviews, competitive analysis, affinity mapping (200+ observations), JTBD framing

Information Architecture

Designed full role-based IA from card sorting results - validated by 11 participants across 3 groups, zero shared navigation

User Flows & Task Flows

Mapped 5 core task flows: fee payment, status check, session log, admin pipeline update, report export

UI Design - Web Dashboard

Designed all 30 screens across Student, Admin, Faculty dashboards - lo-fi sketch through hi-fi Figma

Design System

Built 30-component token-based system from scratch using Atomic Design - atoms → molecules → organisms

Usability Testing

Designed test scripts, moderated 2 rounds (5 participants each), synthesised findings, drove full iteration

Engineering Handoff

Delivered Figma Dev Mode specs to 8 frontend engineers - zero post-handoff design questions

/ 1.1 Project Background

Masters' Union is a tech-first business school that scaled rapidly - but its internal operations couldn't keep pace. Before Dinero, two disconnected systems managed the entire admissions and fee cycle:

NPF handled lead generation

Pinelab processed fee payments as an external gateway, accessed via a link in an email

Neither system talked to the other. Every handoff was manual. Every status update was a spreadsheet entry. Students clicking a payment link in an email - for a Rs.3,50,000+ transaction - were landing on an unbranded external page they had never seen before.

Dinero was built to unify this entire journey into one platform - grounded in a single core principle: you don't get to design the solution until you understand the problem.

/ The Real 8-Step Manual Journey (Pre-Dinero)

STEP 1

NPF application → Export to Excel

Eliminate Excel dependency, real-time pipeline view

STEP 2

Interview slot assigned → Confirmation email sent manually

In-app slot management + automatic confirmation

STEP 3

Interview conducted + exam scored

Structured faculty scoring inside the platform

STEP 4

Scholarship decision - Approved / Rejected

Decision workflow with automated student notification

STEP 5

Student clicks payment link in email

Embed Pinelab inside Dinero. Trust signals. Instant receipt.

↳ Lands on external Pinelab page

↳ No Masters’ Union branding · No in-app confirmation · 14–15 day manual processing

↳ ~25% abandonment - students feared the link was phishing

STEP 6

Offer letter sent by business team via email

Automated offer letter trigger inside platform

STEP 7

Student submits admission fee + tuition fee

In-platform fee split + loan request flow

STEP 7 (alternative)

If student cannot pay → Manual loan approval process begins

STEP 8

Payment confirmed → Enrollment updated manually across NPF + Excel + CRM

Auto-enrollment on payment confirmation. Single source of truth.

Total overhead: 3–5 hours of non-value-added admin work daily. Every step above was a design opportunity that Dinero addressed.

/ 1.2 Research Goals

G1:

Understand the end-to-end student journey across all 8 stages from NPF application to enrollment

G2:

Define what a unified platform must do to replace the fragmented NPF + Pinelab workflow

G3:

Identify the highest-severity pain points across students, admins and faculty

G4:

Validate design decisions through iterative usability testing before development

G5:

Measure UX quality post-launch using the HEART framework

/ 1.3 Research Questions

| Type | Research Question | Method |
|---|---|---|
| Generative | What does the real 8-step admission journey look like and where does it break? | Contextual inquiry + User interviews |
| Generative | Why do students distrust the Pinelab payment link sent via email? | User interviews (laddering) |
| Generative | Where do admins lose the most time across NPF, Excel and Pinelab? | Contextual observation |
| Generative | How do counselors track student progress and session outcomes today? | User interviews |
| Evaluative | Can users complete core flows in Dinero without help? | Moderated usability testing |
| Evaluative | Which payment flow variant performs better? | A/B testing |

/ 1.4 KPIs & Success Metrics

| Metric | Baseline | Target | Outcome |
|---|---|---|---|
| Admin daily manual effort | 3–5 hrs/day | < 1 hr/day | < 1 hr/day - all workflows unified |
| Payment abandonment rate | ~25% | < 10% | Below target - 93% payment completion in A/B test |
| Platforms in daily use | 2+ disconnected | 1 platform | Single platform shipped |
| Task success rate (usability) | N/A | ≥ 80% | 87% in Round 2 |
| Students onboarded | 0 | 2,000+ | 1,000+ onboarded |
| CSAT score | N/A | ≥ 4.2 / 5 | Positive across all roles |

/ 1.5 Methodology

Mixed Methods, Two Phases

We used a two-phase mixed-methods approach: Generative research to discover and frame the problem, then Evaluative research to validate and refine the solution. The rule was simple - understand before you design, test before you ship.

| # | Phase | Type | Method | Output |
|---|---|---|---|---|
| 01 | Discover | Qualitative | Stakeholder Alignment Interviews | Business goals, constraints, success definition |
| 02 | Discover | Qualitative | Contextual Inquiry | Real admin workflow map - NPF → Pinelab → Excel |
| 03 | Discover | Qualitative | Semi-structured User Interviews | Pain points, mental models, JTBD per role |
| 04 | Discover | Secondary | Competitive Analysis | Admission feature gap matrix |
| 05 | Define | Synthesis | Affinity Mapping (KJ Method) | 200+ observations → 5 insight clusters |
| 06 | Define | Synthesis | Jobs to Be Done (JTBD) | JTBD map per role × use case |
| 07 | Define | Synthesis | User Personas | 3 research-backed personas |
| 08 | Define | Synthesis | Experience / Journey Mapping | 8-step emotional arc - application to enrollment |
| 09 | Ideate | Quantitative | Card Sorting (Open) | User-defined IA - confirmed role-based dashboards |
| 10 | Test | Evaluative | Moderated Usability Testing | Round 1: 63% · Round 2: 87% task success |
| 11 | Test | Expert Review | Heuristic Evaluation | Nielsen-rated issue list - 4 violations resolved |
| 12 | Test | Quantitative | A/B Testing | 74% → 93% payment completion |
| 13 | Measure | Quantitative | HEART Framework | UX quality across 5 dimensions post-launch |

/ 1.6 Participants

5

Students

User interviews + Usability testing

3

Admins

User interviews + Contextual observation

3

Faculty Counselors

User interviews + Card sorting

/ Screening Criteria

→

Active users of NPF or Pinelab payment flow

→

Minimum 2 months tenure with the institution

→

Informed consent obtained for all sessions

/ 1.7 Usability Test Script

Moderator Introduction - Read Verbatim

"Hi, thank you for joining us. I'm Dhiraj - a designer on the Dinero team. We're testing the product today, not you. There are no wrong answers. Please think out loud - tell us what you're reading, what you expect, what surprises you. You can stop at any time. Any questions before we begin?"

Task 1 - Student: Pay Your Semester Fee

“You’ve just received your offer letter. Please pay your first semester fee using Dinero.”

- Success: Payment completed within 3 minutes, no moderator prompts

- Watch for: Trust hesitation, confusion on fee breakdown, missing confirmation

Task 2 - Admin: Update a Student Status

“A student just completed their interview. Please update their status to Shortlisted.”

- Success: Status updated within 90 seconds

- Watch for: Navigation path, search vs browse, dead ends

Task 3 - Faculty: Log a Session Note

“You just finished counseling a student. Please log your notes and set a follow-up reminder.”

- Success: Note saved + reminder set within 90 seconds

- Watch for: Where they look first, save confirmation, follow-up confusion

Post-Task Questions

- How difficult was that task? Rate 1 (very easy) to 5 (very hard).

- Was there anything that surprised you?

- What did you expect to happen after that action?

- If you could change one thing about that flow, what would it be?

Exit Interview Questions

- Overall, how does this compare to the tools you currently use?

- What is the single most important thing this platform does for you?

- What would stop you from using this daily?

/ 1.8 Study Schedule

2022–2023

Week 1–2 · Week 3–4 · Week 5–6 · Week 7–8 · Week 9–10 · Week 11–12 · Week 13–14 · Week 15–16 · Week 17–18

Stakeholder interviews · Research plan finalisation · Participant recruitment

User interviews: Students (5 sessions) · Admin (3 sessions)

User interviews: Faculty (3 sessions)

Card sorting

Competitive analysis

Affinity mapping · JTBD framework · Persona development · Journey mapping

Round 1 usability testing (5 participants)

Design iteration based on Round 1 findings

Round 2 usability testing · Heuristic evaluation

Wireframing · Lo-fi to mid-fi prototype (35 screens)

Hi-fi UI · Design system (30 components) · Figma Dev Mode handoff

A/B testing · HEART measurement · Post-launch analytics · Continuous iteration

/ 2.1 Stakeholder Interviews

Before talking to any end users, we ran alignment sessions with the Product Manager, the Admin Lead and Finance. The goal was not to gather requirements - it was to understand the business context, the constraints and how each stakeholder defined success.

60 min

Product Manager

Business goals, success definition, scope constraints

45 min

Admin Lead

Current NPF → CRM workflow, daily pain points, time estimates

45 min

Finance

Payment tracking, reconciliation process, error frequency

Key Tensions Surfaced

- Leadership believed the primary problem was "students not completing payments" - research revealed it was a trust design problem, not a student behaviour problem

- Admins underreported their daily manual effort in initial interviews (said "1-2 hours") - contextual observation revealed the real number was 3-5 hours

- No stakeholder had mapped the full 8-step journey end-to-end before this project - it had never been documented in one place

/ 2.2 Competitive Analysis

We mapped the tools the team was actually using - NPF for admissions tracking and Pinelab (alongside Flywire and Blackbaud as market alternatives) for payments - against what the team actually needed. The gap became the design brief.

Unique Value Proposition

What makes this company unique?

Company Advantages

What are the things that provide a leg up?

Company Disadvantages

Where might drawbacks exist?

NPF - Unique Value Proposition

- A lead tracking platform that helps institutions manage student applications and financial processes.

- Primarily a lead management system, helping institutions handle student inquiries, applications and enrolment.

- Focuses on CRM-style automation for student recruitment and communication.

NPF - Advantages

- Handles lead tracking and applicant approvals.

- May include basic financial tracking for student payments.

- Automates admissions and lead funnel management with CRM-style tracking.

- Provides real-time applicant status updates for universities.

- Integrates AI-driven analytics for student recruitment trends.

- Reduces manual follow-ups by automating communication workflows.

NPF - Disadvantages

- Limited admin-side financial tracking (refunds, reports and approvals).

- Lacks post-admission features, making it only useful for lead management.

- No fee tracking, refund approvals, or payment integration.

- Requires third-party integrations for financial & engagement tools.

Flywire - Unique Value Proposition

- A secure global tuition payment platform that simplifies university transactions, loan processing and refunds.

- A global education payment solution, allowing universities to accept international and domestic tuition payments securely.

- Focuses on fraud prevention and currency conversion for global students.

Flywire - Advantages

- Provides secure fee payments & refunds.

- Supports loan approvals and payment verification.

- Supports multi-currency transactions with localized payment methods.

- Offers secure, institution-approved fee collection with built-in fraud detection.

- Provides detailed financial reporting dashboards for universities.

- Ensures compliance with financial regulations in different regions.

Flywire - Disadvantages

- No lead tracking or interview process.

- No student engagement features (meetings, clubs, help desk, etc.).

- Not an internal tool; relies on external payment providers.

Apparent Differences

What are the differences between the Product?

NPF automates communication & recruitment, but Flywire automates tuition processing & compliance.

NPF focuses on pre-admission (lead tracking, CRM) while Flywire focuses on post-admission (fee payment, financial tracking).

Global Fee Payments → Flywire is the only platform with a strong global payment system.

Lead Tracking & Admissions → Only NPF focuses on lead management, while Flywire and Blackbaud do not.

Similar Capabilities

What do all the companies have in common?

Fee & Payment Tracking → All platforms (NPF, Flywire, Blackbaud) provide financial transaction management.

Basic Student Data Management → Most competitors offer some form of student tracking (admissions, fee details, or loan approvals).

Secure Data Handling → Both platforms follow data security & compliance regulations for handling student information.

Administrative Support → Platforms allow admins to monitor payments, refunds and approvals.

CRM & Communication Automation → NPF and Flywire both help institutions communicate with students.

Custom API Integrations → Both offer API-based solutions, allowing institutions to connect their existing tools.

Key Learnings

What can we learn from this process?

High Dependence on External Integrations → Both competitors require third-party add-ons to handle a full student lifecycle.

There is no all-in-one solution → Competitors specialize in lead tracking, finance, or student engagement, but not all three together.

Most platforms rely on third-party tools → Institutions often have to use multiple services to manage different aspects (NPF for leads, Flywire for payments, Blackbaud for academics).

Lack of an End-to-End Student Management Solution → No company combines admissions, financial tracking and student engagement in one tool.

Finance vs. Admissions Gap → Universities must manage payments and student services separately, leading to inefficiencies.

Admin workflows are still highly manual → Even Blackbaud (which offers reporting) lacks automation for student feedback, counselor interactions and engagement tracking.

Opportunities

Where can we progress or create value?

Centralized Platform → Develop a single internal tool that integrates lead tracking, finance, interview tracking and student engagement.

Automation & Smart Workflows → Streamline admin operations by reducing manual approval processes and enabling real-time tracking of applications, finances and reports.

Holistic Student Experience → Provide a student-friendly interface where students can track interviews, fees, counselor meetings and participation in clubs/events, all in one place.

All-in-One University Lifecycle Management → Instead of just lead tracking (NPF) or payments (Flywire), an internal tool can streamline everything from admissions to student engagement.

Integrated Workflow Optimization → Reduce manual approvals and fragmented processes by connecting admissions, finance and engagement into one structured platform.

Automated Student-Centric Platform → Unlike Flywire, which only supports payments, a platform can include counselor feedback, interview tracking and real-time student interaction features.

/ 2.3 Card Sorting

Information Architecture Discovery

Before sketching a single screen, we ran open card sorting with all 11 participants to let users define the information architecture. We weren’t going to impose a structure - we were going to discover the one that already existed in users’ minds.

/ 2.4 Affinity Mapping

KJ Method + Jobs to Be Done

After 11 interviews and 2 contextual observation sessions, we had 200+ individual data points. Every observation, quote and pain point went onto its own sticky note. Then we grouped. The clusters don't come from analysis - they emerge from the grouping. That's the KJ method.

| Cluster | Representative Quote | Notes |
|---|---|---|
| Fragmentation Fatigue | “I use 4 tabs before 9am - NPF, Pinelab, Excel, email” | 48 notes |
| Visibility Anxiety | “I check email every hour just to know where I stand” | 41 notes |
| Payment Trust Deficit | “The link looks fake. What if it’s phishing?” | 26 notes |
| Admin Info Overload | “I’m firefighting every morning before I do any real work” | 41 notes |
| Counselor Invisibility | “My session notes live in my personal email drafts” | 32 notes |

Jobs to Be Done

JTBD moves the conversation away from features and toward motivation. Instead of “what do users want?”, you ask: what job are they hiring this product to do?

| User | When I… | I want to… | So I can… |
|---|---|---|---|
| Student | Check my admission status | See it instantly - no emails | Stop anxiously checking my inbox |
| Student | Pay my semester fee | Complete it inside one trusted platform | Have proof and peace of mind |
| Admin | Start my workday | See every pending action in one view | Stop opening NPF, Pinelab and Excel |
| Admin | Update a student status | Do it in one click with confirmation | Move to the next task immediately |
| Faculty | Finish a counseling session | Log notes right there in the platform | Not lose context or forget follow-ups |

/ 2.5 User Personas - 3 Research-Backed Roles

These personas were built directly from interview data - not from assumptions. Every goal, pain point and JTBD below was mentioned by at least 2 of the participants in that role group.

Aditya Verma

MBA Student - Age: 22 | Location: Pune, India

Aditya is a driven MBA student focused on securing a great job post-graduation, but he finds the admission and financial process confusing. He struggles to track interview progress, fee payments and counselor feedback - often missing important updates. He prefers digital solutions but gets frustrated when he has to check multiple platforms. He wants one place where everything just works.

Goals

→

Track interview progress and feedback in real time - no more waiting for email updates

→

Pay fees quickly without worrying about third-party links or whether payment went through

→

Access counselor reports and meeting history in one place

→

Join clubs and events without long approval processes or scattered notifications

Pain Points

×

Confusing interview process - unclear next steps, no visibility into stage

×

Third-party payment issues - external link felt untrustworthy, no receipt

×

Scattered feedback from counselors - difficult to access reports from previous sessions

HEARS

→

You need to pay your fees via this third-party link.

→

Your interview status will be updated soon.

→

You missed the deadline for your counselor feedback session.

SEES

Multiple emails from admissions with unclear instructions. Screenshots and spreadsheets to track interview progress. Other students discussing the process in WhatsApp groups.

SAYS & DOES

→

Where do I check my interview status?

→

Did my payment go through? How do I get a receipt?

→

Asks peers for updates. Logs into multiple platforms.

THINKS & FEELS

→

I wish there was one place to track everything.

→

Why do I need to use third-party payment links?

→

Am I missing important deadlines?

Priya Sharma

Admissions & Finance Administrator - Age: 42 | Location: New Delhi, India

Priya is responsible for managing student admissions, financial records and interview tracking. She works with multiple tools daily to approve applications, verify transactions and track refund requests. The lack of a centralized system makes her job exhausting - she handles 2-4 data discrepancy errors daily across NPF, Pinelab, Excel and email.

Goals

→ Streamline the admission approval process to reduce manual work
→ Get real-time insights into student finances, transactions and interview status
→ Automate fee processing and refunds to avoid delays and student complaints
→ Ensure data accuracy in reports without switching between multiple platforms

Pain Points

× Too many manual approvals - time-consuming and error-prone
× Scattered data across NPF, Pinelab, Excel and email - hard to get a complete student picture
× Delayed fee verification and refund processing - leading to daily student complaints
× No single dashboard for managing student interactions across all touchpoints

HEARS

→ We need to approve 50+ applications today.
→ How many students have completed fee payments?
→ Students are complaining about missing payment confirmations.

SEES

Multiple spreadsheets tracking student fee payments. Unorganized email requests for refund approvals. Confusion about fee statuses across different platforms.

SAYS & DOES

→ I need to check multiple systems to track student applications.
→ Tracks counselor interactions separately from financial records. Compiles manual reports each morning.

THINKS & FEELS

→ There must be a better way to manage all of this.
→ I spend 4 hours a day just reconciling data.
→ Why can't I see a student's full journey in one place?

Dr. Rajeev Menon

Faculty & Student Counselor - Age: 40 | Location: Bangalore, India

Dr. Rajeev Menon has been mentoring students for over a decade, helping them navigate academic and career paths. Without a structured system, his feedback gets lost in emails. He struggles to track student engagement across counseling sessions and has no way to flag at-risk students early.

Goals

→ Provide timely and structured feedback to students after interviews and sessions
→ Have a centralized system to track student history, progress and counselor interactions
→ Improve student engagement in career mentorship and academic guidance
→ Reduce the need for manual note-keeping and fragmented communication

Pain Points

× No centralized student tracking system - feedback often gets lost in email drafts
× Manual documentation is inefficient and takes too much time between sessions
× Hard to ensure students are following up on counseling sessions and interview feedback

HEARS

→ Can you check in on this student? I think they're struggling.
→ Your session notes weren't attached to the student record.
→ A student from last month missed their follow-up.

SEES

No structured view of students he has counseled. Emails as the only record system. Students re-explaining context he should already know.

SAYS & DOES

→ My session notes live in my professional email drafts.
→ I have to reconstruct context from memory each time.
→ Manually tracks follow-ups in a personal notebook.

THINKS & FEELS

→ I'm losing context between sessions - I can't be an effective mentor this way.
→ I want to flag at-risk students, but there's no channel.
→ This should all be in one place.

/ 2.6 Experience & Journey Mapping

We mapped the emotional arc of the full 8-step journey for each role - not just the tasks, but how users felt at each stage. This is where the design priorities became undeniable.

/ 2.7 Wireframing - Lo-Fi

With card sorting results defining the IA and research findings defining the priorities, we moved into solution space. The rule: generate first, judge later.

/ 2.8 Usability Testing - Two Rounds

Two rounds of moderated usability testing: Round 1 on a mid-fidelity prototype before any iteration, Round 2 on a hi-fidelity prototype after the design changes. Testing early meant failing cheaply. Testing again proved the iteration worked.

| Parameter | Round 1 (Mid-Fi) | Round 2 (Hi-Fi) |
| --- | --- | --- |
| Participants | 5 (mixed roles) | 5 (matched to Round 1) |
| Prototype fidelity | Mid-fi Figma clickthrough | Hi-fi Figma - real content |
| Tasks | Fee payment · Status check · Admin update | All Round 1 tasks + Session log + Report export |
| Duration | 45–60 min | 45 min |
| Format | Moderated in-person | Moderated remote (video call) |
| Task success rate | 63% | 87% |
| Issues found | 7 critical · 4 high · 3 medium | 1 critical · 2 high · 5 medium (resolved) |

Task Difficulty Scores (1 = easy, 5 = hard)

| Task | Round 1 Difficulty | Round 2 Difficulty | Key Change Made |
| --- | --- | --- | --- |
| Student: Pay semester fee | 4.2 / 5 (hard) | 1.9 / 5 (easy) | Pinelab embedded inside Dinero + itemised fee breakdown + trust signals |
| Admin: Update student status | 2.4 / 5 | 1.6 / 5 | Simplified dropdown + inline confirmation modal |
| Faculty: Log session note | 4.1 / 5 (hard) | 2.1 / 5 | Dedicated Log Session CTA + autosave + inline follow-up reminder |
| Report export (added in R2) | N/A | 1.8 / 5 (easy) | One-click export with 3 pre-built templates |

/ 2.9 User Interviews - Laddering Technique

11 semi-structured interviews across 3 user roles. Sessions were 45–60 minutes each. We used laddering: start with behaviour, move to consequence, then feeling, then the underlying need. This is where the real insight lives.

Laddering Example - How One Question Unlocked the Payment Trust Insight

| Level | Exchange |
| --- | --- |
| L1 Behaviour - what they do | “How do you pay your semester fee?” → “I get an email with a link and just click it.” |
| L2 Consequence - what happens next | “What happens after you click it?” → “It goes to a page I’ve never seen before. Looks sketchy.” |
| L3 Emotion - how they feel | “How does that make you feel?” → “Anxious. I’m paying 3.5 lakhs - what if it’s phishing?” |
| L4 Value - what they actually need | “What would make you feel confident?” → “If it was inside the college portal. Official-looking.” |

Insight: Payment trust is a design problem, not a behaviour problem. → Design decision: Embed Pinelab inside Dinero so students never leave the platform.

Student Interview Areas

Fee Payment: How did you pay your semester fee? Did you feel confident the payment went through? Why or why not?

Admission Process: Walk me through your application journey. How did you know what stage your application was at?

Post-Admission: After admission, how did you track your progress? How easy was it to connect with your counselor?

Admin Interview Areas

Managing Admissions: Take me through a typical morning. How do you track who has completed interviews? Where do you lose the most time?

Financial Management: How do you track fee payments? What happens when a student says they paid but it hasn't shown up?

Data & Reports: What information do you need at a glance first thing in the morning?

Faculty / Counselor Interview Areas

Student Progress: How do you manage your student meeting schedule? Where do you log session notes?

At-Risk Flagging: What would change if you had real-time visibility into each student's journey? How do you flag a student you're worried about?

/ 2.10 Data Collected

11 interview recordings (45–60 min each)

2 contextual observation sessions of the real admin workflow

3 card sorting result sets - 11 participants across 3 role groups

NPF vs Flywire competitive gap analysis

Usability test recordings - Round 1 (5 sessions) + Round 2 (5 sessions)

200+ individual sticky note observations from all interviews and sessions

Research Limitations

Naming limitations is not a weakness - it tells the reader exactly how far to generalise the findings.

| Limitation | Impact | Mitigation |
| --- | --- | --- |
| Small qualitative sample (n=11) | Findings are directional, not statistically significant | Triangulated across at least 2 independent methods before elevating to a design decision |
| Recruitment from a single institution | Mental models may differ at other EdTech companies | Findings validated through usability testing - not assumed to transfer |
| Admin participants self-selected (volunteered) | May skew toward more engaged, tech-comfortable admins | Contextual observation sessions compensated for self-report bias |

/ 3.1 Identify Patterns

Synthesis is where raw data becomes design direction. With 200+ observations, 11 interviews, 2 observation sessions and card sorting results, the risk was finding patterns that weren't really there - or missing the ones that were. This is how we separated signal from noise.

Step 1 - Role-Based Sorting

Every sticky note was first tagged by role (Student / Admin / Faculty) and by data type (Observation / Quote / Behaviour / Pain Point). This prevented cross-role noise from masking role-specific insights - and revealed which problems were universal vs. role-specific.

Step 2 - Open Clustering (KJ Method)

Notes were clustered by affinity - no predefined categories. Clusters that appeared independently across multiple sessions were flagged as candidate patterns. Clusters with notes from only one participant were kept as individual observations, not elevated to patterns.

| Cluster Name | Note Count | Roles Represented | Elevated to Pattern? | Reason |
| --- | --- | --- | --- | --- |
| Fragmentation Fatigue | 48 | Admin, Faculty | Yes | Appeared in interviews + contextual observation (2 methods) |
| Visibility Anxiety | 41 | Student, Faculty | Yes | Appeared in interviews + journey map + card sorting (3 methods) |
| Payment Trust Deficit | 26 | Student | Yes | Same quote structure from 3 of 5 students independently |
| Admin Info Overload | 41 | Admin | Yes | Corroborated by contextual observation (4 hrs vs 1-2 hrs self-reported) |
| Counselor Invisibility | 32 | Faculty | Yes | Consistent across all 3 faculty participants |
| Leadership Reporting Gap | 11 | Admin | No | Stakeholder need, not user pain. Shipped as low-priority in v1.5. |

Step 3 - Triangulation Gate

Before a pattern became a design decision, it had to pass through a triangulation gate: corroboration by at least 2 independent methods. This prevented a single vivid interview quote from driving a design decision.

| Pattern | Evidence 1 | Evidence 2 | Evidence 3 |
| --- | --- | --- | --- |
| Visibility Anxiety | User interviews (41 notes) | Journey mapping (peak frustration at Stage 1) | Card sorting (students separated Journey from Money) |
| Payment Trust Deficit | User interviews (phishing raised unprompted by 3 of 5 students) | Laddering (L4: want official portal, not external link) | Usability test R1 (Task 1 hardest: 4.2/5 difficulty) |
| Admin Fragmentation | User interviews (1-2 hrs self-reported) | Contextual observation (real: 3-5 hrs) | Usability test R1 (admin status update: 2.4/5 difficulty) |
| Counselor Invisibility | User interviews (32 notes, all 3 faculty) | Usability test R1 (session log: 4.1/5, second-hardest task) | Card sorting (faculty grouped by student, not by task) |

/ 3.2 Key Findings

5 Findings, Severity-Rated

Critical and High findings drove v1.0 priorities. Medium findings shipped in v1.5.

Finding

Design Decisions

F1